The Ultimate Guide to Qualitative Research - Part 1: The Basics

What is a case study?

Applications for case study research, what is a good case study, process of case study design, benefits and limitations of case studies.

Case studies

Case studies are essential to qualitative research, offering a lens through which researchers can investigate complex phenomena within their real-life contexts. This chapter explores the concept, purpose, applications, examples, and types of case studies and provides guidance on how to conduct case study research effectively.

Whereas quantitative methods look at phenomena at scale, case study research looks at a concept or phenomenon in considerable detail. While analyzing a single case can help understand one perspective regarding the object of research inquiry, analyzing multiple cases can help obtain a more holistic sense of the topic or issue. Let's provide a basic definition of a case study, then explore its characteristics and role in the qualitative research process.

Definition of a case study

A case study in qualitative research is a strategy of inquiry that involves an in-depth investigation of a phenomenon within its real-world context. It gives researchers the opportunity to understand intricate details that might not be as apparent or accessible through other methods of research. The specific case or cases being studied can be a single person, group, or organization; what constitutes a relevant case worth studying depends on the researcher and their research question.

Among qualitative research methods, a case study relies on multiple sources of evidence, such as documents, artifacts, interviews, or observations, to present a complete and nuanced understanding of the phenomenon under investigation. The objective is to illuminate the readers' understanding of the phenomenon beyond its abstract statistical or theoretical explanations.

Characteristics of case studies

Case studies typically possess a number of distinct characteristics that set them apart from other research methods. These characteristics include a focus on holistic description and explanation, flexibility in the design and data collection methods, reliance on multiple sources of evidence, and emphasis on the context in which the phenomenon occurs.

Furthermore, case studies can often involve a longitudinal examination of the case, meaning they study the case over a period of time. These characteristics allow case studies to yield comprehensive, in-depth, and richly contextualized insights about the phenomenon of interest.

The role of case studies in research

Case studies hold a unique position in the broader landscape of research methods aimed at theory development. They are instrumental when the primary research interest is to gain an intensive, detailed understanding of a phenomenon in its real-life context.

In addition, case studies can serve different purposes within research: they can be exploratory, descriptive, or explanatory, depending on the research question and objectives. This flexibility and depth make case studies a valuable tool in the toolkit of qualitative researchers.

Remember, a well-conducted case study can offer a rich, insightful contribution to both academic and practical knowledge through theory development or theory verification, thus enhancing our understanding of complex phenomena in their real-world contexts.

What is the purpose of a case study?

Case study research aims for a more comprehensive understanding of phenomena, requiring various research methods to gather information for qualitative analysis. Ultimately, a case study can allow the researcher to gain insight into a particular object of inquiry and develop a theoretical framework relevant to the research inquiry.

Why use case studies in qualitative research?

Using case studies as a research strategy depends mainly on the nature of the research question and the researcher's access to the data.

Case study research provides a level of detail and contextual richness that other research methods might not offer. Case studies are beneficial when there's a need to understand complex social phenomena within their natural contexts.

The explanatory, exploratory, and descriptive roles of case studies

Case studies can take on various roles depending on the research objectives. They can be exploratory when the research aims to discover new phenomena or define new research questions; they are descriptive when the objective is to depict a phenomenon within its context in a detailed manner; and they can be explanatory if the goal is to understand specific relationships within the studied context. Thus, the versatility of case studies allows researchers to approach their topic from different angles, offering multiple ways to uncover and interpret the data.

The impact of case studies on knowledge development

Case studies play a significant role in knowledge development across various disciplines. Analysis of cases provides an avenue for researchers to explore phenomena within their context based on the collected data.

This can result in the production of rich, practical insights that can be instrumental in both theory-building and practice. Case studies allow researchers to delve into the intricacies and complexities of real-life situations, uncovering insights that might otherwise remain hidden.

Types of case studies

In qualitative research, a case study is not a one-size-fits-all approach. Depending on the nature of the research question and the specific objectives of the study, researchers might choose to use different types of case studies. These types differ in their focus, methodology, and the level of detail they provide about the phenomenon under investigation.

Understanding these types is crucial for selecting the most appropriate approach for your research project and effectively achieving your research goals. Let's briefly look at the main types of case studies.

Exploratory case studies

Exploratory case studies are typically conducted to develop a theory or framework around an understudied phenomenon. They can also serve as a precursor to a larger-scale research project. Exploratory case studies are useful when a researcher wants to identify the key issues or questions which can spur more extensive study or be used to develop propositions for further research. These case studies are characterized by flexibility, allowing researchers to explore various aspects of a phenomenon as they emerge, which can also form the foundation for subsequent studies.

Descriptive case studies

Descriptive case studies aim to provide a complete and accurate representation of a phenomenon or event within its context. These case studies are often based on an established theoretical framework, which guides how data is collected and analyzed. The researcher is concerned with describing the phenomenon in detail, as it occurs naturally, without trying to influence or manipulate it.

Explanatory case studies

Explanatory case studies are focused on explanation: they seek to clarify how or why certain phenomena occur. Often used in complex, real-life situations, they can be particularly valuable in clarifying causal relationships among concepts and understanding the interplay between different factors within a specific context.

Intrinsic, instrumental, and collective case studies

These three categories of case studies focus on the nature and purpose of the study. An intrinsic case study is conducted when a researcher has an inherent interest in the case itself. Instrumental case studies are employed when the case is used to provide insight into a particular issue or phenomenon. A collective case study, on the other hand, involves studying multiple cases simultaneously to investigate a general phenomenon.

Each type of case study serves a different purpose and has its own strengths and challenges. The selection of the type should be guided by the research question and objectives, as well as the context and constraints of the research.

The flexibility, depth, and contextual richness offered by case studies make this approach an excellent research method for various fields of study. They enable researchers to investigate real-world phenomena within their specific contexts, capturing nuances that other research methods might miss. Across numerous fields, case studies provide valuable insights into complex issues.

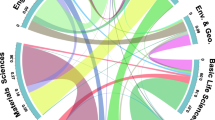

Critical information systems research

Case studies provide a detailed understanding of the role and impact of information systems in different contexts. They offer a platform to explore how information systems are designed, implemented, and used and how they interact with various social, economic, and political factors. Case studies in this field often focus on examining the intricate relationship between technology, organizational processes, and user behavior, helping to uncover insights that can inform better system design and implementation.

Health research

Health research is another field where case studies are highly valuable. They offer a way to explore patient experiences, healthcare delivery processes, and the impact of various interventions in a real-world context.

Case studies can provide a deep understanding of a patient's journey, giving insights into the intricacies of disease progression, treatment effects, and the psychosocial aspects of health and illness.

Asthma research studies

Specifically within medical research, studies on asthma often employ case studies to explore the individual and environmental factors that influence asthma development, management, and outcomes. A case study can provide rich, detailed data about individual patients' experiences, from the triggers and symptoms they experience to the effectiveness of various management strategies. This can be crucial for developing patient-centered asthma care approaches.

Other fields

Apart from the fields mentioned, case studies are also extensively used in business and management research, education research, and political sciences, among many others. They provide an opportunity to delve into the intricacies of real-world situations, allowing for a comprehensive understanding of various phenomena.

Case studies, with their depth and contextual focus, offer unique insights across these varied fields. They allow researchers to illuminate the complexities of real-life situations, contributing to both theory and practice.

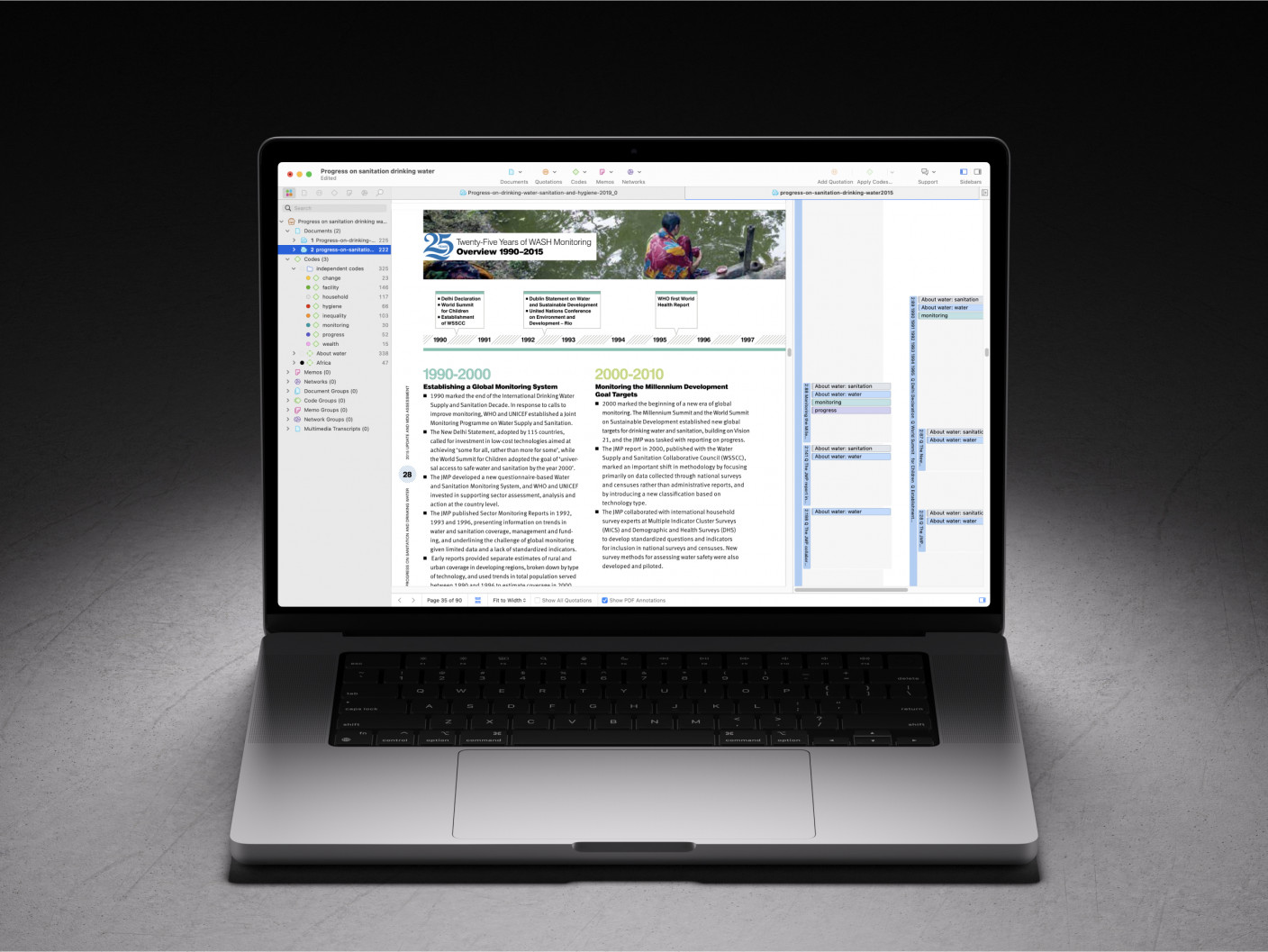

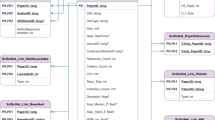

Understanding the key elements of case study design is crucial for conducting rigorous and impactful case study research. A well-structured design guides the researcher through the process, ensuring that the study is methodologically sound and its findings are reliable and valid. The main elements of case study design include the research question, propositions, units of analysis, and the logic linking the data to the propositions.

Research question

The research question is the foundation of any research study. A good research question guides the direction of the study and informs the selection of the case, the methods of collecting data, and the analysis techniques. A well-formulated research question in case study research is typically clear, focused, and complex enough to merit further detailed examination of the relevant case(s).

Propositions

Propositions, though not necessary in every case study, provide a direction by stating what we might expect to find in the data collected. They guide how data is collected and analyzed by helping researchers focus on specific aspects of the case. They are particularly important in explanatory case studies, which seek to understand the relationships among concepts within the studied phenomenon.

Units of analysis

The unit of analysis refers to the case, or the main entity or entities that are being analyzed in the study. In case study research, the unit of analysis can be an individual, a group, an organization, a decision, an event, or even a time period. It's crucial to clearly define the unit of analysis, as it shapes the qualitative data analysis process by allowing the researcher to analyze a particular case and synthesize analysis across multiple case studies to draw conclusions.

Argumentation

This refers to the inferential model that allows researchers to draw conclusions from the data. The researcher needs to ensure that there is a clear link between the data, the propositions (if any), and the conclusions drawn. This argumentation is what enables the researcher to make valid and credible inferences about the phenomenon under study.

Understanding and carefully considering these elements in the design phase of a case study can significantly enhance the quality of the research. It can help ensure that the study is methodologically sound and its findings contribute meaningful insights about the case.

Conducting a case study involves several steps, from defining the research question and selecting the case to collecting and analyzing data. This section outlines these key stages, providing a practical guide on how to conduct case study research.

Defining the research question

The first step in case study research is defining a clear, focused research question. This question should guide the entire research process, from case selection to analysis. It's crucial to ensure that the research question is suitable for a case study approach. Typically, such questions are exploratory or descriptive in nature and focus on understanding a phenomenon within its real-life context.

Selecting and defining the case

The selection of the case should be based on the research question and the objectives of the study. It involves choosing a unique example or a set of examples that provide rich, in-depth data about the phenomenon under investigation. After selecting the case, it's crucial to define it clearly, setting the boundaries of the case, including the time period and the specific context.

Previous research can help guide the case study design. A case examined in earlier case study research can serve as a model for defining cases in a new research inquiry, and recently published examples can show how to select and define cases effectively.

Developing a detailed case study protocol

A case study protocol outlines the procedures and general rules to be followed during the case study. This includes the data collection methods to be used, the sources of data, and the procedures for analysis. Having a detailed case study protocol ensures consistency and reliability in the study.

The protocol should also consider how to work with the people involved in the research context so that the research team is granted access to collect data. As mentioned in previous sections of this guide, establishing rapport is an essential component of qualitative research as it shapes the overall potential for collecting and analyzing data.
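As a rough illustration of what a protocol covers, the items named above (data collection methods, sources of data, analysis procedures, and access arrangements) can be kept in one structured record and checked for gaps before fieldwork begins. The sketch below is only one possible layout; the class name `CaseStudyProtocol`, its field names, and the example values are hypothetical and are not prescribed by this guide.

```python
from dataclasses import dataclass, field


@dataclass
class CaseStudyProtocol:
    """Hypothetical record of the items a case study protocol should cover."""
    research_question: str
    case_boundaries: str  # e.g. time period and specific context
    data_sources: list[str] = field(default_factory=list)
    collection_methods: list[str] = field(default_factory=list)
    analysis_procedures: list[str] = field(default_factory=list)
    access_arrangements: str = ""  # how entry to the research site is negotiated

    def missing_sections(self) -> list[str]:
        """Return the names of protocol sections that are still empty."""
        return [name for name, value in vars(self).items() if not value]


# Illustrative values only; they do not come from a real study.
protocol = CaseStudyProtocol(
    research_question="How do staff adopt a new scheduling system?",
    case_boundaries="One clinic, January to June",
    data_sources=["interviews", "meeting minutes"],
    collection_methods=["semi-structured interviews", "document review"],
)
print(protocol.missing_sections())
```

Listing the empty sections makes it easy to see which parts of the protocol still need to be written, here the analysis procedures and the access arrangements.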

Collecting data

Gathering data in case study research often involves multiple sources of evidence, including documents, archival records, interviews, observations, and physical artifacts. This allows for a comprehensive understanding of the case. The process for gathering data should be systematic and carefully documented to ensure the reliability and validity of the study.

Analyzing and interpreting data

The next step is analyzing the data. This involves organizing the data, categorizing it into themes or patterns, and interpreting these patterns to answer the research question. The analysis might also involve comparing the findings with prior research or theoretical propositions.

Writing the case study report

The final step is writing the case study report. This should provide a detailed description of the case, the data, the analysis process, and the findings. The report should be clear, organized, and carefully written to ensure that the reader can understand the case and the conclusions drawn from it.

Each of these steps is crucial in ensuring that the case study research is rigorous, reliable, and provides valuable insights about the case.

The type, depth, and quality of data in your study can significantly influence the validity and utility of the study. In case study research, data is usually collected from multiple sources to provide a comprehensive and nuanced understanding of the case. This section will outline the various methods of collecting data used in case study research and discuss considerations for ensuring the quality of the data.

Interviews

Interviews are a common method of gathering data in case study research. They can provide rich, in-depth data about the perspectives, experiences, and interpretations of the individuals involved in the case. Interviews can be structured, semi-structured, or unstructured, depending on the research question and the degree of flexibility needed.

Observations

Observations involve the researcher observing the case in its natural setting, providing first-hand information about the case and its context. Observations can provide data that might not be revealed in interviews or documents, such as non-verbal cues or contextual information.

Documents and artifacts

Documents and archival records provide a valuable source of data in case study research. They can include reports, letters, memos, meeting minutes, email correspondence, and various public and private documents related to the case.

These records can provide historical context, corroborate evidence from other sources, and offer insights into the case that might not be apparent from interviews or observations.

Physical artifacts refer to any physical evidence related to the case, such as tools, products, or physical environments. These artifacts can provide tangible insights into the case, complementing the data gathered from other sources.

Ensuring the quality of data collection

Ensuring the quality of data in case study research requires careful planning and execution. It's crucial to ensure that the data is reliable, accurate, and relevant to the research question. This involves selecting appropriate methods of collecting data, properly training interviewers or observers, and systematically recording and storing the data. It also includes considering ethical issues related to collecting and handling data, such as obtaining informed consent and ensuring the privacy and confidentiality of the participants.

Data analysis

Analyzing case study research involves making sense of the rich, detailed data to answer the research question. This process can be challenging due to the volume and complexity of case study data. However, a systematic and rigorous approach to analysis can ensure that the findings are credible and meaningful. This section outlines the main steps and considerations in analyzing data in case study research.

Organizing the data

The first step in the analysis is organizing the data. This involves sorting the data into manageable sections, often according to the data source or the theme. This step can also involve transcribing interviews, digitizing physical artifacts, or organizing observational data.

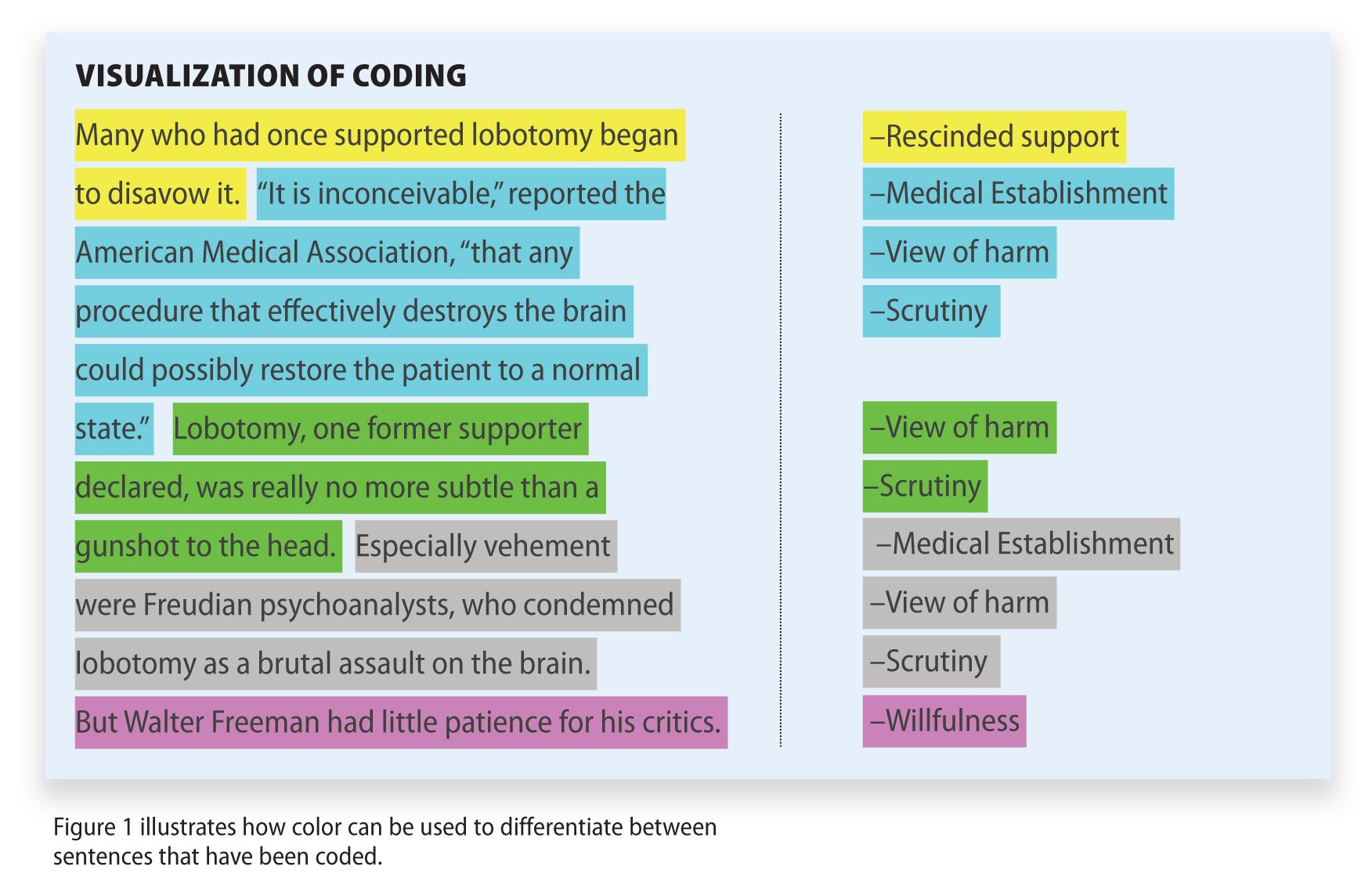

Categorizing and coding the data

Once the data is organized, the next step is to categorize or code the data. This involves identifying common themes, patterns, or concepts in the data and assigning codes to relevant data segments. Coding can be done manually or with the help of software tools; qualitative analysis software can greatly facilitate the coding process. Coding helps to reduce the data to a set of themes or categories that can be more easily analyzed.
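To make the idea of assigning codes to data segments concrete, here is a minimal sketch in Python. It uses simple keyword matching, which is a crude stand-in for a researcher's interpretive judgment and is not how qualitative analysis software works internally. The codebook, the `code_segment` helper, and the interview excerpts are all invented for illustration.

```python
from collections import Counter

# Hypothetical codebook: each code maps to keywords that suggest it.
CODEBOOK = {
    "triggers": ["pollen", "smoke", "cold air"],
    "management": ["inhaler", "medication"],
}


def code_segment(segment: str) -> list[str]:
    """Return every code whose keywords appear in the segment."""
    text = segment.lower()
    return [
        code
        for code, keywords in CODEBOOK.items()
        if any(keyword in text for keyword in keywords)
    ]


# Made-up excerpts, not real participant data.
segments = [
    "My symptoms get worse around smoke and pollen.",
    "I carry my inhaler everywhere.",
    "Cold air sets it off, so I take medication before running.",
]

# Pair each segment with its codes, then count how often each code was applied.
coded = [(segment, code_segment(segment)) for segment in segments]
code_counts = Counter(code for _, codes in coded for code in codes)
print(code_counts)
```

A segment can carry more than one code, as the third excerpt does, and the frequency table shows how coding reduces the raw text to a smaller set of categories.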

Identifying patterns and themes

After coding the data, the researcher looks for patterns or themes in the coded data. This involves comparing and contrasting the codes and looking for relationships or patterns among them. The identified patterns and themes should help answer the research question.
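One simple way to look for relationships among codes is to count how often two codes are applied to the same segment. The sketch below assumes each segment has already been coded and is represented as a list of code names; the function name `code_cooccurrence` and the sample codes are hypothetical, and co-occurrence counts are only a starting point for the comparison described above, not a substitute for it.

```python
from collections import Counter
from itertools import combinations

# Hypothetical output of an earlier coding pass: one list of codes per segment.
coded_segments = [
    ["triggers", "environment"],
    ["management"],
    ["triggers", "management"],
    ["triggers", "environment", "management"],
]


def code_cooccurrence(coded):
    """Count how many segments each unordered pair of codes shares."""
    pairs = Counter()
    for codes in coded:
        # Sort and de-duplicate so (a, b) and (b, a) are counted as one pair.
        for first, second in combinations(sorted(set(codes)), 2):
            pairs[(first, second)] += 1
    return pairs


pairs = code_cooccurrence(coded_segments)
for pair, count in pairs.most_common():
    print(pair, count)
```

Pairs that appear together frequently are candidates for a broader theme, which the researcher would then check against the underlying data.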

Interpreting the data

Once patterns and themes have been identified, the next step is to interpret these findings. This involves explaining what the patterns or themes mean in the context of the research question and the case. This interpretation should be grounded in the data, but it can also involve drawing on theoretical concepts or prior research.

Verification of the data

The last step in the analysis is verification. This involves checking the accuracy and consistency of the analysis process and confirming that the findings are supported by the data. This can involve re-checking the original data, checking the consistency of codes, or seeking feedback from research participants or peers.
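The text does not name a specific technique for checking the consistency of codes. One commonly used option, offered here as an assumption rather than as this guide's recommendation, is to have two people code the same segments independently and compute Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The function and sample labels below are illustrative.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one code per segment."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of segments.")
    n = len(coder_a)
    # Proportion of segments where the two coders chose the same code.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's own code frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Made-up labels for four segments.
coder_a = ["triggers", "triggers", "management", "management"]
coder_b = ["triggers", "management", "management", "management"]
kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 2))
```

Here the coders agree on three of four segments (0.75), chance agreement is 0.5, and kappa is 0.5. A low value would prompt the coders to revisit the code definitions together.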

Like any research method, case study research has its strengths and limitations. Researchers must be aware of these, as they can influence the design, conduct, and interpretation of the study.

Understanding the strengths and limitations of case study research can also guide researchers in deciding whether this approach is suitable for their research question. This section outlines some of the key strengths and limitations of case study research.

Benefits include the following:

- Rich, detailed data: One of the main strengths of case study research is that it can generate rich, detailed data about the case. This can provide a deep understanding of the case and its context, which can be valuable in exploring complex phenomena.

- Flexibility: Case study research is flexible in terms of design, data collection, and analysis. A sufficient degree of flexibility allows the researcher to adapt the study according to the case and the emerging findings.

- Real-world context: Case study research involves studying the case in its real-world context, which can provide valuable insights into the interplay between the case and its context.

- Multiple sources of evidence: Case study research often involves collecting data from multiple sources, which can enhance the robustness and validity of the findings.

On the other hand, researchers should consider the following limitations:

- Generalizability: A common criticism of case study research is that its findings might not be generalizable to other cases due to the specificity and uniqueness of each case.

- Time and resource intensive: Case study research can be time and resource intensive due to the depth of the investigation and the amount of collected data.

- Complexity of analysis: The rich, detailed data generated in case study research can make analyzing the data challenging.

- Subjectivity: Given the nature of case study research, there may be a higher degree of subjectivity in interpreting the data, so researchers need to reflect on this and transparently convey to audiences how the research was conducted.

Being aware of these strengths and limitations can help researchers design and conduct case study research effectively and interpret and report the findings appropriately.

Can J Hosp Pharm, v.68(3); May-Jun 2015

Qualitative Research: Data Collection, Analysis, and Management

INTRODUCTION

In an earlier paper, 1 we presented an introduction to using qualitative research methods in pharmacy practice. In this article, we review some principles of the collection, analysis, and management of qualitative data to help pharmacists interested in doing research in their practice to continue their learning in this area. Qualitative research can help researchers to access the thoughts and feelings of research participants, which can enable development of an understanding of the meaning that people ascribe to their experiences. Whereas quantitative research methods can be used to determine how many people undertake particular behaviours, qualitative methods can help researchers to understand how and why such behaviours take place. Within the context of pharmacy practice research, qualitative approaches have been used to examine a diverse array of topics, including the perceptions of key stakeholders regarding prescribing by pharmacists and the postgraduation employment experiences of young pharmacists (see “Further Reading” section at the end of this article).

In the previous paper, 1 we outlined 3 commonly used methodologies: ethnography 2 , grounded theory 3 , and phenomenology. 4 Briefly, ethnography involves researchers using direct observation to study participants in their “real life” environment, sometimes over extended periods. Grounded theory and its later modified versions (e.g., Strauss and Corbin 5 ) use face-to-face interviews and interactions such as focus groups to explore a particular research phenomenon and may help in clarifying a less-well-understood problem, situation, or context. Phenomenology shares some features with grounded theory (such as an exploration of participants’ behaviour) and uses similar techniques to collect data, but it focuses on understanding how human beings experience their world. It gives researchers the opportunity to put themselves in another person’s shoes and to understand the subjective experiences of participants. 6 Some researchers use qualitative methodologies but adopt a different standpoint, and an example of this appears in the work of Thurston and others, 7 discussed later in this paper.

Qualitative work requires reflection on the part of researchers, both before and during the research process, as a way of providing context and understanding for readers. When being reflexive, researchers should not try to simply ignore or avoid their own biases (as this would likely be impossible); instead, reflexivity requires researchers to reflect upon and clearly articulate their position and subjectivities (world view, perspectives, biases), so that readers can better understand the filters through which questions were asked, data were gathered and analyzed, and findings were reported. From this perspective, bias and subjectivity are not inherently negative but they are unavoidable; as a result, it is best that they be articulated up-front in a manner that is clear and coherent for readers.

THE PARTICIPANT’S VIEWPOINT

What qualitative study seeks to convey is why people have thoughts and feelings that might affect the way they behave. Such study may occur in any number of contexts, but here, we focus on pharmacy practice and the way people behave with regard to medicines use (e.g., to understand patients’ reasons for nonadherence with medication therapy or to explore physicians’ resistance to pharmacists’ clinical suggestions). As we suggested in our earlier article, 1 an important point about qualitative research is that there is no attempt to generalize the findings to a wider population. Qualitative research is used to gain insights into people’s feelings and thoughts, which may provide the basis for a future stand-alone qualitative study or may help researchers to map out survey instruments for use in a quantitative study. It is also possible to use different types of research in the same study, an approach known as “mixed methods” research, and further reading on this topic may be found at the end of this paper.

The role of the researcher in qualitative research is to attempt to access the thoughts and feelings of study participants. This is not an easy task, as it involves asking people to talk about things that may be very personal to them. Sometimes the experiences being explored are fresh in the participant’s mind, whereas on other occasions reliving past experiences may be difficult. However the data are being collected, a primary responsibility of the researcher is to safeguard participants and their data. Mechanisms for such safeguarding must be clearly articulated to participants and must be approved by a relevant research ethics review board before the research begins. Researchers and practitioners new to qualitative research should seek advice from an experienced qualitative researcher before embarking on their project.

DATA COLLECTION

Whatever philosophical standpoint the researcher is taking and whatever the data collection method (e.g., focus group, one-to-one interviews), the process will involve the generation of large amounts of data. In addition to the variety of study methodologies available, there are also different ways of making a record of what is said and done during an interview or focus group, such as taking handwritten notes or video-recording. If the researcher is audio- or video-recording data collection, then the recordings must be transcribed verbatim before data analysis can begin. As a rough guide, it can take an experienced researcher/transcriber 8 hours to transcribe one 45-minute audio-recorded interview, a process that will generate 20–30 pages of written dialogue.
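The rough guide above (8 hours and 20 to 30 pages per 45-minute interview) can be turned into a quick planning estimate for a whole study. The sketch below assumes that effort scales linearly with recording length, which is our simplifying assumption and not a claim made in the article; the function name and the 12-interview example are hypothetical.

```python
# Figures taken from the rough guide in the text, per 45-minute interview.
HOURS_PER_45_MIN = 8
PAGES_PER_45_MIN = (20, 30)


def transcription_estimate(n_interviews, minutes_each=45):
    """Estimate transcription hours and page range, assuming linear scaling."""
    scale = n_interviews * minutes_each / 45
    return {
        "hours": HOURS_PER_45_MIN * scale,
        "pages_low": PAGES_PER_45_MIN[0] * scale,
        "pages_high": PAGES_PER_45_MIN[1] * scale,
    }


# Example: a study with twelve 45-minute interviews.
estimate = transcription_estimate(12)
print(estimate)
```

For twelve interviews this works out to 96 hours of transcription and 240 to 360 pages of dialogue, which illustrates why transcription time should be budgeted before data collection starts.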

Many researchers will also maintain a folder of “field notes” to complement audio-taped interviews. Field notes allow the researcher to maintain and comment upon impressions, environmental contexts, behaviours, and nonverbal cues that may not be adequately captured through the audio-recording; they are typically handwritten in a small notebook at the same time the interview takes place. Field notes can provide important context to the interpretation of audio-taped data and can help remind the researcher of situational factors that may be important during data analysis. Such notes need not be formal, but they should be maintained and secured in a similar manner to audio tapes and transcripts, as they contain sensitive information and are relevant to the research. For more information about collecting qualitative data, please see the “Further Reading” section at the end of this paper.

DATA ANALYSIS AND MANAGEMENT

If, as suggested earlier, doing qualitative research is about putting oneself in another person’s shoes and seeing the world from that person’s perspective, the most important part of data analysis and management is to be true to the participants. It is their voices that the researcher is trying to hear, so that they can be interpreted and reported on for others to read and learn from. To illustrate this point, consider the anonymized transcript excerpt presented in Appendix 1, which is taken from a research interview conducted by one of the authors (J.S.). We refer to this excerpt throughout the remainder of this paper to illustrate how data can be managed, analyzed, and presented.

Interpretation of Data

Interpretation of the data will depend on the theoretical standpoint taken by researchers. For example, the title of the research report by Thurston and others, 7 “Discordant indigenous and provider frames explain challenges in improving access to arthritis care: a qualitative study using constructivist grounded theory,” indicates at least 2 theoretical standpoints. The first is the culture of the indigenous population of Canada and the place of this population in society, and the second is the social constructivist theory used in the constructivist grounded theory method. With regard to the first standpoint, it can be surmised that, to have decided to conduct the research, the researchers must have felt that there was anecdotal evidence of differences in access to arthritis care for patients from indigenous and non-indigenous backgrounds. With regard to the second standpoint, it can be surmised that the researchers used social constructivist theory because it assumes that behaviour is socially constructed; in other words, people do things because of the expectations of those in their personal world or in the wider society in which they live. (Please see the “Further Reading” section for resources providing more information about social constructivist theory and reflexivity.) Thus, these 2 standpoints (and there may have been others relevant to the research of Thurston and others 7 ) will have affected the way in which these researchers interpreted the experiences of the indigenous population participants and those providing their care. Another standpoint is feminist standpoint theory which, among other things, focuses on marginalized groups in society. Such theories are helpful to researchers, as they enable us to think about things from a different perspective. Being aware of the standpoints you are taking in your own research is one of the foundations of qualitative work. 
Without such awareness, it is easy to slip into interpreting other people’s narratives from your own viewpoint, rather than that of the participants.

To analyze the example in Appendix 1, we will adopt a phenomenological approach because we want to understand how the participant experienced the illness and we want to try to see the experience from that person’s perspective. It is important for the researcher to reflect upon and articulate his or her starting point for such analysis; in this example, the coder could reflect upon her own experience as a female of a majority ethnocultural group who has lived within middle class and upper middle class settings. This personal history therefore forms the filter through which the data will be examined. This filter does not diminish the quality or significance of the analysis, since every researcher has his or her own filters; however, by explicitly stating and acknowledging what these filters are, the researcher makes it easier for readers to contextualize the work.

Transcribing and Checking

For the purposes of this paper it is assumed that interviews or focus groups have been audio-recorded. As mentioned above, transcribing is an arduous process, even for the most experienced transcribers, but it must be done to convert the spoken word to the written word to facilitate analysis. For anyone new to conducting qualitative research, it is beneficial to transcribe at least one interview and one focus group. It is only by doing this that researchers realize how difficult the task is, and this realization affects their expectations when asking others to transcribe. If the research project has sufficient funding, then a professional transcriber can be hired to do the work. If this is the case, then it is a good idea to sit down with the transcriber, if possible, and talk through the research and what the participants were talking about. This background knowledge for the transcriber is especially important in research in which people are using jargon or medical terms (as in pharmacy practice). Involving your transcriber in this way makes the work both easier and more rewarding, as he or she will feel part of the team. Transcription editing software is also available, but it is expensive. For example, ELAN (more formally known as EUDICO Linguistic Annotator, developed at the Technical University of Berlin) 8 is a tool that can help keep data organized by linking media and data files (particularly valuable if, for example, video-taping of interviews is complemented by transcriptions). It can also be helpful in searching complex data sets. Products such as ELAN do not actually automatically transcribe interviews or complete analyses, and they do require some time and effort to learn; nonetheless, for some research applications, it may be valuable to consider such software tools.

All audio recordings should be transcribed verbatim, regardless of how intelligible the transcript may be when it is read back. Lines of text should be numbered. Once the transcription is complete, the researcher should read it while listening to the recording and do the following: correct any spelling or other errors; anonymize the transcript so that the participant cannot be identified from anything that is said (e.g., names, places, significant events); insert notations for pauses, laughter, looks of discomfort; insert any punctuation, such as commas and full stops (periods) (see Appendix 1 for examples of inserted punctuation), and include any other contextual information that might have affected the participant (e.g., temperature or comfort of the room).
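
Two of the checking steps above, anonymizing and line numbering, are mechanical enough to sketch in code. The example below is an illustration only: the function name, the sample text, and the names "Dr Patel" and "Riverside Hospital" are all hypothetical, and a real transcript would still need to be read against the recording, since a simple search cannot find every identifying detail.

```python
import re


def prepare_transcript(raw_text, replacements):
    """Replace identifying terms with placeholders, then number each line."""
    text = raw_text
    for identifier, placeholder in replacements.items():
        # Whole-word, case-insensitive match so "dr patel" is also caught.
        pattern = re.compile(r"\b" + re.escape(identifier) + r"\b", re.IGNORECASE)
        text = pattern.sub(lambda _match, p=placeholder: p, text)
    # Drop empty lines and number the rest, starting at 1.
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    return [f"{number:>3}  {line}" for number, line in enumerate(lines, start=1)]


# Hypothetical raw transcript and identifiers (not taken from Appendix 1).
raw = (
    "I saw Dr Patel at Riverside Hospital.\n"
    "\n"
    "[pause] Then dr patel asked me questions.\n"
)
replacements = {"Dr Patel": "Dr XXX", "Riverside Hospital": "[hospital]"}

numbered = prepare_transcript(raw, replacements)
for line in numbered:
    print(line)
```

Numbering the lines at this stage means that codes and quotations can later be traced back to an exact place in the transcript.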

Dealing with the transcription of a focus group is slightly more difficult, as multiple voices are involved. One way of transcribing such data is to “tag” each voice (e.g., Voice A, Voice B). In addition, the focus group will usually have 2 facilitators, whose respective roles will help in making sense of the data. While one facilitator guides participants through the topic, the other can make notes about context and group dynamics. More information about group dynamics and focus groups can be found in resources listed in the “Further Reading” section.

Reading between the Lines

During the process outlined above, the researcher can begin to get a feel for the participant’s experience of the phenomenon in question and can start to think about things that could be pursued in subsequent interviews or focus groups (if appropriate). In this way, one participant’s narrative informs the next, and the researcher can continue to interview until nothing new is being heard or, as it says in the textbooks, “saturation is reached”. While continuing with the processes of coding and theming (described in the next 2 sections), it is important to consider not just what the person is saying but also what they are not saying. For example, is a lengthy pause an indication that the participant is finding the subject difficult, or is the person simply deciding what to say? The aim of the whole process from data collection to presentation is to tell the participants’ stories using exemplars from their own narratives, thus grounding the research findings in the participants’ lived experiences.

Smith 9 suggested a qualitative research method known as interpretative phenomenological analysis, which has 2 basic tenets: first, that it is rooted in phenomenology, attempting to understand the meaning that individuals ascribe to their lived experiences, and second, that the researcher must attempt to interpret this meaning in the context of the research. That the researcher has some knowledge and expertise in the subject of the research means that he or she can have considerable scope in interpreting the participant’s experiences. Larkin and others 10 discussed the importance of not just providing a description of what participants say. Rather, interpretative phenomenological analysis is about getting underneath what a person is saying to try to truly understand the world from his or her perspective.

Coding

Once all of the research interviews have been transcribed and checked, it is time to begin coding. Field notes compiled during an interview can be a useful complementary source of information to facilitate this process, as the gap in time between an interview, transcribing, and coding can result in memory bias regarding nonverbal or environmental context issues that may affect interpretation of data.

Coding refers to the identification of topics, issues, similarities, and differences that are revealed through the participants’ narratives and interpreted by the researcher. This process enables the researcher to begin to understand the world from each participant’s perspective. Coding can be done by hand on a hard copy of the transcript, by making notes in the margin or by highlighting and naming sections of text. More commonly, researchers use qualitative research software (e.g., NVivo, QSR International Pty Ltd; www.qsrinternational.com/products_nvivo.aspx) to help manage their transcriptions. It is advised that researchers undertake a formal course in the use of such software or seek supervision from a researcher experienced in these tools.

Returning to Appendix 1 and reading from lines 8–11, a code for this section might be “diagnosis of mental health condition”, but this would just be a description of what the participant is talking about at that point. If we read a little more deeply, we can ask ourselves how the participant might have come to feel that the doctor assumed he or she was aware of the diagnosis or indeed that they had only just been told the diagnosis. There are a number of pauses in the narrative that might suggest the participant is finding it difficult to recall that experience. Later in the text, the participant says “nobody asked me any questions about my life” (line 19). This could be coded simply as “health care professionals’ consultation skills”, but that would not reflect how the participant must have felt never to be asked anything about his or her personal life, about the participant as a human being. At the end of this excerpt, the participant just trails off, recalling that no one showed any interest, which makes for very moving reading. For practitioners in pharmacy, it might also be pertinent to explore the participant’s experience of akathisia and why this was left untreated for 20 years.
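
For researchers who keep their codes in a script or spreadsheet rather than in dedicated software, the coding described above can be recorded as a small data structure. This is a minimal sketch, not a substitute for tools such as NVivo; the class name, field names, and memo wording are assumptions made for illustration, while the line ranges and descriptive codes are the ones discussed above.

```python
from dataclasses import dataclass


@dataclass
class CodedSegment:
    """One coded span of a numbered transcript (hypothetical structure)."""
    start_line: int
    end_line: int
    code: str       # short descriptive label
    memo: str = ""  # the researcher's deeper, interpretive reading


segments = [
    CodedSegment(
        8, 11,
        "diagnosis of mental health condition",
        "Pauses may suggest difficulty recalling how the diagnosis was disclosed.",
    ),
    CodedSegment(
        19, 19,
        "health care professionals' consultation skills",
        "Participant was never asked about his or her life as a person.",
    ),
]


def codes_for_line(coded_segments, line_number):
    """Return every code whose line range covers the given transcript line."""
    return [
        segment.code
        for segment in coded_segments
        if segment.start_line <= line_number <= segment.end_line
    ]


print(codes_for_line(segments, 9))
```

Keeping the descriptive code and the interpretive memo in separate fields mirrors the distinction drawn above between describing what was said and reading more deeply into it.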

One of the questions that arises about qualitative research relates to the reliability of the interpretation and representation of the participants’ narratives. There are no statistical tests that can be used to check reliability and validity as there are in quantitative research. However, work by Lincoln and Guba 11 suggests that there are other ways to “establish confidence in the ‘truth’ of the findings” (p. 218). They call this confidence “trustworthiness” and suggest that there are 4 criteria of trustworthiness: credibility (confidence in the “truth” of the findings), transferability (showing that the findings have applicability in other contexts), dependability (showing that the findings are consistent and could be repeated), and confirmability (the extent to which the findings of a study are shaped by the respondents and not researcher bias, motivation, or interest).

One way of establishing the “credibility” of the coding is to ask another researcher to code the same transcript and then to discuss any similarities and differences in the 2 resulting sets of codes. This simple act can result in revisions to the codes and can help to clarify and confirm the research findings.
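
The comparison between two coders can start from a simple listing of where their code sets agree and differ. The sketch below assumes each coder's work has been reduced to a list of code labels; the function name and the second coder's labels are hypothetical. It is not a statistical test of reliability, only a way of structuring the discussion between the two researchers.

```python
def compare_code_sets(first_codes, second_codes):
    """List codes both coders used and codes unique to each coder."""
    first, second = set(first_codes), set(second_codes)
    return {
        "shared": sorted(first & second),
        "only_first": sorted(first - second),
        "only_second": sorted(second - first),
    }


# Hypothetical code lists from two researchers coding the same transcript.
first_coder = [
    "diagnosis of mental health condition",
    "health care professionals' consultation skills",
]
second_coder = [
    "diagnosis of mental health condition",
    "lack of interest in personal experiences",
]

comparison = compare_code_sets(first_coder, second_coder)
print(comparison)
```

Codes that appear under only one coder are the natural starting points for the discussion, since they may reflect a different reading of the same passage or simply a different label for the same idea.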

Theming

Theming refers to the drawing together of codes from one or more transcripts to present the findings of qualitative research in a coherent and meaningful way. For example, there may be examples across participants’ narratives of the way in which they were treated in hospital, such as “not being listened to” or “lack of interest in personal experiences” (see Appendix 1). These may be drawn together as a theme running through the narratives that could be named “the patient’s experience of hospital care”. The importance of going through this process is that at its conclusion, it will be possible to present the data from the interviews using quotations from the individual transcripts to illustrate the source of the researchers’ interpretations. Thus, when the findings are organized for presentation, each theme can become the heading of a section in the report or presentation. Underneath each theme will be the codes, examples from the transcripts, and the researcher’s own interpretation of what the themes mean. Implications for real life (e.g., the treatment of people with chronic mental health problems) should also be given.
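
The theme, code, and quotation hierarchy described above can be sketched as nested dictionaries. This is an illustrative structure only; the function name and the assignment of particular quotations to particular codes are assumptions made here, while the theme name, code labels, and quotations come from the discussion above and from Appendix 1.

```python
from collections import defaultdict

# Which theme each code belongs to (theme and code names from the text).
code_to_theme = {
    "not being listened to": "the patient's experience of hospital care",
    "lack of interest in personal experiences": "the patient's experience of hospital care",
}

# (code, quotation) pairs; assigning these quotations to this code is an
# illustrative choice, not the authors' own coding.
coded_quotes = [
    ("lack of interest in personal experiences",
     "nobody asked me any questions about my life"),
    ("lack of interest in personal experiences",
     "nobody actually sat down and had a talk and showed some interest in you as a person"),
]


def group_by_theme(quotes, theme_lookup):
    """Build theme -> code -> list of quotations."""
    themes = defaultdict(lambda: defaultdict(list))
    for code, quote in quotes:
        theme = theme_lookup.get(code, "unthemed")
        themes[theme][code].append(quote)
    return {theme: dict(codes) for theme, codes in themes.items()}


findings = group_by_theme(coded_quotes, code_to_theme)
for theme, codes in findings.items():
    print(theme)
    for code, quotes in codes.items():
        print("  " + code)
        for quote in quotes:
            print('    "' + quote + '"')
```

Each top-level key then corresponds to a section heading in the report, with the codes and supporting quotations listed beneath it.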

DATA SYNTHESIS

In this final section of this paper, we describe some ways of drawing together or “synthesizing” research findings to represent, as faithfully as possible, the meaning that participants ascribe to their life experiences. This synthesis is the aim of the final stage of qualitative research. For most readers, the synthesis of data presented by the researcher is of crucial significance—this is usually where “the story” of the participants can be distilled, summarized, and told in a manner that is both respectful to those participants and meaningful to readers. There are a number of ways in which researchers can synthesize and present their findings, but any conclusions drawn by the researchers must be supported by direct quotations from the participants. In this way, it is made clear to the reader that the themes under discussion have emerged from the participants’ interviews and not the mind of the researcher. The work of Latif and others 12 gives an example of how qualitative research findings might be presented.

Planning and Writing the Report

As has been suggested above, if researchers code and theme their material appropriately, they will naturally find the headings for sections of their report. Qualitative researchers tend to report “findings” rather than “results”, as the latter term typically implies that the data have come from a quantitative source. The final presentation of the research will usually be in the form of a report or a paper and so should follow accepted academic guidelines. In particular, the article should begin with an introduction, including a literature review and rationale for the research. There should be a section on the chosen methodology and a brief discussion about why qualitative methodology was most appropriate for the study question and why one particular methodology (e.g., interpretative phenomenological analysis rather than grounded theory) was selected to guide the research. The method itself should then be described, including ethics approval, choice of participants, mode of recruitment, and method of data collection (e.g., semistructured interviews or focus groups), followed by the research findings, which will be the main body of the report or paper. The findings should be written as if a story is being told; as such, it is not necessary to have a lengthy discussion section at the end. This is because much of the discussion will take place around the participants’ quotes, such that all that is needed to close the report or paper is a summary, limitations of the research, and the implications that the research has for practice. As stated earlier, it is not the intention of qualitative research to allow the findings to be generalized, and therefore this is not, in itself, a limitation.

Planning out the way that findings are to be presented is helpful. It is useful to insert the headings of the sections (the themes) and then make a note of the codes that exemplify the thoughts and feelings of your participants. It is generally advisable to put in the quotations that you want to use for each theme, using each quotation only once. After all this is done, the telling of the story can begin as you give your voice to the experiences of the participants, writing around their quotations. Do not be afraid to draw assumptions from the participants’ narratives, as this is necessary to give an in-depth account of the phenomena in question. Discuss these assumptions, drawing on your participants’ words to support you as you move from one code to another and from one theme to the next. Finally, as appropriate, it is possible to include examples from literature or policy documents that add support for your findings. As an exercise, you may wish to code and theme the sample excerpt in Appendix 1 and tell the participant’s story in your own way. Further reading about “doing” qualitative research can be found at the end of this paper.
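
The advice to use each quotation only once is easy to check once the outline has been drafted. The sketch below assumes the outline is kept as a mapping from theme headings to the quotations planned for each; the function name and the second theme heading are hypothetical, and the duplicate is included deliberately to show what the check reports.

```python
from collections import Counter


def reused_quotations(outline):
    """Return quotations that appear more than once across the outline."""
    counts = Counter(
        quote for quotes in outline.values() for quote in quotes
    )
    return sorted(quote for quote, count in counts.items() if count > 1)


# Draft outline: theme heading -> quotations planned for that section.
# "living with side effects" is a hypothetical theme used for illustration.
outline = {
    "the patient's experience of hospital care": [
        "nobody asked me any questions about my life",
        "nobody actually sat down and had a talk and showed some interest in you as a person",
    ],
    "living with side effects": [
        "I suffered that akathesia I swear to you, every minute of every day for about 20 years",
        "nobody asked me any questions about my life",  # deliberate duplicate
    ],
}

print(reused_quotations(outline))
```

Any quotation the check reports should be assigned to the single theme it illustrates best before writing begins.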

CONCLUSIONS

Qualitative research can help researchers to access the thoughts and feelings of research participants, which can enable development of an understanding of the meaning that people ascribe to their experiences. It can be used in pharmacy practice research to explore how patients feel about their health and their treatment. Qualitative research has been used by pharmacists to explore a variety of questions and problems (see the “Further Reading” section for examples). An understanding of these issues can help pharmacists and other health care professionals to tailor health care to match the individual needs of patients and to develop a concordant relationship. Doing qualitative research is not easy and may require a complete rethink of how research is conducted, particularly for researchers who are more familiar with quantitative approaches. There are many ways of conducting qualitative research, and this paper has covered some of the practical issues regarding data collection, analysis, and management. Further reading around the subject will be essential to truly understand this method of accessing people’s thoughts and feelings to enable researchers to tell participants’ stories.

Appendix 1. Excerpt from a sample transcript

The participant (age late 50s) had suffered from a chronic mental health illness for 30 years. The participant had become a “revolving door patient,” someone who is frequently in and out of hospital. As the participant talked about past experiences, the researcher asked:

- What was treatment like 30 years ago?

- Umm—well it was pretty much they could do what they wanted with you because I was put into the er, the er kind of system er, I was just on

- endless section threes.

- Really…

- But what I didn’t realize until later was that if you haven’t actually posed a threat to someone or yourself they can’t really do that but I didn’t know

- that. So wh-when I first went into hospital they put me on the forensic ward ’cause they said, “We don’t think you’ll stay here we think you’ll just

- run-run away.” So they put me then onto the acute admissions ward and – er – I can remember one of the first things I recall when I got onto that

- ward was sitting down with a er a Dr XXX. He had a book this thick [gestures] and on each page it was like three questions and he went through

- all these questions and I answered all these questions. So we’re there for I don’t maybe two hours doing all that and he asked me he said “well

- when did somebody tell you then that you have schizophrenia” I said “well nobody’s told me that” so he seemed very surprised but nobody had

- actually [pause] whe-when I first went up there under police escort erm the senior kind of consultants people I’d been to where I was staying and

- ermm so er [pause] I . . . the, I can remember the very first night that I was there and given this injection in this muscle here [gestures] and just

- having dreadful side effects the next day I woke up [pause]

- . . . and I suffered that akathesia I swear to you, every minute of every day for about 20 years.

- Oh how awful.

- And that side of it just makes life impossible so the care on the wards [pause] umm I don’t know it’s kind of, it’s kind of hard to put into words

- [pause]. Because I’m not saying they were sort of like not friendly or interested but then nobody ever seemed to want to talk about your life [pause]

- nobody asked me any questions about my life. The only questions that came into was they asked me if I’d be a volunteer for these student exams

- and things and I said “yeah” so all the questions were like “oh what jobs have you done,” er about your relationships and things and er but

- nobody actually sat down and had a talk and showed some interest in you as a person you were just there basically [pause] um labelled and you

- know there was there was [pause] but umm [pause] yeah . . .

This article is the 10th in the CJHP Research Primer Series, an initiative of the CJHP Editorial Board and the CSHP Research Committee. The planned 2-year series is intended to appeal to relatively inexperienced researchers, with the goal of building research capacity among practising pharmacists. The articles, presenting simple but rigorous guidance to encourage and support novice researchers, are being solicited from authors with appropriate expertise.

Previous articles in this series:

Bond CM. The research jigsaw: how to get started. Can J Hosp Pharm. 2014;67(1):28–30.

Tully MP. Research: articulating questions, generating hypotheses, and choosing study designs. Can J Hosp Pharm. 2014;67(1):31–4.

Loewen P. Ethical issues in pharmacy practice research: an introductory guide. Can J Hosp Pharm. 2014;67(2):133–7.

Tsuyuki RT. Designing pharmacy practice research trials. Can J Hosp Pharm. 2014;67(3):226–9.

Bresee LC. An introduction to developing surveys for pharmacy practice research. Can J Hosp Pharm. 2014;67(4):286–91.

Gamble JM. An introduction to the fundamentals of cohort and case–control studies. Can J Hosp Pharm. 2014;67(5):366–72.

Austin Z, Sutton J. Qualitative research: getting started. Can J Hosp Pharm. 2014;67(6):436–40.

Houle S. An introduction to the fundamentals of randomized controlled trials in pharmacy research. Can J Hosp Pharm. 2015;68(1):28–32.

Charrois TL. Systematic reviews: What do you need to know to get started? Can J Hosp Pharm. 2015;68(2):144–8.

Competing interests: None declared.

Further Reading

Examples of qualitative research in pharmacy practice.

- Farrell B, Pottie K, Woodend K, Yao V, Dolovich L, Kennie N, et al. Shifts in expectations: evaluating physicians’ perceptions as pharmacists integrated into family practice. J Interprof Care. 2010;24(1):80–9.

- Gregory P, Austin Z. Postgraduation employment experiences of new pharmacists in Ontario in 2012–2013. Can Pharm J. 2014;147(5):290–9.

- Marks PZ, Jennings B, Farrell B, Kennie-Kaulbach N, Jorgenson D, Pearson-Sharpe J, et al. “I gained a skill and a change in attitude”: a case study describing how an online continuing professional education course for pharmacists supported achievement of its transfer to practice outcomes. Can J Univ Contin Educ. 2014;40(2):1–18.

- Nair KM, Dolovich L, Brazil K, Raina P. It’s all about relationships: a qualitative study of health researchers’ perspectives on interdisciplinary research. BMC Health Serv Res. 2008;8:110.

- Pojskic N, MacKeigan L, Boon H, Austin Z. Initial perceptions of key stakeholders in Ontario regarding independent prescriptive authority for pharmacists. Res Soc Adm Pharm. 2014;10(2):341–54.

Qualitative Research in General

- Breakwell GM, Hammond S, Fife-Schaw C. Research methods in psychology. Thousand Oaks (CA): Sage Publications; 1995.

- Given LM. 100 questions (and answers) about qualitative research. Thousand Oaks (CA): Sage Publications; 2015.

- Miles B, Huberman AM. Qualitative data analysis. Thousand Oaks (CA): Sage Publications; 2009.

- Patton M. Qualitative research and evaluation methods. Thousand Oaks (CA): Sage Publications; 2002.

- Willig C. Introducing qualitative research in psychology. Buckingham (UK): Open University Press; 2001.

Group Dynamics in Focus Groups

- Farnsworth J, Boon B. Analysing group dynamics within the focus group. Qual Res. 2010;10(5):605–24.

Social Constructivism

- Social constructivism. Berkeley (CA): University of California, Berkeley, Berkeley Graduate Division, Graduate Student Instruction Teaching & Resource Center; [cited 2015 June 4]. Available from: http://gsi.berkeley.edu/gsi-guide-contents/learning-theory-research/social-constructivism/

Mixed Methods

- Creswell J. Research design: qualitative, quantitative, and mixed methods approaches. Thousand Oaks (CA): Sage Publications; 2009.

Collecting Qualitative Data

- Arksey H, Knight P. Interviewing for social scientists: an introductory resource with examples. Thousand Oaks (CA): Sage Publications; 1999.

- Guest G, Namey EE, Mitchell ML. Collecting qualitative data: a field manual for applied research. Thousand Oaks (CA): Sage Publications; 2013.

Constructivist Grounded Theory

- Charmaz K. Grounded theory: objectivist and constructivist methods. In: Denzin N, Lincoln Y, editors. Handbook of qualitative research. 2nd ed. Thousand Oaks (CA): Sage Publications; 2000. pp. 509–35.

Case Study Research Method in Psychology

Saul Mcleod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul Mcleod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.

Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.

Case studies are in-depth investigations of a person, group, event, or community. Typically, data is gathered from various sources using several methods (e.g., observations & interviews).

The case study research method originated in clinical medicine (the case history, i.e., the patient’s personal history). In psychology, case studies are often confined to the study of a particular individual.

The information is mainly biographical and relates to events in the individual’s past (i.e., retrospective), as well as to significant events that are currently occurring in his or her everyday life.

The case study is not itself a research method; rather, researchers select methods of data collection and analysis that will generate material suitable for case studies.

Freud (1909a, 1909b) conducted very detailed investigations into the private lives of his patients in an attempt to both understand and help them overcome their illnesses.

This makes it clear that the case study is a method that should only be used by a psychologist, therapist, or psychiatrist, i.e., someone with a professional qualification.

There is an ethical issue of competence. Only someone qualified to diagnose and treat a person can conduct a formal case study relating to atypical (i.e., abnormal) behavior or atypical development.

Famous Case Studies

- Anna O – One of the most famous case studies, documenting psychoanalyst Josef Breuer’s treatment of “Anna O” (real name Bertha Pappenheim) for hysteria in the late 1800s using early psychoanalytic theory.

- Little Hans – A child psychoanalysis case study published by Sigmund Freud in 1909 analyzing his five-year-old patient Herbert Graf’s horse phobia as related to the Oedipus complex.

- Bruce/Brenda – Gender identity case of a boy (Bruce) whose botched circumcision led psychologist John Money to advise gender reassignment; he was then raised as a girl (Brenda) in the 1960s.

- Genie Wiley – Linguistics/psychological development case of the victim of extreme isolation abuse who was studied in 1970s California for effects of early language deprivation on acquiring speech later in life.

- Phineas Gage – One of the most famous neuropsychology case studies, analyzing personality changes in railroad worker Phineas Gage after an 1848 brain injury in which a tamping iron pierced his skull.

Clinical Case Studies

- Studying the effectiveness of psychotherapy approaches with an individual patient

- Assessing and treating mental illnesses like depression, anxiety disorders, PTSD

- Neuropsychological cases investigating brain injuries or disorders

Child Psychology Case Studies

- Studying psychological development from birth through adolescence

- Cases of learning disabilities, autism spectrum disorders, ADHD

- Effects of trauma, abuse, deprivation on development

Types of Case Studies

- Explanatory case studies: Used to explore causation in order to find underlying principles. Helpful for doing qualitative analysis to explain presumed causal links.

- Exploratory case studies: Used to explore situations where an intervention being evaluated has no clear set of outcomes. Helpful for defining questions and hypotheses for future research.

- Descriptive case studies: Describe an intervention or phenomenon and the real-life context in which it occurred. Helpful for illustrating certain topics within an evaluation.

- Multiple-case studies: Used to explore differences between cases and replicate findings across cases. Helpful for comparing and contrasting specific cases.

- Intrinsic: Used to gain a better understanding of a particular case. Helpful for capturing the complexity of a single case.

- Collective: Used to explore a general phenomenon using multiple case studies. Helpful for jointly studying a group of cases in order to inquire into the phenomenon.

Where Do You Find Data for a Case Study?

There are several places to find data for a case study. The key is to gather data from multiple sources to get a complete picture of the case and corroborate facts or findings through triangulation of evidence. Most of this information is likely qualitative (i.e., verbal description rather than measurement), but the psychologist might also collect numerical data.

1. Primary sources

- Interviews – Interviewing key people related to the case to get their perspectives and insights. The interview is an extremely effective procedure for obtaining information about an individual, and it may be used to collect comments from the person’s friends, parents, employer, workmates, and others who have a good knowledge of the person, as well as to obtain facts from the person himself or herself.

- Observations – Observing behaviors, interactions, processes, etc., related to the case as they unfold in real-time.

- Documents & Records – Reviewing private documents, diaries, public records, correspondence, meeting minutes, etc., relevant to the case.

2. Secondary sources

- News/Media – News coverage of events related to the case study.

- Academic articles – Journal articles, dissertations etc. that discuss the case.

- Government reports – Official data and records related to the case context.

- Books/films – Books, documentaries or films discussing the case.

3. Archival records

Searching historical archives, museum collections and databases to find relevant documents, visual/audio records related to the case history and context.

Public archives like newspapers, organizational records, photographic collections could all include potentially relevant pieces of information to shed light on attitudes, cultural perspectives, common practices and historical contexts related to psychology.

4. Organizational records

Organizational records offer the advantage of often having large datasets collected over time that can reveal or confirm psychological insights.

Of course, privacy and ethical concerns regarding confidential data must be navigated carefully.

However, with proper protocols, organizational records can provide invaluable context and empirical depth to qualitative case studies exploring the intersection of psychology and organizations.

- Organizational/industrial psychology research: Organizational records like employee surveys, turnover/retention data, policies, incident reports, etc., may provide insight into topics like job satisfaction, workplace culture and dynamics, leadership issues, and employee behaviors.

- Clinical psychology: Therapists/hospitals may grant access to anonymized medical records to study aspects like assessments, diagnoses, and treatment plans. This could shed light on clinical practices.

- School psychology: Studies could utilize anonymized student records like test scores, grades, disciplinary issues, and counseling referrals to study child development, learning barriers, effectiveness of support programs, and more.
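The triangulation of evidence across the source types listed above can be illustrated with a small sketch. It assumes a hypothetical evidence log in which each finding is tagged with the type of source it came from; the findings, source labels, and the `corroborated_findings` helper are invented for illustration and are not part of any standard tool.

```python
from collections import defaultdict

# Hypothetical evidence log: (finding, source_type) pairs noted during a case study.
evidence = [
    ("sleep difficulties", "interview"),
    ("sleep difficulties", "medical record"),
    ("conflict at work", "interview"),
    ("conflict at work", "observation"),
    ("conflict at work", "organizational record"),
    ("childhood relocation", "interview"),
]


def corroborated_findings(evidence, min_sources=2):
    """Return findings supported by at least `min_sources` distinct source types."""
    sources_by_finding = defaultdict(set)
    for finding, source_type in evidence:
        sources_by_finding[finding].add(source_type)
    return {
        finding: sorted(sources)
        for finding, sources in sources_by_finding.items()
        if len(sources) >= min_sources
    }


corroborated = corroborated_findings(evidence)
for finding, sources in corroborated.items():
    print(f"{finding}: supported by {', '.join(sources)}")
```

A finding that rests on a single source (here, the childhood relocation) is not necessarily wrong, but it is a candidate for follow-up before it is reported as fact.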

How do I Write a Case Study in Psychology?

Follow specified case study guidelines provided by a journal or your psychology tutor. General components of clinical case studies include: background, symptoms, assessments, diagnosis, treatment, and outcomes. Interpreting the information means the researcher decides what to include or leave out. A good case study should always clarify which information is the factual description and which is an inference or the researcher’s opinion.

1. Introduction

- Provide background on the case context and why it is of interest, including demographics, relevant history, and the presenting problem.

- Compare briefly to similar published cases if applicable. Clearly state the focus/importance of the case.

2. Case Presentation

- Describe the presenting problem in detail, including symptoms, duration, and impact on daily life.

- Include client demographics like age and gender, information about social relationships, and mental health history.

- Describe all physical, emotional, and/or sensory symptoms reported by the client.

- Use patient quotes to describe the initial complaint verbatim. Follow with full-sentence summaries of relevant history details gathered, including key components that led to a working diagnosis.

- Summarize clinical exam results, namely orthopedic/neurological tests, imaging, lab tests, etc. Note actual results rather than subjective conclusions. Provide images if clearly reproducible/anonymized.

- Clearly state the working diagnosis or clinical impression before transitioning to management.

3. Management and Outcome

- Indicate the total duration of care and number of treatments given over what timeframe. Use specific names/descriptions for any therapies/interventions applied.

- Present the results of the intervention, including any quantitative or qualitative data collected.

- For outcomes, utilize visual analog scales for pain, medication usage logs, etc., if possible. Include patient self-reports of improvement/worsening of symptoms. Note the reason for discharge/end of care.
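As a rough illustration of reporting outcome data, the sketch below summarizes a series of pain ratings as a change from baseline. The ratings, the 0-10 scale, and the `change_from_baseline` helper are assumptions made for this example; a real case report would use whatever instrument and scoring rules were actually applied.

```python
# Hypothetical pain ratings on a 0-10 visual analog scale, one per session.
vas_scores = [7.5, 6.0, 5.5, 4.0, 3.0]


def change_from_baseline(scores):
    """Return the absolute and percentage change between the first and last rating."""
    baseline, final = scores[0], scores[-1]
    absolute = final - baseline
    # Percentage change is undefined when the baseline rating is zero.
    percent = 100 * absolute / baseline if baseline else None
    return absolute, percent


absolute, percent = change_from_baseline(vas_scores)
print(f"Change in pain rating: {absolute:+.1f} points ({percent:+.0f}%)")
```

Reporting the raw ratings alongside such a summary keeps the factual record separate from the interpretation.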

4. Discussion

- Analyze the case, exploring contributing factors, limitations of the study, and connections to existing research.

- Analyze the effectiveness of the intervention, considering factors like participant adherence, limitations of the study, and potential alternative explanations for the results.

- Identify any questions raised in the case analysis and relate insights to established theories and current research if applicable. Avoid definitive claims about physiological explanations.

- Offer clinical implications, and suggest future research directions.

5. Additional Items

- Thank specific assistants for writing support only. No patient acknowledgments.

- References should directly support any key claims or quotes included.

- Use tables/figures/images only if substantially informative. Include permissions and legends/explanatory notes.

Strengths

- Provides detailed (rich qualitative) information.

- Provides insight for further research.

- Permits investigation of otherwise impractical (or unethical) situations.

Case studies allow a researcher to investigate a topic in far more detail than might be possible if they were trying to deal with a large number of research participants (nomothetic approach) with the aim of ‘averaging’.

Because of their in-depth, multi-sided approach, case studies often shed light on aspects of human thinking and behavior that would be unethical or impractical to study in other ways.

Research that only looks into the measurable aspects of human behavior is not likely to give us insights into the subjective dimension of experience, which is important to psychoanalytic and humanistic psychologists.

Case studies are often used in exploratory research. They can help us generate new ideas (that might be tested by other methods). They are an important way of illustrating theories and can help show how different aspects of a person’s life are related to each other.

The method is, therefore, important for psychologists who adopt a holistic point of view (i.e., humanistic psychologists).

Limitations

- Lacking scientific rigor and providing little basis for generalization of results to the wider population.

- Researchers’ own subjective feelings may influence the case study (researcher bias).

- Difficult to replicate.

- Time-consuming and expensive.

- The volume of data, together with time restrictions, can limit the depth of analysis that is possible within the available resources.

Because a case study deals with only one person/event/group, we can never be sure if the case study investigated is representative of the wider body of “similar” instances. This means the conclusions drawn from a particular case may not be transferable to other settings.

Because case studies are based on the analysis of qualitative (i.e., descriptive) data, a lot depends on the psychologist’s interpretation of the information she has acquired.

This means that there is a lot of scope for researcher bias, and it could be that the subjective opinions of the psychologist intrude in the assessment of what the data means.

For example, Freud has been criticized for producing case studies in which the information was sometimes distorted to fit particular behavioral theories (e.g., Little Hans).

This is also true of Money’s interpretation of the Bruce/Brenda case study (Diamond & Sigmundson, 1997), in which he ignored evidence that went against his theory.

References

Breuer, J., & Freud, S. (1895). Studies on hysteria. Standard Edition 2: London.

Curtiss, S. (1981). Genie: The case of a modern wild child.

Diamond, M., & Sigmundson, K. (1997). Sex Reassignment at Birth: Long-term Review and Clinical Implications. Archives of Pediatrics & Adolescent Medicine, 151(3), 298-304.

Freud, S. (1909a). Analysis of a phobia of a five year old boy. In The Pelican Freud Library (1977), Vol 8, Case Histories 1, pages 169-306.

Freud, S. (1909b). Bemerkungen über einen Fall von Zwangsneurose (Der “Rattenmann”). Jb. psychoanal. psychopathol. Forsch., I, p. 357-421; GW, VII, p. 379-463; Notes upon a case of obsessional neurosis, SE, 10: 151-318.

Harlow, J. M. (1848). Passage of an iron rod through the head. Boston Medical and Surgical Journal, 39, 389–393.

Harlow, J. M. (1868). Recovery from the Passage of an Iron Bar through the Head. Publications of the Massachusetts Medical Society, 2(3), 327-347.

Money, J., & Ehrhardt, A. A. (1972). Man & Woman, Boy & Girl: The Differentiation and Dimorphism of Gender Identity from Conception to Maturity. Baltimore, Maryland: Johns Hopkins University Press.

Money, J., & Tucker, P. (1975). Sexual signatures: On being a man or a woman.

Further Information

- Case Study Approach

- Case Study Method

- Enhancing the Quality of Case Studies in Health Services Research

- “We do things together” A case study of “couplehood” in dementia

- Using mixed methods for evaluating an integrative approach to cancer care: a case study

Data Collection Methods | Step-by-Step Guide & Examples

Published on 4 May 2022 by Pritha Bhandari.

Data collection is a systematic process of gathering observations or measurements. Whether you are performing research for business, governmental, or academic purposes, data collection allows you to gain first-hand knowledge and original insights into your research problem.

While methods and aims may differ between fields, the overall process of data collection remains largely the same. Before you begin collecting data, you need to consider:

- The aim of the research

- The type of data that you will collect

- The methods and procedures you will use to collect, store, and process the data

To collect high-quality data that is relevant to your purposes, follow these four steps.

Table of contents

- Step 1: Define the aim of your research

- Step 2: Choose your data collection method

- Step 3: Plan your data collection procedures

- Step 4: Collect the data

- Frequently asked questions about data collection

Step 1: Define the aim of your research

Before you start the process of data collection, you need to identify exactly what you want to achieve. You can start by writing a problem statement: what is the practical or scientific issue that you want to address, and why does it matter?

Next, formulate one or more research questions that precisely define what you want to find out. Depending on your research questions, you might need to collect quantitative or qualitative data:

- Quantitative data is expressed in numbers and graphs and is analysed through statistical methods.

- Qualitative data is expressed in words and analysed through interpretations and categorisations.

If your aim is to test a hypothesis, measure something precisely, or gain large-scale statistical insights, collect quantitative data. If your aim is to explore ideas, understand experiences, or gain detailed insights into a specific context, collect qualitative data.

If you have several aims, you can use a mixed methods approach that collects both types of data.

For example, in a study of perceptions of managers, you might have two aims:

- Your first aim is to assess whether there are significant differences in perceptions of managers across different departments and office locations.

- Your second aim is to gather meaningful feedback from employees to explore new ideas for how managers can improve.

Step 2: Choose your data collection method

Based on the data you want to collect, decide which method is best suited for your research.

- Experimental research is primarily a quantitative method.

- Interviews, focus groups, and ethnographies are qualitative methods.

- Surveys, observations, archival research, and secondary data collection can be quantitative or qualitative methods.

Carefully consider what method you will use to gather data that helps you directly answer your research questions.

Step 3: Plan your data collection procedures

When you know which method(s) you are using, you need to plan exactly how you will implement them. What procedures will you follow to make accurate observations or measurements of the variables you are interested in?

For instance, if you’re conducting surveys or interviews, decide what form the questions will take; if you’re conducting an experiment, make decisions about your experimental design.

Operationalisation

Sometimes your variables can be measured directly: for example, you can collect data on the average age of employees simply by asking for dates of birth. However, often you’ll be interested in collecting data on more abstract concepts or variables that can’t be directly observed.

Operationalisation means turning abstract conceptual ideas into measurable observations. When planning how you will collect data, you need to translate the conceptual definition of what you want to study into the operational definition of what you will actually measure.

For example, you might operationalise the leadership skills of managers in two ways:

- You ask managers to rate their own leadership skills on 5-point scales assessing the ability to delegate, decisiveness, and dependability.

- You ask their direct employees to provide anonymous feedback on the managers regarding the same topics.
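The operational definition in this example can be sketched in a few lines of Python. The sketch assumes that employee feedback is collected on the same 5-point scales as the self-ratings and that the three items are simply averaged into one composite score; the responses and the `leadership_score` helper are hypothetical.

```python
from statistics import mean

# The three aspects of leadership named in the example above.
ITEMS = ("delegation", "decisiveness", "dependability")

# Hypothetical responses on 5-point scales (1 = poor, 5 = excellent).
self_rating = {"delegation": 4, "decisiveness": 5, "dependability": 4}
employee_ratings = [
    {"delegation": 3, "decisiveness": 4, "dependability": 5},
    {"delegation": 2, "decisiveness": 4, "dependability": 4},
    {"delegation": 4, "decisiveness": 3, "dependability": 5},
]


def leadership_score(rating):
    """Average the item ratings into one composite leadership score."""
    return mean(rating[item] for item in ITEMS)


self_score = leadership_score(self_rating)
employee_score = mean(leadership_score(r) for r in employee_ratings)
print(f"Self-rated: {self_score:.2f}, employee-rated: {employee_score:.2f}")
```

Averaging is only one possible scoring rule; the point is that the abstract concept of leadership has been turned into numbers that can be compared across raters.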

Sampling

You may need to develop a sampling plan to obtain data systematically. This involves defining a population, the group you want to draw conclusions about, and a sample, the group you will actually collect data from.

Your sampling method will determine how you recruit participants or obtain measurements for your study. To decide on a sampling method you will need to consider factors like the required sample size, accessibility of the sample, and time frame of the data collection.
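To make the distinction between population and sample concrete, here is a minimal sketch of one possible sampling method, stratified random sampling by department. The choice of method, the employee IDs, the department names, and the `stratified_sample` helper are all assumptions for illustration; your own sampling plan may call for a different approach.

```python
import random

# Hypothetical sampling frame: 120 employee IDs, 40 in each of three departments.
departments = ["sales", "support", "engineering"]
population = [(f"E{i:03d}", departments[i % 3]) for i in range(120)]


def stratified_sample(population, fraction, seed=0):
    """Draw the same fraction of people at random from every department."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    strata = {}
    for person_id, dept in population:
        strata.setdefault(dept, []).append(person_id)
    sample = []
    for dept, members in sorted(strata.items()):
        k = max(1, round(len(members) * fraction))
        sample.extend((pid, dept) for pid in rng.sample(members, k))
    return sample


sample = stratified_sample(population, fraction=0.1)
print(f"Sampled {len(sample)} of {len(population)} employees")
```

Sampling within each department guarantees that every department is represented, which matters if you intend to compare results across departments.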

Standardising procedures

If multiple researchers are involved, write a detailed manual to standardise data collection procedures in your study.

This means laying out specific step-by-step instructions so that everyone in your research team collects data in a consistent way – for example, by conducting experiments under the same conditions and using objective criteria to record and categorise observations.

This helps ensure the reliability of your data, and you can also use it to replicate the study in the future.

Creating a data management plan

Before beginning data collection, you should also decide how you will organise and store your data.

- If you are collecting data from people, you will likely need to anonymise and safeguard the data to prevent leaks of sensitive information (e.g. names or identity numbers).