

Data Analysis in Research: Types & Methods


What is data analysis in research?

Definition of data analysis in research: According to LeCompte and Schensul, research data analysis is a process used by researchers to reduce data to a story and interpret it to derive insights. The data analysis process helps reduce a large chunk of data into smaller fragments that make sense.

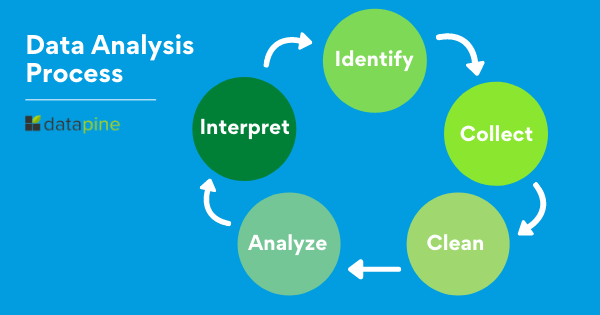

Three essential things occur during the data analysis process. The first is data organization. The second is data reduction through summarization and categorization, which helps find patterns and themes in the data for easy identification and linking. The third and last is data analysis itself, which researchers carry out in both a top-down and a bottom-up fashion.


On the other hand, Marshall and Rossman describe data analysis as a messy, ambiguous, and time-consuming but creative and fascinating process through which a mass of collected data is brought to order, structure and meaning.

We can say that “data analysis and data interpretation is a process representing the application of deductive and inductive logic to research data.”

Why analyze data in research?

Researchers rely heavily on data because they have a story to tell or research problems to solve. Research starts with a question, and data is nothing but an answer to that question. But what if there is no question to ask? It is still possible to explore data without a specific problem in mind; we call this ‘data mining’, and it often reveals interesting patterns within the data that are worth exploring.

Regardless of the type of data researchers explore, their mission and their audience’s vision guide them to find the patterns that shape the story they want to tell. One of the essential things expected from researchers while analyzing data is to stay open and remain unbiased toward unexpected patterns, expressions, and results. Remember, data analysis sometimes tells the most unforeseen yet exciting stories, ones that were not expected when the analysis began. Therefore, rely on the data you have at hand and enjoy the journey of exploratory research.


Types of data in research

Every kind of data describes things once a specific value is assigned to it. For analysis, you need to organize these values, then process and present them in a given context to make them useful. Data can take different forms; here are the primary data types.

- Qualitative data: When the data presented consists of words and descriptions, we call it qualitative data. Although you can observe this data, it is subjective and harder to analyze in research, especially for comparison. Example: anything describing taste, experience, texture, or an opinion is considered qualitative data. This type of data is usually collected through focus groups, personal qualitative interviews, qualitative observation, or open-ended questions in surveys.

- Quantitative data: Any data expressed in numbers or numerical figures is called quantitative data. This type of data can be distinguished into categories, grouped, measured, calculated, or ranked. Example: answers to questions about age, rank, cost, length, weight, scores, etc. all come under this type of data. You can present such data in graphical formats or charts, or apply statistical analysis methods to it. Outcomes Measurement Systems (OMS) questionnaires in surveys are a significant source of numeric data.

- Categorical data: This is data presented in groups, where an item included in the categorical data cannot belong to more than one group. Example: a person responding to a survey by describing their lifestyle, marital status, smoking habit, or drinking habit provides categorical data. A chi-square test is a standard method used to analyze this data.
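To make the chi-square test concrete, here is a minimal Python sketch using only the standard library. It first cross-tabulates hypothetical survey answers (marital status against smoking habit, invented for illustration) into a contingency table, then computes the Pearson chi-square statistic for independence. The function names are our own. In practice, SciPy's `scipy.stats.chi2_contingency` also returns a p-value; note that it applies Yates' continuity correction to 2x2 tables by default, so its statistic will differ slightly from this one.

```python
from collections import Counter

def cross_tabulate(pairs):
    """Count (row, column) pairs into a contingency table with sorted labels."""
    counts = Counter(pairs)
    rows = sorted({r for r, _ in pairs})
    cols = sorted({c for _, c in pairs})
    return rows, cols, [[counts[(r, c)] for c in cols] for r in rows]

def chi_square_statistic(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # count expected under independence
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof

# Hypothetical survey answers: (marital status, smoking habit), invented for illustration.
records = (
    [("married", "smoker")] * 20 + [("married", "non-smoker")] * 30
    + [("single", "smoker")] * 25 + [("single", "non-smoker")] * 25
)
rows, cols, table = cross_tabulate(records)
stat, dof = chi_square_statistic(table)
print(rows, cols, table)  # ['married', 'single'] ['non-smoker', 'smoker'] [[30, 20], [25, 25]]
print(f"chi-square = {stat:.3f}, df = {dof}")  # chi-square = 1.010, df = 1
# 3.841 is the chi-square critical value for df = 1 at the 0.05 level (this 2x2 table).
print("reject independence" if stat > 3.841 else "no evidence against independence")
```

With these invented counts the statistic is well below the critical value, so the sketch finds no evidence that smoking habit depends on marital status.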


Data analysis in qualitative research

Data analysis in qualitative research works a little differently from numerical data analysis, because qualitative data is made up of words, descriptions, images, objects, and sometimes symbols. Getting insight from such complex information is a complicated process, which is why qualitative analysis is typically used for exploratory research and data analysis.

Although there are several ways to find patterns in textual information, a word-based method is the most relied-upon and widely used technique for research and data analysis. Notably, the data analysis process in qualitative research is largely manual: researchers usually read the available data and look for repetitive or commonly used words.

For example, while studying data collected from African countries to understand the most pressing issues people face, researchers might find “food” and “hunger” are the most commonly used words and will highlight them for further analysis.
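A word-frequency count like the one described above can be sketched in a few lines of Python. The responses and the small stop-word list are hypothetical; real studies typically use larger stop-word lists and may also group word variants (for example, "hungry" and "hunger") before counting.

```python
import re
from collections import Counter

# Hypothetical open-ended survey responses, invented for illustration.
responses = [
    "Food prices keep rising and hunger is common in our village.",
    "Hunger is the biggest problem; there is not enough food.",
    "Clean water and food are hard to find.",
]

# A tiny, hand-picked stop-word list (an assumption; real studies use larger lists).
STOP_WORDS = {"and", "is", "the", "in", "our", "there", "not", "are", "to", "a", "of"}

def word_frequencies(texts, stop_words=STOP_WORDS):
    """Count lowercase words across all texts, skipping stop words."""
    words = []
    for text in texts:
        for word in re.findall(r"[a-z']+", text.lower()):
            if word not in stop_words:
                words.append(word)
    return Counter(words)

freq = word_frequencies(responses)
print(freq.most_common(2))  # [('food', 3), ('hunger', 2)]
```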


The keyword context is another widely used word-based technique. In this method, the researcher tries to understand the concept by analyzing the context in which the participants use a particular keyword.

For example, researchers studying the concept of ‘diabetes’ amongst respondents might analyze the context of when and how respondents have used or referred to the word ‘diabetes.’
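The keyword-in-context idea can be sketched as a function that collects a few words on either side of each occurrence of the keyword. The interview snippets and the three-word window are illustrative assumptions, not part of any particular method.

```python
import re

def keyword_in_context(texts, keyword, window=3):
    """Return (left context, keyword, right context) for each occurrence of keyword.

    `window` is the number of words kept on each side; 3 is an arbitrary default.
    """
    results = []
    for text in texts:
        words = re.findall(r"[A-Za-z']+", text)
        for i, word in enumerate(words):
            if word.lower() == keyword.lower():
                left = " ".join(words[max(0, i - window):i])
                right = " ".join(words[i + 1:i + 1 + window])
                results.append((left, word, right))
    return results

# Hypothetical interview snippets.
snippets = [
    "My father has diabetes so we changed how we cook at home.",
    "I worry about diabetes because sugar is in everything.",
]
for left, kw, right in keyword_in_context(snippets, "diabetes"):
    print(f"{left:>20} [{kw}] {right}")
```

Lining the occurrences up this way lets a researcher scan how the word is used (a family member's condition, a worry about diet) before coding those contexts.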

The scrutiny-based technique is another highly recommended text analysis method used to identify patterns in qualitative data. Compare and contrast is the most widely used method under this technique; it shows how specific texts are similar to or different from each other.

For example, to find out the “importance of a resident doctor in a company,” the collected data is divided into people who think it is necessary to hire a resident doctor and those who think it is unnecessary. Compare and contrast is well suited to analyzing polls with single-answer question types.

Metaphors can be used to reduce the data pile and find patterns in it so that it becomes easier to connect data with theory.

Variable partitioning is another technique used to split variables so that researchers can find more coherent descriptions and explanations in the enormous data.


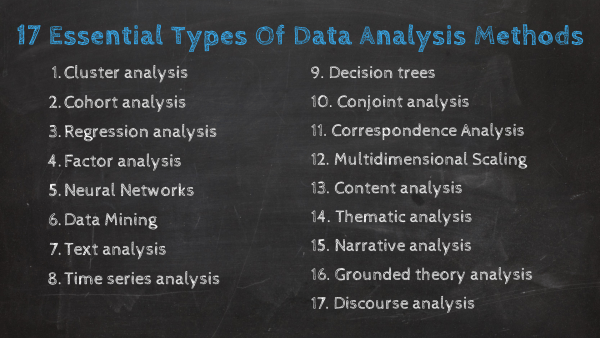

There are several techniques to analyze the data in qualitative research, but here are some commonly used methods:

- Content Analysis: This is a widely accepted and frequently employed technique for data analysis in research methodology. It can be used to analyze documented information from text, images, and sometimes physical items. The research questions determine when and where to use this method.

- Narrative Analysis: This method is used to analyze content gathered from various sources such as personal interviews, field observation, and surveys. Most of the time, the stories or opinions shared by people are examined to find answers to the research questions.

- Discourse Analysis: Similar to narrative analysis, discourse analysis is used to analyze interactions with people. However, this method also considers the social context within which the communication between the researcher and respondent takes place. In addition, discourse analysis focuses on the respondent's lifestyle and day-to-day environment while deriving any conclusion.

- Grounded Theory: When you want to explain why a particular phenomenon happened, grounded theory is a good choice for analyzing qualitative data. Grounded theory is applied to study data about a host of similar cases occurring in different settings. When researchers use this method, they might alter explanations or produce new ones until they arrive at a conclusion.
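As a rough illustration of the counting step in content analysis, the sketch below applies a small, hypothetical codebook (each code defined by indicator words) to a few documents and counts how many documents receive each code. A real coding frame is developed and tested by the researchers, and human coders usually handle meaning that keywords alone would miss.

```python
import re
from collections import Counter

# Hypothetical codebook: each code is defined by a set of indicator words.
CODEBOOK = {
    "cost": {"price", "prices", "expensive", "afford"},
    "access": {"distance", "far", "transport", "waiting"},
    "quality": {"rude", "clean", "helpful", "skilled"},
}

def code_document(text, codebook=CODEBOOK):
    """Return the set of codes whose indicator words appear in the text."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return {code for code, indicators in codebook.items() if words & indicators}

def code_frequencies(documents, codebook=CODEBOOK):
    """Count how many documents were assigned each code."""
    counts = Counter()
    for doc in documents:
        counts.update(code_document(doc, codebook))
    return counts

# Hypothetical open-ended answers about a local clinic.
documents = [
    "The clinic is too far and the prices are high.",
    "Staff were helpful, but waiting times are long.",
    "I cannot afford the medicine.",
]
print(code_frequencies(documents))
```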


Data analysis in quantitative research

The first stage of quantitative data analysis is to prepare the data so that raw data can be converted into something meaningful. Data preparation consists of the phases below.

Phase I: Data Validation

Data validation is done to understand whether the collected data sample meets the pre-set standards or is a biased sample. It is divided into four stages:

- Fraud: To ensure an actual human being records each response to the survey or the questionnaire

- Screening: To make sure each participant or respondent is selected or chosen in compliance with the research criteria

- Procedure: To ensure ethical standards were maintained while collecting the data sample

- Completeness: To ensure that the respondent has answered all the questions in an online survey or, for interviews, that the interviewer has asked all the questions devised in the questionnaire.
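The screening and completeness checks can be automated for survey records. The sketch below assumes hypothetical field names (`id`, `age`, `q1` to `q3`) and an example age criterion of 18 to 65; fraud and procedure checks depend on the survey platform and study protocol and are not shown.

```python
# Hypothetical field names and screening criterion, for illustration only.
REQUIRED_QUESTIONS = ["q1", "q2", "q3"]
MIN_AGE, MAX_AGE = 18, 65

responses = [
    {"id": 1, "age": 34, "q1": "Yes", "q2": "No", "q3": "Maybe"},
    {"id": 2, "age": 16, "q1": "Yes", "q2": "Yes", "q3": "No"},  # outside the age criterion
    {"id": 3, "age": 45, "q1": "No", "q2": None, "q3": "Yes"},   # skipped q2
]

def is_complete(response):
    """Completeness: every required question has a non-empty answer."""
    return all(response.get(q) not in (None, "") for q in REQUIRED_QUESTIONS)

def passes_screening(response):
    """Screening: the respondent meets the example age criterion."""
    age = response.get("age")
    return age is not None and MIN_AGE <= age <= MAX_AGE

def validate(records):
    """Split records into valid ones and (id, reasons) pairs for rejected ones."""
    valid, rejected = [], []
    for record in records:
        reasons = []
        if not passes_screening(record):
            reasons.append("screening")
        if not is_complete(record):
            reasons.append("incomplete")
        if reasons:
            rejected.append((record["id"], reasons))
        else:
            valid.append(record)
    return valid, rejected

valid, rejected = validate(responses)
print([r["id"] for r in valid])  # [1]
print(rejected)                  # [(2, ['screening']), (3, ['incomplete'])]
```

Recording the reason for each rejection, rather than silently dropping rows, keeps the validation step auditable.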

Phase II: Data Editing

More often than not, a large research data sample comes loaded with errors. Respondents sometimes fill in some fields incorrectly or skip them accidentally. Data editing is a process wherein researchers confirm that the provided data is free of such errors. They need to conduct the necessary checks, including outlier checks, to edit the raw data and make it ready for analysis.
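One common way to run an outlier check is Tukey's interquartile-range (IQR) rule, combined with a simple range check for impossible values. The source does not prescribe a specific method, so treat this as one reasonable choice; the ages are hypothetical, and the plausible range of 18 to 99 is an assumption.

```python
import statistics

def iqr_fences(values, k=1.5):
    """Tukey's fences: values outside [Q1 - k*IQR, Q3 + k*IQR] are flagged."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # default 'exclusive' method
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

def find_outliers(values, k=1.5):
    """Return the values that fall outside Tukey's fences."""
    low, high = iqr_fences(values, k)
    return [v for v in values if v < low or v > high]

def out_of_range(values, low, high):
    """Range check: values outside a plausible range are likely entry errors."""
    return [v for v in values if not low <= v <= high]

# Hypothetical reported ages; 230 looks like a data-entry error.
ages = [23, 25, 27, 29, 31, 33, 35, 230]
print(iqr_fences(ages))
print(find_outliers(ages))          # [230]
print(out_of_range(ages, 18, 99))   # [230]
```

A flagged value is a prompt to check the original response, not an automatic deletion: the researcher decides whether it is a typo to correct or a genuine extreme value.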

Phase III: Data Coding

Out of all three, this is the most critical phase of data preparation; it involves grouping and assigning values to the survey responses. For example, if a survey is completed with a sample size of 1,000, the researcher might create age brackets to distinguish the respondents based on their age. It then becomes easier to analyze small data buckets rather than deal with the massive data pile.
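Coding ages into brackets, as in the example above, might look like this. The bracket boundaries and sample ages are assumptions chosen for illustration; the resulting counts are what researchers would then analyze instead of individual ages.

```python
from collections import Counter

# Illustrative bracket boundaries (inclusive); choose brackets to fit the study.
BRACKETS = [(18, 24), (25, 34), (35, 44), (45, 54), (55, 64)]

def age_bracket(age):
    """Map an age to a bracket label such as '25-34'."""
    if age >= 65:
        return "65+"
    for low, high in BRACKETS:
        if low <= age <= high:
            return f"{low}-{high}"
    return "other"  # e.g. under-18 respondents who slipped through screening

ages = [19, 23, 27, 31, 38, 42, 47, 59, 66, 71]  # hypothetical respondents
coded = [age_bracket(a) for a in ages]
print(coded)
print(Counter(coded))
```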


After the data is prepared for analysis, researchers can use different research and data analysis methods to derive meaningful insights. Statistical analysis is the most favored approach for analyzing numerical data. In statistical analysis, distinguishing between categorical data and numerical data is essential: categorical data involves distinct categories or labels, while numerical data consists of measurable quantities. Statistical methods are again classified into two groups: first, ‘descriptive statistics’, used to describe data; second, ‘inferential statistics’, which help compare the data and draw conclusions about a population from a sample.

Descriptive statistics

This method is used to describe the basic features of the many types of data in research. It presents the data in a meaningful way so that patterns in the data start making sense. However, descriptive analysis does not go beyond the data at hand to draw conclusions; any conclusions remain based on the hypotheses researchers have formulated so far. Here are a few major types of descriptive analysis methods.

Measures of Frequency

- Count, Percent, Frequency

- It is used to denote how often a particular event occurs.

- Researchers use it when they want to showcase how often a response is given.

Measures of Central Tendency

- Mean, Median, Mode

- The method is widely used to show where the center of a distribution lies.

- Researchers use this method when they want to showcase the most common or average response.
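Both the measures of frequency and the measures of central tendency above can be computed with Python's standard `collections` and `statistics` modules. The ratings below are hypothetical answers to a 1-to-5 satisfaction question.

```python
import statistics
from collections import Counter

ratings = [4, 5, 3, 4, 4, 2, 5, 4, 3, 5]  # hypothetical 1-5 satisfaction ratings

# Measures of frequency: count and percent for each response value.
counts = Counter(ratings)
for value in sorted(counts):
    percent = 100 * counts[value] / len(ratings)
    print(f"rating {value}: count = {counts[value]}, percent = {percent:.0f}%")

# Measures of central tendency.
print("mean:", statistics.mean(ratings))      # mean: 3.9
print("median:", statistics.median(ratings))  # median: 4.0
print("mode:", statistics.mode(ratings))      # mode: 4
```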

Measures of Dispersion or Variation

- Range, Variance, Standard deviation

- The range is the difference between the highest and lowest points.

- Variance is the average squared difference between each observed score and the mean; the standard deviation is the square root of the variance.

- It is used to identify the spread of scores by stating intervals.

- Researchers use this method to showcase how spread out the data is. It helps them see how far the data spreads around the mean, which directly affects how well the mean represents the data.
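The measures of dispersion above can be computed with Python's `statistics` module. The sketch also computes the population variance by hand from each score's deviation from the mean, to show what the built-in function measures. The quiz scores are hypothetical. Note that `pvariance` and `pstdev` treat the data as a whole population, while `variance` and `stdev` treat it as a sample and divide by n - 1.

```python
import statistics

scores = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical quiz scores out of 10

data_range = max(scores) - min(scores)            # highest minus lowest point
mean_score = statistics.mean(scores)
deviations = [x - mean_score for x in scores]      # observed score minus mean
manual_variance = sum(d * d for d in deviations) / len(scores)

print("range:", data_range)                                 # range: 7
print("variance (by hand):", manual_variance)               # variance (by hand): 4.0
print("population variance:", statistics.pvariance(scores))
print("population std dev:", statistics.pstdev(scores))     # population std dev: 2.0
print("sample variance:", statistics.variance(scores))      # divides by n - 1
```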

Measures of Position

- Percentile ranks, Quartile ranks

- It relies on standardized scores, helping researchers identify the relationship between different scores.

- It is often used when researchers want to compare scores with the average.
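Measures of position can be sketched as follows, using hypothetical quiz scores. Percentile rank has several definitions; this sketch assumes the percentage of scores at or below a given score. The z-score is the standardized score the list refers to: the distance from the mean in standard deviations.

```python
import statistics

scores = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical quiz scores out of 10

def percentile_rank(values, score):
    """Percentage of values at or below the given score (one common definition)."""
    return 100 * sum(v <= score for v in values) / len(values)

def z_score(values, score):
    """Standardized score: distance from the mean in population standard deviations."""
    return (score - statistics.mean(values)) / statistics.pstdev(values)

print(statistics.quantiles(scores, n=4))  # quartile cut points (Q1, median, Q3)
print(percentile_rank(scores, 7))         # 87.5
print(z_score(scores, 9))                 # 2.0
```

A z-score of 2.0 says the score of 9 sits two standard deviations above the class average, which is how standardized scores let researchers compare scores with the average.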

In quantitative research, descriptive analysis often gives absolute numbers, but on its own it is never sufficient to demonstrate the rationale behind those numbers. Nevertheless, it is necessary to choose the research and data analysis method that best suits your survey questionnaire and the story researchers want to tell. For example, the mean is the best way to demonstrate students’ average scores in schools. It is better to rely on descriptive statistics when researchers intend to keep the research or outcome limited to the provided sample without generalizing it. For example, when you want to compare average voting in two different cities, descriptive statistics are enough.

Descriptive analysis is also called a ‘univariate analysis’ since it is commonly used to analyze a single variable.

Inferential statistics

Inferential statistics are used to make predictions about a larger population after analyzing a collected sample that represents that population. For example, you can ask roughly 100 audience members at a movie theater whether they like the movie they are watching. Researchers can then apply inferential statistics to the collected sample to reason that about 80-90% of all moviegoers like the movie.
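The movie example can be turned into a simple parameter estimate: a 95% confidence interval for the share of all moviegoers who like the film. This sketch uses the normal-approximation (Wald) interval, a swapped-in textbook choice that assumes a random sample; the figure of 85 out of 100 is hypothetical.

```python
import math

def proportion_confidence_interval(successes, n, z=1.96):
    """Normal-approximation (Wald) confidence interval for a population proportion.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    p_hat = successes / n
    margin = z * math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - margin, p_hat + margin

# Hypothetical result: 85 of 100 surveyed moviegoers say they like the movie.
low, high = proportion_confidence_interval(85, 100)
print(f"95% CI: {low:.1%} to {high:.1%}")  # 95% CI: 78.0% to 92.0%
```

The interval of roughly 78% to 92% is the kind of population-level statement the paragraph describes. For small samples or proportions near 0% or 100%, an alternative such as the Wilson interval is usually more reliable.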

Here are two significant areas of inferential statistics.

- Estimating parameters: It takes statistics from the sample research data and demonstrates something about the population parameter.

- Hypothesis test: It’s about sampling research data to answer the survey research questions. For example, researchers might want to find out whether a newly launched lipstick shade is well received, or whether multivitamin capsules help children perform better at games.
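For the multivitamin example, a hypothesis test asks whether children who took the capsules scored higher than those who did not. A t-test is the usual textbook choice; this sketch instead swaps in an exact permutation test because it needs only the standard library and makes the logic explicit: it checks how often a relabeling of the pooled scores produces a difference at least as large as the observed one. The game scores are invented.

```python
from itertools import combinations
from statistics import mean

def permutation_test(group_a, group_b):
    """Exact one-sided permutation test of H1: mean(group_a) > mean(group_b).

    Returns the observed difference in means and the p-value, i.e. the share of
    all ways to relabel the pooled scores whose difference is at least as large.
    """
    pooled = list(group_a) + list(group_b)
    observed = mean(group_a) - mean(group_b)
    extreme = total = 0
    for idx in combinations(range(len(pooled)), len(group_a)):
        chosen = set(idx)
        a = [pooled[i] for i in idx]
        b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        if mean(a) - mean(b) >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
        total += 1
    return observed, extreme / total

# Hypothetical game scores for children with and without the multivitamin.
vitamin = [78, 82, 85, 88, 90, 84]
control = [72, 75, 80, 78, 74, 77]
observed, p_value = permutation_test(vitamin, control)
print(f"difference in means = {observed}, one-sided p = {p_value:.4f}")
```

Here only 3 of the 924 possible relabelings produce a difference of 8.5 points or more, so the one-sided p-value is about 0.003, which would usually be read as evidence that the difference is unlikely to be due to chance alone. Enumerating every relabeling is only practical for small samples; larger studies draw random relabelings instead.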

These are sophisticated analysis methods used to showcase the relationship between different variables instead of describing a single variable. They are often used when researchers want to go beyond absolute numbers to understand the relationship between variables.

Here are some of the commonly used methods for data analysis in research.

- Correlation: When researchers are not conducting experimental or quasi-experimental research but want to understand the relationship between two or more variables, they opt for correlational research methods.

- Cross-tabulation: Also called contingency tables, cross-tabulation is used to analyze the relationship between multiple variables. Suppose the data has age and gender categories presented in rows and columns. A two-dimensional cross-tabulation supports seamless data analysis by showing the number of males and females in each age category.

- Regression analysis: To understand how strongly variables are related, researchers often turn to regression analysis, a primary and commonly used method that is also a type of predictive analysis. In this method, you have an essential factor called the dependent variable and one or more independent variables, and you try to find out the impact of the independent variables on the dependent variable. The values of both the independent and dependent variables are assumed to be ascertained in an error-free, random manner.

- Frequency tables: A frequency table lists each value or category of a variable together with the number (and often the percentage) of times it occurs, giving a quick summary of how responses are distributed.

- Analysis of variance: This statistical procedure tests the degree to which two or more groups vary or differ in an experiment. A large enough difference between groups, relative to the variation within each group, indicates that the research findings are statistically significant. The method is commonly abbreviated as ANOVA.
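Three of the methods in the list can be sketched with the standard library: Pearson correlation, simple linear regression fitted by ordinary least squares, and the one-way ANOVA F statistic. The data is hypothetical, and the regression uses a single independent variable for brevity (multiple regression would need a least-squares solver such as NumPy's `numpy.linalg.lstsq`). Python 3.10 and later also provide `statistics.correlation` and `statistics.linear_regression`.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def simple_linear_regression(x, y):
    """Ordinary least squares fit of y = intercept + slope * x (one independent variable)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return my - slope * mx, slope

def one_way_anova_f(*groups):
    """One-way ANOVA: F = between-group mean square / within-group mean square."""
    values = [v for g in groups for v in g]
    grand_mean = sum(values) / len(values)
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, means) for v in g)
    df_between, df_within = len(groups) - 1, len(values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within), df_between, df_within

# Hypothetical data: weekly study hours (independent) and test scores (dependent).
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]
r = pearson_r(hours, scores)
intercept, slope = simple_linear_regression(hours, scores)
print(f"r = {r:.3f}; score = {intercept:.1f} + {slope:.1f} * hours")
print("predicted score for 6 hours:", round(intercept + slope * 6, 1))

# Hypothetical scores under three teaching methods.
f_stat, df1, df2 = one_way_anova_f([70, 72, 74], [78, 80, 82], [85, 87, 89])
print(f"F({df1}, {df2}) = {f_stat:.2f}")
```

An r close to 1 indicates a strong positive linear relationship between study hours and scores. The ANOVA F value is compared against an F table (or converted to a p-value with a library such as SciPy) for the given degrees of freedom.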

Considerations in research data analysis

- Researchers must have the necessary skills to analyze and manipulate the data, and should be trained to demonstrate a high standard of research practice. Ideally, researchers should possess more than a basic understanding of the rationale for selecting one statistical method over another to obtain better data insights.

- Research and data analytics projects usually differ by scientific discipline; therefore, getting statistical advice at the beginning of the analysis helps in designing the survey questionnaire, selecting data collection methods, and choosing samples.

- The primary aim of research data analysis is to derive insights that are unbiased. Any mistake or bias in collecting data, selecting an analysis method, or choosing the audience sample will lead to a biased inference.

- No amount of sophistication in research data analysis can rectify poorly defined objectives or outcome measurements. Whether the design is at fault or the intentions are unclear, the resulting lack of clarity can mislead readers, so avoid the practice.

- The motive behind data analysis in research is to present accurate and reliable data. As far as possible, avoid statistical errors, and find a way to deal with everyday challenges such as outliers, missing data, data alteration, data mining, and developing graphical representations.

The sheer amount of data generated daily is frightening, especially now that data analysis has taken center stage. In 2018, the total data supply amounted to 2.8 trillion gigabytes. Hence, it is clear that enterprises that want to survive in a hypercompetitive world must be able to analyze complex research data, derive actionable insights, and adapt to new market needs.


QuestionPro is an online survey platform that empowers organizations in data analysis and research and provides them a medium to collect data by creating appealing surveys.


Research methods--quantitative, qualitative, and more: overview.


About Research Methods

This guide provides an overview of research methods, how to choose and use them, and supports and resources at UC Berkeley.

As Patten and Newhart note in the book Understanding Research Methods, "Research methods are the building blocks of the scientific enterprise. They are the 'how' for building systematic knowledge. The accumulation of knowledge through research is by its nature a collective endeavor. Each well-designed study provides evidence that may support, amend, refute, or deepen the understanding of existing knowledge... Decisions are important throughout the practice of research and are designed to help researchers collect evidence that includes the full spectrum of the phenomenon under study, to maintain logical rules, and to mitigate or account for possible sources of bias. In many ways, learning research methods is learning how to see and make these decisions."

The choice of methods varies by discipline, by the kind of phenomenon being studied and the data being used to study it, by the technology available, and more. This guide is an introduction, but if you don't see what you need here, always contact your subject librarian, and/or take a look to see if there's a library research guide that will answer your question.

Suggestions for changes and additions to this guide are welcome!

START HERE: SAGE Research Methods

Without question, the most comprehensive resource available from the library is SAGE Research Methods. The library's online guide covers this one-stop shopping collection, and some helpful links are below:

- SAGE Research Methods

- Little Green Books (Quantitative Methods)

- Little Blue Books (Qualitative Methods)

- Dictionaries and Encyclopedias

- Case studies of real research projects

- Sample datasets for hands-on practice

- Streaming video--see methods come to life

- Methodspace--a community for researchers

- SAGE Research Methods Course Mapping

Library Data Services at UC Berkeley

Library Data Services Program and Digital Scholarship Services

The LDSP offers a variety of services and tools. Its pages cover each of the following topics: discovering data, managing data, collecting data, GIS data, text data mining, publishing data, digital scholarship, open science, and the Research Data Management Program.

Be sure also to check out the visual guide to where to seek assistance on campus with any research question you may have!

Library GIS Services

Other Data Services at Berkeley

- D-Lab: Supports Berkeley faculty, staff, and graduate students with research in data-intensive social science, including a wide range of training and workshop offerings.

- Dryad: A simple self-service tool for researchers to use in publishing their datasets. It provides tools for the effective publication of and access to research data.

- Geospatial Innovation Facility (GIF): Provides leadership and training across a broad array of integrated mapping technologies on campus.

- Research Data Management: A UC Berkeley guide and consulting service for research data management issues.

General Research Methods Resources

Here are some general resources for assistance:

- Assistance from ICPSR (must create an account to access): Getting Help with Data , and Resources for Students

- Wiley Stats Ref for background information on statistics topics

- Survey Documentation and Analysis (SDA): a program for easy web-based analysis of survey data.

Consultants

- D-Lab/Data Science Discovery Consultants Request help with your research project from peer consultants.

- Research data (RDM) consulting Meet with RDM consultants before designing the data security, storage, and sharing aspects of your qualitative project.

- Statistics Department Consulting Services A service in which advanced graduate students, under faculty supervision, are available to consult during specified hours in the Fall and Spring semesters.

Related Resources

- IRB / CPHS Qualitative research projects with human subjects often require that you go through an ethics review.

- OURS (Office of Undergraduate Research and Scholarships) OURS supports undergraduates who want to embark on research projects and assistantships. In particular, check out their "Getting Started in Research" workshops

- Sponsored Projects Sponsored projects works with researchers applying for major external grants.


- Last Updated: Apr 25, 2024 11:09 AM

- URL: https://guides.lib.berkeley.edu/researchmethods


What Is Data Analysis? (With Examples)

Data analysis is the practice of working with data to glean useful information, which can then be used to make informed decisions.

![Featured image: a female data analyst takes notes on her laptop at a standing desk in a modern office space](https://d3njjcbhbojbot.cloudfront.net/api/utilities/v1/imageproxy/https://images.ctfassets.net/wp1lcwdav1p1/2CUbULaq9mEfSSIq6lsCUu/b8ec58abf5106bf9bf75b17da09c39c0/What_is_data_analysis.png?w=1500&h=680&q=60&fit=fill&f=faces&fm=jpg&fl=progressive&auto=format%2Ccompress&dpr=1&w=1000)

"It is a capital mistake to theorize before one has data. Insensibly one begins to twist facts to suit theories, instead of theories to suit facts," Sherlock Holmes proclaims in Sir Arthur Conan Doyle's A Scandal in Bohemia.

This idea lies at the root of data analysis. When we can extract meaning from data, it empowers us to make better decisions. And we’re living in a time when we have more data than ever at our fingertips.

Companies are wising up to the benefits of leveraging data. Data analysis can help a bank personalize customer interactions, a health care system predict future health needs, or an entertainment company create the next big streaming hit.

The World Economic Forum Future of Jobs Report 2023 listed data analysts and scientists as one of the most in-demand jobs, alongside AI and machine learning specialists and big data specialists [ 1 ]. In this article, you'll learn more about the data analysis process, different types of data analysis, and recommended courses to help you get started in this exciting field.


Beginner-friendly data analysis courses

Interested in building your knowledge of data analysis today? Consider enrolling in one of these popular courses on Coursera:

In Google's Foundations: Data, Data, Everywhere course, you'll explore key data analysis concepts, tools, and jobs.

In Duke University's Data Analysis and Visualization course, you'll learn how to identify key components for data analytics projects, explore data visualization, and find out how to create a compelling data story.

Data analysis process

As the data available to companies continues to grow both in amount and complexity, so too does the need for an effective and efficient process by which to harness the value of that data. The data analysis process typically moves through several iterative phases. Let’s take a closer look at each.

Identify the business question you’d like to answer. What problem is the company trying to solve? What do you need to measure, and how will you measure it?

Collect the raw data sets you’ll need to help you answer the identified question. Data collection might come from internal sources, like a company’s customer relationship management (CRM) software, or from secondary sources, like government records or social media application programming interfaces (APIs).

Clean the data to prepare it for analysis. This often involves purging duplicate and anomalous data, reconciling inconsistencies, standardizing data structure and format, and dealing with white spaces and other syntax errors.
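The cleaning step can be sketched on a handful of hypothetical customer records. The policies here are assumptions for illustration: white space is trimmed, text is converted to title case, records with empty fields are dropped, and exact duplicates left after standardization are removed.

```python
# Hypothetical raw records with typical problems: stray white space,
# inconsistent capitalization, a duplicate, and a missing value.
raw = [
    {"customer": " Alice ", "city": "boston"},
    {"customer": "Bob", "city": "NEW YORK "},
    {"customer": "alice", "city": "Boston"},
    {"customer": "Carol", "city": ""},
]

def standardize(record):
    """Trim white space and normalize capitalization (title case is an assumed convention)."""
    return {key: value.strip().title() for key, value in record.items()}

def clean(records):
    """Standardize records, drop rows with missing values, and remove duplicates."""
    seen, cleaned = set(), []
    for record in records:
        r = standardize(record)
        if any(v == "" for v in r.values()):
            continue  # treat empty fields as missing; dropping them is one possible policy
        key = tuple(sorted(r.items()))
        if key not in seen:
            seen.add(key)
            cleaned.append(r)
    return cleaned

print(clean(raw))
# [{'customer': 'Alice', 'city': 'Boston'}, {'customer': 'Bob', 'city': 'New York'}]
```

Standardizing before de-duplicating matters: " Alice " in "boston" and "alice" in "Boston" only match once both are normalized.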

Analyze the data. By manipulating the data using various data analysis techniques and tools, you can begin to find trends, correlations, outliers, and variations that tell a story. During this stage, you might use data mining to discover patterns within databases or data visualization software to help transform data into an easy-to-understand graphical format.

Interpret the results of your analysis to see how well the data answered your original question. What recommendations can you make based on the data? What are the limitations to your conclusions?

You can complete hands-on projects for your portfolio while practicing statistical analysis, data management, and programming with Meta's beginner-friendly Data Analyst Professional Certificate . Designed to prepare you for an entry-level role, this self-paced program can be completed in just 5 months.


Types of data analysis (with examples)

Data can be used to answer questions and support decisions in many different ways. To identify the best way to analyze your data, it can help to familiarize yourself with the four types of data analysis commonly used in the field.

In this section, we’ll take a look at each of these data analysis methods, along with an example of how each might be applied in the real world.

Descriptive analysis

Descriptive analysis tells us what happened. This type of analysis helps describe or summarize quantitative data by presenting statistics. For example, descriptive statistical analysis could show the distribution of sales across a group of employees and the average sales figure per employee.

Descriptive analysis answers the question, “what happened?”

Diagnostic analysis

If the descriptive analysis determines the “what,” diagnostic analysis determines the “why.” Let’s say a descriptive analysis shows an unusual influx of patients in a hospital. Drilling into the data further might reveal that many of these patients shared symptoms of a particular virus. This diagnostic analysis can help you determine that an infectious agent—the “why”—led to the influx of patients.

Diagnostic analysis answers the question, “why did it happen?”

Predictive analysis

So far, we’ve looked at types of analysis that examine and draw conclusions about the past. Predictive analytics uses data to form projections about the future. Using predictive analysis, you might notice that a given product has had its best sales during the months of September and October each year, leading you to predict a similar high point during the upcoming year.

Predictive analysis answers the question, “what might happen in the future?”

Prescriptive analysis

Prescriptive analysis takes all the insights gathered from the first three types of analysis and uses them to form recommendations for how a company should act. Using our previous example, this type of analysis might suggest a market plan to build on the success of the high sales months and harness new growth opportunities in the slower months.

Prescriptive analysis answers the question, “what should we do about it?”

This last type is where the concept of data-driven decision-making comes into play.


What is data-driven decision-making (DDDM)?

Data-driven decision-making, sometimes abbreviated as DDDM, can be defined as the process of making strategic business decisions based on facts, data, and metrics instead of intuition, emotion, or observation.

This might sound obvious, but in practice, not all organizations are as data-driven as they could be. According to global management consulting firm McKinsey Global Institute, data-driven companies are better at acquiring new customers, maintaining customer loyalty, and achieving above-average profitability [ 2 ].


Frequently asked questions (FAQ)

Where is data analytics used?

Just about any business or organization can use data analytics to help inform their decisions and boost their performance. Some of the most successful companies across a range of industries — from Amazon and Netflix to Starbucks and General Electric — integrate data into their business plans to improve their overall business performance.

What are the top skills for a data analyst?

Data analysis makes use of a range of analysis tools and technologies. Some of the top skills for data analysts include SQL, data visualization, statistical programming languages (like R and Python), machine learning, and spreadsheets.


What is a data analyst job salary?

Data from Glassdoor indicates that the average base salary for a data analyst in the United States is $75,349 as of March 2024 [3]. How much you make will depend on factors like your qualifications, experience, and location.

Do data analysts need to be good at math?

Data analytics tends to be less math-intensive than data science. While you probably won’t need to master any advanced mathematics, a foundation in basic math and statistical analysis can help set you up for success.


Article sources

1. World Economic Forum. "The Future of Jobs Report 2023," https://www3.weforum.org/docs/WEF_Future_of_Jobs_2023.pdf. Accessed March 19, 2024.

2. McKinsey & Company. "Five facts: How customer analytics boosts corporate performance," https://www.mckinsey.com/business-functions/marketing-and-sales/our-insights/five-facts-how-customer-analytics-boosts-corporate-performance. Accessed March 19, 2024.

3. Glassdoor. "Data Analyst Salaries," https://www.glassdoor.com/Salaries/data-analyst-salary-SRCH_KO0,12.htm. Accessed March 19, 2024.


Coursera staff.

Editorial Team

Coursera’s editorial team is comprised of highly experienced professional editors, writers, and fact...

This content has been made available for informational purposes only. Learners are advised to conduct additional research to ensure that courses and other credentials pursued meet their personal, professional, and financial goals.

How to conduct a meta-analysis in eight steps: a practical guide

- Open access

- Published: 30 November 2021

- Volume 72, pages 1–19 (2022)


- Christopher Hansen

- Holger Steinmetz

- Jörn Block



1 Introduction

“Scientists have known for centuries that a single study will not resolve a major issue. Indeed, a small sample study will not even resolve a minor issue. Thus, the foundation of science is the cumulation of knowledge from the results of many studies.” (Hunter et al. 1982, p. 10)

Meta-analysis is a central method for knowledge accumulation in many scientific fields (Aguinis et al. 2011c; Kepes et al. 2013). Similar to a narrative review, it serves as a synopsis of a research question or field. However, going beyond a narrative summary of key findings, a meta-analysis adds value by providing a quantitative assessment of the relationship between two target variables or the effectiveness of an intervention (Gurevitch et al. 2018). It can also be used to test competing theoretical assumptions against each other or to identify important moderators where the results of different primary studies differ from each other (Aguinis et al. 2011b; Bergh et al. 2016). Rooted in the synthesis of the effectiveness of medical and psychological interventions in the 1970s (Glass 2015; Gurevitch et al. 2018), meta-analysis is nowadays also an established method in management research and related fields.

The increasing importance of meta-analysis in management research has resulted in the publication of guidelines in recent years that discuss the merits and best practices in various fields, such as general management (Bergh et al. 2016; Combs et al. 2019; Gonzalez-Mulé and Aguinis 2018), international business (Steel et al. 2021), economics and finance (Geyer-Klingeberg et al. 2020; Havranek et al. 2020), marketing (Eisend 2017; Grewal et al. 2018), and organizational studies (DeSimone et al. 2020; Rudolph et al. 2020). These articles discuss existing and trending methods and propose solutions to frequently encountered problems. This editorial briefly summarizes the insights of these papers, provides a workflow of the essential steps in conducting a meta-analysis, suggests state-of-the-art methodological procedures, and points to other articles for in-depth investigation. Thus, this article has two goals: (1) based on the findings of previous editorials and methodological articles, it defines methodological recommendations for meta-analyses submitted to Management Review Quarterly (MRQ); and (2) it serves as a practical guide for researchers who have little experience with meta-analysis as a method but plan to conduct one in the future.

2 Eight steps in conducting a meta-analysis

2.1 Step 1: defining the research question

The first step in conducting a meta-analysis, as with any other empirical study, is the definition of the research question. Most importantly, the research question determines the realm of constructs to be considered or the type of interventions whose effects shall be analyzed. When defining the research question, two hurdles might arise. First, when defining an adequate study scope, researchers must consider that the number of publications has grown exponentially in many fields of research in recent decades (Fortunato et al. 2018). On the one hand, a larger number of studies broadens the potentially relevant literature base and enables researchers to conduct meta-analyses. On the other hand, scanning a large number of potentially relevant studies may result in an unmanageable workload. Thus, Steel et al. (2021) highlight the importance of balancing manageability and relevance when defining the research question. Second, like the number of primary studies, the number of meta-analyses in management research has also grown strongly in recent years (Geyer-Klingeberg et al. 2020; Rauch 2020; Schwab 2015). Therefore, it is likely that one or several meta-analyses already exist for many topics of high scholarly interest. However, this should not deter researchers from investigating their research questions. One possibility is to consider moderators or mediators of a relationship that have previously been ignored. For example, a meta-analysis about startup performance could investigate the impact of different ways to measure the performance construct (e.g., growth vs. profitability vs. survival time) or certain characteristics of the founders as moderators. Another possibility is to replicate previous meta-analyses and test whether their findings can be confirmed with an updated sample of primary studies or newly developed methods.

Frequent replications and updates of meta-analyses are important contributions to cumulative science and are increasingly called for by the research community (Anderson & Kichkha 2017; Steel et al. 2021). Consistent with its focus on replication studies (Block and Kuckertz 2018), MRQ therefore also invites authors to submit replication meta-analyses.

2.2 Step 2: literature search

2.2.1 Search strategies

Similar to conducting a literature review, the search process of a meta-analysis should be systematic, reproducible, and transparent, resulting in a sample that includes all relevant studies (Fisch and Block 2018; Gusenbauer and Haddaway 2020). There are several strategies for identifying relevant primary studies when compiling meta-analytical datasets (Harari et al. 2020). First, previous meta-analyses on the same or a related topic may provide lists of included studies that offer a good starting point to identify and become familiar with the relevant literature. This practice also applies to topic-related literature reviews, which often summarize the central findings of the reviewed articles in systematic tables. Both article types likely include the most prominent studies of a research field. The most common and important search strategy, however, is a keyword search in electronic databases (Harari et al. 2020). This strategy will probably yield the largest number of relevant studies, particularly so-called ‘grey literature’, which may not be considered by literature reviews. Gusenbauer and Haddaway (2020) provide a detailed overview of 34 scientific databases, of which 18 are multidisciplinary or focus on management sciences, along with their suitability for literature synthesis. To prevent biased results due to the scope or journal coverage of one database, researchers should use at least two different databases (DeSimone et al. 2020; Martín-Martín et al. 2021; Mongeon & Paul-Hus 2016). However, a database search can easily lead to an overload of potentially relevant studies. For example, key term searches in Google Scholar for “entrepreneurial intention” and “firm diversification” resulted in more than 660,000 and 810,000 hits, respectively (Footnote 1). Therefore, a precise research question and precise search terms using Boolean operators are advisable (Gusenbauer and Haddaway 2020).
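As an illustration of combining search terms with Boolean operators, the Python sketch below joins the synonyms for each concept with OR and links the concepts with AND. The helper name and the second concept group are hypothetical, and the exact query syntax (phrase quoting, wildcards, field tags) differs between databases, so the resulting string would need adapting to each one.

```python
def boolean_query(concept_groups):
    """Combine synonyms with OR inside each concept and the concepts with AND."""
    clauses = []
    for synonyms in concept_groups:
        # Quote multi-word phrases so they are searched as exact phrases.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        clauses.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(clauses)


query = boolean_query([
    ["entrepreneurial intention", "entrepreneurial intent"],
    ["meta-analysis", "meta-analytic"],
])
print(query)
# ("entrepreneurial intention" OR "entrepreneurial intent") AND (meta-analysis OR meta-analytic)
```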
To address the challenge of identifying relevant articles among the growing number of database publications, (semi)automated approaches using text mining and machine learning (Bosco et al. 2017; O’Mara-Eves et al. 2015; Ouzzani et al. 2016; Thomas et al. 2017) may also become promising and time-saving search tools in the future. In addition, some electronic databases allow users to track forward citations of influential studies and thereby identify further relevant articles. Finally, collecting unpublished or undetected studies through conferences, personal contact with (leading) scholars, or listservs can be strategies to increase the study sample size (Grewal et al. 2018; Harari et al. 2020; Pigott and Polanin 2020).

2.2.2 Study inclusion criteria and sample composition

Next, researchers must decide which studies to include in the meta-analysis. Some guidelines for literature reviews recommend limiting the sample to studies published in renowned academic journals to ensure the quality of findings (e.g., Kraus et al. 2020). For meta-analysis, however, Steel et al. (2021) advocate the inclusion of all available studies, including grey literature, to prevent selection biases based on availability, cost, familiarity, and language (Rothstein et al. 2005), or the “Matthew effect”, which denotes the phenomenon that highly cited articles are found faster than less cited articles (Merton 1968). Harrison et al. (2017) find that the effects of published studies in management are inflated on average by 30% compared to unpublished studies. This so-called publication bias or “file drawer problem” (Rosenthal 1979) results from academia’s preference for publishing statistically significant over statistically nonsignificant results. Owen and Li (2020) showed that publication bias is particularly severe when variables of interest are used as key variables rather than control variables. To estimate the true effect size of a target variable or relationship, the inclusion of all types of research outputs is therefore recommended (Polanin et al. 2016). Different test procedures to identify publication bias are discussed subsequently in Step 7.

In addition to the decision of whether to include certain study types (i.e., published vs. unpublished studies), there can be other reasons to exclude studies that are identified in the search process. These reasons can be manifold and are primarily related to the specific research question and methodological peculiarities. For example, studies identified by keyword search might not qualify thematically after all, may use unsuitable variable measurements, or may not report usable effect sizes. Furthermore, there might be multiple studies by the same authors using similar datasets. If they do not differ sufficiently in terms of their sample characteristics or variables used, only one of these studies should be included to prevent bias from duplicates (Wood 2008; see this article for a detection heuristic).

In general, the screening process should be conducted stepwise, beginning with the removal of duplicate citations from different databases, followed by abstract screening to exclude clearly unsuitable studies and a final full-text screening of the remaining articles (Pigott and Polanin 2020). A graphical tool to systematically document the sample selection process is the PRISMA flow diagram (Moher et al. 2009). Page et al. (2021) recently presented an updated version of the PRISMA statement, including an extended item checklist and flow diagram to report the study process and findings.
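The stepwise screening described above can be sketched as a small Python pipeline that removes duplicates (matched here on DOI or on a normalized title, which is an assumed matching rule) and then applies abstract and full-text criteria, keeping the stage counts that a PRISMA flow diagram reports. The records, field names, and screening rules are hypothetical; in practice the screening decisions are made by human reviewers, and code like this only records them.

```python
import re


def normalize_title(title):
    # Lowercase and drop punctuation and spaces so formatting differences
    # between databases do not hide duplicates.
    return re.sub(r"[^a-z0-9]", "", title.lower())


def remove_duplicates(records):
    """Keep the first record that matches on DOI or normalized title."""
    seen, unique = set(), []
    for rec in records:
        keys = {("title", normalize_title(rec["title"]))}
        if rec.get("doi"):
            keys.add(("doi", rec["doi"].lower()))
        if keys & seen:
            continue  # a record with the same DOI or title was already kept
        seen |= keys
        unique.append(rec)
    return unique


def screening_counts(records, passes_abstract, passes_full_text):
    """Counts for each screening stage, as reported in a PRISMA-style diagram."""
    deduplicated = remove_duplicates(records)
    after_abstract = [r for r in deduplicated if passes_abstract(r)]
    included = [r for r in after_abstract if passes_full_text(r)]
    return {
        "identified": len(records),
        "after_duplicates_removed": len(deduplicated),
        "after_abstract_screening": len(after_abstract),
        "included": len(included),
    }


# Hypothetical search results pooled from two databases.
records = [
    {"title": "TMT Diversity and Profitability", "doi": "10.1000/example.1"},
    {"title": "TMT diversity and profitability.", "doi": None},
    {"title": "Firm Diversification Revisited", "doi": "10.1000/example.2"},
    {"title": "An Unrelated Study", "doi": None},
]
counts = screening_counts(
    records,
    passes_abstract=lambda r: "Unrelated" not in r["title"],
    passes_full_text=lambda r: "Diversification" not in r["title"],
)
print(counts)
```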

2.3 Step 3: choice of the effect size measure

2.3.1 Types of effect sizes

The two most common meta-analytical effect size measures in management studies are (z-transformed) correlation coefficients and standardized mean differences (Aguinis et al. 2011a; Geyskens et al. 2009). However, meta-analyses in management science and related fields are not necessarily limited to these two effect size measures; the appropriate measure depends on the subfield of investigation (Borenstein 2009; Stanley and Doucouliagos 2012). In economics and finance, researchers are more interested in the examination of elasticities and marginal effects extracted from regression models than in pure bivariate correlations (Stanley and Doucouliagos 2012). Regression coefficients can also be converted to partial correlation coefficients based on their t-statistics to make regression results comparable across studies (Stanley and Doucouliagos 2012). Although some meta-analyses in management research have combined bivariate and partial correlations in their study samples, Aloe (2015) and Combs et al. (2019) advise researchers against this practice. Most importantly, they argue that the strength of a partial correlation depends on the other variables included in the regression model and is therefore not comparable to bivariate correlations (Schmidt and Hunter 2015), resulting in a possible bias of the meta-analytic results (Roth et al. 2018). We endorse this opinion. If both measures are used at all, we recommend separate analyses for each measure. In addition to these measures, survival rates, risk ratios, or odds ratios, which are common measures in medical research (Borenstein 2009), can be suitable effect sizes for specific management research questions, such as understanding the determinants of the survival of startup companies. To summarize, the choice of a suitable effect size is often not left to the researcher, because it typically depends on the investigated research question as well as the conventions of the specific research field (Cheung and Vijayakumar 2016).
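The conversions mentioned in this paragraph can be sketched in a few lines of Python. The partial correlation is computed from a reported t-statistic and the model's residual degrees of freedom as r = t / sqrt(t^2 + df), a conversion commonly used for this purpose, and Fisher's z-transformation is what "z-transformed" correlations refer to. The function names and example values are hypothetical; consistent with the caution above, partial correlations obtained this way should be analyzed separately from bivariate ones.

```python
import math


def partial_correlation(t_stat, df):
    """Partial correlation implied by a regression t-statistic and its residual df."""
    return t_stat / math.sqrt(t_stat ** 2 + df)


def fisher_z(r):
    """Fisher's z-transformation, which makes correlations approximately normal."""
    return math.atanh(r)


def inverse_fisher_z(z):
    """Back-transform a (pooled) z value to the correlation metric."""
    return math.tanh(z)


# Hypothetical coefficient reported with t = 2.0 and 96 residual degrees of freedom.
r_p = partial_correlation(2.0, 96)
print(round(r_p, 3), round(fisher_z(r_p), 4))  # 0.2 0.2027
```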

2.3.2 Conversion of effect sizes to a common measure

After the primary effect size measure for the meta-analysis has been defined, it may become necessary during the later coding process to convert study findings that are reported in a different effect size metric into the chosen primary effect size. For example, a study might report only descriptive statistics for two study groups but no correlation coefficient, which is used as the primary effect size measure in the meta-analysis. Different effect size measures can be harmonized using conversion formulae, which are provided by standard method books such as Borenstein et al. (2009) or Lipsey and Wilson (2001). Online effect size calculators for meta-analysis also exist (Footnote 2).
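For the example of a study that reports only group descriptives, a minimal Python sketch of the conversion chain first computes a standardized mean difference d from the group means, standard deviations, and sample sizes (using the pooled standard deviation) and then converts d to a correlation with r = d / sqrt(d^2 + a), where a = (n1 + n2)^2 / (n1 * n2) adjusts for unequal group sizes. These are standard textbook conversions of the kind collected in the method books cited above; the function names and input values are hypothetical, and any formula should be checked against such a book before use.

```python
import math


def cohens_d(mean_1, mean_2, sd_1, sd_2, n_1, n_2):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n_1 - 1) * sd_1 ** 2 + (n_2 - 1) * sd_2 ** 2) / (n_1 + n_2 - 2)
    return (mean_1 - mean_2) / math.sqrt(pooled_var)


def d_to_r(d, n_1, n_2):
    """Convert d to a correlation; a corrects for unequal group sizes."""
    a = (n_1 + n_2) ** 2 / (n_1 * n_2)
    return d / math.sqrt(d ** 2 + a)


# Hypothetical study reporting only group means, SDs, and sizes.
d = cohens_d(10.0, 8.0, 4.0, 4.0, 50, 50)
r = d_to_r(d, 50, 50)
print(round(d, 3), round(r, 3))  # 0.5 0.243
```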

2.4 Step 4: choice of the analytical method used

Choosing which meta-analytical method to use is directly connected to the research question of the meta-analysis. Research questions in meta-analyses can address a relationship between constructs or an effect of an intervention in a general manner, or they can focus on moderating or mediating effects. Four meta-analytical methods are primarily used in contemporary management research (Combs et al. 2019; Geyer-Klingeberg et al. 2020), which allow the investigation of these different types of research questions: traditional univariate meta-analysis, meta-regression, meta-analytic structural equation modeling, and qualitative meta-analysis (Hoon 2013). While the first three are quantitative, the last summarizes qualitative findings. Table 1 summarizes the key characteristics of the three quantitative methods.

2.4.1 Univariate meta-analysis

In its traditional form, a meta-analysis reports a weighted mean effect size for the relationship or intervention under investigation and provides information on the magnitude of variance among primary studies (Aguinis et al. 2011c; Borenstein et al. 2009). Accordingly, it serves as a quantitative synthesis of a research field (Borenstein et al. 2009; Geyskens et al. 2009). Prominent traditional approaches have been developed, for example, by Hedges and Olkin (1985) or Hunter and Schmidt (1990, 2004). Beyond its summary function, however, the traditional approach is limited in its ability to explain the observed variance among findings (Gonzalez-Mulé and Aguinis 2018). To identify moderators (or boundary conditions) of the relationship of interest, meta-analysts can create subgroups and investigate differences between those groups (Borenstein and Higgins 2013; Hunter and Schmidt 2004). Potential moderators can be study characteristics (e.g., whether a study is published vs. unpublished), sample characteristics (e.g., study country, industry focus, or type of survey/experiment participants), or measurement artifacts (e.g., different types of variable measurements). The univariate approach is thus suitable to identify the overall direction of a relationship and can serve as a good starting point for additional analyses. However, due to its limitations in examining boundary conditions and developing theory, the univariate approach on its own is now often viewed as insufficient (Rauch 2020; Shaw and Ertug 2017).
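A minimal Python sketch of a traditional univariate analysis with a subgroup comparison, written in the spirit of the Hedges and Olkin approach: correlations are Fisher z-transformed, weighted by the inverse of their sampling variance (n - 3), averaged, and back-transformed, while Cochran's Q summarizes the variation among studies. This is an assumed fixed-effect illustration; the Hunter and Schmidt approach instead weights by sample size and corrects for artifacts such as measurement error. The coded studies and function names are hypothetical.

```python
import math
from collections import defaultdict


def pooled_correlation(studies):
    """Fixed-effect, inverse-variance weighted mean correlation via Fisher's z.

    studies: list of dicts with keys "r" (correlation) and "n" (sample size).
    Returns the pooled correlation and Cochran's Q heterogeneity statistic.
    """
    zs = [math.atanh(s["r"]) for s in studies]
    weights = [s["n"] - 3 for s in studies]  # 1 / Var(z) = n - 3
    z_bar = sum(w * z for w, z in zip(weights, zs)) / sum(weights)
    q = sum(w * (z - z_bar) ** 2 for w, z in zip(weights, zs))
    return math.tanh(z_bar), q


def subgroup_estimates(studies, moderator):
    """Pooled correlation separately for each level of a categorical moderator."""
    groups = defaultdict(list)
    for s in studies:
        groups[s[moderator]].append(s)
    return {level: pooled_correlation(members)[0] for level, members in groups.items()}


# Hypothetical coded studies with a published/unpublished moderator.
studies = [
    {"r": 0.30, "n": 103, "published": True},
    {"r": 0.30, "n": 53, "published": True},
    {"r": 0.10, "n": 83, "published": False},
]
print(pooled_correlation(studies))
print(subgroup_estimates(studies, "published"))
```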

2.4.2 Meta-regression analysis

Meta-regression analysis (Hedges and Olkin 1985; Lipsey and Wilson 2001; Stanley and Jarrell 1989) aims to investigate the heterogeneity among observed effect sizes by testing multiple potential moderators simultaneously. In meta-regression, the coded effect size is used as the dependent variable and is regressed on a list of moderator variables. These moderator variables can be categorical variables, as described previously for the traditional univariate approach, or (semi)continuous variables such as country scores that are merged with the meta-analytical data. Thus, meta-regression analysis overcomes a disadvantage of the traditional approach, which only allows moderators to be investigated one at a time using dichotomized subgroups (Combs et al. 2019; Gonzalez-Mulé and Aguinis 2018). This enables a more fine-grained analysis of research questions related to moderating effects. However, Schmidt (2017) critically notes that the number of effect sizes in the meta-analytical sample must be sufficiently large to produce reliable results when investigating multiple moderators simultaneously in a meta-regression. For further reading, Tipton et al. (2019) outline the technical, conceptual, and practical developments of meta-regression over the last decades. Gonzalez-Mulé and Aguinis (2018) provide an overview of methodological choices and develop evidence-based best practices for future meta-analyses in management using meta-regression.
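Regressing coded effect sizes on several moderators at once can be sketched in Python as weighted least squares with inverse-variance weights, which corresponds to a fixed-effect meta-regression. This is a simplification chosen for illustration: a mixed-effects meta-regression would add an estimated between-study variance to each sampling variance, and dedicated meta-analysis software also reports standard errors and tests, which this sketch omits. The helper names and data are hypothetical; the effects are generated from known coefficients so that the recovered values can be checked.

```python
def solve_linear_system(A, c):
    """Gaussian elimination with partial pivoting for a small square system."""
    k = len(c)
    A = [row[:] for row in A]
    c = c[:]
    for col in range(k):
        pivot = max(range(col, k), key=lambda i: abs(A[i][col]))
        A[col], A[pivot] = A[pivot], A[col]
        c[col], c[pivot] = c[pivot], c[col]
        for row in range(col + 1, k):
            factor = A[row][col] / A[col][col]
            for j in range(col, k):
                A[row][j] -= factor * A[col][j]
            c[row] -= factor * c[col]
    b = [0.0] * k
    for row in reversed(range(k)):
        b[row] = (c[row] - sum(A[row][j] * b[j] for j in range(row + 1, k))) / A[row][row]
    return b


def meta_regression(effects, variances, moderators):
    """Fixed-effect meta-regression by weighted least squares (weights = 1/variance).

    moderators: one tuple of moderator values per effect size.
    Returns [intercept, slope_1, slope_2, ...].
    """
    X = [[1.0, *m] for m in moderators]
    w = [1.0 / v for v in variances]
    k = len(X[0])
    # Normal equations: (X' W X) b = X' W y
    xtwx = [[sum(w[i] * X[i][a] * X[i][b] for i in range(len(X))) for b in range(k)]
            for a in range(k)]
    xtwy = [sum(w[i] * X[i][a] * effects[i] for i in range(len(X))) for a in range(k)]
    return solve_linear_system(xtwx, xtwy)


# Hypothetical effects generated exactly as 0.1 + 0.2 * x1 - 0.05 * x2.
moderators = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 1)]
effects = [0.1 + 0.2 * x1 - 0.05 * x2 for x1, x2 in moderators]
variances = [0.01, 0.02, 0.01, 0.04, 0.02]
print([round(b, 3) for b in meta_regression(effects, variances, moderators)])  # [0.1, 0.2, -0.05]
```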

2.4.3 Meta-analytic structural equation modeling (MASEM)

MASEM is a combination of meta-analysis and structural equation modeling and allows researchers to simultaneously investigate the relationships among several constructs in a path model. Researchers can use MASEM to test several competing theoretical models against each other or to identify mediation mechanisms in a chain of relationships (Bergh et al. 2016). This method is typically performed in two steps (Cheung and Chan 2005): in Step 1, a pooled correlation matrix is derived, which includes the meta-analytical mean effect sizes for all variable combinations; Step 2 then uses this matrix to fit the path model. While MASEM in its early years relied primarily on traditional univariate meta-analysis to derive the pooled correlation matrix (Viswesvaran and Ones 1995), more advanced methods, such as the GLS approach (Becker 1992, 1995) or the TSSEM approach (Cheung and Chan 2005), have subsequently been developed. Cheung (2015a) and Jak (2015) provide an overview of these approaches in their books with exemplary code. For datasets with more complex data structures, Wilson et al. (2016) also developed a multilevel approach that is related to the TSSEM approach in the second step. Bergh et al. (2016) discuss nine decision points and develop best practices for MASEM studies.
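The two-step logic can be sketched in Python with the early univariate variant: Step 1 pools each correlation separately by sample-size weighting, and Step 2 derives standardized path coefficients for a hypothetical mediation model X → M → Y with a direct X → Y path from the pooled matrix. This is deliberately not the GLS or TSSEM approach, which account for dependence among correlations and provide proper standard errors and fit statistics; the variable names, function names, and data are assumptions for illustration only.

```python
def pool_correlations(studies, pairs):
    """Step 1 (univariate approach): sample-size-weighted mean r for each pair.

    studies: list of dicts {"n": sample size, "r": {(var1, var2): correlation}}.
    Studies may report only some of the pairs.
    """
    pooled = {}
    for pair in pairs:
        reporting = [s for s in studies if pair in s["r"]]
        total_n = sum(s["n"] for s in reporting)
        pooled[pair] = sum(s["n"] * s["r"][pair] for s in reporting) / total_n
    return pooled


def mediation_paths(R):
    """Step 2: standardized paths of X -> M -> Y with a direct X -> Y path."""
    r_xm, r_xy, r_my = R[("X", "M")], R[("X", "Y")], R[("M", "Y")]
    a = r_xm  # M regressed on X alone
    denom = 1 - r_xm ** 2
    direct = (r_xy - r_xm * r_my) / denom  # X -> Y, controlling for M
    b = (r_my - r_xm * r_xy) / denom       # M -> Y, controlling for X
    return {"a": a, "b": b, "direct": direct, "indirect": a * b}


# Hypothetical primary studies; the first does not report the M-Y correlation.
studies = [
    {"n": 100, "r": {("X", "M"): 0.5, ("X", "Y"): 0.3}},
    {"n": 300, "r": {("X", "M"): 0.5, ("X", "Y"): 0.3, ("M", "Y"): 0.4}},
]
R = pool_correlations(studies, [("X", "M"), ("X", "Y"), ("M", "Y")])
paths = mediation_paths(R)
print({name: round(value, 3) for name, value in paths.items()})
```

Because the pooled cells can rest on different studies and sample sizes, as with the M-Y correlation here, this univariate shortcut is exactly the weakness that the later methods address.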

2.4.4 Qualitative meta-analysis

While the approaches explained above focus on quantitative outcomes of empirical studies, qualitative meta-analysis aims to synthesize qualitative findings from case studies (Hoon 2013; Rauch et al. 2014). The distinctive feature of qualitative case studies is their potential to provide in-depth information about specific contextual factors or to shed light on reasons for certain phenomena that cannot usually be investigated by quantitative studies (Rauch 2020; Rauch et al. 2014). In a qualitative meta-analysis, the identified case studies are systematically coded in a meta-synthesis protocol, which is then used to identify influential variables or patterns and to derive a meta-causal network (Hoon 2013). Thus, the insights of contextualized and typically nongeneralizable single studies are aggregated into a larger, more generalizable picture (Habersang et al. 2019). Although still the exception, this method can thus provide important contributions for academics in terms of theory development (Combs et al. 2019; Hoon 2013) and for practitioners in terms of evidence-based management or entrepreneurship (Rauch et al. 2014). Levitt (2018) provides a guide and discusses conceptual issues for conducting qualitative meta-analysis in psychology, which is also useful for management researchers.

2.5 Step 5: choice of software

Software solutions to perform meta-analyses range from built-in functions or additional packages of statistical software to software purely focused on meta-analyses, and from commercial to open-source solutions. In addition to personal preferences, the choice of the most suitable software depends on the complexity of the methods used and the dataset itself (Cheung and Vijayakumar 2016). Meta-analysts therefore must carefully check whether their preferred software is capable of performing the intended analysis.

Among commercial software providers, Stata (from version 16 on) offers built-in functions to perform various meta-analytical analyses or to produce various plots (Palmer and Sterne 2016). For SPSS and SAS, several macros for meta-analyses have been provided by scholars, such as David B. Wilson or Andy P. Field and Raphael Gillett (Field and Gillett 2010) (Footnotes 3 and 4). For researchers using the open-source software R (R Core Team 2021), Polanin et al. (2017) provide an overview of 63 meta-analysis packages and their functionalities. For new users, they recommend the package metafor (Viechtbauer 2010), which includes most necessary functions and for which the author Wolfgang Viechtbauer provides tutorials on his project website (Footnotes 5 and 6). In addition to packages and macros for statistical software, templates for Microsoft Excel have also been developed to conduct simple meta-analyses, such as Meta-Essentials by Suurmond et al. (2017) (Footnote 7). Finally, programs purely dedicated to meta-analysis also exist, such as Comprehensive Meta-Analysis (Borenstein et al. 2013) or RevMan by The Cochrane Collaboration (2020).

2.6 Step 6: coding of effect sizes

2.6.1 Coding sheet

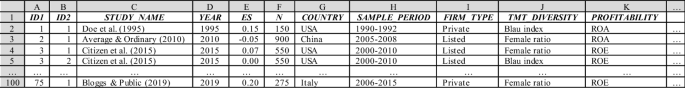

The first step in the coding process is the design of the coding sheet. A universal template does not exist because the design of the coding sheet depends on the methods used, the respective software, and the complexity of the research design. For univariate meta-analysis or meta-regression, data are typically coded in wide format. In its simplest form, when investigating a correlational relationship between two variables using the univariate approach, the coding sheet would contain a column for the study name or identifier, the effect size coded from the primary study, and the study sample size. However, such simple relationships are unlikely in management research because the included studies are typically not identical but differ in several respects. With more complex data structures or moderator variables being investigated, additional columns are added to the coding sheet to reflect the data characteristics. These variables can be coded as dummy, factor, or (semi)continuous variables and later used to perform a subgroup analysis or meta-regression. For MASEM, the required data input format can deviate depending on the method used (e.g., TSSEM requires a list of correlation matrices as data input). For qualitative meta-analysis, the coding scheme typically summarizes the key qualitative findings and important contextual and conceptual information (see Hoon (2013) for a coding scheme for qualitative meta-analysis). Figure 1 shows an exemplary coding scheme for a quantitative meta-analysis on the correlational relationship between top-management team diversity and profitability. In addition to effect and sample sizes, information about the study country, firm type, and variable operationalizations is coded. The list could be extended by further study and sample characteristics.

Figure 1: Exemplary coding sheet for a meta-analysis on the relationship (correlation) between top-management team diversity and profitability
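A wide-format coding sheet like the one described for Figure 1 can be kept as a plain CSV file with one row per effect size. The Python sketch below writes such a sheet with the standard csv module; the column names and both rows are hypothetical placeholders, not data from real studies.

```python
import csv
import io

# Hypothetical column names mirroring the kind of information described for Figure 1.
FIELDS = ["study_id", "r", "n", "country", "firm_type",
          "diversity_measure", "profitability_measure"]


def write_coding_sheet(rows):
    """Serialize coded effect sizes in wide format: one row per effect size."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Placeholder rows for illustration only; these are not real studies.
rows = [
    {"study_id": "study_01", "r": 0.12, "n": 210, "country": "US",
     "firm_type": "listed", "diversity_measure": "Blau index",
     "profitability_measure": "ROA"},
    {"study_id": "study_02", "r": -0.04, "n": 95, "country": "DE",
     "firm_type": "family", "diversity_measure": "share of women",
     "profitability_measure": "ROE"},
]
sheet = write_coding_sheet(rows)
print(sheet)
```

Moderator columns such as country or firm type can later be read back and used directly as grouping variables for a subgroup analysis or as predictors in a meta-regression.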

2.6.2 Inclusion of moderator or control variables

It is generally important to consider the intended research model and relevant nontarget variables before coding a meta-analytic dataset. For example, study characteristics can be important moderators or function as control variables in a meta-regression model. Similarly, control variables may be relevant in a MASEM approach to reduce confounding bias. Coding additional variables or constructs at a later stage can be arduous if the sample of primary studies is large. However, the decision to include respective moderator or control variables, as in any empirical analysis, should always be based on strong (theoretical) rationales about how these variables can impact the investigated effect (Bernerth and Aguinis 2016; Bernerth et al. 2018; Thompson and Higgins 2002). While substantive moderators refer to theoretical constructs that act as buffers or enhancers of a supposed causal process, methodological moderators are features of the respective research designs that denote the methodological context of the observations and are important to control for systematic statistical particularities (Rudolph et al. 2020). Havranek et al. (2020) provide a list of recommended variables to code as potential moderators. While researchers may have clear expectations about the effects of some of these moderators, the expectations for other moderators may be tentative, and moderator analysis may be approached in a rather exploratory fashion. Thus, we argue that researchers should make full use of the meta-analytical design to obtain insights about potential context dependence that a primary study cannot achieve.

2.6.3 Treatment of multiple effect sizes in a study

A long-debated issue in conducting meta-analyses is whether to use only one or all available effect sizes for the same construct within a single primary study. For meta-analyses in management research, this question is fundamental because many empirical studies, particularly those relying on company databases, use multiple variables for the same construct to perform sensitivity analyses, resulting in multiple relevant effect sizes. In this case, researchers can either (randomly) select a single value, calculate a study average, or use the complete set of effect sizes (Bijmolt and Pieters 2001; López-López et al. 2018). Multiple effect sizes from the same study enrich the meta-analytic dataset and allow researchers to investigate the heterogeneity of the relationship of interest, such as different variable operationalizations (López-López et al. 2018; Moeyaert et al. 2017). However, including more than one effect size from the same study violates the independence assumption of observations (Cheung 2019; López-López et al. 2018), which can lead to biased results and erroneous conclusions (Gooty et al. 2021). We follow the recommendation of current best practice guides to take advantage of using all available effect size observations but to carefully consider interdependencies using appropriate methods such as multilevel models, panel regression models, or robust variance estimation (Cheung 2019; Geyer-Klingeberg et al. 2020; Gooty et al. 2021; López-López et al. 2018; Moeyaert et al. 2017).
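A minimal Python sketch of the robust variance estimation idea, assuming a simple intercept-only model: all effect sizes are kept, each study's inverse-variance weights are divided by its number of effect sizes, and the standard error comes from a cluster-robust (sandwich) estimator that treats studies as clusters. This simplification omits the between-study variance and the small-sample corrections that dedicated robust variance estimation software applies, which matter when the number of studies is small. The function name and data are hypothetical.

```python
import math
from collections import defaultdict


def robust_mean_effect(effects):
    """Weighted mean effect size with a cluster-robust (sandwich) standard error.

    effects: list of (study_id, effect_size, sampling_variance); several rows may
    come from the same study and are treated as dependent.
    """
    by_study = defaultdict(list)
    for study, y, v in effects:
        by_study[study].append((y, v))
    # Inverse-variance weights, split across the effect sizes of each study.
    rows = [(study, y, 1.0 / (v * len(items)))
            for study, items in by_study.items() for y, v in items]
    total_w = sum(w for _, _, w in rows)
    estimate = sum(w * y for _, y, w in rows) / total_w
    # Sum the weighted residuals within each study before squaring.
    cluster_sums = defaultdict(float)
    for study, y, w in rows:
        cluster_sums[study] += w * (y - estimate)
    variance = sum(s ** 2 for s in cluster_sums.values()) / total_w ** 2
    return estimate, math.sqrt(variance)


# Hypothetical data: study "A" reports two operationalizations of the same construct.
effects = [("A", 0.20, 0.01), ("A", 0.40, 0.01), ("B", 0.10, 0.02), ("C", 0.25, 0.015)]
print(robust_mean_effect(effects))
```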

2.7 Step 7: analysis

2.7.1 Outlier analysis and tests for publication bias

Before conducting the primary analysis, some preliminary sensitivity analyses might be necessary to ensure the robustness of the meta-analytical findings (Rudolph et al. 2020). First, influential outlier observations could potentially bias the observed results, particularly if the total number of effect sizes is small. Several statistical methods can be used to identify outliers in meta-analytical datasets (Aguinis et al. 2013; Viechtbauer and Cheung 2010). However, there is a debate about whether to keep or omit these observations. In either case, the relevant studies should be closely inspected to find an explanation for their deviating results. As in any other primary study, outliers can be a valid representation, albeit of a different population, measure, construct, design, or procedure. Thus, inferences about outliers can provide the basis to infer potential moderators (Aguinis et al. 2013; Steel et al. 2021). On the other hand, outliers can indicate invalid research, for instance, when unrealistically strong correlations are due to construct overlap (i.e., lack of a clear demarcation between independent and dependent variables), invalid measures, or simply typing errors when coding effect sizes. An advisable step is therefore to compare the results both with and without outliers and to decide carefully whether to exclude outlier observations (Geyskens et al. 2009; Grewal et al. 2018; Kepes et al. 2013). Moreover, rather than focusing only on the size of an outlier, researchers should also consider its leverage. Thus, Viechtbauer and Cheung (2010) propose considering a combination of standardized deviation and a study’s leverage.
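The leave-one-out logic behind such outlier diagnostics can be sketched in Python for a fixed-effect model: each effect size is compared with the pooled estimate computed without it and scaled by the combined uncertainty, which gives a studentized deleted residual under the assumption of no between-study variance (under a random-effects model that variance would also enter the denominator). Leverage and Cook's distance, which the text recommends considering as well, are not computed here; the function names and data are hypothetical.

```python
import math


def fixed_effect(effects, variances):
    """Inverse-variance pooled estimate and its variance (fixed-effect model)."""
    weights = [1.0 / v for v in variances]
    estimate = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    return estimate, 1.0 / sum(weights)


def deleted_residuals(effects, variances):
    """Leave-one-out standardized residuals under a fixed-effect model."""
    residuals = []
    for i in range(len(effects)):
        rest_y = effects[:i] + effects[i + 1:]
        rest_v = variances[:i] + variances[i + 1:]
        mu_without_i, var_mu = fixed_effect(rest_y, rest_v)
        residuals.append((effects[i] - mu_without_i) / math.sqrt(variances[i] + var_mu))
    return residuals


# Hypothetical effect sizes; the last one deviates strongly from the rest.
effects = [0.20, 0.22, 0.18, 0.21, 0.90]
variances = [0.01] * 5
print([round(x, 2) for x in deleted_residuals(effects, variances)])

# Sensitivity check: pooled estimate with and without the flagged study.
print(fixed_effect(effects, variances)[0], fixed_effect(effects[:4], variances[:4])[0])
```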

Second, as mentioned in the context of the literature search, publication bias may be an issue. Publication bias can be examined in multiple ways (Rothstein et al. 2005). First, the funnel plot is a simple graphical tool that provides an overview of the effect size distribution and helps to detect publication bias (Stanley and Doucouliagos 2010). A funnel plot can also help identify potential outliers. As mentioned above, a graphical display of deviation (e.g., studentized residuals) and of leverage or influence (e.g., hat values and Cook's distances) can help detect outliers and evaluate their impact (Viechtbauer and Cheung 2010). Moreover, several statistical procedures can be used to test for publication bias (Harrison et al. 2017; Kepes et al. 2012), including subgroup comparisons between published and unpublished studies, Begg and Mazumdar's (1994) rank correlation test, cumulative meta-analysis (Borenstein et al. 2009), the trim-and-fill method (Duval and Tweedie 2000a, b), Egger et al.'s (1997) regression test, the failsafe N (Rosenthal 1979), and selection models (Hedges and Vevea 2005; Vevea and Woods 2005). In examining potential publication bias, Kepes et al. (2012) and Harrison et al. (2017) both recommend not relying on a single test but rather using multiple conceptually different test procedures (the so-called “triangulation approach”).
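As a small illustration of triangulation, the Python sketch below implements two of the conceptually different procedures listed above: Egger et al.'s (1997) regression test, which regresses each standardized effect (effect size divided by its standard error) on its precision (one over the standard error) and examines the intercept, and Rosenthal's (1979) fail-safe N. Function names and data are hypothetical. The sketch assumes that effect size divided by standard error is an approximately normal test statistic for each study, and it returns only the t statistic of the Egger intercept; a p-value would additionally require the t distribution with k - 2 degrees of freedom (e.g., `scipy.stats.t.sf`).

```python
import math


def egger_test(effects, ses):
    """Egger's regression test: OLS of z_i = y_i / se_i on precision 1 / se_i.

    An intercept far from zero indicates funnel-plot asymmetry.
    Returns (intercept, SE of intercept, t statistic, degrees of freedom).
    """
    k = len(effects)
    if k < 3:
        raise ValueError("need at least 3 effect sizes")
    x = [1.0 / s for s in ses]                 # precision
    z = [y / s for y, s in zip(effects, ses)]  # standardized effect
    x_bar, z_bar = sum(x) / k, sum(z) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxz = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z))
    slope = sxz / sxx
    intercept = z_bar - slope * x_bar
    sse = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    s2 = sse / (k - 2)  # OLS residual variance
    se_int = math.sqrt(s2 * (1.0 / k + x_bar ** 2 / sxx))
    t = intercept / se_int if se_int > 0 else float("nan")
    return intercept, se_int, t, k - 2


def fail_safe_n(effects, ses, z_alpha=1.645):
    """Rosenthal's fail-safe N: number of unpublished null-result studies needed
    to pull the combined Stouffer Z below z_alpha (one-tailed .05 by default)."""
    z_sum = sum(y / s for y, s in zip(effects, ses))
    return max(0.0, z_sum ** 2 / z_alpha ** 2 - len(effects))


# Hypothetical data: smaller (less precise) studies report larger effects.
effects = [0.45, 0.30, 0.28, 0.20, 0.15, 0.12]
ses = [0.20, 0.15, 0.12, 0.08, 0.06, 0.05]
b0, se_b0, t, df = egger_test(effects, ses)
print(f"Egger intercept = {b0:.2f} (SE {se_b0:.2f}), t({df}) = {t:.2f}")
print(f"Fail-safe N = {fail_safe_n(effects, ses):.0f}")
```

The two procedures answer different questions (asymmetry of the effect size distribution versus robustness of the overall significance to unpublished null results), which is why they complement rather than replace each other.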

2.7.2 Model choice

After checking for, and where necessary correcting, influential outliers and publication bias, the next step in meta-analysis is the primary analysis, in which meta-analysts must choose between two types of models based on different assumptions: fixed-effects and random-effects models (Borenstein et al. 2010). Fixed-effects models assume that all observations share one common true effect size, so that differences between the observed effect sizes are due only to sampling error, whereas random-effects models assume heterogeneity and allow the true effect sizes to vary across studies (Borenstein et al. 2010; Cheung and Vijayakumar 2016; Hunter and Schmidt 2004). Both models are explained in detail in standard textbooks (e.g., Borenstein et al. 2009; Hunter and Schmidt 2004; Lipsey and Wilson 2001).
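The practical difference between the two models can be illustrated with a short Python sketch. It computes the fixed-effect estimate with inverse-variance weights and a random-effects estimate in which the between-study variance tau² is added to each study's sampling variance. The estimator of tau² is an assumption chosen for simplicity, namely the DerSimonian-Laird method-of-moments estimator; other estimators (e.g., REML) are common in practice. The function name, the data, and the normal-approximation 95% interval (estimate ± 1.96 × SE) are likewise illustrative choices. Cochran's Q and I² are returned as heterogeneity measures.

```python
import math


def pool_effects(effects, variances):
    """Fixed-effect and DerSimonian-Laird random-effects pooled estimates."""
    k = len(effects)
    w = [1.0 / v for v in variances]
    sw = sum(w)
    fe = sum(wi * y for wi, y in zip(w, effects)) / sw

    # Cochran's Q and the DerSimonian-Laird moment estimator of tau^2.
    q = sum(wi * (y - fe) ** 2 for wi, y in zip(w, effects))
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)
    i2 = max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0

    # Random-effects weights add tau^2 to each study's sampling variance.
    w_re = [1.0 / (v + tau2) for v in variances]
    sw_re = sum(w_re)
    re = sum(wi * y for wi, y in zip(w_re, effects)) / sw_re

    def summary(est, se):
        return {"estimate": est, "se": se, "ci95": (est - 1.96 * se, est + 1.96 * se)}

    return {
        "fixed": summary(fe, math.sqrt(1.0 / sw)),
        "random": summary(re, math.sqrt(1.0 / sw_re)),
        "Q": q,
        "tau2": tau2,
        "I2": i2,
    }


# Hypothetical data: five correlations with their sampling variances.
result = pool_effects([0.10, 0.35, 0.22, 0.48, 0.05], [0.010, 0.015, 0.008, 0.020, 0.012])
for model in ("fixed", "random"):
    est, (low, high) = result[model]["estimate"], result[model]["ci95"]
    print(f"{model}: {est:.3f} [{low:.3f}, {high:.3f}]")
print(f"Q = {result['Q']:.2f}, tau2 = {result['tau2']:.4f}, I2 = {result['I2']:.0%}")
```

When Q does not exceed its degrees of freedom (k - 1), tau² is truncated at zero and both models coincide; otherwise the random-effects standard error, and hence its confidence interval, is larger.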

In general, heterogeneity is likely in management meta-analyses because most studies do not have identical empirical settings, which can yield effect sizes of different strengths or directions for the same phenomenon. For example, the identified studies may have been conducted in different countries with different institutional settings, or the type of study participants may vary (e.g., students vs. employees, blue-collar vs. white-collar workers, or manufacturing vs. service firms). Thus, the vast majority of meta-analyses in management research and related fields use random-effects models (Aguinis et al. 2011a). In a meta-regression, the random-effects model becomes a so-called mixed-effects model because moderator variables are added as fixed effects to explain how observed study characteristics affect the variation in effect sizes (Raudenbush 2009).
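A mixed-effects meta-regression with a single moderator can be sketched as weighted least squares in two stages: first, the residual heterogeneity tau² is estimated from a fixed-effect fit, and then the model is refitted with weights 1 / (v_i + tau²). The Python sketch below uses a method-of-moments (DerSimonian-Laird-type) estimator for the residual tau², which is an assumption; statistical packages often default to other estimators. The moderator (a hypothetical indicator for employee vs. student samples), the function names, and the data are all illustrative, and the moderator must vary across effect sizes.

```python
import math


def _wls(effects, mods, weights):
    """Weighted least squares for y = b0 + b1 * x.

    Returns (b0, b1, inv), where inv is the 2x2 matrix (X'WX)^-1.
    """
    s0 = sum(weights)
    s1 = sum(w * x for w, x in zip(weights, mods))
    s2 = sum(w * x * x for w, x in zip(weights, mods))
    t0 = sum(w * y for w, y in zip(weights, effects))
    t1 = sum(w * x * y for w, x, y in zip(weights, mods, effects))
    det = s0 * s2 - s1 * s1  # zero if the moderator is constant
    inv = [[s2 / det, -s1 / det], [-s1 / det, s0 / det]]
    b0 = inv[0][0] * t0 + inv[0][1] * t1
    b1 = inv[1][0] * t0 + inv[1][1] * t1
    return b0, b1, inv


def mixed_effects_metareg(effects, variances, mods):
    """Meta-regression y_i = b0 + b1 * mod_i + u_i + e_i with a moment estimator of tau^2."""
    k, p = len(effects), 2
    w = [1.0 / v for v in variances]

    # Stage 1: fixed-effect fit and the residual heterogeneity statistic Q_E.
    b0, b1, inv = _wls(effects, mods, w)
    q_e = sum(wi * (y - b0 - b1 * x) ** 2 for wi, y, x in zip(w, effects, mods))

    # tr(P) for P = W - W X (X'WX)^-1 X'W, computed as tr(W) - tr((X'WX)^-1 X'W^2 X).
    u0 = sum(wi ** 2 for wi in w)
    u1 = sum(wi ** 2 * x for wi, x in zip(w, mods))
    u2 = sum(wi ** 2 * x * x for wi, x in zip(w, mods))
    trace_p = sum(w) - (inv[0][0] * u0 + 2 * inv[0][1] * u1 + inv[1][1] * u2)
    tau2 = max(0.0, (q_e - (k - p)) / trace_p)

    # Stage 2: refit with random-effects weights 1 / (v_i + tau^2).
    b0, b1, inv = _wls(effects, mods, [1.0 / (v + tau2) for v in variances])
    return {
        "intercept": b0,
        "slope": b1,
        "se_intercept": math.sqrt(inv[0][0]),
        "se_slope": math.sqrt(inv[1][1]),
        "tau2_resid": tau2,
        "Q_E": q_e,
    }


# Hypothetical data: 1 = employee sample, 0 = student sample.
effects = [0.12, 0.18, 0.25, 0.31, 0.22, 0.35]
variances = [0.010, 0.012, 0.008, 0.015, 0.009, 0.011]
employee_sample = [0, 0, 1, 1, 0, 1]
print(mixed_effects_metareg(effects, variances, employee_sample))
```

The slope estimates how much the mean effect differs between the two (hypothetical) sample types, while the residual tau² captures the heterogeneity that the moderator leaves unexplained.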

2.8 Step 8: reporting results

2.8.1 Reporting in the article

The final step in performing a meta-analysis is reporting its results. Most importantly, all steps and methodological decisions should be comprehensible to the reader. DeSimone et al. (2020) provide an extensive checklist for journal reviewers of meta-analytical studies. Authors can also use this checklist when performing their analyses and reporting their results to ensure that all important aspects have been addressed. Alternative checklists are provided, for example, by Appelbaum et al. (2018) and Page et al. (2021). Similarly, Levitt et al. (2018) provide detailed reporting standards for qualitative meta-analyses.

For quantitative meta-analyses, tables reporting results should include all important information and test statistics, including mean effect sizes; standard errors and confidence intervals; the number of observations and study samples included; and heterogeneity measures. If the meta-analytic sample is rather small, a forest plot provides a good overview of the individual findings and their precision. However, such a figure becomes impractical for meta-analyses that include several hundred effect sizes. In addition, results displayed in tables and figures must be explained verbally in the results and discussion sections. Most importantly, authors must answer the primary research question, i.e., whether there is a positive, negative, or no relationship between the variables of interest, or whether the examined intervention has a certain effect. These results should be interpreted with regard to both their economic magnitude and their statistical significance. Moreover, when discussing meta-analytical results, authors must describe the complexity of the results, including the identified heterogeneity and important moderators, future research directions, and theoretical relevance (DeSimone et al. 2019). In particular, the discussion of identified heterogeneity and underlying moderator effects is critical; omitting this information can lead readers to false conclusions, for instance, when they interpret the reported mean effect size as universal for all included primary studies and ignore the variability of findings when citing the meta-analytic results in their own research (Aytug et al. 2012; DeSimone et al. 2019).
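For a small meta-analytic sample, even a plain-text rendering conveys what a forest plot shows: each study's point estimate and its 95% confidence interval relative to the line of no effect. The Python sketch below is purely illustrative; the function name, the 41-character width, and the normal-approximation interval (estimate ± 1.96 × SE) are assumptions, and for publication a graphics library or dedicated meta-analysis software would be used instead.

```python
def text_forest_plot(labels, effects, ses, width=41):
    """Render study estimates and 95% CIs (estimate +/- 1.96 * SE) as text rows."""
    lower = [y - 1.96 * s for y, s in zip(effects, ses)]
    upper = [y + 1.96 * s for y, s in zip(effects, ses)]
    lo, hi = min(lower + [0.0]), max(upper + [0.0])  # axis always includes zero
    span = (hi - lo) or 1.0

    def col(x):
        # Map a value on the effect size axis to a character column.
        return round((x - lo) / span * (width - 1))

    zero = col(0.0)
    lines = []
    for label, y, a, b in zip(labels, effects, lower, upper):
        row = [" "] * width
        for i in range(col(a), col(b) + 1):
            row[i] = "-"  # confidence interval
        row[zero] = "|"   # line of no effect
        row[col(y)] = "o"  # point estimate
        lines.append(f"{label:<10} {''.join(row)}  {y:5.2f} [{a:5.2f}, {b:5.2f}]")
    return "\n".join(lines)


# Hypothetical data for three studies.
print(text_forest_plot(["Study 1", "Study 2", "Study 3"], [0.30, -0.10, 0.15], [0.10, 0.03, 0.12]))
```

A pooled estimate could be appended as a final row in the same way, which is how forest plots usually summarize the overall result.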

2.8.2 Open-science practices

Another increasingly important topic is the public provision of meta-analytical datasets and statistical code via open-source repositories. Open-science practices allow for the validation of results and for the use of coded data in subsequent meta-analyses (Polanin et al. 2020), contributing to the development of cumulative science. Steel et al. (2021) refer to open-science meta-analyses as a step towards “living systematic reviews” (Elliott et al. 2017) with continuous real-time updates. MRQ supports this development and encourages authors to make their datasets publicly available. Moreau and Gamble (2020), for example, provide various templates and video tutorials for conducting open-science meta-analyses. Several open-science repositories exist, such as the Open Science Framework (OSF; for a tutorial, see Soderberg 2018), which can be used to preregister studies and make documents publicly available. Furthermore, several initiatives in the social sciences have been established to develop dynamic meta-analyses, such as metaBUS (Bosco et al. 2015, 2017), MetaLab (Bergmann et al. 2018), or PsychOpen CAMA (Burgard et al. 2021).

3 Conclusion

This editorial provides a comprehensive overview of the essential steps in conducting and reporting a meta-analysis with references to more in-depth methodological articles. It also serves as a guide for meta-analyses submitted to MRQ and other management journals. MRQ welcomes all types of meta-analyses from all subfields and disciplines of management research.

Gusenbauer and Haddaway (2020), however, point out that Google Scholar is not appropriate as a primary search engine due to a lack of reproducibility of search results.

One effect size calculator by David B. Wilson is accessible via: https://www.campbellcollaboration.org/escalc/html/EffectSizeCalculator-Home.php .

The macros of David B. Wilson can be downloaded from: http://mason.gmu.edu/~dwilsonb/ .

The macros of Field and Gillett (2010) can be downloaded from: https://www.discoveringstatistics.com/repository/fieldgillett/how_to_do_a_meta_analysis.html .

The metafor tutorials can be found via: https://www.metafor-project.org/doku.php .

The metafor package does not currently provide functions to conduct MASEM. For MASEM, users can, for instance, use the package metaSEM (Cheung 2015b).

The Meta-Essentials workbooks can be downloaded from: https://www.erim.eur.nl/research-support/meta-essentials/ .

Aguinis H, Dalton DR, Bosco FA, Pierce CA, Dalton CM (2011a) Meta-analytic choices and judgment calls: Implications for theory building and testing, obtained effect sizes, and scholarly impact. J Manag 37(1):5–38

Aguinis H, Gottfredson RK, Joo H (2013) Best-practice recommendations for defining, identifying, and handling outliers. Organ Res Methods 16(2):270–301

Aguinis H, Gottfredson RK, Wright TA (2011b) Best-practice recommendations for estimating interaction effects using meta-analysis. J Organ Behav 32(8):1033–1043

Aguinis H, Pierce CA, Bosco FA, Dalton DR, Dalton CM (2011c) Debunking myths and urban legends about meta-analysis. Organ Res Methods 14(2):306–331

Aloe AM (2015) Inaccuracy of regression results in replacing bivariate correlations. Res Synth Methods 6(1):21–27

Anderson RG, Kichkha A (2017) Replication, meta-analysis, and research synthesis in economics. Am Econ Rev 107(5):56–59

Appelbaum M, Cooper H, Kline RB, Mayo-Wilson E, Nezu AM, Rao SM (2018) Journal article reporting standards for quantitative research in psychology: The APA Publications and Communications Board task force report. Am Psychol 73(1):3–25

Aytug ZG, Rothstein HR, Zhou W, Kern MC (2012) Revealed or concealed? Transparency of procedures, decisions, and judgment calls in meta-analyses. Organ Res Methods 15(1):103–133

Becker BJ (1992) Using results from replicated studies to estimate linear models. J Educ Stat 17(4):341–362

Becker BJ (1995) Corrections to “Using results from replicated studies to estimate linear models.” J Educ Behav Stat 20(1):100–102

Begg CB, Mazumdar M (1994) Operating characteristics of a rank correlation test for publication bias. Biometrics 50(4):1088–1101. https://doi.org/10.2307/2533446

Bergh DD, Aguinis H, Heavey C, Ketchen DJ, Boyd BK, Su P, Lau CLL, Joo H (2016) Using meta-analytic structural equation modeling to advance strategic management research: Guidelines and an empirical illustration via the strategic leadership-performance relationship. Strateg Manag J 37(3):477–497

Bergmann C, Tsuji S, Piccinini PE, Lewis ML, Braginsky M, Frank MC, Cristia A (2018) Promoting replicability in developmental research through meta-analyses: Insights from language acquisition research. Child Dev 89(6):1996–2009

Bernerth JB, Aguinis H (2016) A critical review and best-practice recommendations for control variable usage. Pers Psychol 69(1):229–283

Bernerth JB, Cole MS, Taylor EC, Walker HJ (2018) Control variables in leadership research: A qualitative and quantitative review. J Manag 44(1):131–160

Bijmolt TH, Pieters RG (2001) Meta-analysis in marketing when studies contain multiple measurements. Mark Lett 12(2):157–169

Block J, Kuckertz A (2018) Seven principles of effective replication studies: Strengthening the evidence base of management research. Manag Rev Quart 68:355–359

Borenstein M (2009) Effect sizes for continuous data. In: Cooper H, Hedges LV, Valentine JC (eds) The handbook of research synthesis and meta-analysis. Russell Sage Foundation, pp 221–235

Borenstein M, Hedges LV, Higgins JPT, Rothstein HR (2009) Introduction to meta-analysis. John Wiley, Chichester

Borenstein M, Hedges LV, Higgins JPT, Rothstein HR (2010) A basic introduction to fixed-effect and random-effects models for meta-analysis. Res Synth Methods 1(2):97–111

Borenstein M, Hedges L, Higgins J, Rothstein H (2013) Comprehensive meta-analysis (version 3). Biostat, Englewood, NJ

Borenstein M, Higgins JP (2013) Meta-analysis and subgroups. Prev Sci 14(2):134–143

Bosco FA, Steel P, Oswald FL, Uggerslev K, Field JG (2015) Cloud-based meta-analysis to bridge science and practice: Welcome to metaBUS. Person Assess Decis 1(1):3–17

Bosco FA, Uggerslev KL, Steel P (2017) MetaBUS as a vehicle for facilitating meta-analysis. Hum Resour Manag Rev 27(1):237–254

Burgard T, Bošnjak M, Studtrucker R (2021) Community-augmented meta-analyses (CAMAs) in psychology: potentials and current systems. Zeitschrift für Psychologie 229(1):15–23

Cheung MWL (2015a) Meta-analysis: A structural equation modeling approach. John Wiley & Sons, Chichester

Cheung MWL (2015b) metaSEM: An R package for meta-analysis using structural equation modeling. Front Psychol 5:1521

Cheung MWL (2019) A guide to conducting a meta-analysis with non-independent effect sizes. Neuropsychol Rev 29(4):387–396

Cheung MWL, Chan W (2005) Meta-analytic structural equation modeling: a two-stage approach. Psychol Methods 10(1):40–64

Cheung MWL, Vijayakumar R (2016) A guide to conducting a meta-analysis. Neuropsychol Rev 26(2):121–128

Combs JG, Crook TR, Rauch A (2019) Meta-analytic research in management: contemporary approaches, unresolved controversies, and rising standards. J Manag Stud 56(1):1–18. https://doi.org/10.1111/joms.12427

DeSimone JA, Köhler T, Schoen JL (2019) If it were only that easy: the use of meta-analytic research by organizational scholars. Organ Res Methods 22(4):867–891. https://doi.org/10.1177/1094428118756743

DeSimone JA, Brannick MT, O’Boyle EH, Ryu JW (2020) Recommendations for reviewing meta-analyses in organizational research. Organ Res Methods 56:455–463

Duval S, Tweedie R (2000a) Trim and fill: a simple funnel-plot–based method of testing and adjusting for publication bias in meta-analysis. Biometrics 56(2):455–463

Duval S, Tweedie R (2000b) A nonparametric “trim and fill” method of accounting for publication bias in meta-analysis. J Am Stat Assoc 95(449):89–98

Egger M, Smith GD, Schneider M, Minder C (1997) Bias in meta-analysis detected by a simple, graphical test. BMJ 315(7109):629–634

Eisend M (2017) Meta-analysis in advertising research. J Advert 46(1):21–35