Chapter 2. Research Design

Getting started.

When I teach undergraduates qualitative research methods, the final product of the course is a “research proposal” that draws on everything they have learned about qualitative research methods in an original design that addresses a particular research question. I highly recommend you think about designing your own research study as you progress through this textbook. Even if you don’t have a study in mind yet, it can be a helpful exercise. But how to start? How can you design a research study before you even know what research looks like? This chapter will serve as a brief overview of the research design process to orient you to what will be coming in later chapters. Think of it as a “skeleton” of what you will read in more detail later. Ideally, you will read this chapter both now (in sequence) and later during your reading of the remainder of the text. Do not worry if you have questions the first time you read this chapter. Many things will become clearer as the text advances and as you gain a deeper understanding of all the components of good qualitative research. This is just a preliminary map to get you on the right road.

Research Design Steps

Before you even get started, you will need to have a broad topic of interest in mind. [1] In my experience, students can confuse this broad topic with the actual research question, so it is important to clearly distinguish the two. And the place to start is the broad topic. It might be, as was the case with me, working-class college students. But what about working-class college students? What’s it like to be one? Why are there so few compared to others? How do colleges assist (or fail to assist) them? What interested me was something I could barely articulate at first and went something like this: “Why was it so difficult and lonely to be me?” And by extension, “Did others share this experience?”

Once you have a general topic, reflect on why this is important to you. Sometimes we connect with a topic and we don’t really know why. Even if you are not willing to share the real underlying reason you are interested in a topic, it is important that you know the deeper reasons that motivate you. Otherwise, it is quite possible that at some point during the research, you will find yourself turned around facing the wrong direction. I have seen it happen many times. The reason is that the research question is not the same thing as the general topic of interest, and if you don’t know the reasons for your interest, you are likely to design a study answering a research question that is beside the point—to you, at least. And this means you will be much less motivated to carry your research to completion.

Researcher Note

Why do you employ qualitative research methods in your area of study? What are the advantages of qualitative research methods for studying mentorship?

Qualitative research methods are a huge opportunity to increase access, equity, inclusion, and social justice. Qualitative research allows us to engage and examine the uniquenesses/nuances within minoritized and dominant identities and our experiences with these identities. Qualitative research allows us to explore a specific topic, and through that exploration, we can link history to experiences and look for patterns or offer up a unique phenomenon. There’s such beauty in being able to tell a particular story, and qualitative research is a great mode for that! For our work, we examined the relationships we typically use the term mentorship for but didn’t feel that was quite the right word. Qualitative research allowed us to pick apart what we did and how we engaged in our relationships, which then allowed us to more accurately describe what was unique about our mentorship relationships, which we ultimately named liberationships (McAloney and Long 2021). Qualitative research gave us the means to explore, process, and name our experiences; what a powerful tool!

How do you come up with ideas for what to study (and how to study it)? Where did you get the idea for studying mentorship?

Coming up with ideas for research, for me, is kind of like Googling a question I have, not finding enough information, and then deciding to dig a little deeper to get the answer. The idea to study mentorship actually came up in conversation with my mentorship triad. We were talking in one of our meetings about our relationship (kind of meta, huh?). We discussed how we felt that mentorship was not quite the right term for the relationships we had built. One of us asked what was different about our relationships and mentorship. This all happened when I was taking an ethnography course. During the next session of class, we were discussing auto- and duoethnography, and it hit me: let’s explore our version of mentorship, which we later went on to name liberationships (McAloney and Long 2021). The idea and questions came out of being curious and wanting to find an answer. As I continue to research, I see opportunities in questions I have about my work or during conversations that, in our search for answers, end up exposing gaps in the literature. If I can’t find the answer already out there, I can study it.

—Kim McAloney, PhD, College Student Services Administration Ecampus coordinator and instructor

When you have a better idea of why you are interested in what it is that interests you, you may be surprised to learn that the obvious approaches to the topic are not the only ones. For example, let’s say you think you are interested in preserving coastal wildlife. And as a social scientist, you are interested in policies and practices that affect the long-term viability of coastal wildlife, especially around fishing communities. It would be natural then to consider designing a research study around fishing communities and how they manage their ecosystems. But when you really think about it, you realize that what interests you the most is how people whose livelihoods depend on a particular resource act in ways that deplete that resource. Or, even deeper, you contemplate the puzzle, “How do people justify actions that damage their surroundings?” Now, there are many ways to design a study that gets at that broader question, and not all of them are about fishing communities, although that is certainly one way to go. Maybe you could design an interview-based study that includes and compares loggers, fishers, and desert golfers (those who golf in arid lands that require a great deal of wasteful irrigation). Or design a case study around one particular example where resources were completely used up by a community. Without knowing what it is you are really interested in, what motivates your interest in a surface phenomenon, you are unlikely to come up with the appropriate research design.

These first stages of research design are often the most difficult, but have patience. Taking the time to consider why you are going to go through a lot of trouble to get answers will prevent a lot of wasted energy in the future.

There are distinct reasons for pursuing particular research questions, and it is helpful to distinguish between them. First, you may be personally motivated. This is probably the most important and the most often overlooked. What is it about the social world that sparks your curiosity? What bothers you? What answers do you need in order to keep living? For me, I knew I needed to get a handle on what higher education was for before I kept going at it. I needed to understand why I felt so different from my peers and whether this whole “higher education” thing was “for the likes of me” before I could complete my degree. That is the personal motivation question. Your personal motivation might also be political in nature, in that you want to change the world in a particular way. It’s all right to acknowledge this. In fact, it is better to acknowledge it than to hide it.

There are also academic and professional motivations for a particular study. If you are an absolute beginner, these may be difficult to find. We’ll talk more about this when we discuss reviewing the literature. Simply put, you are probably not the only person in the world to have thought about this question or issue and those related to it. So how does your interest area fit into what others have studied? Perhaps there is a good study out there of fishing communities, but no one has quite asked the “justification” question. You are motivated to address this to “fill the gap” in our collective knowledge. And maybe you are really not at all sure of what interests you, but you do know that [insert your topic] interests a lot of people, so you would like to work in this area too. You want to be involved in the academic conversation. That is a professional motivation and a very important one to articulate.

Practical and strategic motivations are a third kind. Perhaps you want to encourage people to take better care of the natural resources around them. If this is also part of your motivation, you will want to design your research project in a way that might have an impact on how people behave in the future. There are many ways to do this, one of which is using qualitative research methods rather than quantitative research methods, as the findings of qualitative research are often easier to communicate to a broader audience than the results of quantitative research. You might even be able to engage the community you are studying in the collecting and analyzing of data, something taboo in quantitative research but actively embraced and encouraged by qualitative researchers. But there are other practical reasons, such as getting “done” with your research in a certain amount of time or having access (or no access) to certain information. There is nothing wrong with considering constraints and opportunities when designing your study. Or maybe one of the practical or strategic goals is about learning competence in this area so that you can demonstrate the ability to conduct interviews and focus groups with future employers. Keeping that in mind will help shape your study and prevent you from getting sidetracked using a technique that you are less invested in learning about.

STOP HERE for a moment

I recommend you write a paragraph (at least) explaining your aims and goals. Include a sentence about each of the following: personal/political goals, professional/academic goals, and practical/strategic goals. Think through how all of the goals are related and can be achieved by this particular research study. If they can’t, have a rethink. Perhaps this is not the best way to go about it.

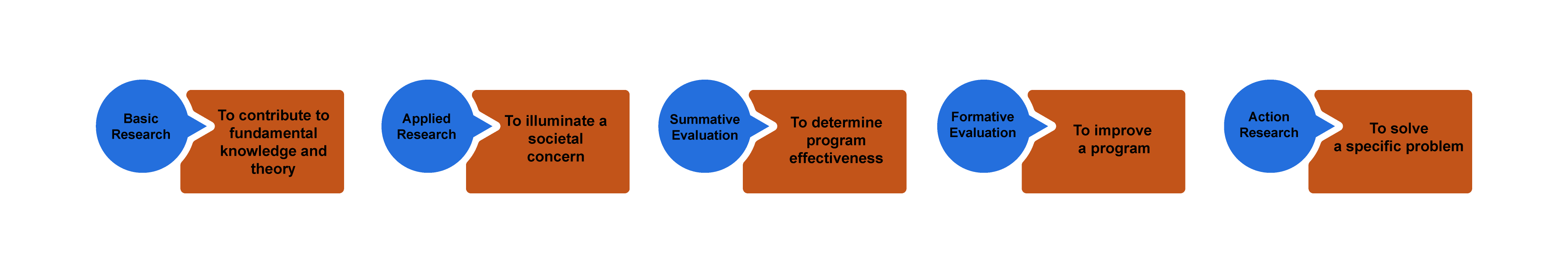

You will also want to be clear about the purpose of your study. “Wait, didn’t we just do this?” you might ask. No! Your goals are not the same as the purpose of the study, although they are related. You can think about purpose as lying on a continuum from “theory” to “action” (figure 2.1). Sometimes you are doing research to discover new knowledge about the world, while other times you are doing a study because you want to measure an impact or make a difference in the world.

Basic research involves research that is done for the sake of “pure” knowledge—that is, knowledge that, at least at this moment in time, may not have any apparent use or application. Often, and this is very important, knowledge of this kind is later found to be extremely helpful in solving problems. So one way of thinking about basic research is that it is knowledge for which no use is yet known but will probably one day prove to be extremely useful. If you are doing basic research, you do not need to argue its usefulness, as the whole point is that we just don’t know yet what this might be.

Researchers engaged in basic research want to understand how the world operates. They are interested in investigating a phenomenon to get at the nature of reality with regard to that phenomenon. The basic researcher’s purpose is to understand and explain (Patton 2002:215).

Basic research is interested in generating and testing hypotheses about how the world works. Grounded Theory is one approach to qualitative research methods that exemplifies basic research (see chapter 4). Most academic journal articles publish basic research findings. If you are working in academia (e.g., writing your dissertation), the default expectation is that you are conducting basic research.

Applied research in the social sciences is research that addresses human and social problems. Unlike basic research, the researcher has expectations that the research will help contribute to resolving a problem, if only by identifying its contours, history, or context. From my experience, most students have this as their baseline assumption about research. Why do a study if not to make things better? But this is a common mistake. Students and their committee members are often working with different default assumptions here, the former thinking about applied research as their purpose and the latter thinking about basic research. As Patton explains, “The purpose of applied research is to contribute knowledge that will help people to understand the nature of a problem in order to intervene, thereby allowing human beings to more effectively control their environment. While in basic research the source of questions is the tradition within a scholarly discipline, in applied research the source of questions is in the problems and concerns experienced by people and by policymakers” (Patton 2002:217).

Applied research is less geared toward theory in two ways. First, its questions do not derive from previous literature. For this reason, applied research studies have much more limited literature reviews than those found in basic research (although they make up for this by having much more “background” about the problem). Second, it does not generate theory in the same way as basic research does. The findings of an applied research project may not be generalizable beyond the boundaries of this particular problem or context. The findings are more limited. They are useful now but may be less useful later. This is why basic research remains the default “gold standard” of academic research.

Evaluation research is research that is designed to evaluate or test the effectiveness of specific solutions and programs addressing specific social problems. We already know the problems, and someone has already come up with solutions. There might be a program, say, for first-generation college students on your campus. Does this program work? Are first-generation students who participate in the program more likely to graduate than those who do not? These are the types of questions addressed by evaluation research. There are two types of research within this broader frame, however, one more action-oriented than the other. In summative evaluation, an overall judgment about the effectiveness of a program or policy is made. Should we continue our first-gen program? Is it a good model for other campuses? Because the purpose of such summative evaluation is to measure success and to determine whether this success is scalable (capable of being generalized beyond the specific case), quantitative data is more often used than qualitative data. In our example, we might have “outcomes” data for thousands of students, and we might run various tests to determine if the better outcomes of those in the program are statistically significant so that we can generalize the findings and recommend similar programs elsewhere. Qualitative data in the form of focus groups or interviews can then be used for illustrative purposes, providing more depth to the quantitative analyses. In contrast, formative evaluation attempts to improve a program or policy (to help “form” or shape its effectiveness). Formative evaluations rely more heavily on qualitative data: case studies, interviews, focus groups. The findings are meant not to generalize beyond the particular but to improve this program.
If you are a student seeking to improve your qualitative research skills and you do not care about generating basic research, formative evaluation studies might be an attractive option for you to pursue, as there are always local programs that need evaluation and suggestions for improvement. Again, be very clear about your purpose when talking through your research proposal with your committee.

Action research takes a further step beyond evaluation, even formative evaluation, to being part of the solution itself. This is about as far from basic research as one could get and definitely falls beyond the scope of “science,” as conventionally defined. The distinction between action and research is blurry, the research methods are often in constant flux, and the only “findings” are specific to the problem or case at hand and often are findings about the process of intervention itself. Rather than evaluate a program as a whole, action research often seeks to change and improve some particular aspect that may not be working: maybe there is not enough diversity in an organization, or maybe women’s voices are muted during meetings and the organization wonders why and would like to change this. In a further step, participatory action research, those women would become part of the research team, attempting to amplify their voices in the organization through participation in the action research. As action research employs methods that involve people in the process, focus groups are quite common.

If you are working on a thesis or dissertation, chances are your committee will expect you to be contributing to fundamental knowledge and theory (basic research). If your interests lie more toward the action end of the continuum, however, it is helpful to talk to your committee about this before you get started. Knowing your purpose in advance will help avoid misunderstandings during the later stages of the research process!

The Research Question

Once you have written your paragraph and clarified your purpose and truly know that this study is the best study for you to be doing right now, you are ready to write and refine your actual research question. Know that research questions are often moving targets in qualitative research and that they can be refined up to the very end of data collection and analysis. But you do have to have a working research question at all stages. This is your “anchor” when you get lost in the data. What are you addressing? What are you looking at and why? Your research question guides you through the thicket. It is common to have a whole host of questions about a phenomenon or case, both at the outset and throughout the study, but you should be able to pare them down to no more than two or three sentences when asked. These sentences should both clarify the intent of the research and explain why this is an important question to answer. More on refining your research question can be found in chapter 4.

Chances are, you will have already done some prior reading before coming up with your interest and your questions, but you may not have conducted a systematic literature review. This is the next crucial stage to be completed before venturing further. You don’t want to start collecting data and then realize that someone has already beaten you to the punch. A review of the literature that is already out there will let you know (1) if others have already done the study you are envisioning; (2) if others have done similar studies, which can help you out; and (3) what ideas or concepts are out there that can help you frame your study and make sense of your findings. More on literature reviews can be found in chapter 9.

In addition to reviewing the literature for similar studies to what you are proposing, it can be extremely helpful to find a study that inspires you. This may have absolutely nothing to do with the topic you are interested in but is written so beautifully or organized so interestingly or otherwise speaks to you in such a way that you want to post it somewhere to remind you of what you want to be doing. You might not understand this in the early stages—why would you find a study that has nothing to do with the one you are doing helpful? But trust me, when you are deep into analysis and writing, having an inspirational model in view can help you push through. If you are motivated to do something that might change the world, you probably have read something somewhere that inspired you. Go back to that original inspiration and read it carefully and see how they managed to convey the passion that you so appreciate.

At this stage, you are still just getting started. There are a lot of things to do before setting forth to collect data! You’ll want to consider and choose a research tradition and a set of data-collection techniques that both help you answer your research question and match all your aims and goals. For example, if you really want to help migrant workers speak for themselves, you might draw on feminist theory and participatory action research models. Chapters 3 and 4 will provide you with more information on epistemologies and approaches.

Next, you have to clarify your “units of analysis.” What is the level at which you are focusing your study? Often, the unit in qualitative research methods is individual people, or “human subjects.” But your units of analysis could just as well be organizations (colleges, hospitals) or programs or even whole nations. Think about what it is you want to be saying at the end of your study—are the insights you are hoping to make about people or about organizations or about something else entirely? A unit of analysis can even be a historical period! Every unit of analysis will call for a different kind of data collection and analysis and will produce different kinds of “findings” at the conclusion of your study. [2]

Regardless of what unit of analysis you select, you will probably have to consider the “human subjects” involved in your research. [3] Who are they? What interactions will you have with them—that is, what kind of data will you be collecting? Before answering these questions, define your population of interest and your research setting. Use your research question to help guide you.

Let’s use an example from a real study. In Geographies of Campus Inequality, Benson and Lee (2020) list three related research questions: “(1) What are the different ways that first-generation students organize their social, extracurricular, and academic activities at selective and highly selective colleges? (2) how do first-generation students sort themselves and get sorted into these different types of campus lives; and (3) how do these different patterns of campus engagement prepare first-generation students for their post-college lives?” (3).

Note that we are jumping into this a bit late, after Benson and Lee have described previous studies (the literature review) and what is known about first-generation college students and what is not known. They want to know about differences within this group, and they are interested in ones attending certain kinds of colleges because those colleges will be sites where academic and extracurricular pressures compete. That is the context for their three related research questions. What is the population of interest here? First-generation college students. What is the research setting? Selective and highly selective colleges. But a host of questions remain. Which students in the real world, which colleges? What about gender, race, and other identity markers? Will the students be asked questions? Are the students still in college, or will they be asked about what college was like for them? Will they be observed? Will they be shadowed? Will they be surveyed? Will they be asked to keep diaries of their time in college? How many students? How many colleges? For how long will they be observed?

Recommendation

Take a moment and write down suggestions for Benson and Lee before continuing on to what they actually did.

Have you written down your own suggestions? Good. Now let’s compare those with what they actually did. Benson and Lee drew on two sources of data: in-depth interviews with sixty-four first-generation students and survey data from a preexisting national survey of students at twenty-eight selective colleges. Let’s ignore the survey for our purposes here and focus on those interviews. The interviews were conducted between 2014 and 2016 at a single selective college, “Hilltop” (a pseudonym). They employed a “purposive” sampling strategy to ensure an equal number of male-identifying and female-identifying students as well as equal numbers of White, Black, and Latinx students. Each student was interviewed once. Hilltop is a selective liberal arts college in the northeast that enrolls about three thousand students.

How did your suggestions match up to those actually used by the researchers in this study? Is it possible your suggestions were too ambitious? Beginning qualitative researchers can often make that mistake. You want a research design that is both effective (it matches your question and goals) and doable. You will never be able to collect data from your entire population of interest (unless your research question is really so narrow as to be relevant to very few people!), so you will need to come up with a good sample. Define the criteria for this sample, as Benson and Lee did when deciding to interview an equal number of students by gender and race categories. Define the criteria for your sample setting too. Hilltop is typical for selective colleges. That was a research choice made by Benson and Lee. For more on sampling and sampling choices, see chapter 5.

Benson and Lee chose to employ interviews. If you also would like to include interviews, you have to think about what will be asked in them. Most interview-based research involves an interview guide, a set of questions or question areas that will be asked of each participant. The research question helps you create a relevant interview guide. You want to ask questions whose answers will provide insight into your research question. Again, your research question is the anchor you will continually come back to as you plan for and conduct your study. It may be that once you begin interviewing, you find that people are telling you something totally unexpected, and this makes you rethink your research question. That is fine. Then you have a new anchor. But you always have an anchor. More on interviewing can be found in chapter 11.

Let’s imagine Benson and Lee also observed college students as they went about doing the things college students do, both in the classroom and in the clubs and social activities in which they participate. They would have needed a plan for this. Would they sit in on classes? Which ones and how many? Would they attend club meetings and sports events? Which ones and how many? Would they participate themselves? How would they record their observations? More on observation techniques can be found in both chapters 13 and 14.

At this point, the design is almost complete. You know why you are doing this study, you have a clear research question to guide you, you have identified your population of interest and research setting, and you have a reasonable sample of each. You also have put together a plan for data collection, which might include drafting an interview guide or making plans for observations. And so you know exactly what you will be doing for the next several months (or years!). To put the project into action, there are a few more things necessary before actually going into the field.

First, you will need to make sure you have any necessary supplies, including recording technology. These days, many researchers use their phones to record interviews. Second, you will need to draft a few documents for your participants. These include informed consent forms and recruiting materials, such as posters or email texts, that explain what this study is in clear language. Third, you will draft a research protocol to submit to your institutional review board (IRB); this research protocol will include the interview guide (if you are using one), the consent form template, and all examples of recruiting material. Depending on your institution and the details of your study design, it may take weeks or even, in some unfortunate cases, months before you secure IRB approval. Make sure you plan on this time in your project timeline. While you wait, you can continue to review the literature and possibly begin drafting a section on the literature review for your eventual presentation/publication. More on IRB procedures can be found in chapter 8 and more general ethical considerations in chapter 7.

Once you have approval, you can begin!

Research Design Checklist

Before data collection begins, do the following:

- Write a paragraph explaining your aims and goals (personal/political, practical/strategic, professional/academic).

- Define your research question; write two to three sentences that clarify the intent of the research and why this is an important question to answer.

- Review the literature for similar studies that address your research question or similar research questions; think laterally about some literature that might be helpful or illuminating but is not exactly about the same topic.

- Find a written study that inspires you—it may or may not be on the research question you have chosen.

- Consider and choose a research tradition and set of data-collection techniques that (1) help answer your research question and (2) match your aims and goals.

- Define your population of interest and your research setting.

- Define the criteria for your sample (How many? Why these? How will you find them, gain access, and acquire consent?).

- If you are conducting interviews, draft an interview guide.

- If you are making observations, create a plan for observations (sites, times, recording, access).

- Acquire any necessary technology (recording devices/software).

- Draft consent forms that clearly identify the research focus and selection process.

- Create recruiting materials (posters, email, texts).

- Apply for IRB approval (proposal plus consent form plus recruiting materials).

- Block out time for collecting data.

[1] At the end of the chapter, you will find a "Research Design Checklist" that summarizes the main recommendations made here.

[2] For example, if your focus is society and culture, you might collect data through observation or a case study. If your focus is individual lived experience, you are probably going to be interviewing some people. And if your focus is language and communication, you will probably be analyzing text (written or visual) (Marshall and Rossman 2016:16).

[3] You may not have any "live" human subjects. There are qualitative research methods that do not require interactions with live human beings; see chapter 16, "Archival and Historical Sources." But for the most part, you are probably reading this textbook because you are interested in doing research with people. The rest of the chapter will assume this is the case.

One of the primary methodological traditions of inquiry in qualitative research, ethnography is the study of a group or group culture, largely through observational fieldwork supplemented by interviews. It is a form of fieldwork that may include participant-observation data collection. See chapter 14 for a discussion of deep ethnography.

Case study: A methodological tradition of inquiry and research design that focuses on an individual case (e.g., setting, institution, or sometimes an individual) in order to explore its complexity, history, and interactive parts. As an approach, it is particularly useful for obtaining a deep appreciation of an issue, event, or phenomenon of interest in its particular context.

The controlling force in research; can be understood as lying on a continuum from basic research (knowledge production) to action research (effecting change).

In its most basic sense, a theory is a story we tell about how the world works that can be tested with empirical evidence. In qualitative research, we use the term in a variety of ways, many of which are different from how it is used by quantitative researchers. Although some qualitative research can be described as “testing theory,” it is more common to “build theory” from the data using inductive reasoning, as done in Grounded Theory. There are so-called “grand theories” that seek to integrate a whole series of findings and stories into an overarching paradigm about how the world works, and much smaller theories or concepts about particular processes and relationships. Theory can even be used to explain particular methodological perspectives or approaches, as in Institutional Ethnography, which is both a way of doing research and a theory about how the world works.

Research that is interested in generating and testing hypotheses about how the world works.

A methodological tradition of inquiry and approach to analyzing qualitative data in which theories emerge from a rigorous and systematic process of induction. This approach was pioneered by the sociologists Glaser and Strauss (1967). The elements of theory generated from comparative analysis of data are, first, conceptual categories and their properties and, second, hypotheses or generalized relations among the categories and their properties – “The constant comparing of many groups draws the [researcher’s] attention to their many similarities and differences. Considering these leads [the researcher] to generate abstract categories and their properties, which, since they emerge from the data, will clearly be important to a theory explaining the kind of behavior under observation.” (36).

An approach to research that is “multimethod in focus, involving an interpretative, naturalistic approach to its subject matter. This means that qualitative researchers study things in their natural settings, attempting to make sense of, or interpret, phenomena in terms of the meanings people bring to them. Qualitative research involves the studied use and collection of a variety of empirical materials – case study, personal experience, introspective, life story, interview, observational, historical, interactional, and visual texts – that describe routine and problematic moments and meanings in individuals’ lives” (Denzin and Lincoln 2005:2). Contrast with quantitative research.

Research that contributes knowledge that will help people to understand the nature of a problem in order to intervene, thereby allowing human beings to more effectively control their environment.

Research that is designed to evaluate or test the effectiveness of specific solutions and programs addressing specific social problems. There are two kinds: summative and formative.

Research in which an overall judgment about the effectiveness of a program or policy is made, often for the purpose of generalizing to other cases or programs. Generally uses qualitative research as a supplement to primary quantitative data analyses. Contrast formative evaluation research.

Research designed to improve a program or policy (to help “form” or shape its effectiveness); relies heavily on qualitative research methods. Contrast summative evaluation research.

Research carried out at a particular organizational or community site with the intention of effecting change; often involves research subjects as participants of the study. See also participatory action research.

Research in which both researchers and participants work together to understand a problematic situation and change it for the better.

The level of the focus of analysis (e.g., individual people, organizations, programs, neighborhoods).

The large group of interest to the researcher. Although it will likely be impossible to design a study that incorporates or reaches all members of the population of interest, this should be clearly defined at the outset of a study so that a reasonable sample of the population can be taken. For example, if one is studying working-class college students, the sample may include twenty such students attending a particular college, while the population is “working-class college students.” In quantitative research, clearly defining the general population of interest is a necessary step in generalizing results from a sample. In qualitative research, defining the population is conceptually important for clarity.

A fictional name assigned to give anonymity to a person, group, or place. Pseudonyms are important ways of protecting the identity of research participants while still providing a “human element” in the presentation of qualitative data. There are ethical considerations to be made in selecting pseudonyms; some researchers allow research participants to choose their own.

A requirement for research involving human participants; the documentation of informed consent. In some cases, oral consent or assent may be sufficient, but the default standard is a single-page easy-to-understand form that both the researcher and the participant sign and date. Under federal guidelines, all researchers "shall seek such consent only under circumstances that provide the prospective subject or the representative sufficient opportunity to consider whether or not to participate and that minimize the possibility of coercion or undue influence. The information that is given to the subject or the representative shall be in language understandable to the subject or the representative. No informed consent, whether oral or written, may include any exculpatory language through which the subject or the representative is made to waive or appear to waive any of the subject's rights or releases or appears to release the investigator, the sponsor, the institution, or its agents from liability for negligence" (21 CFR 50.20). Your IRB office will be able to provide a template for use in your study.

An administrative body established to protect the rights and welfare of human research subjects recruited to participate in research activities conducted under the auspices of the institution with which it is affiliated. The IRB is charged with the responsibility of reviewing all research involving human participants. The IRB is concerned with protecting the welfare, rights, and privacy of human subjects. The IRB has the authority to approve, disapprove, monitor, and require modifications in all research activities that fall within its jurisdiction as specified by both the federal regulations and institutional policy.

Introduction to Qualitative Research Methods Copyright © 2023 by Allison Hurst is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License , except where otherwise noted.

How to use and assess qualitative research methods

Loraine Busetto

1 Department of Neurology, Heidelberg University Hospital, Im Neuenheimer Feld 400, 69120 Heidelberg, Germany

Wolfgang Wick

2 Clinical Cooperation Unit Neuro-Oncology, German Cancer Research Center, Heidelberg, Germany

Christoph Gumbinger

Associated data.

Not applicable.

This paper aims to provide an overview of the use and assessment of qualitative research methods in the health sciences. Qualitative research can be defined as the study of the nature of phenomena and is especially appropriate for answering questions of why something is (not) observed, assessing complex multi-component interventions, and focussing on intervention improvement. The most common methods of data collection are document study, (non-) participant observations, semi-structured interviews and focus groups. For data analysis, field-notes and audio-recordings are transcribed into protocols and transcripts, and coded using qualitative data management software. Criteria such as checklists, reflexivity, sampling strategies, piloting, co-coding, member-checking and stakeholder involvement can be used to enhance and assess the quality of the research conducted. Using qualitative in addition to quantitative designs will equip us with better tools to address a greater range of research problems, and to fill in blind spots in current neurological research and practice.

The aim of this paper is to provide an overview of qualitative research methods, including hands-on information on how they can be used, reported and assessed. This article is intended for beginning qualitative researchers in the health sciences as well as experienced quantitative researchers who wish to broaden their understanding of qualitative research.

What is qualitative research?

Qualitative research is defined as “the study of the nature of phenomena”, including “their quality, different manifestations, the context in which they appear or the perspectives from which they can be perceived”, but excluding “their range, frequency and place in an objectively determined chain of cause and effect” [ 1 ]. This formal definition can be complemented with a more pragmatic rule of thumb: qualitative research generally includes data in the form of words rather than numbers [ 2 ].

Why conduct qualitative research?

Because some research questions cannot be answered using (only) quantitative methods. For example, one Australian study addressed the issue of why patients from Aboriginal communities often present late or not at all to specialist services offered by tertiary care hospitals. Using qualitative interviews with patients and staff, it found one of the most significant access barriers to be transportation problems, including some towns and communities simply not having a bus service to the hospital [ 3 ]. A quantitative study could have measured the number of patients over time or even looked at possible explanatory factors – but only those previously known or suspected to be of relevance. To discover reasons for observed patterns, especially the invisible or surprising ones, qualitative designs are needed.

While qualitative research is common in other fields, it is still relatively underrepresented in health services research. The latter field is more traditionally rooted in the evidence-based-medicine paradigm, as seen in "research that involves testing the effectiveness of various strategies to achieve changes in clinical practice, preferably applying randomised controlled trial study designs (...)" [ 4 ]. This focus on quantitative research and specifically randomised controlled trials (RCT) is visible in the idea of a hierarchy of research evidence which assumes that some research designs are objectively better than others, and that choosing a "lesser" design is only acceptable when the better ones are not practically or ethically feasible [ 5 , 6 ]. Others, however, argue that an objective hierarchy does not exist, and that, instead, the research design and methods should be chosen to fit the specific research question at hand – "questions before methods" [ 2 , 7 – 9 ]. This means that even when an RCT is possible, some research problems require a different design that is better suited to addressing them. Arguing in JAMA, Berwick uses the example of rapid response teams in hospitals, which he describes as "a complex, multicomponent intervention – essentially a process of social change" susceptible to a range of different context factors including leadership or organisation history. According to him, "[in] such complex terrain, the RCT is an impoverished way to learn. Critics who use it as a truth standard in this context are incorrect" [ 8 ]. Instead of limiting oneself to RCTs, Berwick recommends embracing a wider range of methods, including qualitative ones, which for "these specific applications, (...) are not compromises in learning how to improve; they are superior" [ 8 ].

Research problems that can be approached particularly well using qualitative methods include assessing complex multi-component interventions or systems (of change), addressing questions beyond “what works”, towards “what works for whom when, how and why”, and focussing on intervention improvement rather than accreditation [ 7 , 9 – 12 ]. Using qualitative methods can also help shed light on the “softer” side of medical treatment. For example, while quantitative trials can measure the costs and benefits of neuro-oncological treatment in terms of survival rates or adverse effects, qualitative research can help provide a better understanding of patient or caregiver stress, visibility of illness or out-of-pocket expenses.

How to conduct qualitative research?

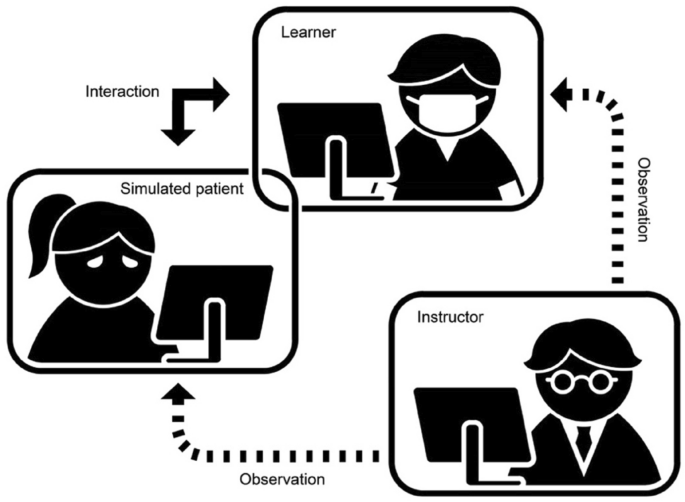

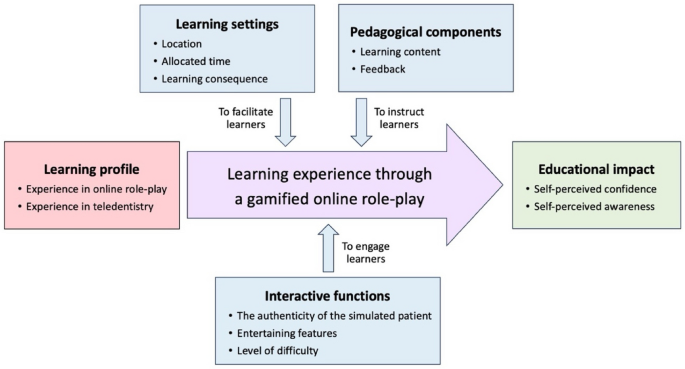

Given that qualitative research is characterised by flexibility, openness and responsivity to context, the steps of data collection and analysis are not as separate and consecutive as they tend to be in quantitative research [ 13 , 14 ]. As Fossey puts it: “sampling, data collection, analysis and interpretation are related to each other in a cyclical (iterative) manner, rather than following one after another in a stepwise approach” [ 15 ]. The researcher can make educated decisions with regard to the choice of methods, how they are implemented, and to which and how many units they are applied [ 13 ]. As shown in Fig. 1, this can involve several back-and-forth steps between data collection and analysis where new insights and experiences can lead to adaptation and expansion of the original plan. Some insights may also necessitate a revision of the research question and/or the research design as a whole. The process ends when saturation is achieved, i.e. when no relevant new information can be found (see also below: sampling and saturation). For reasons of transparency, it is essential for all decisions as well as the underlying reasoning to be well-documented.

Iterative research process

While it is not always explicitly addressed, qualitative methods reflect a different underlying research paradigm than quantitative research (e.g. constructivism or interpretivism as opposed to positivism). The choice of methods can be based on the respective underlying substantive theory or theoretical framework used by the researcher [ 2 ].

Data collection

The methods of qualitative data collection most commonly used in health research are document study, observations, semi-structured interviews and focus groups [ 1 , 14 , 16 , 17 ].

Document study

Document study (also called document analysis) refers to the review by the researcher of written materials [ 14 ]. These can include personal and non-personal documents such as archives, annual reports, guidelines, policy documents, diaries or letters.

Observations

Observations are particularly useful to gain insights into a certain setting and actual behaviour – as opposed to reported behaviour or opinions [ 13 ]. Qualitative observations can be either participant or non-participant in nature. In participant observations, the observer is part of the observed setting, for example a nurse working in an intensive care unit [ 18 ]. In non-participant observations, the observer is “on the outside looking in”, i.e. present in but not part of the situation, trying not to influence the setting by their presence. Observations can be planned (e.g. for 3 h during the day or night shift) or ad hoc (e.g. as soon as a stroke patient arrives at the emergency room). During the observation, the observer takes notes on everything or certain pre-determined parts of what is happening around them, for example focusing on physician-patient interactions or communication between different professional groups. Written notes can be taken during or after the observations, depending on feasibility (which is usually lower during participant observations) and acceptability (e.g. when the observer is perceived to be judging the observed). Afterwards, these field notes are transcribed into observation protocols. If more than one observer was involved, field notes are taken independently, but notes can be consolidated into one protocol after discussions. Advantages of conducting observations include minimising the distance between the researcher and the researched, the potential discovery of topics that the researcher did not realise were relevant and gaining deeper insights into the real-world dimensions of the research problem at hand [ 18 ].

Semi-structured interviews

Hijmans & Kuyper describe qualitative interviews as “an exchange with an informal character, a conversation with a goal” [ 19 ]. Interviews are used to gain insights into a person’s subjective experiences, opinions and motivations – as opposed to facts or behaviours [ 13 ]. Interviews can be distinguished by the degree to which they are structured (i.e. a questionnaire), open (e.g. free conversation or autobiographical interviews) or semi-structured [ 2 , 13 ]. Semi-structured interviews are characterised by open-ended questions and the use of an interview guide (or topic guide/list) in which the broad areas of interest, sometimes including sub-questions, are defined [ 19 ]. The pre-defined topics in the interview guide can be derived from the literature, previous research or a preliminary method of data collection, e.g. document study or observations. The topic list is usually adapted and improved at the start of the data collection process as the interviewer learns more about the field [ 20 ]. Across interviews, the focus on the different (blocks of) questions may differ and some questions may be skipped altogether (e.g. if the interviewee is not able or willing to answer the questions or because of concerns about the total length of the interview) [ 20 ]. Qualitative interviews are usually not conducted in written format as this impedes the interactive component of the method [ 20 ]. In comparison to written surveys, qualitative interviews have the advantage of being interactive and allowing for unexpected topics to emerge and to be taken up by the researcher. This can also help overcome a provider- or researcher-centred bias often found in written surveys, which, by nature, can only measure what is already known or expected to be of relevance to the researcher. Interviews can be audio- or video-taped, but sometimes it is only feasible or acceptable for the interviewer to take written notes [ 14 , 16 , 20 ].

Focus groups

Focus groups are group interviews to explore participants’ expertise and experiences, including explorations of how and why people behave in certain ways [ 1 ]. Focus groups usually consist of 6–8 people and are led by an experienced moderator following a topic guide or “script” [ 21 ]. They can involve an observer who takes note of the non-verbal aspects of the situation, possibly using an observation guide [ 21 ]. Depending on researchers’ and participants’ preferences, the discussions can be audio- or video-taped and transcribed afterwards [ 21 ]. Focus groups are useful for bringing together homogeneous (to a lesser extent heterogeneous) groups of participants with relevant expertise and experience on a given topic on which they can share detailed information [ 21 ]. Focus groups are a relatively easy, fast and inexpensive method to gain access to information on interactions in a given group, i.e. “the sharing and comparing” among participants [ 21 ]. Disadvantages include less control over the process and a lesser extent to which each individual may participate. Moreover, focus group moderators need experience, as do those tasked with the analysis of the resulting data. Focus groups can be less appropriate for discussing sensitive topics that participants might be reluctant to disclose in a group setting [ 13 ]. Moreover, attention must be paid to the emergence of “groupthink” as well as possible power dynamics within the group, e.g. when patients are awed or intimidated by health professionals.

Choosing the “right” method

As explained above, the school of thought underlying qualitative research assumes no objective hierarchy of evidence and methods. This means that each choice of single or combined methods has to be based on the research question that needs to be answered and a critical assessment with regard to whether or to what extent the chosen method can accomplish this – i.e. the “fit” between question and method [ 14 ]. It is necessary for these decisions to be documented when they are being made, and to be critically discussed when reporting methods and results.

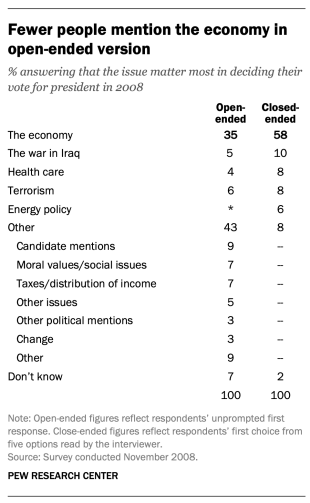

Let us assume that our research aim is to examine the (clinical) processes around acute endovascular treatment (EVT), from the patient’s arrival at the emergency room to recanalization, with the aim to identify possible causes for delay and/or other causes for sub-optimal treatment outcome. As a first step, we could conduct a document study of the relevant standard operating procedures (SOPs) for this phase of care – are they up-to-date and in line with current guidelines? Do they contain any mistakes, irregularities or uncertainties that could cause delays or other problems? Regardless of the answers to these questions, the results have to be interpreted based on what they are: a written outline of what care processes in this hospital should look like. If we want to know what they actually look like in practice, we can conduct observations of the processes described in the SOPs. These results can (and should) be analysed in themselves, but also in comparison to the results of the document analysis, especially as regards relevant discrepancies. Do the SOPs outline specific tests for which no equipment can be observed or tasks to be performed by specialized nurses who are not present during the observation? It might also be possible that the written SOP is outdated, but the actual care provided is in line with current best practice. In order to find out why these discrepancies exist, it can be useful to conduct interviews. Are the physicians simply not aware of the SOPs (because their existence is limited to the hospital’s intranet) or do they actively disagree with them or does the infrastructure make it impossible to provide the care as described? Another rationale for adding interviews is that some situations (or all of their possible variations for different patient groups or the day, night or weekend shift) cannot practically or ethically be observed. 
In this case, it is possible to ask those involved to report on their actions – being aware that this is not the same as the actual observation. A senior physician’s or hospital manager’s description of certain situations might differ from a nurse’s or junior physician’s one, maybe because they intentionally misrepresent facts or maybe because different aspects of the process are visible or important to them. In some cases, it can also be relevant to consider to whom the interviewee is disclosing this information – someone they trust, someone they are otherwise not connected to, or someone they suspect or are aware of being in a potentially “dangerous” power relationship to them. Lastly, a focus group could be conducted with representatives of the relevant professional groups to explore how and why exactly they provide care around EVT. The discussion might reveal discrepancies (between SOPs and actual care or between different physicians) and motivations to the researchers as well as to the focus group members that they might not have been aware of themselves. For the focus group to deliver relevant information, attention has to be paid to its composition and conduct, for example, to make sure that all participants feel safe to disclose sensitive or potentially problematic information or that the discussion is not dominated by (senior) physicians only. The resulting combination of data collection methods is shown in Fig. 2 .

Possible combination of data collection methods

Attributions for icons: “Book” by Serhii Smirnov, “Interview” by Adrien Coquet, FR, “Magnifying Glass” by anggun, ID, “Business communication” by Vectors Market; all from the Noun Project

The combination of multiple data sources as described for this example can be referred to as “triangulation”, in which multiple measurements are carried out from different angles to achieve a more comprehensive understanding of the phenomenon under study [ 22 , 23 ].

Data analysis

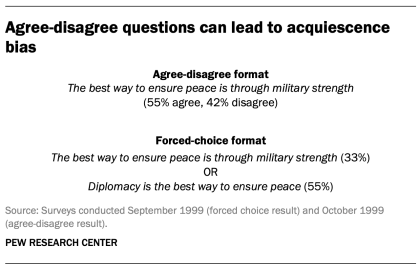

To analyse the data collected through observations, interviews and focus groups, these need to be transcribed into protocols and transcripts (see Fig. 3). Interviews and focus groups can be transcribed verbatim, with or without annotations for behaviour (e.g. laughing, crying, pausing) and with or without phonetic transcription of dialects and filler words, depending on what is expected or known to be relevant for the analysis. In the next step, the protocols and transcripts are coded, that is, marked (or tagged, labelled) with one or more short descriptors of the content of a sentence or paragraph [ 2 , 15 , 23 ]. Jansen describes coding as “connecting the raw data with ‘theoretical’ terms” [ 20 ]. In a more practical sense, coding makes raw data sortable. This makes it possible to extract and examine all segments describing, say, a tele-neurology consultation from multiple data sources (e.g. SOPs, emergency room observations, staff and patient interviews). In a process of synthesis and abstraction, the codes are then grouped, summarised and/or categorised [ 15 , 20 ]. The end product of the coding or analysis process is a descriptive theory of the behavioural pattern under investigation [ 20 ]. The coding process is performed using qualitative data management software, the most common ones being NVivo, MAXQDA and ATLAS.ti. It should be noted that these are data management tools which support the analysis performed by the researcher(s) [ 14 ].
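The remark that coding makes raw data sortable can be illustrated with a small sketch. The Python example below is only an illustration under stated assumptions, not a description of how any qualitative data management software works: the `Segment` class, the `index_by_code` helper, the excerpts and the code labels are all hypothetical and invented for this example. It shows how segments tagged with short descriptors can be retrieved by code across different data sources.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One coded excerpt (hypothetical structure, for illustration only)."""
    source: str   # where the excerpt comes from, e.g. "SOP" or "interview"
    text: str     # the excerpt itself
    codes: tuple  # short descriptors assigned by the researcher


# Invented excerpts and code labels; not data from any real study.
segments = [
    Segment("SOP", "Tele-neurology consult is requested on arrival.",
            ("tele-neurology",)),
    Segment("observation", "Consult started late; the camera was offline.",
            ("tele-neurology", "delay")),
    Segment("interview", "We often wait for the transport team.",
            ("delay", "transport")),
]


def index_by_code(coded_segments):
    """Group segments under every code they carry, preserving input order."""
    index = defaultdict(list)
    for seg in coded_segments:
        for code in seg.codes:
            index[code].append(seg)
    return dict(index)


index = index_by_code(segments)

# Pull every excerpt about tele-neurology, regardless of its data source.
for seg in index["tele-neurology"]:
    print(f"[{seg.source}] {seg.text}")
```

A segment can carry several codes, so the same excerpt may appear under more than one heading. Grouping, summarising and categorising the codes remains an interpretive task for the researcher; the index only makes the material easier to retrieve.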

From data collection to data analysis

Attributions for icons: see Fig. 2, also “Speech to text” by Trevor Dsouza, “Field Notes” by Mike O’Brien, US, “Voice Record” by ProSymbols, US, “Inspection” by Made, AU, and “Cloud” by Graphic Tigers; all from the Noun Project

How to report qualitative research?

Protocols of qualitative research can be published separately and in advance of the study results. However, the aim is not the same as in RCT protocols, i.e. to pre-define and set in stone the research questions and primary or secondary endpoints. Rather, it is a way to describe the research methods in detail, which might not be possible in the results paper given journals’ word limits. Qualitative research papers are usually longer than their quantitative counterparts to allow for deep understanding and so-called “thick description”. In the methods section, the focus is on transparency of the methods used, including why, how and by whom they were implemented in the specific study setting, so as to enable a discussion of whether and how this may have influenced data collection, analysis and interpretation. The results section usually starts with a paragraph outlining the main findings, followed by more detailed descriptions of, for example, the commonalities, discrepancies or exceptions per category [ 20 ]. Here it is important to support main findings by relevant quotations, which may add information, context, emphasis or real-life examples [ 20 , 23 ]. It is subject to debate in the field whether it is relevant to state the exact number or percentage of respondents supporting a certain statement (e.g. “Five interviewees expressed negative feelings towards XYZ”) [ 21 ].

How to combine qualitative with quantitative research?

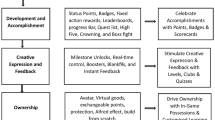

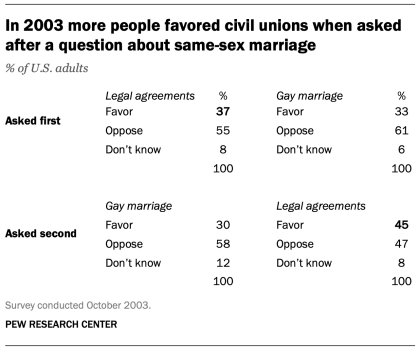

Qualitative methods can be combined with other methods in multi- or mixed methods designs, which “[employ] two or more different methods […] within the same study or research program rather than confining the research to one single method” [ 24 ]. Reasons for combining methods can be diverse, including triangulation for corroboration of findings, complementarity for illustration and clarification of results, expansion to extend the breadth and range of the study, explanation of (unexpected) results generated with one method with the help of another, or offsetting the weakness of one method with the strength of another [ 1 , 17 , 24 – 26 ]. The resulting designs can be classified according to when, why and how the different quantitative and/or qualitative data strands are combined. The three most common types of mixed method designs are the convergent parallel design, the explanatory sequential design and the exploratory sequential design. The designs with examples are shown in Fig. 4.

Three common mixed methods designs

In the convergent parallel design, a qualitative study is conducted in parallel to and independently of a quantitative study, and the results of both studies are compared and combined at the stage of interpretation of results. Using the above example of EVT provision, this could entail setting up a quantitative EVT registry to measure process times and patient outcomes in parallel to conducting the qualitative research outlined above, and then comparing results. Amongst other things, this would make it possible to assess whether interview respondents’ subjective impressions of patients receiving good care match modified Rankin Scores at follow-up, or whether observed delays in care provision are exceptions or the rule when compared to door-to-needle times as documented in the registry. In the explanatory sequential design, a quantitative study is carried out first, followed by a qualitative study to help explain the results from the quantitative study. This would be an appropriate design if the registry alone had revealed relevant delays in door-to-needle times and the qualitative study were used to understand where and why these occurred, and how they could be reduced. In the exploratory sequential design, the qualitative study is carried out first and its results help inform and build the quantitative study in the next step [ 26 ]. If the qualitative study around EVT provision had shown a high level of dissatisfaction among the staff members involved, a quantitative questionnaire investigating staff satisfaction could be set up in the next step, informed by the qualitative study on which topics dissatisfaction had been expressed. Amongst other things, the questionnaire design would make it possible to widen the reach of the research to more respondents from different (types of) hospitals, regions, countries or settings, and to conduct sub-group analyses for different professional groups.

How to assess qualitative research?

A variety of assessment criteria and lists have been developed for qualitative research, ranging in their focus and comprehensiveness [ 14 , 17 , 27 ]. However, none of these has been elevated to the “gold standard” in the field. In the following, we therefore focus on a set of commonly used assessment criteria that, from a practical standpoint, a researcher can look for when assessing a qualitative research report or paper.

Assessors should check the authors’ use of and adherence to the relevant reporting checklists (e.g. Standards for Reporting Qualitative Research (SRQR)) to make sure all items that are relevant for this type of research are addressed [ 23 , 28 ]. Discussions of quantitative measures in addition to or instead of these qualitative measures can be a sign of lower quality of the research (paper). Providing and adhering to a checklist for qualitative research contributes to an important quality criterion for qualitative research, namely transparency [ 15 , 17 , 23 ].

Reflexivity

While methodological transparency and complete reporting are relevant for all types of research, some additional criteria must be taken into account for qualitative research. These include what is called reflexivity, i.e. sensitivity to the relationship between the researcher and the researched, including how contact was established and maintained, or the background and experience of the researcher(s) involved in data collection and analysis. Depending on the research question and population to be researched this can be limited to professional experience, but it may also include gender, age or ethnicity [ 17 , 27 ]. These details are relevant because in qualitative research, as opposed to quantitative research, the researcher as a person cannot be isolated from the research process [ 23 ]. It may influence the conversation when an interviewed patient speaks to an interviewer who is a physician, or when an interviewee is asked to discuss a gynaecological procedure with a male interviewer, and therefore the reader must be made aware of these details [ 19 ].

Sampling and saturation

The aim of qualitative sampling is for all variants of the objects of observation that are deemed relevant for the study to be present in the sample “to see the issue and its meanings from as many angles as possible” [ 1 , 16 , 19 , 20 , 27 ], and to ensure “information-richness” [ 15 ]. An iterative sampling approach is advised, in which data collection (e.g. five interviews) is followed by data analysis, followed by more data collection to find variants that are lacking in the current sample. This process continues until no new (relevant) information can be found and further sampling becomes redundant, a point called saturation [ 1 , 15 ]. In other words: qualitative data collection finds its end point not a priori, but when the research team determines that saturation has been reached [ 29 , 30 ].

This is also the reason why most qualitative studies use deliberate instead of random sampling strategies. This is generally referred to as “purposive sampling”, in which researchers pre-define which types of participants or cases they need to include so as to cover all variations that are expected to be of relevance, based on the literature, previous experience or theory (i.e. theoretical sampling) [ 14 , 20 ]. Other types of purposive sampling include (but are not limited to) maximum variation sampling, critical case sampling or extreme or deviant case sampling [ 2 ]. In the above EVT example, a purposive sample could include all relevant professional groups and/or all relevant stakeholders (patients, relatives) and/or all relevant times of observation (day, night and weekend shift).

Assessors of qualitative research should check whether the considerations underlying the sampling strategy were sound and whether or how researchers tried to adapt and improve their strategies in stepwise or cyclical approaches between data collection and analysis to achieve saturation [ 14 ].
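The iterative sampling logic described in this section can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `rounds_until_saturation`, the `patience` parameter and the example codes are all hypothetical, and "no new codes in a round" is a deliberately crude stand-in for what is, in practice, a judgement made by the research team.

```python
from __future__ import annotations

from typing import Iterable


def rounds_until_saturation(
    rounds: Iterable[set[str]], patience: int = 1
) -> tuple[int, set[str]]:
    """Walk through rounds of data collection and analysis.

    Each round is represented by the set of codes found in that round.
    Stop once `patience` consecutive rounds contribute no new codes.
    Returns (number of rounds used, all codes seen so far).
    """
    seen: set[str] = set()
    quiet_rounds = 0
    used = 0
    for codes in rounds:
        used += 1
        new_codes = codes - seen          # variants not yet in the sample
        seen |= codes
        quiet_rounds = 0 if new_codes else quiet_rounds + 1
        if quiet_rounds >= patience:      # further sampling looks redundant
            break
    return used, seen


# Hypothetical codes from four rounds of interviews (assumed data).
batches = [
    {"delay_at_triage", "staff_shortage"},
    {"staff_shortage", "unclear_handover"},
    {"delay_at_triage", "unclear_handover"},
    {"night_shift_gap"},
]

used, seen = rounds_until_saturation(batches)
print(used, sorted(seen))
```

With `patience=1` the loop stops after the third round, so the fourth round (which would have added a new code) is never collected. This is one reason why a mechanical stopping rule should not replace the team's reasoned decision; a larger `patience` value makes the rule more conservative.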

Good qualitative research is iterative in nature, i.e. it goes back and forth between data collection and analysis, revising and improving the approach where necessary. One example of this is the use of pilot interviews, where different aspects of the interview (especially the interview guide, but also, for example, the site of the interview or whether the interview can be audio-recorded) are tested with a small number of respondents, evaluated and revised [ 19 ]. In doing so, the interviewer learns which wording or types of questions work best, or what the best length is for an interview with patients who have trouble concentrating for an extended time. Of course, the same reasoning applies to observations or focus groups, which can also be piloted.

Ideally, coding should be performed by at least two researchers, especially at the beginning of the coding process when a common approach must be defined, including the establishment of a useful coding list (or tree), and when a common meaning of individual codes must be established [ 23 ]. An initial sub-set or all transcripts can be coded independently by the coders and then compared and consolidated after regular discussions in the research team. This is to make sure that codes are applied consistently to the research data.
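As a rough illustration of the comparison step, the following Python sketch lists the transcript segments on which two coders applied different codes, so that these can be brought to the team discussion. The function name `coding_disagreements`, the segment identifiers and the codes are hypothetical; real projects would typically export this information from their qualitative analysis software.

```python
from __future__ import annotations


def coding_disagreements(
    coder_a: dict[str, set[str]], coder_b: dict[str, set[str]]
) -> dict[str, tuple[set[str], set[str]]]:
    """Return, per segment, the codes applied only by coder A and only by coder B.

    Segments on which both coders applied exactly the same codes are omitted.
    """
    differences: dict[str, tuple[set[str], set[str]]] = {}
    for segment in sorted(set(coder_a) | set(coder_b)):
        a = coder_a.get(segment, set())
        b = coder_b.get(segment, set())
        if a != b:
            differences[segment] = (a - b, b - a)
    return differences


# Hypothetical independent coding of three transcript segments.
coder_a = {
    "int01_seg1": {"trust"},
    "int01_seg2": {"delay", "workload"},
    "int02_seg1": {"handover"},
}
coder_b = {
    "int01_seg1": {"trust"},
    "int01_seg2": {"delay"},
    "int02_seg1": {"communication"},
}

for segment, (only_a, only_b) in coding_disagreements(coder_a, coder_b).items():
    print(segment, "| only A:", sorted(only_a), "| only B:", sorted(only_b))
```

The output is a discussion aid rather than a score: each listed segment is a prompt to clarify what a code means and whether the coding list needs to be revised.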

Member checking

Member checking, also called respondent validation, refers to the practice of checking back with study respondents to see if the research is in line with their views [ 14 , 27 ]. This can happen after data collection or analysis or when first results are available [ 23 ]. For example, interviewees can be provided with (summaries of) their transcripts and asked whether they believe this to be a complete representation of their views or whether they would like to clarify or elaborate on their responses [ 17 ]. Respondents’ feedback on these issues then becomes part of the data collection and analysis [ 27 ].

Stakeholder involvement

In those niches where qualitative approaches have been able to evolve and grow, a new trend has seen the inclusion of patients and their representatives not only as study participants (i.e. “members”, see above) but as consultants to and active participants in the broader research process [ 31 – 33 ]. The underlying assumption is that patients and other stakeholders hold unique perspectives and experiences that add value beyond their own single story, making the research more relevant and beneficial to researchers, study participants and (future) patients alike [ 34 , 35 ]. Using the example of patients on or nearing dialysis, a recent scoping review found that 80% of clinical research did not address the top 10 research priorities identified by patients and caregivers [ 32 , 36 ]. In this sense, the involvement of the relevant stakeholders, especially patients and relatives, is increasingly being seen as a quality indicator in and of itself.

How not to assess qualitative research

The above overview does not include certain items that are routine in assessments of quantitative research. What follows is a non-exhaustive, non-representative, experience-based list of the quantitative criteria often applied to the assessment of qualitative research, as well as an explanation of the limited usefulness of these endeavours.

Protocol adherence

Given the openness and flexibility of qualitative research, it should not be assessed by how well it adheres to pre-determined and fixed strategies – in other words: its rigidity. Instead, the assessor should look for signs of adaptation and refinement based on lessons learned from earlier steps in the research process.

Sample size

For the reasons explained above, qualitative research does not require specific sample sizes, nor does it require that the sample size be determined a priori [ 1 , 14 , 27 , 37 – 39 ]. Sample size can only be a useful quality indicator when related to the research purpose, the chosen methodology and the composition of the sample, i.e. who was included and why.

Randomisation

While some authors argue that randomisation can be used in qualitative research, this is not commonly the case, as neither its feasibility nor its necessity or usefulness has been convincingly established for qualitative research [ 13 , 27 ]. Relevant disadvantages include the negative impact of an overly large sample size as well as the possibility (or probability) of selecting “quiet, uncooperative or inarticulate individuals” [ 17 ]. Qualitative studies do not use control groups, either.

Interrater reliability, variability and other “objectivity checks”

The concept of “interrater reliability” is sometimes used in qualitative research to assess the extent to which the coding approach overlaps between the two co-coders. However, it is not clear what this measure tells us about the quality of the analysis [ 23 ]. This means that these scores can be included in qualitative research reports, preferably with some additional information on what the score means for the analysis, but they are not a requirement. Relatedly, it is not relevant for the quality or “objectivity” of qualitative research to separate those who recruited the study participants from those who collected and analysed the data. Experience even suggests that it might be better to have the same person or team perform all of these tasks [ 20 ]. First, when researchers introduce themselves during recruitment this can enhance trust when the interview takes place days or weeks later with the same researcher. Second, when the audio-recording is transcribed for analysis, the researcher who conducted the interviews will usually remember the interviewee and the specific interview situation during data analysis. This might be helpful in providing additional context information for interpretation of data, e.g. on whether something might have been meant as a joke [ 18 ].
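For readers who come across such scores, the following Python sketch shows how one widely used agreement statistic for two coders, Cohen's kappa, is calculated: observed agreement p_o is corrected for the agreement p_e expected by chance, giving (p_o - p_e) / (1 - p_e). The text above does not name a specific statistic, so the choice of kappa is an assumption, as are the function name and the example codes. The sketch also assumes that each segment receives exactly one code per coder.

```python
from __future__ import annotations

from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Cohen's kappa for two coders who each assigned one code per segment."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("Both coders must label the same, non-empty set of segments.")
    n = len(labels_a)
    # Observed agreement: share of segments given the same code by both coders.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: based on how often each coder used each code.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    if p_e == 1.0:
        # Both coders used a single identical code throughout; kappa is undefined.
        return float("nan")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codes assigned to eight segments by two co-coders.
coder_a = ["delay", "delay", "trust", "trust", "delay", "trust", "delay", "trust"]
coder_b = ["delay", "trust", "trust", "trust", "delay", "trust", "delay", "delay"]

kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 2))  # 0.5
```

Here the coders agree on six of eight segments (p_o = 0.75) while chance agreement is 0.5, which yields a kappa of 0.5. As argued above, such a number says little by itself about the quality of the analysis and is best reported together with an account of how disagreements were discussed and resolved.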

Not being quantitative research

Being qualitative research instead of quantitative research should not be used as an assessment criterion if it is applied irrespective of the research problem at hand. Similarly, qualitative research should not be required to be combined with quantitative research per se, unless mixed methods research is judged as inherently better than single-method research. In this case, the same criterion should be applied for quantitative studies without a qualitative component.

The main take-away points of this paper are summarised in Table 1. We aimed to show that, if conducted well, qualitative research can answer specific research questions that cannot be adequately answered using (only) quantitative designs. Seeing qualitative and quantitative methods as equal will help us become more aware and critical of the “fit” between the research problem and our chosen methods: I can conduct an RCT to determine the reasons for transportation delays of acute stroke patients, but should I? It also provides us with a greater range of tools to tackle a greater range of research problems more appropriately and successfully, filling in the blind spots on one half of the methodological spectrum to better address the whole complexity of neurological research and practice.

Table 1. Take-away points

Authors’ contributions

LB drafted the manuscript; WW and CG revised the manuscript; all authors approved the final versions.

Funding

No external funding.

Competing interests

The authors declare no competing interests.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Qualitative Research Design: Start

What is Qualitative research design?

Qualitative research is a type of research that explores and provides deeper insights into real-world problems. Instead of collecting numerical data points or intervening or introducing treatments, as quantitative research does, qualitative research helps generate hypotheses and further investigate and understand quantitative data. Qualitative research gathers participants' experiences, perceptions, and behavior. It answers the hows and whys instead of how many or how much. It could be structured as a stand-alone study relying purely on qualitative data, or it could be part of mixed-methods research that combines qualitative and quantitative data.

Qualitative research involves collecting and analyzing non-numerical data (e.g., text, video, or audio) to understand concepts, opinions, or experiences. It can be used to gather in-depth insights into a problem or generate new ideas for research. Qualitative research contrasts with quantitative research, which involves collecting and analyzing numerical data for statistical analysis. Qualitative research is commonly used in the humanities and social sciences, in subjects such as anthropology, sociology, education, health sciences, and history.

While qualitative and quantitative approaches are different, they are not necessarily opposites, and they are certainly not mutually exclusive. For instance, qualitative research can help expand and deepen understanding of data or results obtained from quantitative analysis. Say a quantitative analysis has determined that there is a correlation between length of stay and level of patient satisfaction. Why does this correlation exist? A qualitative follow-up could explore the reasons behind it. This dual-focus scenario shows one way in which qualitative and quantitative research could be integrated.

Research Paradigms

- Positivist versus Post-Positivist

- Social Constructivist (this paradigm/ideology gave rise to most qualitative studies)

Events Relating to the Qualitative Research and Community Engagement Workshops @ CMU Libraries

CMU Libraries is committed to helping members of our community become data experts. To that end, CMU is offering public-facing workshops that discuss qualitative research, coding, and community engagement best practices.

The following workshops are a part of a broader series on using data. Please follow the links to register for the events.

Qualitative Coding

Using Community Data to improve Outcome (Grant Writing)

Survey Design

Upcoming Event: March 21st, 2024 (12:00 pm - 1:00 pm)

Community Engagement and Collaboration Event

Join us for an event to improve, build on and expand the connections between Carnegie Mellon University resources and the Pittsburgh community. CMU resources such as the Libraries and Sustainability Initiative can be leveraged by users not affiliated with the university, but barriers can prevent them from fully engaging.

The conversation features representatives from CMU departments and local organizations discussing the community engagement efforts currently underway at CMU and opportunities to improve upon them. Speakers will highlight current and ongoing projects and share resources to support future collaboration.

Event Moderators:

Taiwo Lasisi, CLIR Postdoctoral Fellow in Community Data Literacy, Carnegie Mellon University Libraries

Emma Slayton, Data Curation, Visualization, & GIS Specialist, Carnegie Mellon University Libraries

Nicky Agate, Associate Dean for Academic Engagement, Carnegie Mellon University Libraries

Chelsea Cohen, The University’s Executive fellow for community engagement, Carnegie Mellon University

Sarah Ceurvorst, Academic Pathways Manager, Program Director, LEAP (Leadership, Excellence, Access, Persistence) Carnegie Mellon University

Julia Poeppibg, Associate Director of Partnership Development, Information Systems, Carnegie Mellon University

Scott Wolovich, Director of New Sun Rising, Pittsburgh

Additional workshops and events will be forthcoming. Watch this space for updates.

Workshop Organizer