Rubrics for Oral Presentations

Introduction.

Many instructors require students to give oral presentations, which they evaluate and count in students’ grades. It is important that instructors clarify their goals for these presentations as well as the student learning objectives to which they are related. Embedding the assignment in course goals and learning objectives allows instructors to be clear with students about their expectations and to develop a rubric for evaluating the presentations.

A rubric is a scoring guide that articulates and assesses specific components and expectations for an assignment. Rubrics identify the various criteria relevant to an assignment and then explicitly state the possible levels of achievement along a continuum, so that an effective rubric accurately reflects the expectations of an assignment. Using a rubric to evaluate student performance has advantages for both instructors and students.

Rubrics can be either analytic or holistic. An analytic rubric comprises a set of specific criteria, with each one evaluated separately and receiving a separate score. The template resembles a grid with the criteria listed in the left column and levels of performance listed across the top row, using numbers and/or descriptors. The cells within the center of the rubric contain descriptions of what expected performance looks like for each level of performance.

A holistic rubric consists of a set of descriptors that generate a single, global score for the entire work. The single score is based on raters’ overall perception of the quality of the performance. Often, sentence- or paragraph-length descriptions of different levels of competencies are provided.

When applied to an oral presentation, rubrics should reflect the elements of the presentation that will be evaluated as well as their relative importance. Thus, the instructor must decide whether to include dimensions relevant to both form and content and, if so, which ones. Additionally, the instructor must decide how to weight each of the dimensions – are they all equally important, or are some more important than others? Finally, if the presentation represents a group project, the instructor must decide how to balance grading individual and group contributions.
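The weighting decision can be made concrete with a small calculation. The sketch below is illustrative only: the dimension names, the weights, and the 4-point scale are assumptions rather than values from any rubric cited here, and `weighted_rubric_score` is a hypothetical helper.

```python
from typing import Dict


def weighted_rubric_score(scores: Dict[str, float],
                          weights: Dict[str, float],
                          max_level: float) -> float:
    """Return a percentage (0-100) from per-dimension scores and weights."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same dimensions")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Each dimension contributes its score multiplied by its weight.
    earned = sum(scores[name] * weights[name] for name in scores)
    # Divide by the best possible weighted total to get a percentage.
    return 100.0 * earned / (max_level * total_weight)


# Hypothetical example: content counts twice as much as the other dimensions.
example_weights = {"content": 2.0, "organization": 1.0, "delivery": 1.0}
example_scores = {"content": 4, "organization": 3, "delivery": 2}
example_percent = weighted_rubric_score(example_scores, example_weights, max_level=4)
```

Setting every weight to the same value reproduces the "all equally important" case, so the same function covers both options the instructor is choosing between.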

Creating Rubrics

The steps for creating an analytic rubric include the following:

1. Clarify the purpose of the assignment. What learning objectives are associated with the assignment?

2. Look for existing rubrics that can be adopted or adapted for the specific assignment

3. Define the criteria to be evaluated

4. Choose the rating scale to measure levels of performance

5. Write descriptions for each criterion for each performance level of the rating scale

6. Test and revise the rubric

Examples of criteria that have been included in rubrics for evaluating oral presentations include:

- Knowledge of content

- Organization of content

- Presentation of ideas

- Research/sources

- Visual aids/handouts

- Language clarity

- Grammatical correctness

- Time management

- Volume of speech

- Rate/pacing of speech

- Mannerisms/gestures

- Eye contact/audience engagement

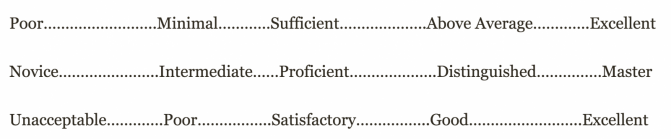

Examples of scales/ratings that have been used to rate student performance include:

- Strong, Satisfactory, Weak

- Beginning, Intermediate, High

- Exemplary, Competent, Developing

- Excellent, Competent, Needs Work

- Exceeds Standard, Meets Standard, Approaching Standard, Below Standard

- Exemplary, Proficient, Developing, Novice

- Excellent, Good, Marginal, Unacceptable

- Advanced, Intermediate High, Intermediate, Developing

- Exceptional, Above Average, Sufficient, Minimal, Poor

- Master, Distinguished, Proficient, Intermediate, Novice

- Excellent, Good, Satisfactory, Poor, Unacceptable

- Always, Often, Sometimes, Rarely, Never

- Exemplary, Accomplished, Acceptable, Minimally Acceptable, Emerging, Unacceptable

Grading and Performance Rubrics Carnegie Mellon University Eberly Center for Teaching Excellence & Educational Innovation

Creating and Using Rubrics Carnegie Mellon University Eberly Center for Teaching Excellence & Educational Innovation

Using Rubrics Cornell University Center for Teaching Innovation

Rubrics DePaul University Teaching Commons

Building a Rubric University of Texas/Austin Faculty Innovation Center

Building a Rubric Columbia University Center for Teaching and Learning

Rubric Development University of West Florida Center for University Teaching, Learning, and Assessment

Creating and Using Rubrics Yale University Poorvu Center for Teaching and Learning

Designing Grading Rubrics Brown University Sheridan Center for Teaching and Learning

Examples of Oral Presentation Rubrics

Oral Presentation Rubric Pomona College Teaching and Learning Center

Oral Presentation Evaluation Rubric University of Michigan

Oral Presentation Rubric Roanoke College

Oral Presentation: Scoring Guide Fresno State University Office of Institutional Effectiveness

Presentation Skills Rubric State University of New York/New Paltz School of Business

Oral Presentation Rubric Oregon State University Center for Teaching and Learning

Oral Presentation Rubric Purdue University College of Science

Group Class Presentation Sample Rubric Pepperdine University Graziadio Business School


Creating and using rubrics.

A rubric describes the criteria that will be used to evaluate a specific task, such as a student writing assignment, poster, oral presentation, or other project. Rubrics allow instructors to communicate expectations to students, allow students to check in on their progress mid-assignment, and can increase the reliability of scores. Research suggests that when rubrics are used on an instructional basis (for instance, included with an assignment prompt for reference), students tend to utilize and appreciate them (Reddy and Andrade, 2010).

Rubrics generally exist in tabular form and are composed of:

- A description of the task that is being evaluated,

- The criteria that are being evaluated (row headings),

- A rating scale that demonstrates different levels of performance (column headings), and

- A description of each level of performance for each criterion (within each box of the table).
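The four components above map naturally onto a small data structure. The following is a minimal sketch, assuming a hypothetical `Rubric` class; the criterion names and scale come from the example lists earlier in this document, while the cell descriptions are placeholders invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Rubric:
    task: str                            # description of the task being evaluated
    levels: List[str]                    # rating scale (column headings)
    criteria: Dict[str, Dict[str, str]]  # criterion (row heading) -> level -> description

    def to_rows(self) -> List[List[str]]:
        """Flatten the rubric into table rows, header row first."""
        rows = [["Criterion"] + self.levels]
        for criterion, cells in self.criteria.items():
            # Missing descriptions become empty cells rather than errors.
            rows.append([criterion] + [cells.get(level, "") for level in self.levels])
        return rows


# Placeholder descriptions, for illustration only.
example_rubric = Rubric(
    task="Ten-minute oral presentation",
    levels=["Strong", "Satisfactory", "Weak"],
    criteria={
        "Organization of content": {
            "Strong": "Clear opening, logical sequence, and explicit conclusion.",
            "Satisfactory": "Mostly logical sequence; opening or conclusion is thin.",
            "Weak": "Sequence is hard to follow; no clear opening or conclusion.",
        },
        "Time management": {
            "Strong": "Finishes within the allotted time.",
            "Satisfactory": "Runs slightly over or under the allotted time.",
            "Weak": "Runs well over or under the allotted time.",
        },
    },
)

for row in example_rubric.to_rows():
    print(" | ".join(row))
```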

When multiple individuals are grading, rubrics also help improve the consistency of scoring across all graders. Instructors should ensure that the structure, presentation, consistency, and use of their rubrics pass rigorous standards of validity, reliability, and fairness (Andrade, 2005).

Major Types of Rubrics

There are two major categories of rubrics:

- Holistic: In this type of rubric, a single score is provided based on raters’ overall perception of the quality of the performance. Holistic rubrics are useful when only one attribute is being evaluated, as they detail different levels of performance within a single attribute. This category of rubric is designed for quick scoring but does not provide detailed feedback. For these rubrics, the criteria may be the same as the description of the task.

- Analytic: In this type of rubric, scores are provided for several different criteria that are being evaluated. Analytic rubrics provide more detailed feedback to students and instructors about their performance. Scoring is usually more consistent across students and graders with analytic rubrics.

Rubrics use a scale that denotes level of success with a particular assignment, usually a 3-, 4-, or 5-category grid:

Figure 1: Grading Rubrics: Sample Scales (Brown Sheridan Center)

Sample Rubrics

Instructors can consider a sample holistic rubric developed for an English Writing Seminar course at Yale.

The Association of American Colleges and Universities also has a number of free (non-invasive free account required) analytic rubrics that can be downloaded and modified by instructors. These 16 VALUE rubrics enable instructors to measure items such as inquiry and analysis, critical thinking, written communication, oral communication, quantitative literacy, teamwork, problem-solving, and more.

Recommendations

The following provides a procedure for developing a rubric, adapted from Brown’s Sheridan Center for Teaching and Learning:

- Define the goal and purpose of the task that is being evaluated - Before constructing a rubric, instructors should review their learning outcomes associated with a given assignment. Are skills, content, and deeper conceptual knowledge clearly defined in the syllabus, and do class activities and assignments work towards intended outcomes? The rubric can only function effectively if goals are clear and student work progresses towards them.

- Decide what kind of rubric to use - The kind of rubric used may depend on the nature of the assignment, intended learning outcomes (for instance, does the task require the demonstration of several different skills?), and the amount and kind of feedback students will receive (for instance, is the task a formative or a summative assessment?). Instructors can read the above, or consider “Additional Resources” for kinds of rubrics.

- Define the criteria - Instructors can review their learning outcomes and assessment parameters to determine specific criteria for the rubric to cover. Instructors should consider what knowledge and skills are required for successful completion, and create a list of criteria that assess outcomes across different vectors (comprehensiveness, maturity of thought, revisions, presentation, timeliness, etc.). Criteria should be distinct and clearly described, and ideally, not surpass seven in number.

- Define the rating scale to measure levels of performance - Whatever rating scale instructors choose, they should ensure that it is clear, and review it in class to field student questions and concerns. Instructors can consider whether the scale will include descriptors or only be numerical, and might include prompts on the rubric for reaching higher achievement levels. Rubrics typically include 3-5 levels in their rating scales (see Figure 1 above).

- Write descriptions for each performance level of the rating scale - Each level should be accompanied by a descriptive paragraph that outlines ideals for each level, lists or names all performance expectations within the level, and if possible, provides a detail or example of ideal performance within each level. Across the rubric, descriptions should be parallel, observable, and measurable.

- Test and revise the rubric - The rubric can be tested before implementation, by arranging for writing or testing conditions with several graders or TFs who can use the rubric together. After grading with the rubric, graders might grade a similar set of materials without the rubric to assure consistency. Instructors can consider discrepancies, share the rubric and results with faculty colleagues for further opinions, and revise the rubric for use in class. Instructors might also seek out colleagues’ rubrics as well, for comparison. Regarding course implementation, instructors might consider passing rubrics out during the first class, in order to make grading expectations clear as early as possible. Rubrics should fit on one page, so that descriptions and criteria are viewable quickly and simultaneously. During and after a class or course, instructors can collect feedback on the rubric’s clarity and effectiveness from TFs and even students through anonymous surveys. Comparing scores and quality of assignments with parallel or previous assignments that did not include a rubric can reveal effectiveness as well. Instructors should feel free to revise a rubric following a course too, based on student performance and areas of confusion.
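When several graders pilot the rubric on the same set of work, one simple way to look at consistency is to compare their scores pairwise. The sketch below computes exact and adjacent (within one level) agreement between two graders. This is an illustrative measure chosen for its simplicity, not one prescribed by the procedure above, and the scores are invented.

```python
from typing import Sequence, Tuple


def agreement_rates(grader_a: Sequence[int],
                    grader_b: Sequence[int]) -> Tuple[float, float]:
    """Return (exact, adjacent) agreement as fractions between 0 and 1."""
    if len(grader_a) != len(grader_b) or len(grader_a) == 0:
        raise ValueError("both graders must score the same non-empty set of work")
    pairs = list(zip(grader_a, grader_b))
    # Exact agreement: both graders chose the same level.
    exact = sum(a == b for a, b in pairs) / len(pairs)
    # Adjacent agreement: the two levels differ by at most one step.
    adjacent = sum(abs(a - b) <= 1 for a, b in pairs) / len(pairs)
    return exact, adjacent


# Hypothetical pilot: two graders score the same five presentations on a 1-4 scale.
pilot_a = [4, 3, 2, 4, 1]
pilot_b = [4, 2, 2, 3, 3]
exact_rate, adjacent_rate = agreement_rates(pilot_a, pilot_b)
print(f"exact: {exact_rate:.0%}, adjacent: {adjacent_rate:.0%}")
```

Low agreement on a particular criterion is a hint that its descriptions need revising before the rubric is used in class.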

Additional Resources

Cox, G. C., Brathwaite, B. H., & Morrison, J. (2015). The Rubric: An assessment tool to guide students and markers. Advances in Higher Education, 149-163.

Creating and Using Rubrics - Carnegie Mellon Eberly Center for Teaching Excellence & Educational Innovation

Creating a Rubric - UC Denver Center for Faculty Development

Grading Rubric Design - Brown University Sheridan Center for Teaching and Learning

Moskal, B. M. (2000). Scoring rubrics: What, when and how? Practical Assessment, Research & Evaluation 7(3).

Quinlan, A. M. (2011). A Complete Guide to Rubrics: Assessment Made Easy for Teachers of K-college (2nd ed.). Rowman & Littlefield Education.

Andrade, H. (2005). Teaching with Rubrics: The Good, the Bad, and the Ugly. College Teaching 53(1):27-30.

Reddy, Y. M., & Andrade, H. (2010). A review of rubric use in higher education. Assessment & Evaluation in Higher Education, 35(4), 435-448.

Sheridan Center for Teaching and Learning, Brown University


Creating an Oral Presentation Rubric

In-class activity.

This activity helps students clarify the oral presentation genre; do this after distributing an assignment–in this case, a standard individual oral presentation near the end of the semester which allows students to practice public speaking while also providing a means of workshopping their final paper argument. Together, the class will determine the criteria by which their presentations should–and should not–be assessed.

Guide to Oral/Signed Communication in Writing Classrooms

To collaboratively determine the requirements for students’ oral presentations; to clarify the audience’s expectations of this genre

rhetorical situation; genre; metacognition; oral communication; rubric; assessment; collaboration

- Ask students to free-write and think about these questions: What makes a good oral presentation? Think of examples of oral presentations that you’ve seen, one “bad” and one “good.” They can be from any genre–for example, a course lecture, a museum talk, a presentation you have given, even a video. Jot down specific strengths and weaknesses.

- Facilitate a full-class discussion to list the important characteristics of an oral presentation. Group things together. For example, students may say “speaking clearly” as a strength; elicit specifics (intonation, pace, etc.) and encourage them to elaborate.

- Clarify to students that the more they add to the list, the more information they have in regards to expectations on the oral presentation rubric. If they do not add enough, or specific enough, items, they won’t know what to aim for or how they will be assessed.

- Review the list on the board and ask students to decide what they think are the most important parts of their oral presentations, ranking their top three components.

- Create a second list to the side of the board, called “Let it slide,” asking students what, as a class, they should “let slide” in the oral presentations. Guide and elaborate, choosing whether to reject, accept, or compromise on the students’ proposals.

- Distribute the two lists to students as-is as a checklist-style rubric or flesh the primary list out into a full analytic rubric.

Here’s an example of one possible rubric created from this activity; here’s another example of an oral presentation rubric that assesses only the delivery of the speech/presentation, and which can be used by classmates to evaluate each other.

Rubric Best Practices, Examples, and Templates

A rubric is a scoring tool that identifies the different criteria relevant to an assignment, assessment, or learning outcome and states the possible levels of achievement in a specific, clear, and objective way. Use rubrics to assess project-based student work including essays, group projects, creative endeavors, and oral presentations.

Rubrics can help instructors communicate expectations to students and assess student work fairly, consistently and efficiently. Rubrics can provide students with informative feedback on their strengths and weaknesses so that they can reflect on their performance and work on areas that need improvement.

How to Get Started


Step 1: Analyze the assignment

The first step in the rubric creation process is to analyze the assignment or assessment for which you are creating a rubric. To do this, consider the following questions:

- What is the purpose of the assignment and your feedback? What do you want students to demonstrate through the completion of this assignment (i.e. what are the learning objectives measured by it)? Is it a summative assessment, or will students use the feedback to create an improved product?

- Does the assignment break down into different or smaller tasks? Are these tasks equally important as the main assignment?

- What would an “excellent” assignment look like? An “acceptable” assignment? One that still needs major work?

- How detailed do you want the feedback you give students to be? Do you want/need to give them a grade?

Step 2: Decide what kind of rubric you will use

Types of rubrics: holistic, analytic/descriptive, single-point

Holistic Rubric. A holistic rubric includes all the criteria (such as clarity, organization, mechanics, etc.) to be considered together and included in a single evaluation. With a holistic rubric, the rater or grader assigns a single score based on an overall judgment of the student’s work, using descriptions of each performance level to assign the score.

Advantages of holistic rubrics:

- Can place an emphasis on what learners can demonstrate rather than what they cannot

- Save grader time by minimizing the number of evaluations to be made for each student

- Can be used consistently across raters, provided they have all been trained

Disadvantages of holistic rubrics:

- Provide less specific feedback than analytic/descriptive rubrics

- Can be difficult to choose a score when a student’s work is at varying levels across the criteria

- Any weighting of criteria cannot be indicated in the rubric

Analytic/Descriptive Rubric. An analytic or descriptive rubric often takes the form of a table with the criteria listed in the left column and with levels of performance listed across the top row. Each cell contains a description of what the specified criterion looks like at a given level of performance. Each of the criteria is scored individually.

Advantages of analytic rubrics:

- Provide detailed feedback on areas of strength or weakness

- Each criterion can be weighted to reflect its relative importance

Disadvantages of analytic rubrics:

- More time-consuming to create and use than a holistic rubric

- May not be used consistently across raters unless the cells are well defined

- May result in giving less personalized feedback

Single-Point Rubric. A single-point rubric breaks down the components of an assignment into different criteria, but instead of describing different levels of performance, only the “proficient” level is described. Feedback space is provided for instructors to give individualized comments to help students improve and/or show where they excelled beyond the proficiency descriptors.

Advantages of single-point rubrics:

- Easier to create than an analytic/descriptive rubric

- Perhaps more likely that students will read the descriptors

- Areas of concern and excellence are open-ended

- May remove a focus on the grade/points

- May increase student creativity in project-based assignments

Disadvantage of single-point rubrics: Requires more work for instructors writing feedback

Step 3 (Optional): Look for templates and examples.

You might Google, “Rubric for persuasive essay at the college level” and see if there are any publicly available examples to start from. Ask your colleagues if they have used a rubric for a similar assignment. Some examples are also available at the end of this article. These rubrics can be a great starting point for you, but consider steps 4, 5, and 6 below to ensure that the rubric matches your assignment description, learning objectives and expectations.

Step 4: Define the assignment criteria

Make a list of the knowledge and skills you are measuring with the assignment/assessment. Refer to your stated learning objectives, the assignment instructions, past examples of student work, etc. for help.

Helpful strategies for defining grading criteria:

- Collaborate with co-instructors, teaching assistants, and other colleagues

- Brainstorm and discuss with students

- Can they be observed and measured?

- Are they important and essential?

- Are they distinct from other criteria?

- Are they phrased in precise, unambiguous language?

- Revise the criteria as needed

- Consider whether some are more important than others, and how you will weight them.

Step 5: Design the rating scale

Most ratings scales include between 3 and 5 levels. Consider the following questions when designing your rating scale:

- Given what students are able to demonstrate in this assignment/assessment, what are the possible levels of achievement?

- How many levels would you like to include? (More levels means more detailed descriptions.)

- Will you use numbers and/or descriptive labels for each level of performance? (for example 5, 4, 3, 2, 1 and/or Exceeds expectations, Accomplished, Proficient, Developing, Beginning, etc.)

- Don’t use too many columns, and recognize that some criteria can have more columns than others. The rubric needs to be comprehensible and organized. Pick the right number of columns so that the criteria flow logically and naturally across levels.

Step 6: Write descriptions for each level of the rating scale

Artificial intelligence tools like ChatGPT can be useful for creating a rubric. Write the prompt you give the AI assistant carefully to ensure you get what you want. For example, you might provide the assignment description, the criteria you feel are important, and the number of levels of performance you want in your prompt. Use the results as a starting point, and adjust the descriptions as needed.
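As a rough illustration of that advice, the sketch below assembles a prompt from the three ingredients mentioned: the assignment description, the criteria, and the number of performance levels. The function name and the prompt wording are assumptions made for this example. It only builds a string, which you would then paste into whichever AI assistant you use.

```python
from typing import List


def build_rubric_prompt(assignment_description: str,
                        criteria: List[str],
                        num_levels: int) -> str:
    """Compose a plain-text prompt asking an AI assistant for a draft rubric."""
    if num_levels < 2:
        raise ValueError("a rating scale needs at least two levels")
    if not criteria:
        raise ValueError("list at least one criterion")
    criteria_lines = "\n".join(f"- {criterion}" for criterion in criteria)
    return (
        "Draft an analytic rubric for the assignment described below.\n\n"
        f"Assignment description:\n{assignment_description}\n\n"
        f"Criteria to include:\n{criteria_lines}\n\n"
        f"Use {num_levels} levels of performance. For every criterion, write an "
        "observable, measurable description at each level, using parallel "
        "language across levels."
    )


# Hypothetical assignment and criteria, for illustration only.
example_prompt = build_rubric_prompt(
    "A ten-minute individual oral presentation of the final paper argument.",
    ["Organization of content", "Language clarity", "Time management"],
    num_levels=4,
)
print(example_prompt)
```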

Building a rubric from scratch

For a single-point rubric, describe what would be considered “proficient,” i.e. B-level work, and provide that description. You might also include suggestions for students outside of the actual rubric about how they might surpass proficient-level work.

For analytic and holistic rubrics, create statements of expected performance at each level of the rubric.

- Consider what descriptor is appropriate for each criterion, e.g., presence vs absence, complete vs incomplete, many vs none, major vs minor, consistent vs inconsistent, always vs never. If you have an indicator described in one level, it will need to be described in each level.

- You might start with the top/exemplary level. What does it look like when a student has achieved excellence for each/every criterion? Then, look at the “bottom” level. What does it look like when a student has not achieved the learning goals in any way? Then, complete the in-between levels.

- For an analytic rubric, do this for each particular criterion of the rubric so that every cell in the table is filled. These descriptions help students understand your expectations and their performance in regard to those expectations.
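If the draft rubric is kept in a structured form, the "every cell is filled" check can be automated. This is a minimal sketch that assumes the rubric is stored as a dictionary mapping each criterion to its per-level descriptions; `missing_cells` and the sample data are hypothetical.

```python
from typing import Dict, List, Tuple


def missing_cells(levels: List[str],
                  criteria: Dict[str, Dict[str, str]]) -> List[Tuple[str, str]]:
    """Return (criterion, level) pairs that have no description yet."""
    gaps = []
    for criterion, cells in criteria.items():
        for level in levels:
            # Treat absent and whitespace-only descriptions as unfilled.
            if not cells.get(level, "").strip():
                gaps.append((criterion, level))
    return gaps


# Hypothetical draft with one unfilled and one blank cell.
draft_levels = ["Exemplary", "Proficient", "Developing"]
draft_criteria = {
    "Knowledge of content": {
        "Exemplary": "Answers audience questions accurately and with detail.",
        "Proficient": "Answers most audience questions accurately.",
    },
    "Eye contact/audience engagement": {
        "Exemplary": "Maintains eye contact throughout.",
        "Proficient": "   ",
        "Developing": "Reads mostly from notes.",
    },
}
draft_gaps = missing_cells(draft_levels, draft_criteria)
print(draft_gaps)
```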

Well-written descriptions:

- Describe observable and measurable behavior

- Use parallel language across the scale

- Indicate the degree to which the standards are met

Step 7: Create your rubric

Create your rubric in a table or spreadsheet in Word, Google Docs, Sheets, etc., and then transfer it by typing it into Moodle. You can also use online tools to create the rubric, but you will still have to type the criteria, indicators, levels, etc., into Moodle. Rubric creators: Rubistar, iRubric

Step 8: Pilot-test your rubric

Prior to implementing your rubric on a live course, obtain feedback from:

- Teaching assistants

Try out your new rubric on a sample of student work. After you pilot-test your rubric, analyze the results to consider its effectiveness and revise accordingly.

- Limit the rubric to a single page for reading and grading ease

- Use parallel language. Use similar language and syntax/wording from column to column. Make sure that the rubric can be easily read from left to right or vice versa.

- Use student-friendly language. Make sure the language is learning-level appropriate. If you use academic language or concepts, you will need to teach those concepts.

- Share and discuss the rubric with your students. Students should understand that the rubric is there to help them learn, reflect, and self-assess. If students use a rubric, they will understand the expectations and their relevance to learning.

- Consider scalability and reusability of rubrics. Create rubric templates that you can alter as needed for multiple assignments.

- Maximize the descriptiveness of your language. Avoid words like “good” and “excellent.” For example, instead of saying, “uses excellent sources,” you might describe what makes a resource excellent so that students will know. You might also consider reducing the reliance on quantity, such as a number of allowable misspelled words. Focus instead, for example, on how distracting any spelling errors are.

Example of an analytic rubric for a final paper

Example of a holistic rubric for a final paper

Single-point rubric

More examples:

- Single Point Rubric Template (variation)

- Analytic Rubric Template make a copy to edit

- A Rubric for Rubrics

- Bank of Online Discussion Rubrics in different formats

- Mathematical Presentations Descriptive Rubric

- Math Proof Assessment Rubric

- Kansas State Sample Rubrics

- Design Single Point Rubric

Technology Tools: Rubrics in Moodle

- Moodle Docs: Rubrics

- Moodle Docs: Grading Guide (use for single-point rubrics)

Tools with rubrics (other than Moodle)

- Google Assignments

- Turnitin Assignments: Rubric or Grading Form

Other resources

- DePaul University (n.d.). Rubrics.

- Gonzalez, J. (2014). Know your terms: Holistic, Analytic, and Single-Point Rubrics. Cult of Pedagogy.

- Goodrich, H. (1996). Understanding rubrics. Teaching for Authentic Student Performance, 54(4), 14-17. Retrieved from

- Miller, A. (2012). Tame the beast: tips for designing and using rubrics.

- Ragupathi, K., Lee, A. (2020). Beyond Fairness and Consistency in Grading: The Role of Rubrics in Higher Education. In: Sanger, C., Gleason, N. (eds) Diversity and Inclusion in Global Higher Education. Palgrave Macmillan, Singapore.

Center for Excellence in Teaching


Group presentation rubric

This is a grading rubric an instructor uses to assess students’ work on this type of assignment. It is a sample rubric that needs to be edited to reflect the specifics of a particular assignment. Students can self-assess using the rubric as a checklist before submitting their assignment.


ESL Presentation Rubric


In-class presentations are a great way to practice a number of English communicative skills in a realistic task that not only helps students with their English but also prepares them more broadly for future education and work situations. Grading these presentations can be tricky, because a good presentation depends on many elements beyond simple grammar and structure, such as key presentation phrases and pronunciation. This ESL presentation rubric has been created with English learners in mind and can help you provide valuable feedback to your students. Skills included in this rubric include stress and intonation, appropriate linking language, body language, fluency, as well as standard grammar structures.


18 Biocore Oral Presentation Rubric

Organization, presentation mechanics, rubric scores to letter grade conversion guide.

Download Biocore rubrics in PDF format

Process of Science Companion: Science Communication Copyright © 2017 by University of Wisconsin-Madison Biology Core Curriculum (Biocore) is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License , except where otherwise noted.


Am J Pharm Educ, v.74(9); 2010 Nov 10

A Standardized Rubric to Evaluate Student Presentations

Michael J. Peeters

a University of Toledo College of Pharmacy

Eric G. Sahloff

Gregory E. Stone

b University of Toledo College of Education

To design, implement, and assess a rubric to evaluate student presentations in a capstone doctor of pharmacy (PharmD) course.

A 20-item rubric was designed and used to evaluate student presentations in a capstone fourth-year course in 2007-2008, and then revised and expanded to 25 items and used to evaluate student presentations for the same course in 2008-2009. Two faculty members evaluated each presentation.

The Many-Facets Rasch Model (MFRM) was used to determine the rubric's reliability, quantify the contribution of evaluator harshness/leniency in scoring, and assess grading validity by comparing the current grading method with a criterion-referenced grading scheme. In 2007-2008, rubric reliability was 0.98, with a separation of 7.1 and 4 rating scale categories. In 2008-2009, MFRM analysis suggested 2 of 98 grades be adjusted to eliminate evaluator leniency, while a further criterion-referenced MFRM analysis suggested 10 of 98 grades should be adjusted.

The evaluation rubric was reliable and evaluator leniency appeared minimal. However, a criterion-referenced re-analysis suggested a need for further revisions to the rubric and evaluation process.

INTRODUCTION

Evaluations are important in the process of teaching and learning. In health professions education, performance-based evaluations are identified as having “an emphasis on testing complex, ‘higher-order’ knowledge and skills in the real-world context in which they are actually used.” 1 Objective structured clinical examinations (OSCEs) are a common, notable example. 2 On Miller's pyramid, a framework used in medical education for measuring learner outcomes, “knows” is placed at the base of the pyramid, followed by “knows how,” then “shows how,” and finally, “does” is placed at the top. 3 Based on Miller's pyramid, evaluation formats that use multiple-choice testing focus on “knows” while an OSCE focuses on “shows how.” Just as performance evaluations remain highly valued in medical education, 4 authentic task evaluations in pharmacy education may be better indicators of future pharmacist performance. 5 Much attention in medical education has been focused on reducing the unreliability of high-stakes evaluations. 6 Regardless of educational discipline, high-stakes performance-based evaluations should meet educational standards for reliability and validity. 7

PharmD students at University of Toledo College of Pharmacy (UTCP) were required to complete a course on presentations during their final year of pharmacy school and then give a presentation that served as both a capstone experience and a performance-based evaluation for the course. Pharmacists attending the presentations were given Accreditation Council for Pharmacy Education (ACPE)-approved continuing education credits. An evaluation rubric for grading the presentations was designed to allow multiple faculty evaluators to objectively score student performances in the domains of presentation delivery and content. Given the pass/fail grading procedure used in advanced pharmacy practice experiences, passing this presentation-based course and subsequently graduating from pharmacy school were contingent upon this high-stakes evaluation. As a result, the reliability and validity of the rubric used and the evaluation process needed to be closely scrutinized.

Each year, about 100 students completed presentations and at least 40 faculty members served as evaluators. With the use of multiple evaluators, a question of evaluator leniency often arose (ie, whether evaluators used the same criteria for evaluating performances or whether some evaluators graded easier or more harshly than others). At UTCP, opinions among some faculty evaluators and many PharmD students implied that evaluator leniency in judging the students' presentations significantly affected specific students' grades and ultimately their graduation from pharmacy school. While it was plausible that evaluator leniency was occurring, the magnitude of the effect was unknown. Thus, this study was initiated partly to address this concern over grading consistency and scoring variability among evaluators.

Because both students' presentation style and content were deemed important, each item of the rubric was weighted the same across delivery and content. However, because there were more categories related to delivery than content, an additional faculty concern was that students feasibly could present poor content but have an effective presentation delivery and pass the course.

The objectives for this investigation were: (1) to describe and optimize the reliability of the evaluation rubric used in this high-stakes evaluation; (2) to identify the contribution and significance of evaluator leniency to evaluation reliability; and (3) to assess the validity of this evaluation rubric within a criterion-referenced grading paradigm focused on both presentation delivery and content.

DESIGN

The University of Toledo's Institutional Review Board approved this investigation. This study investigated performance evaluation data for an oral presentation course for final-year PharmD students from 2 consecutive academic years (2007-2008 and 2008-2009). The course was taken during the fourth year (P4) of the PharmD program and was a high-stakes, performance-based evaluation. The goal of the course was to serve as a capstone experience, enabling students to demonstrate advanced drug literature evaluation and verbal presentations skills through the development and delivery of a 1-hour presentation. These presentations were to be on a current pharmacy practice topic and of sufficient quality for ACPE-approved continuing education. This experience allowed students to demonstrate their competencies in literature searching, literature evaluation, and application of evidence-based medicine, as well as their oral presentation skills. Students worked closely with a faculty advisor to develop their presentation. Each class (2007-2008 and 2008-2009) was randomly divided, with half of the students taking the course and completing their presentation and evaluation in the fall semester and the other half in the spring semester. To accommodate such a large number of students presenting for 1 hour each, it was necessary to use multiple rooms with presentations taking place concurrently over 2.5 days for both the fall and spring sessions of the course. Two faculty members independently evaluated each student presentation using the provided evaluation rubric. The 2007-2008 presentations involved 104 PharmD students and 40 faculty evaluators, while the 2008-2009 presentations involved 98 students and 46 faculty evaluators.

After vetting through the pharmacy practice faculty, the initial rubric used in 2007-2008 focused on describing explicit, specific evaluation criteria such as amounts of eye contact, voice pitch/volume, and descriptions of study methods. The evaluation rubric used in 2008-2009 was similar to the initial rubric, but with 5 items added (Figure 1). The evaluators rated each item (eg, eye contact) based on their perception of the student's performance. The 25 rubric items had equal weight (ie, 4 points each), and each item received a rating from the evaluator of 1 to 4 points. Thus, only 4 rating categories were included, as has been recommended in the literature. 8 However, some evaluators created an additional 3 rating categories by marking lines in between the 4 ratings to signify half points (ie, 1.5, 2.5, and 3.5). For example, for the “notecards/notes” item in Figure 1, a student looked at her notes sporadically during her presentation, but not distractingly nor enough to warrant a score of 3 in the faculty evaluator's opinion, so a 3.5 was given. Thus, a 7-category rating scale (1, 1.5, 2, 2.5, 3, 3.5, and 4) was analyzed. Each independent evaluator's ratings for the 25 items were summed to form a score (0-100%). The 2 evaluators' scores then were averaged and a letter grade was assigned based on the following scale: >90% = A, 80%-89% = B, 70%-79% = C, <70% = F.
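The scoring arithmetic described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names and the sample ratings are hypothetical, and because the published scale does not say how exactly 90% or fractional averages such as 89.5% are handled, the sketch treats 90% and above as an A and assigns any value below a cutoff to the next lower grade.

```python
def evaluator_percent(ratings, max_per_item=4.0):
    """Convert one evaluator's item ratings into a percentage of available points."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    return 100.0 * sum(ratings) / (max_per_item * len(ratings))


def letter_grade(percent):
    """Map a percentage to a letter grade (assumption: exactly 90% counts as an A)."""
    if percent >= 90:
        return "A"
    if percent >= 80:
        return "B"
    if percent >= 70:
        return "C"
    return "F"


def presentation_grade(ratings_a, ratings_b):
    """Average the two evaluators' percentages, then assign a letter grade."""
    average = (evaluator_percent(ratings_a) + evaluator_percent(ratings_b)) / 2
    return average, letter_grade(average)


# Hypothetical ratings for a 25-item rubric, each item rated from 1 to 4.
evaluator_1 = [4] * 20 + [3] * 5   # 95 of 100 possible points
evaluator_2 = [3] * 25             # 75 of 100 possible points
average, grade = presentation_grade(evaluator_1, evaluator_2)
```

Because half-point ratings such as 3.5 are ordinary numbers, the same functions accept the 7-category ratings that some evaluators produced.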

Figure 1. Rubric used to evaluate student presentations given in a 2008-2009 capstone PharmD course.

EVALUATION AND ASSESSMENT

Rubric reliability.

To measure rubric reliability, iterative analyses were performed on the evaluations using the Many-Facets Rasch Model (MFRM) following the 2007-2008 data collection period. While Cronbach's alpha is the most commonly reported coefficient of reliability, its single number reporting without supplementary information can provide incomplete information about reliability. 9-11 Due to its formula, Cronbach's alpha can be increased by simply adding more repetitive rubric items or having more rating scale categories, even when no further useful information has been added. The MFRM reports separation, which is calculated differently than Cronbach's alpha and is another source of reliability information. Unlike Cronbach's alpha, separation does not appear enhanced by adding further redundant items. From a measurement perspective, a higher separation value is better than a lower one because students are being divided into meaningful groups after measurement error has been accounted for. Separation can be thought of as the number of units on a ruler where the more units the ruler has, the larger the range of performance levels that can be measured among students. For example, a separation of 4.0 suggests 4 graduations such that a grade of A is distinctly different from a grade of B, which in turn is different from a grade of C or of F. In measuring performances, a separation of 9.0 is better than 5.5, just as a separation of 7.0 is better than a 6.5; a higher separation coefficient suggests that student performance potentially could be divided into a larger number of meaningfully separate groups.

The rating scale can have substantial effects on reliability, 8 and describing how a rating scale functions is a unique aspect of the MFRM. With analysis iterations of the 2007-2008 data, the number of rating scale categories was collapsed consecutively until improvements in reliability and/or separation were no longer found. The last iteration that led to improvements in reliability or separation was deemed an optimal rating scale for this evaluation rubric.

In the 2007-2008 analysis, iterations of the data were run through the MFRM. While only 4 rating scale categories had been included on the rubric, because some faculty members inserted 3 in-between categories, 7 categories had to be included in the analysis. This initial analysis based on a 7-category rubric provided a reliability coefficient (similar to Cronbach's alpha) of 0.98, while the separation coefficient was 6.31. The separation coefficient denoted 6 distinctly separate groups of students based on the items. Rating scale categories were collapsed, with “in-between” categories included in adjacent full-point categories. Table 1 shows the reliability and separation for the iterations as the rating scale was collapsed. As shown, the optimal evaluation rubric maintained a reliability of 0.98, but separation improved to 7.10, or 7 distinctly separate groups of students based on the items. Another distinctly separate group was added through a reduction in the rating scale while no change was seen to Cronbach's alpha, even though the number of rating scale categories was reduced. Table 1 describes the stepwise, sequential pattern across the final 4 rating scale categories analyzed. Informed by the 2007-2008 results, the 2008-2009 evaluation rubric (Figure 1) used 4 rating scale categories and reliability remained high.
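Collapsing the in-between categories can be illustrated with a small recoding step applied before the data are re-analyzed. The text does not say whether each half-point rating was merged into the category below or the category above, so the `direction` parameter here is an assumption made purely for illustration, as are the function name and sample data.

```python
import math


def collapse_rating(rating, direction="down"):
    """Merge a half-point rating into an adjacent full-point category.

    direction="down" maps 3.5 to 3; direction="up" maps 3.5 to 4.
    Full-point ratings are returned unchanged either way.
    """
    if direction == "down":
        return int(math.floor(rating))
    if direction == "up":
        return int(math.ceil(rating))
    raise ValueError("direction must be 'down' or 'up'")


# The 7 categories that appeared in the 2007-2008 data.
observed = [1, 1.5, 2, 2.5, 3, 3.5, 4]
collapsed_down = [collapse_rating(r, "down") for r in observed]
collapsed_up = [collapse_rating(r, "up") for r in observed]
```

After recoding, only the 4 intended categories remain, and the recoded ratings would then be run through the measurement software again to compare reliability and separation across iterations.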

Table 1. Evaluation Rubric Reliability and Separation with Iterations While Collapsing Rating Scale Categories.

a Reliability coefficient of variance in rater response that is reproducible (ie, Cronbach's alpha).

b Separation is a coefficient of item standard deviation divided by average measurement error and is an additional reliability coefficient.

c Optimal number of rating scale categories based on the highest reliability (0.98) and separation (7.1) values.
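The link between the two coefficients can be made concrete. In the Rasch measurement literature, reliability R and separation G are conventionally related by R = G² / (1 + G²); that relation is not stated in this article and is brought in here as background, so treat the sketch below as an assumption-labeled illustration, not the authors' own calculation.

```python
def reliability_from_separation(separation):
    """Conventional Rasch relation between separation G and reliability R."""
    return separation ** 2 / (1 + separation ** 2)


# Separation values reported in the article.
r_seven_categories = reliability_from_separation(6.31)  # initial 7-category analysis
r_four_categories = reliability_from_separation(7.10)   # optimal 4-category analysis
r_evaluators = reliability_from_separation(1.85)        # evaluator facet, 2008-2009
```

Under this relation, separations of 6.31 and 7.10 both round to a reliability of 0.98, which fits the observation that reliability looked unchanged while separation improved. Reliability is bounded at 1 and flattens near the top of its range, whereas separation keeps growing, which is one reason separation is the more informative figure for highly reliable instruments.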

Evaluator Leniency

Harsh raters (ie, hawks) and lenient raters (ie, doves), described by Fleming and colleagues over half a century ago, 6 have also been demonstrated as an issue in more recent studies. 12-14 Shortly after 2008-2009 data were collected, those evaluations by multiple faculty evaluators were collated and analyzed in the MFRM to identify possible inconsistent scoring. While traditional interrater reliability does not deal with this issue, the MFRM had been used previously to illustrate evaluator leniency on licensing examinations for medical students and medical residents in the United Kingdom. 13 Thus, accounting for evaluator leniency may prove important to grading consistency (and reliability) in a course using multiple evaluators. Along with identifying evaluator leniency, the MFRM also corrected for this variability. For comparison, course grades were calculated by summing the evaluators' actual ratings (as discussed in the Design section) and compared with the MFRM-adjusted grades to quantify the degree of evaluator leniency occurring in this evaluation.

Measures created from the data analysis in the MFRM were converted to percentages using a common linear test-equating procedure involving the mean and standard deviation of the dataset. 15 To these percentages, student letter grades were assigned using the same traditional method used in 2007-2008 (ie, 90% = A, 80% - 89% = B, 70% - 79% = C, <70% = F). Letter grades calculated using the revised rubric and the MFRM then were compared to letter grades calculated using the previous rubric and course grading method.
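The article does not give the equating formula. One common linear procedure that uses only the mean and standard deviation is mean-sigma equating, in which each measure is standardized and then rescaled to the mean and standard deviation of the percentage scores. The sketch below assumes that procedure; the function name and sample values are hypothetical.

```python
from statistics import mean, pstdev


def linear_equate(measures, target_mean, target_sd):
    """Rescale measures so they have the target mean and standard deviation."""
    source_mean = mean(measures)
    source_sd = pstdev(measures)
    if source_sd == 0:
        raise ValueError("measures must vary to be equated")
    return [target_mean + target_sd * (m - source_mean) / source_sd
            for m in measures]


# Hypothetical Rasch ability measures (logits) and raw percentage scores.
logits = [-1.0, 0.0, 1.0, 2.0]
raw_percents = [70.0, 78.0, 86.0, 94.0]
equated = linear_equate(logits, mean(raw_percents), pstdev(raw_percents))
```

The equated values share the percentage scale of the raw scores, so the same letter-grade cutoffs can be applied to both and the resulting grades compared.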

In the analysis of the 2008-2009 data, the interrater reliability for the letter grades when comparing the 2 independent faculty evaluations for each presentation was 0.98 by Cohen's kappa. However, using the 3-facet MFRM revealed significant variation in grading. The interaction of evaluator leniency with student ability and item difficulty was significant (chi-square, p < 0.01). As well, the MFRM showed a reliability of 0.77, with a separation of 1.85 (ie, almost 2 groups of evaluators). The MFRM student ability measures were scaled to letter grades and compared with course letter grades. As a result, 2 B's became A's and so evaluator leniency accounted for a 2% change in letter grades (ie, 2 of 98 grades).
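For readers unfamiliar with Cohen's kappa, the statistic compares the observed agreement between 2 raters with the agreement expected by chance from each rater's category frequencies. The following is a minimal unweighted implementation with hypothetical letter grades; it is a sketch of the standard formula, not the authors' analysis code.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters' categorical ratings."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must supply equal-length, non-empty ratings")
    n = len(rater_a)
    # Proportion of presentations on which both raters gave the same grade.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal grade frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # convention used here when both raters use one identical category
    return (observed - expected) / (1 - expected)


# Hypothetical letter grades from 2 evaluators for 4 presentations.
grades_a = ["A", "A", "B", "B"]
grades_b = ["A", "A", "B", "C"]
kappa = cohens_kappa(grades_a, grades_b)
```

Kappa computed on letter grades only registers whether both evaluators landed in the same grade band. An evaluator who rates consistently lower within a band leaves kappa untouched, which may be why a high kappa and detectable evaluator leniency can coexist.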

Validity and Grading

Explicit criterion-referenced standards for grading are recommended for higher evaluation validity. 3,16-18 The course coordinator completed 3 additional evaluations of a hypothetical student presentation rating the minimal criteria expected to describe each of an A, B, or C letter grade performance. These evaluations were placed with the other 196 evaluations (2 evaluators × 98 students) from 2008-2009 into the MFRM, with the resulting analysis report giving specific cutoff percentage scores for each letter grade. Unlike the traditional scoring method of assigning all items an equal weight, the MFRM ordered evaluation items from those more difficult for students (given more weight) to those less difficult for students (given less weight). These criterion-referenced letter grades were compared with the grades generated using the traditional grading process.

When the MFRM data were rerun with the criterion-referenced evaluations added into the dataset, a 10% change was seen with letter grades (ie, 10 of 98 grades). When the 10 letter grades were lowered, 1 was below a C, the minimum standard, and suggested a failing performance. Qualitative feedback from faculty evaluators agreed with this suggested criterion-referenced performance failure.

Measurement Model

Within modern test theory, the Rasch Measurement Model maps examinee ability with evaluation item difficulty. Items are not arbitrarily given the same value (ie, 1 point) but vary based on how difficult or easy the items were for examinees. The Rasch measurement model has been used frequently in educational research, 19 by numerous high-stakes testing professional bodies such as the National Board of Medical Examiners, 20 and also by various state-level departments of education for standardized secondary education examinations. 21 The Rasch measurement model itself has rigorous construct validity and reliability. 22 A 3-facet MFRM model allows an evaluator variable to be added to the student ability and item difficulty variables that are routine in other Rasch measurement analyses. Just as multiple regression accounts for additional variables in analysis compared to a simple bivariate regression, the MFRM is a multiple variable variant of the Rasch measurement model and was applied in this study using the Facets software (Linacre, Chicago, IL). The MFRM is ideal for performance-based evaluations with the addition of independent evaluator/judges. 8 , 23 From both yearly cohorts in this investigation, evaluation rubric data were collated and placed into the MFRM for separate though subsequent analyses. Within the MFRM output report, a chi-square for a difference in evaluator leniency was reported with an alpha of 0.05.
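For reference, the 3-facet model is commonly written in the Rasch literature in the log-odds form below. The article itself does not reproduce the equation, so the notation is the conventional one and should be read as background.

```latex
\[
\ln\!\left(\frac{P_{nijk}}{P_{nij(k-1)}}\right) = B_n - D_i - C_j - F_k
\]
```

Here P_{nijk} is the probability that student n receives rating category k on item i from evaluator j, B_n is the student's ability, D_i is the item's difficulty, C_j is the evaluator's severity, and F_k is the difficulty of being rated in category k as opposed to category k-1. Evaluator leniency enters through C_j, which is how the model can separate a harsh evaluator from a weak performance.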

The presentation rubric was reliable. Results from the 2007-2008 analysis illustrated that the number of rating scale categories impacted the reliability of this rubric and that use of only 4 rating scale categories appeared best for measurement. While a 10-point Likert-like scale may commonly be used in patient care settings, such as in quantifying pain, most people cannot process more than 7 points or categories reliably. 24 Presumably, when more than 7 categories are used, the categories beyond 7 either are not used or are collapsed by respondents into fewer than 7 categories. Five-point scales commonly are encountered, but use of an odd number of categories can be problematic to interpretation and is not recommended. 25 Responses using the middle category could denote a true perceived average or neutral response or responder indecisiveness or even confusion over the question. Therefore, removing the middle category appears advantageous and is supported by our results.

With 2008-2009 data, the MFRM identified evaluator leniency with some evaluators grading more harshly while others were lenient. Evaluator leniency was indeed found in the dataset but only a couple of changes were suggested based on the MFRM-corrected evaluator leniency and did not appear to play a substantial role in the evaluation of this course at this time.

Performance evaluation instruments are either holistic or analytic rubrics. 26 The evaluation instrument used in this investigation exemplified an analytic rubric, which elicits specific observations and often demonstrates high reliability. However, Norman and colleagues point out a conundrum where drastically increasing the number of evaluation rubric items (creating something similar to a checklist) could augment a reliability coefficient though it appears to dissociate from that evaluation rubric's validity. 27 Validity may be more than the sum of behaviors on evaluation rubric items. 28 Having numerous, highly specific evaluation items appears to undermine the rubric's function. With this investigation's evaluation rubric and its numerous items for both presentation style and presentation content, equal numeric weighting of items can in fact allow student presentations to receive a passing score while falling short of the course objectives, as was shown in the present investigation. As opposed to analytic rubrics, holistic rubrics often demonstrate lower yet acceptable reliability, while offering a higher degree of explicit connection to course objectives. A summative, holistic evaluation of presentations may improve validity by allowing expert evaluators to provide their “gut feeling” as experts on whether a performance is “outstanding,” “sufficient,” “borderline,” or “subpar” for dimensions of presentation delivery and content. A holistic rubric that integrates with criteria of the analytic rubric (Figure 1) for evaluators to reflect on but maintains a summary, overall evaluation for each dimension (delivery/content) of the performance, may allow for benefits of each type of rubric to be used advantageously. This finding has been demonstrated with OSCEs in medical education where checklists for completed items (ie, yes/no) at an OSCE station have been successfully replaced with a few reliable global impression rating scales. 29-31

Alternatively, and because the MFRM model was used in the current study, an items-weighting approach could be used with the analytic rubric. That is, item weighting based on the difficulty of each rubric item could suggest how many points should be given for that rubric item (eg, some items would be worth 0.25 points, while others would be worth 0.5 points or 1 point; Table 2). As could be expected, the more complex the rubric scoring becomes, the less feasible the rubric is to use. This was the main reason why this revision approach was not chosen by the course coordinator following this study. As well, it does not address the conundrum that the performance may be more than the summation of behavior items in the Figure 1 rubric. This current study cannot suggest which approach would be better as each would have its merits and pitfalls.
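A difficulty-weighted score of this kind could be computed as in the sketch below. The weights, the item ratings, and the premise that content items carry the larger weights are all hypothetical, chosen only to show how weighting can change an outcome; the weights the analysis actually suggested belong to Table 2.

```python
def weighted_percent(ratings, weights, max_rating=4.0):
    """Percentage score in which each item counts in proportion to its weight."""
    if len(ratings) != len(weights) or not ratings:
        raise ValueError("ratings and weights must be equal-length and non-empty")
    earned = sum(w * r / max_rating for r, w in zip(ratings, weights))
    available = sum(weights)
    return 100.0 * earned / available


# Hypothetical 4-item rubric: 2 delivery items rated 4, 2 content items rated 2.
ratings = [4, 4, 2, 2]
equal_weights = [1, 1, 1, 1]
difficulty_weights = [0.25, 0.25, 1, 1]  # assumed: content items weighted more

equal_score = weighted_percent(ratings, equal_weights)
weighted_score = weighted_percent(ratings, difficulty_weights)
```

With equal weights this hypothetical presentation earns 75%, a passing C on the course scale, while the weighted version yields 60%, a failing score. That mirrors the faculty concern that strong delivery could carry weak content over the passing line.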

Table 2. Rubric Item Weightings Suggested in the 2008-2009 Data Many-Facet Rasch Measurement Analysis

Regardless of which approach is used, alignment of the evaluation rubric with the course objectives is imperative. Objectivity has been described as a general striving for value-free measurement (ie, free of the evaluator's interests, opinions, preferences, sentiments). 27 This is a laudable goal pursued through educational research. Strategies to reduce measurement error, termed objectification, may not necessarily lead to increased objectivity. 27 The current investigation suggested that a rubric could become too explicit if all the possible areas of an oral presentation that could be assessed (ie, objectification) were included. This appeared to dilute the effect of important items and lose validity. A holistic rubric that is more straightforward and easier to score quickly may be less likely to lose validity (ie, “lose the forest for the trees”), though operationalizing a revised rubric would need to be investigated further. Similarly, weighting items in an analytic rubric based on their importance and difficulty for students may alleviate this issue; however, adding up individual items might prove arduous. While the rubric in Figure 1, which has evolved over the years, is the subject of ongoing revisions, it appears a reliable rubric on which to build.

The major limitation of this study involves the observational method that was employed. Although the 2 cohorts were from a single institution, investigators did use a completely separate class of PharmD students to verify initial instrument revisions. Optimizing the rubric's rating scale involved collapsing data from misuse of a 4-category rating scale (expanded by a few of the evaluators to 7 categories) into 4 independent categories without middle ratings. As a result of the study findings, no actual grading adjustments were made for students in the 2008-2009 presentation course; however, adjustments using the MFRM have been suggested by Roberts and colleagues. 13 Since 2008-2009, the course coordinator has made further small revisions to the rubric based on feedback from evaluators, but these have not yet been re-analyzed with the MFRM.

The evaluation rubric used in this study for student performance evaluations showed high reliability and the data analysis agreed with using 4 rating scale categories to optimize the rubric's reliability. While lenient and harsh faculty evaluators were found, variability in evaluator scoring affected grading in this course only minimally. Aside from reliability, issues of validity were raised using criterion-referenced grading. Future revisions to this evaluation rubric should reflect these criterion-referenced concerns. The rubric analyzed herein appears a suitable starting point for reliable evaluation of PharmD oral presentations, though it has limitations that could be addressed with further attention and revisions.

ACKNOWLEDGEMENT

Author contributions— MJP and EGS conceptualized the study, while MJP and GES designed it. MJP, EGS, and GES gave educational content foci for the rubric. As the study statistician, MJP analyzed and interpreted the study data. MJP reviewed the literature and drafted a manuscript. EGS and GES critically reviewed this manuscript and approved the final version for submission. MJP accepts overall responsibility for the accuracy of the data, its analysis, and this report.

Rubric for Oral Presentation Criteria Points 01 23 Content Knowledge The student cannot answer questions about the presentation. The student can answer rudimentary questions, but is unable to elaborate. The student answers questions without elaboration. The student's answers show mastery of the subject with full explanations. Delivery

The goal of this rubric is to identify and assess elements of research presentations, including delivery strategies and slide design. • Self-assessment: Record yourself presenting your talk using your computer's pre-downloaded recording software or by using the coach in Microsoft PowerPoint. Then review your recording, fill in the rubric ...

This rubric is designed to help you evaluate the organization, design, and delivery of standard research talks and other oral presentations. Here are some ways to use it: Distribute the rubric to colleagues before a dress rehearsal of your talk. Use the rubric to collect feedback and improve your presentation and delivery.

The rubric allows teachers to assess students in several key areas of oral presentation. Students are scored on a scale of 1-4 in three major areas. The first area is Delivery, which includes eye contact, and voice inflection. The second area, Content/Organization, scores students based on their knowledge and understanding of the topic being ...

Creating Rubrics. Examples of criteria that have been included in rubrics for evaluation oral presentations include: Knowledge of content. Organization of content. Presentation of ideas. Research/sources. Visual aids/handouts. Language clarity. Grammatical correctness.

Problematic No organization apparent; content of presentation reflects interests of speaker but not of audience; inappropriate . use of language . Structure: Does the organization reflect the purpose of the presentation and the needs of the audience? ... Rubric: Professional Presentations Author: Breslow, Lori Created Date: 9/3/2005 5:54:23 PM ...

Oral Presentation Rubric Criteria Unsuccessful Somewhat Successful Mostly Successful Successful Claim Claim is clearly and There is no claim, or claim is so confusingly worded that audience cannot discern it. Claim is present/implied but too late or in a confusing manner, and/or there are significant mismatches between claim and argument/evidence.

Creating and Using Rubrics. A rubric describes the criteria that will be used to evaluate a specific task, such as a student writing assignment, poster, oral presentation, or other project. Rubrics allow instructors to communicate expectations to students, allow students to check in on their progress mid-assignment, and can increase the ...

Oral Presentation Rubric Exemplary Proficient Developing Novice PRESENTATION CONTENT Introduction Introduced topic, established rapport and explained the purpose of presentation in creative, clear way capturing attention. Introduced presentation in clear way. Started with a self introduction or "My topic is" before capturing attention.

Create a second list to the side of the board, called "Let it slide," asking students what, as a class, they should "let slide" in the oral presentations. Guide and elaborate, choosing whether to reject, accept, or compromise on the students' proposals. Distribute the two lists to students as-is as a checklist-style rubric or flesh ...

Content : Introduction is attention-getting, lays out the problem well, and establishes a framework for the rest of the presentation. ... Scoring Rubric for Oral Presentations: Example #1 Author: Testing and Evaluation Services Created Date: 8/10/2017 9:45:03 AM ...

Audience members have difficulty hearing presentation. Presenter mumbles, talks very fast, and speaks too quietly for a majority of students to hear & understand. Timing 4 - Exceptional 3 - Admirable 2 - Acceptable 1 - Poor. Length of Presentation Within two minutes of allotted time +/-. Within four minutes of allotted time +/-.

Oral Presentation Evaluation Rubric, Formal Setting . PRESENTER: Non-verbal skills (Poise) 5 4 3 2 1 Comfort Relaxed, easy presentation with minimal hesitation Generally ... Content. 5 4 3 2 1 Information Well -versed in subject, responds to questions with further explanation Overall command of subject matter,

3 points - Mostly organized, but loses focus once or twice. 2 points - Somewhat organized, but loses focus 3 or more times. 1 point - No clear organization to the presentation. ) Content: currency & relevance. 4 points - Incorporates relevant course concepts into presentation where appropriate. 3 points - Incorporates several course ...

Step 7: Create your rubric. Create your rubric in a table or spreadsheet in Word, Google Docs, Sheets, etc., and then transfer it by typing it into Moodle. You can also use online tools to create the rubric, but you will still have to type the criteria, indicators, levels, etc., into Moodle.

An oral presentation allows the student to demonstrate content knowledge by presenting the findings of an inquiry to an audience. The audience may include students in the class and other classes, parents and relatives, and community members. Presenting allows the student to explain the methods that were used, to report the results of the ...

Oral Presentation Rubric. Holds attention of entire audience with the use of direct eye contact, seldom looking at notes. Consistent use of direct eye contact with audience, but still returns to notes. Displayed minimal eye contact with audience, while reading mostly from the notes. No eye contact with audience, as entire report is read from notes.

Group presentation rubric. This is a grading rubric an instructor uses to assess students' work on this type of assignment. It is a sample rubric that needs to be edited to reflect the specifics of a particular assignment. Students can self-assess using the rubric as a checklist before submitting their assignment.

Oral Presentation Scoring Guide, Hampden‐Sydney College. Top‐half score (4, 5, or 6): Despite differences among them, oral presentations that receive a top‐half score all demonstrate a speaker's proficiency in the use of spoken language to express an idea: the speaker chooses a topic and develops a specific purpose appropriate for the ...

This presentation rubric has been created especially for ESL classes and learners to help with appropriate scoring for presentations in class. ... Uses clear and purposeful content with ample examples to support ideas presented during the course of the presentation. Uses content which is well structured and relevant, although further examples ...

18 Biocore Oral Presentation Rubric Content. 4=excellent: With a few minor exceptions, the team clearly, concisely, & thoroughly conveyed their research project such that the audience could grasp & evaluate the work. The presentation contained all of these key components: 1. a clear, logical biological rationale summarizing research goals, key ...

Evaluation of Oral Presentation by Judges . Project Title (see program): Judge: 4-Excellent 3-Good 2-Satisfactory 1-Unsatisfactory Content • Score •Addresses all specified content areas. •Material abundantly supports the project. •Use of engineering terms and jargon matches audience knowledge level. Addresses most content areas.