Psychological Experimental Design

- Living reference work entry

- First Online: 15 February 2024

- Zhang Houcan

- He Dongjun

Further Reading

Kantowitz BH, Roediger HL, Elmes DG (2015) Experimental psychology, 10th edn. Cengage Learning, Boston

Zhang X-M, Hua S (2014) Experimental psychology. Beijing Normal University Publishing Group, Beijing

Author information

Authors and affiliations.

Faculty of Psychology, Beijing Normal University, Beijing, China

Zhang Houcan

School of Psychology, Chengdu Medical University, Chengdu, China

Corresponding author

Correspondence to He Dongjun.

Copyright information

© 2024 Encyclopedia of China Publishing House

About this entry

Cite this entry.

Houcan, Z., Dongjun, H. (2024). Psychological Experimental Design. In: The ECPH Encyclopedia of Psychology. Springer, Singapore. https://doi.org/10.1007/978-981-99-6000-2_490-1

DOI: https://doi.org/10.1007/978-981-99-6000-2_490-1

Received: 04 January 2024

Accepted: 05 January 2024

Published: 15 February 2024

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-6000-2

Online ISBN: 978-981-99-6000-2

eBook Packages: Springer Reference Behavioral Science and Psychology; Reference Module Humanities and Social Sciences; Reference Module Business, Economics and Social Sciences

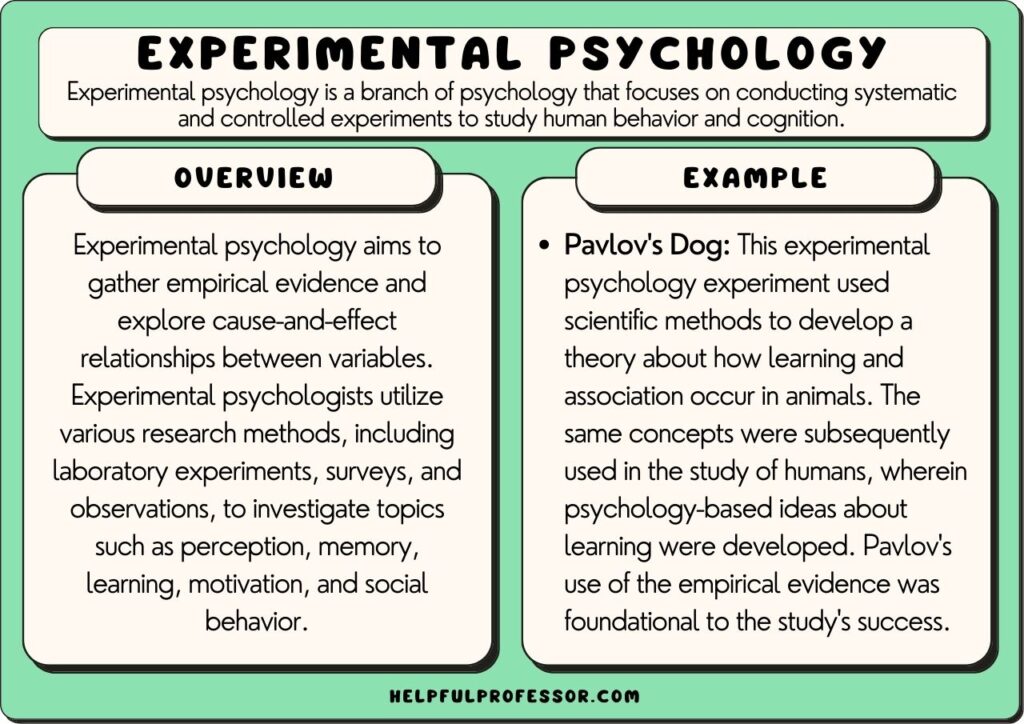

Experimental Psychology: 10 Examples & Definition

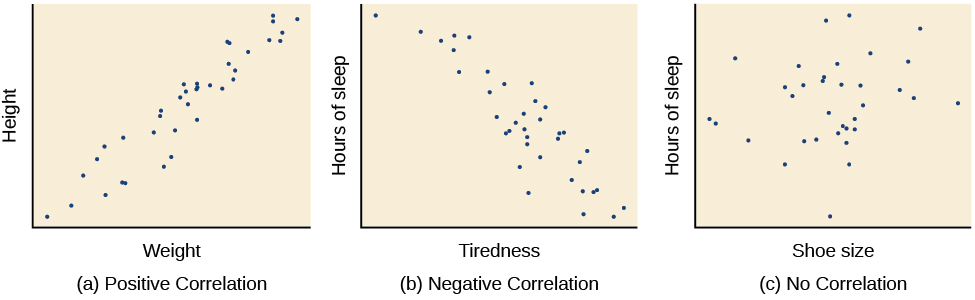

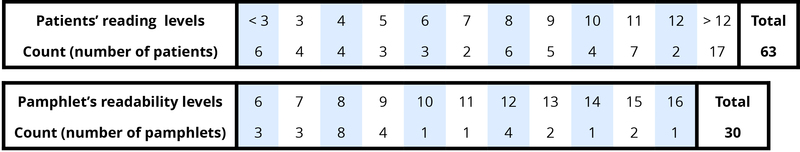

Experimental psychology refers to studying psychological phenomena using scientific methods. Originally, the primary scientific method involved manipulating one variable and observing systematic changes in another variable.

Today, psychologists utilize several types of scientific methodologies.

Experimental psychology examines a wide range of psychological phenomena, including memory, sensation and perception, cognitive processes, motivation, emotion, and developmental processes, as well as the neurophysiological concomitants of each.

Studies are conducted on both animal and human participants, and must comply with stringent requirements and controls regarding the ethical treatment of both.

Definition of Experimental Psychology

Experimental psychology is a branch of psychology that utilizes scientific methods to investigate the mind and behavior.

It involves the systematic and controlled study of human and animal behavior through observation and experimentation.

Experimental psychologists design and conduct experiments to understand cognitive processes, perception, learning, memory, emotion, and many other aspects of psychology. They often manipulate variables (independent variables) to see how this affects behavior or mental processes (dependent variables).

The findings from experimental psychology research are often used to better understand human behavior and can be applied in a range of contexts, such as education, health, business, and more.

Experimental Psychology Examples

1. The Puzzle Box Studies (Thorndike, 1898) Placing different cats in a box that can only be escaped by pulling a cord, and then taking detailed notes on how long it took for them to escape allowed Edward Thorndike to derive the Law of Effect: actions followed by positive consequences are more likely to occur again, and actions followed by negative consequences are less likely to occur again (Thorndike, 1898).

2. Reinforcement Schedules (Skinner, 1956) By placing rats in a Skinner Box and changing when and how often the rats are rewarded for pressing a lever, it is possible to identify how each schedule results in different behavior patterns (Skinner, 1956). This led to a wide range of theoretical ideas around how rewards and consequences can shape the behaviors of both animals and humans.

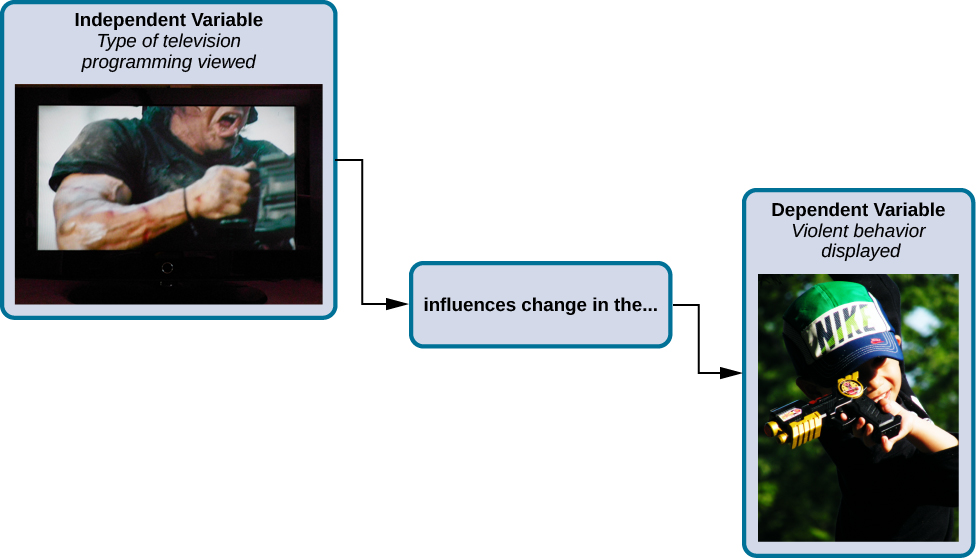

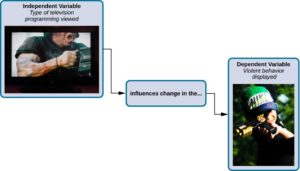

3. Observational Learning (Bandura, 1980) Some children watch a video of an adult punching and kicking a Bobo doll. Other children watch a video in which the adult plays nicely with the doll. By carefully observing the children’s behavior later when in a room with a Bobo doll, researchers can determine if television violence affects children’s behavior (Bandura, 1980).

4. The Fallibility of Memory (Loftus & Palmer, 1974) A group of participants watch the same video of two cars having an accident. Afterward, some are asked to estimate how fast the cars were going when they “smashed” into each other. Others are asked to estimate how fast the cars were going when they “bumped” into each other. Changing the phrasing of the question changes the memory of the eyewitness.

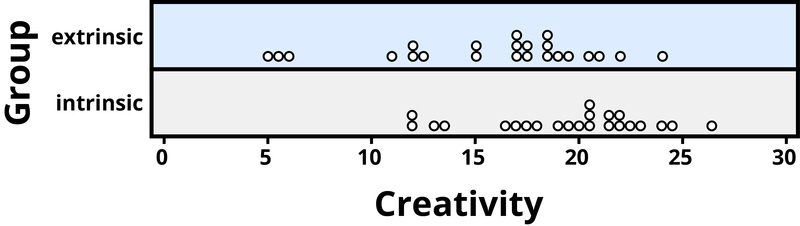

5. Intrinsic Motivation in the Classroom (Dweck, 1990) To investigate the role of autonomy in intrinsic motivation, half of the students are told they are “free to choose” which tasks to complete. The other half of the students are told they “must choose” some of the tasks. Researchers then carefully observe how long the students engage in the tasks and later ask them whether they enjoyed doing the tasks.

6. Systematic Desensitization (Wolpe, 1958) A clinical psychologist carefully documents his treatment of a patient’s social phobia with progressive relaxation. At first, the patient is trained to monitor, tense, and relax various muscle groups while viewing photos of parties. Weeks later, they approach a stranger to ask for directions, initiate a conversation on a crowded bus, and attend a small social gathering. The therapist’s notes are transcribed into a scientific report and published in a peer-reviewed journal.

7. Study of Remembering (Bartlett, 1932) Bartlett’s work is a seminal study in the field of memory, where he used the concept of “schema” to describe an organized pattern of thought or behavior. He conducted a series of experiments using folk tales to show that memory recall is influenced by cultural schemas and personal experiences.

8. Study of Obedience (Milgram, 1963) This famous study explored the conflict between obedience to authority and personal conscience. Milgram found that a majority of participants were willing to administer what they believed were harmful electric shocks to a stranger when instructed by an authority figure, highlighting the power of authority and situational factors in driving behavior.

9. Pavlov’s Dog Study (Pavlov, 1927) Ivan Pavlov, a Russian physiologist, conducted a series of experiments that became a cornerstone in the field of experimental psychology. Pavlov noticed that dogs would salivate when they saw food. He then began to ring a bell each time he presented the food to the dogs. After a while, the dogs began to salivate merely at the sound of the bell. This experiment demonstrated the principle of “classical conditioning.”

10. Piaget’s Stages of Development (Piaget, 1958) Jean Piaget proposed a theory of cognitive development in children that consists of four distinct stages, from the sensorimotor stage (birth to 2 years), where children learn about the world through their senses and motor activities, through to the formal operational stage (12 years and beyond), where abstract reasoning and hypothetical thinking develop. Piaget’s theory is an example of experimental psychology as it was developed through systematic observation and experimentation on children’s problem-solving behaviors.

Types of Research Methodologies in Experimental Psychology

Researchers have utilized several different types of research methodologies since the early days of Wundt (1832-1920).

1. The Experiment

The experiment involves the researcher manipulating the level of one variable, called the Independent Variable (IV), and then observing changes in another variable, called the Dependent Variable (DV).

The researcher is interested in determining if the IV causes changes in the DV. For example, does television violence make children more aggressive?

Some children in the study, called research participants, will watch a show with TV violence; they are called the treatment group. Others will watch a show with no TV violence; they are called the control group.

So, there are two levels of the IV: violence and no violence. Next, children will be observed to see if they act more aggressively. This is the DV.

If TV violence makes children more aggressive, then the children who watched the violent show will be more aggressive than the children who watched the non-violent show.

A key requirement of the experiment is random assignment. Each research participant is assigned to one of the two groups in a way that makes it a completely random process. This means that each group will have a mix of children: different personality types, diverse family backgrounds, and a range of intelligence levels.

2. The Longitudinal Study

A longitudinal study involves selecting a sample of participants and then following them for years, or decades, periodically collecting data on the variables of interest.

For example, a researcher might be interested in determining if parenting style affects academic performance of children. Parenting style is called the predictor variable, and academic performance is called the outcome variable.

Researchers will begin by randomly selecting a group of children to be in the study. Then, they will identify the type of parenting practices used when the children are 4 and 5 years old.

A few years later, perhaps when the children are 8 and 9, the researchers will collect data on their grades. This process can be repeated over the next 10 years, including through college.

If parenting style has an effect on academic performance, then the researchers will see a connection between the predictor variable and outcome variable.

Children raised with parenting style X will have higher grades than children raised with parenting style Y.

3. The Case Study

The case study is an in-depth study of one individual. This is a research methodology often used early in the examination of a psychological phenomenon or therapeutic treatment.

For example, in the early days of treating phobias, a clinical psychologist may try teaching one of their patients how to relax every time they see the object that creates so much fear and anxiety, such as a large spider.

The therapist would take very detailed notes on how the teaching process was implemented and the reactions of the patient. When the treatment had been completed, those notes would be written in a scientific form and submitted for publication in a scientific journal for other therapists to learn from.

There are several other types of methodologies available which vary different aspects of the three described above. The researcher will select a methodology that is most appropriate to the phenomenon they want to examine.

They also must take into account various practical considerations such as how much time and resources are needed to complete the study. Conducting research always costs money.

People and equipment are needed to carry out every study, so researchers often try to obtain funding from their university or a government agency.

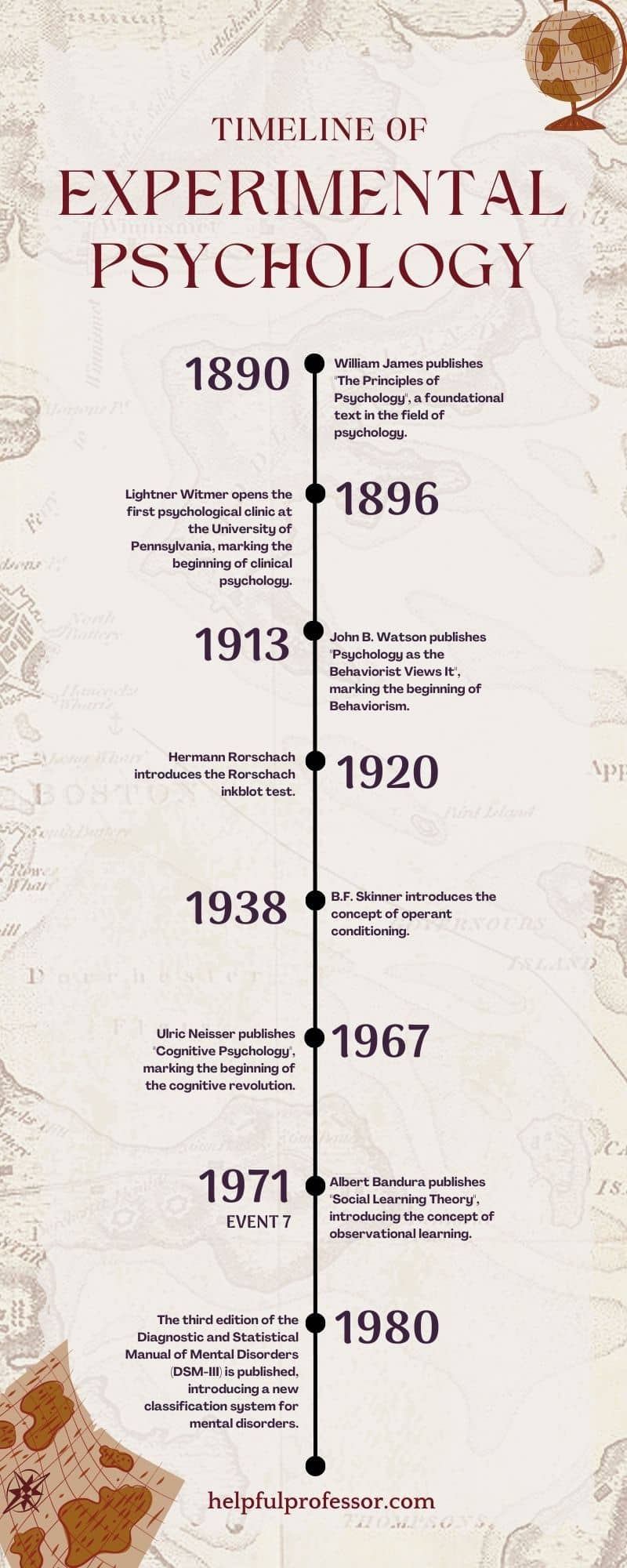

Origins and Key Developments in Experimental Psychology

Wilhelm Maximilian Wundt (1832-1920) is considered one of the fathers of modern psychology. He was a physiologist and philosopher and helped establish psychology as a distinct discipline (Khaleefa, 1999).

In 1879 he established the world’s first psychology research lab at the University of Leipzig. This is considered a key milestone for establishing psychology as a scientific discipline. In addition to being the first person to use the term “psychologist” to describe himself, he also founded the discipline’s first scientific journal, Philosophische Studien, in 1883.

Another notable figure in the development of experimental psychology is Ernst Weber. Trained as a physician, Weber studied sensation and perception and created the first quantitative law in psychology.

The equation denotes how judgments of sensory differences are relative to previous levels of sensation, referred to as the just-noticeable difference (jnd). This is known today as Weber’s Law (Hergenhahn, 2009).
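The source does not print the equation itself. In its usual modern form (the symbols below are an assumed notation, not taken from the text), Weber’s Law says that the just-noticeable difference, written ΔI, is a constant proportion k of the baseline stimulus intensity I:

```latex
\frac{\Delta I}{I} = k
```

As an illustration with a purely hypothetical constant of k = 0.02 for lifted weights, a 100 g weight would need to change by about 2 g to be noticed, while a 200 g weight would need to change by about 4 g.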

Gustav Fechner, one of Weber’s students, published the first book on experimental psychology in 1860, titled Elemente der Psychophysik. His work centered on the measurement of psychophysical facets of sensation and perception, with many of his methods still in use today.

The first American textbook on experimental psychology was Elements of Physiological Psychology, published in 1887 by George Trumbull Ladd.

Ladd also established a psychology lab at Yale University, while Stanley Hall and Charles Sanders Peirce continued Wundt’s work at a lab at Johns Hopkins University.

Peirce’s contribution to experimental psychology in the late 1800s is especially noteworthy because he invented the concept of random assignment (Stigler, 1992; Dehue, 1997).

Random assignment ensures that each participant has an equal chance of being placed in any of the experimental groups (e.g., treatment or control group). On average, this balances participants’ inherent characteristics across groups, which minimizes their influence as confounding factors.

Random assignment is a fundamental criterion for a study to be considered a valid experiment.

From there, experimental psychology flourished in the 20th century as a science and transformed into an approach utilized in cognitive psychology, developmental psychology, and social psychology.

Today, the term experimental psychology refers to the study of a wide range of phenomena and involves methodologies not limited to the manipulation of variables.

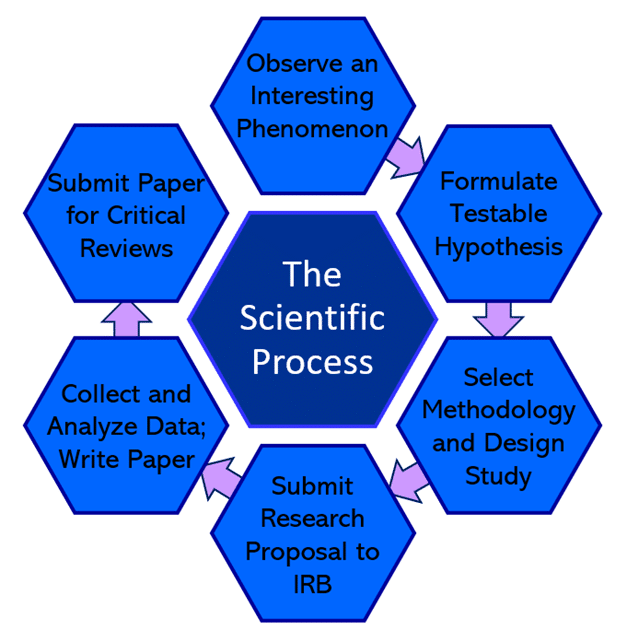

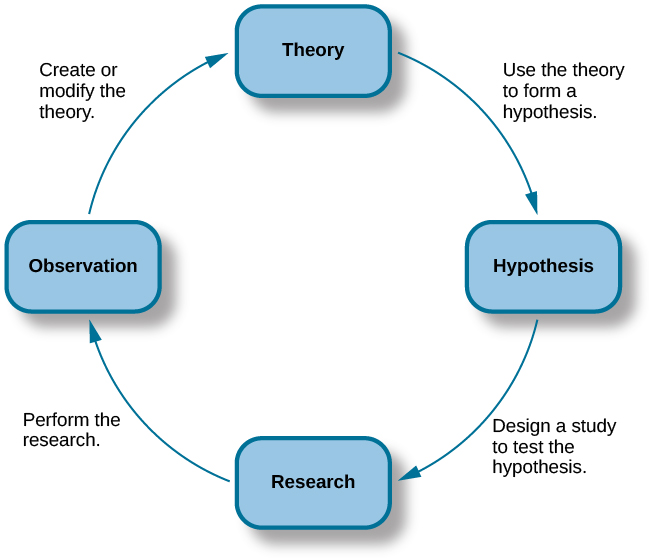

The Scientific Process and Experimental Psychology

The one thing that makes psychology a science and distinguishes it from its roots in philosophy is the reliance upon the scientific process to answer questions. Making psychology a science was the main goal of its earliest founders, such as Wilhelm Wundt.

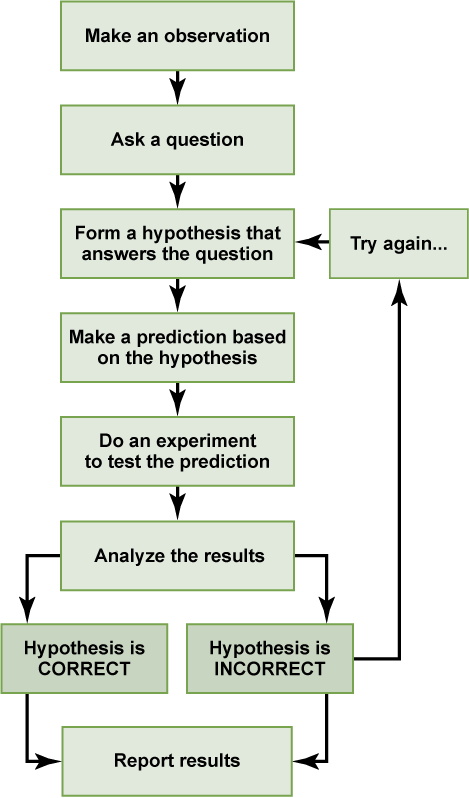

There are numerous steps in the scientific process, outlined in the graphic below.

1. Observation

First, the scientist observes an interesting phenomenon that sparks a question. For example, are the memories of eyewitnesses really reliable, or are they subject to bias or unintentional manipulation?

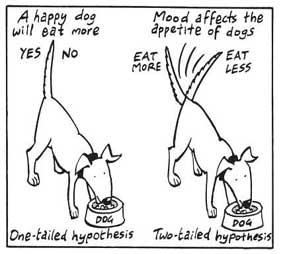

2. Hypothesize

Next, this question is converted into a testable hypothesis. For instance: the words used to question a witness can influence what they think they remember.

3. Devise a Study

Then the researcher(s) select a methodology that will allow them to test that hypothesis. In this case, the researchers choose the experiment, which will involve randomly assigning some participants to different conditions.

In one condition, participants are asked a question that implies a certain memory (treatment group), while other participants are asked a question which is phrased neutrally and does not imply a certain memory (control group).

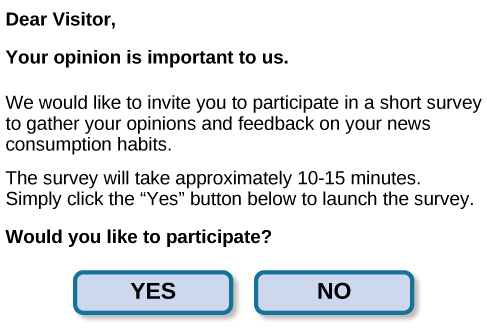

The researchers then write a proposal that describes in detail the procedures they want to use, how participants will be selected, and the safeguards they will employ to ensure the rights of the participants.

That proposal is submitted to an Institutional Review Board (IRB). The IRB is comprised of a panel of researchers, community representatives, and other professionals that are responsible for reviewing all studies involving human participants.

4. Conduct the Study

If the IRB accepts the proposal, then the researchers may begin collecting data. After the data has been collected, it is analyzed using a software program such as SPSS.

Those analyses will either support or fail to support the hypothesis. That is, either the participants’ memories were affected by the wording of the question, or they were not.
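To make the analysis step concrete, here is a minimal sketch of one way to compare two conditions. It uses a permutation test, which is a swapped-in technique chosen for illustration; the text only mentions SPSS and does not say which test the researchers would run. The function name `permutation_p_value` and the speed estimates are hypothetical.

```python
import random
import statistics

def permutation_p_value(group_a, group_b, n_permutations=5000, seed=0):
    """Estimate how often a random relabeling of participants produces a
    mean difference at least as large as the one actually observed."""
    rng = random.Random(seed)
    observed = abs(statistics.mean(group_a) - statistics.mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # pretend the condition labels were arbitrary
        diff = abs(statistics.mean(pooled[:n_a]) - statistics.mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    return at_least_as_extreme / n_permutations

# Hypothetical speed estimates (mph); illustrative values only, not real data.
leading_question = [41, 38, 44, 40, 43, 39, 42, 45]
neutral_question = [34, 36, 31, 35, 33, 37, 32, 30]

p_value = permutation_p_value(leading_question, neutral_question)
print(f"Estimated p-value: {p_value:.4f}")
```

A small p-value would count as support for the hypothesis that question wording affects the estimates; a large one would mean the data do not support it.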

5. Publish the study

Finally, the researchers write a paper detailing their procedures and results of the statistical analyses. That paper is then submitted to a scientific journal.

The lead editor of that journal will then send copies of the paper to 3-5 experts in that subject. Each of those experts will read the paper and basically try to find as many things wrong with it as possible. Because they are experts, they are very good at this task.

After reading those critiques, the editor will most likely either send the paper back to the researchers, requiring that they respond to the criticisms or collect more data, or reject the paper outright.

In some cases, the study was so well-done that the criticisms were minimal and the editor accepts the paper. It then gets published in the scientific journal several months later.

That entire process can easily take 2 years, usually more. But, the findings of that study went through a very rigorous process. This means that we can have substantial confidence that the conclusions of the study are valid.

Conclusion

Experimental psychology refers to utilizing a scientific process to investigate psychological phenomena.

There are a variety of methods employed today. They are used to study a wide range of subjects, including memory, cognitive processes, emotions and the neurophysiological basis of each.

The history of psychology as a science began in the 1800s primarily in Germany. As interest grew, the field expanded to the United States where several influential research labs were established.

As more methodologies were developed, the field of psychology as a science evolved into a prolific scientific discipline that has provided invaluable insights into human behavior.

References

Bartlett, F. C. (1995). Remembering: A study in experimental and social psychology. Cambridge University Press.

Dehue, T. (1997). Deception, efficiency, and random groups: Psychology and the gradual origination of the random group design. Isis, 88(4), 653-673.

Ebbinghaus, H. (2013). Memory: A contribution to experimental psychology. Annals of Neurosciences, 20(4), 155.

Hergenhahn, B. R. (2009). An introduction to the history of psychology. Belmont, CA: Wadsworth Cengage Learning.

Khaleefa, O. (1999). Who is the founder of psychophysics and experimental psychology? American Journal of Islam and Society, 16(2), 1-26.

Loftus, E. F., & Palmer, J. C. (1974). Reconstruction of automobile destruction: An example of the interaction between language and memory. Journal of Verbal Learning and Verbal Behavior, 13, 585-589.

Pavlov, I. P. (1927). Conditioned reflexes. Dover, New York.

Piaget, J. (1959). The language and thought of the child (Vol. 5). Psychology Press.

Piaget, J., Fraisse, P., & Reuchlin, M. (2014). Experimental psychology its scope and method: Volume I (Psychology Revivals): History and method. Psychology Press.

Skinner, B. F. (1956). A case history in scientific method. American Psychologist, 11, 221-233.

Stigler, S. M. (1992). A historical view of statistical concepts in psychology and educational research. American Journal of Education, 101(1), 60-70.

Thorndike, E. L. (1898). Animal intelligence: An experimental study of the associative processes in animals. Psychological Review Monograph Supplement 2.

Wolpe, J. (1958). Psychotherapy by reciprocal inhibition. Stanford, CA: Stanford University Press.

Appendix: Images reproduced as Text

Definition: Experimental psychology is a branch of psychology that focuses on conducting systematic and controlled experiments to study human behavior and cognition.

Overview: Experimental psychology aims to gather empirical evidence and explore cause-and-effect relationships between variables. Experimental psychologists utilize various research methods, including laboratory experiments, surveys, and observations, to investigate topics such as perception, memory, learning, motivation, and social behavior.

Example: Pavlov’s dog experiment used scientific methods to develop a theory about how learning and association occur in animals. The same concepts were subsequently used in the study of humans, wherein psychology-based ideas about learning were developed. Pavlov’s use of empirical evidence was foundational to the study’s success.

Experimental Psychology Milestones:

1890: William James publishes “The Principles of Psychology”, a foundational text in the field of psychology.

1896: Lightner Witmer opens the first psychological clinic at the University of Pennsylvania, marking the beginning of clinical psychology.

1913: John B. Watson publishes “Psychology as the Behaviorist Views It”, marking the beginning of Behaviorism.

1920: Hermann Rorschach introduces the Rorschach inkblot test.

1938: B.F. Skinner introduces the concept of operant conditioning.

1967: Ulric Neisser publishes “Cognitive Psychology”, marking the beginning of the cognitive revolution.

1980: The third edition of the Diagnostic and Statistical Manual of Mental Disorders (DSM-III) is published, introducing a new classification system for mental disorders.

The Scientific Process

- Observe an interesting phenomenon

- Formulate testable hypothesis

- Select methodology and design study

- Submit research proposal to IRB

- Collect and analyze data; write paper

- Submit paper for critical reviews

Dave Cornell (PhD)

Dr. Cornell has worked in education for more than 20 years. His work has involved designing teacher certification for Trinity College in London and in-service training for state governments in the United States. He has trained kindergarten teachers in 8 countries and helped businessmen and women open baby centers and kindergartens in 3 countries.

Chris Drew (PhD)

This article was peer-reviewed and edited by Chris Drew (PhD). The review process on Helpful Professor involves having a PhD level expert fact check, edit, and contribute to articles. Reviewers ensure all content reflects expert academic consensus and is backed up with reference to academic studies. Dr. Drew has published over 20 academic articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education and holds a PhD in Education from ACU.

6.2 Experimental Design

Learning Objectives

- Explain the difference between between-subjects and within-subjects experiments, list some of the pros and cons of each approach, and decide which approach to use to answer a particular research question.

- Define random assignment, distinguish it from random sampling, explain its purpose in experimental research, and use some simple strategies to implement it.

- Define what a control condition is, explain its purpose in research on treatment effectiveness, and describe some alternative types of control conditions.

- Define several types of carryover effect, give examples of each, and explain how counterbalancing helps to deal with them.

In this section, we look at some different ways to design an experiment. The primary distinction we will make is between approaches in which each participant experiences one level of the independent variable and approaches in which each participant experiences all levels of the independent variable. The former are called between-subjects experiments and the latter are called within-subjects experiments.

Between-Subjects Experiments

In a between-subjects experiment, each participant is tested in only one condition. For example, a researcher with a sample of 100 college students might assign half of them to write about a traumatic event and the other half to write about a neutral event. Or a researcher with a sample of 60 people with severe agoraphobia (fear of open spaces) might assign 20 of them to receive each of three different treatments for that disorder. It is essential in a between-subjects experiment that the researcher assign participants to conditions so that the different groups are, on average, highly similar to each other. Those in a trauma condition and a neutral condition, for example, should include a similar proportion of men and women, and they should have similar average intelligence quotients (IQs), similar average levels of motivation, similar average numbers of health problems, and so on. This is a matter of controlling these extraneous participant variables across conditions so that they do not become confounding variables.

Random Assignment

The primary way that researchers accomplish this kind of control of extraneous variables across conditions is called random assignment, which means using a random process to decide which participants are tested in which conditions. Do not confuse random assignment with random sampling. Random sampling is a method for selecting a sample from a population, and it is rarely used in psychological research. Random assignment is a method for assigning participants in a sample to the different conditions, and it is an important element of all experimental research in psychology and other fields too.

In its strictest sense, random assignment should meet two criteria. One is that each participant has an equal chance of being assigned to each condition (e.g., a 50% chance of being assigned to each of two conditions). The second is that each participant is assigned to a condition independently of other participants. Thus one way to assign participants to two conditions would be to flip a coin for each one. If the coin lands heads, the participant is assigned to Condition A, and if it lands tails, the participant is assigned to Condition B. For three conditions, one could use a computer to generate a random integer from 1 to 3 for each participant. If the integer is 1, the participant is assigned to Condition A; if it is 2, the participant is assigned to Condition B; and if it is 3, the participant is assigned to Condition C. In practice, a full sequence of conditions—one for each participant expected to be in the experiment—is usually created ahead of time, and each new participant is assigned to the next condition in the sequence as he or she is tested. When the procedure is computerized, the computer program often handles the random assignment.
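The strict procedure described above can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the function name `assign_randomly`, the participant IDs, and the seed are all hypothetical. Each participant is given a condition with equal probability and independently of every other participant, which matches the two criteria.

```python
import random

def assign_randomly(participant_ids, conditions, seed=None):
    """Strict random assignment: each participant gets each condition with
    equal probability, independently of all other participants."""
    rng = random.Random(seed)  # a fixed seed makes the sequence reproducible
    return {pid: rng.choice(conditions) for pid in participant_ids}

conditions = ["A", "B", "C"]
# Hypothetical study with 12 participants numbered 1 to 12.
assignments = assign_randomly(range(1, 13), conditions, seed=2024)

for pid, condition in assignments.items():
    print(f"Participant {pid}: Condition {condition}")
```

Because each draw is independent, nothing forces the three groups to end up the same size.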

One problem with coin flipping and other strict procedures for random assignment is that they are likely to result in unequal sample sizes in the different conditions. Unequal sample sizes are generally not a serious problem, and you should never throw away data you have already collected to achieve equal sample sizes. However, for a fixed number of participants, it is statistically most efficient to divide them into equal-sized groups. It is standard practice, therefore, to use a kind of modified random assignment that keeps the number of participants in each group as similar as possible. One approach is block randomization. In block randomization, all the conditions occur once in the sequence before any of them is repeated. Then they all occur again before any of them is repeated again. Within each of these “blocks,” the conditions occur in a random order. Again, the sequence of conditions is usually generated before any participants are tested, and each new participant is assigned to the next condition in the sequence. Table 6.2 “Block Randomization Sequence for Assigning Nine Participants to Three Conditions” shows such a sequence for assigning nine participants to three conditions. The Research Randomizer website (http://www.randomizer.org) will generate block randomization sequences for any number of participants and conditions. Again, when the procedure is computerized, the computer program often handles the block randomization.

Table 6.2 Block Randomization Sequence for Assigning Nine Participants to Three Conditions
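A sequence of the kind this table describes can be generated with a short script. The sketch below is one possible approach under simple assumptions; the function name `block_randomize` and the seed are hypothetical, and the sequence it prints is just one random outcome, not the table’s original contents.

```python
import random

def block_randomize(conditions, n_participants, seed=None):
    """Build an assignment sequence in which every condition appears once,
    in random order, before any condition is repeated."""
    rng = random.Random(seed)
    sequence = []
    while len(sequence) < n_participants:
        block = list(conditions)
        rng.shuffle(block)       # random order within this block
        sequence.extend(block)
    return sequence[:n_participants]  # trim if the last block is only partly used

# Nine participants, three conditions, as in the table caption.
sequence = block_randomize(["A", "B", "C"], 9, seed=1)
for participant, condition in enumerate(sequence, start=1):
    print(f"Participant {participant}: Condition {condition}")
```

With nine participants and three conditions, each condition is used exactly three times, so the group sizes stay equal.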

Random assignment is not guaranteed to control all extraneous variables across conditions. It is always possible that just by chance, the participants in one condition might turn out to be substantially older, less tired, more motivated, or less depressed on average than the participants in another condition. However, there are some reasons that this is not a major concern. One is that random assignment works better than one might expect, especially for large samples. Another is that the inferential statistics that researchers use to decide whether a difference between groups reflects a difference in the population take the “fallibility” of random assignment into account. Yet another reason is that even if random assignment does result in a confounding variable and therefore produces misleading results, this is likely to be detected when the experiment is replicated. The upshot is that random assignment to conditions, although not infallible in terms of controlling extraneous variables, is always considered a strength of a research design.
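The claim that random assignment works better with larger samples can be checked with a small simulation. The sketch below makes several assumptions that are not in the text: ages are drawn uniformly between 18 and 65, there are two equal-sized groups, and the function names and seed are hypothetical. It measures how far apart the two groups’ mean ages end up, purely by chance.

```python
import random
import statistics

def mean_age_gap(n_per_group, rng):
    """Randomly split 2 * n_per_group simulated participants into two groups
    and return the absolute difference between the groups' mean ages."""
    ages = [rng.uniform(18, 65) for _ in range(2 * n_per_group)]  # assumed age range
    rng.shuffle(ages)  # random assignment to the two groups
    group_a, group_b = ages[:n_per_group], ages[n_per_group:]
    return abs(statistics.mean(group_a) - statistics.mean(group_b))

def average_gap(n_per_group, n_simulations=1000, seed=0):
    """Average chance imbalance in mean age over many simulated experiments."""
    rng = random.Random(seed)
    return statistics.mean(mean_age_gap(n_per_group, rng) for _ in range(n_simulations))

gaps = {n: average_gap(n) for n in (10, 50, 250)}
for n, gap in gaps.items():
    print(f"{n} per group: average gap in mean age = {gap:.2f} years")
```

Under these assumptions, the average chance difference between groups shrinks as the groups get larger.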

Treatment and Control Conditions

Between-subjects experiments are often used to determine whether a treatment works. In psychological research, a treatment is any intervention meant to change people’s behavior for the better. This includes psychotherapies and medical treatments for psychological disorders but also interventions designed to improve learning, promote conservation, reduce prejudice, and so on. To determine whether a treatment works, participants are randomly assigned to either a treatment condition, in which they receive the treatment, or a control condition, in which they do not receive the treatment. If participants in the treatment condition end up better off than participants in the control condition (for example, they are less depressed, learn faster, conserve more, or express less prejudice), then the researcher can conclude that the treatment works. In research on the effectiveness of psychotherapies and medical treatments, this type of experiment is often called a randomized clinical trial.

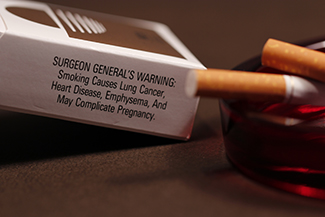

There are different types of control conditions. In a no-treatment control condition, participants receive no treatment whatsoever. One problem with this approach, however, is the existence of placebo effects. A placebo is a simulated treatment that lacks any active ingredient or element that should make it effective, and a placebo effect is a positive effect of such a treatment. Many folk remedies that seem to work, such as eating chicken soup for a cold or placing soap under the bedsheets to stop nighttime leg cramps, are probably nothing more than placebos. Although placebo effects are not well understood, they are probably driven primarily by people’s expectations that they will improve. Having the expectation to improve can result in reduced stress, anxiety, and depression, which can alter perceptions and even improve immune system functioning (Price, Finniss, & Benedetti, 2008).

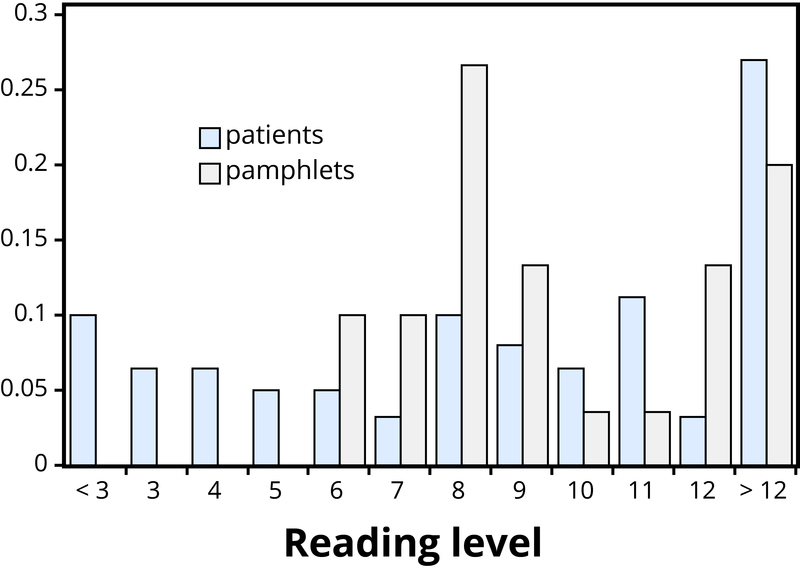

Placebo effects are interesting in their own right (see Note 6.28 “The Powerful Placebo”), but they also pose a serious problem for researchers who want to determine whether a treatment works. Figure 6.2 “Hypothetical Results From a Study Including Treatment, No-Treatment, and Placebo Conditions” shows some hypothetical results in which participants in a treatment condition improved more on average than participants in a no-treatment control condition. If these conditions (the two leftmost bars in Figure 6.2) were the only conditions in this experiment, however, one could not conclude that the treatment worked. It could be instead that participants in the treatment group improved more because they expected to improve, while those in the no-treatment control condition did not.

Figure 6.2 Hypothetical Results From a Study Including Treatment, No-Treatment, and Placebo Conditions

Fortunately, there are several solutions to this problem. One is to include a placebo control condition, in which participants receive a placebo that looks much like the treatment but lacks the active ingredient or element thought to be responsible for the treatment’s effectiveness. When participants in a treatment condition take a pill, for example, then those in a placebo control condition would take an identical-looking pill that lacks the active ingredient in the treatment (a “sugar pill”). In research on psychotherapy effectiveness, the placebo might involve going to a psychotherapist and talking in an unstructured way about one’s problems. The idea is that if participants in both the treatment and the placebo control groups expect to improve, then any improvement in the treatment group over and above that in the placebo control group must have been caused by the treatment and not by participants’ expectations. This is what is shown by a comparison of the two outer bars in Figure 6.2 “Hypothetical Results From a Study Including Treatment, No-Treatment, and Placebo Conditions.”

Of course, the principle of informed consent requires that participants be told that they will be assigned to either a treatment or a placebo control condition, even though they cannot be told which until the experiment ends. In many cases the participants who had been in the control condition are then offered an opportunity to have the real treatment. An alternative approach is to use a waitlist control condition, in which participants are told that they will receive the treatment but must wait until the participants in the treatment condition have already received it. This allows researchers to compare participants who have received the treatment with participants who are not currently receiving it but who still expect to improve (eventually). A final solution to the problem of placebo effects is to leave out the control condition completely and compare any new treatment with the best available alternative treatment. For example, a new treatment for simple phobia could be compared with standard exposure therapy. Because participants in both conditions receive a treatment, their expectations about improvement should be similar. This approach also makes sense because once there is an effective treatment, the interesting question about a new treatment is not simply “Does it work?” but “Does it work better than what is already available?”

The Powerful Placebo

Many people are not surprised that placebos can have a positive effect on disorders that seem fundamentally psychological, including depression, anxiety, and insomnia. However, placebos can also have a positive effect on disorders that most people think of as fundamentally physiological. These include asthma, ulcers, and warts (Shapiro & Shapiro, 1999). There is even evidence that placebo surgery—also called “sham surgery”—can be as effective as actual surgery.

Medical researcher J. Bruce Moseley and his colleagues conducted a study on the effectiveness of two arthroscopic surgery procedures for osteoarthritis of the knee (Moseley et al., 2002). The control participants in this study were prepped for surgery, received a tranquilizer, and even received three small incisions in their knees. But they did not receive the actual arthroscopic surgical procedure. The surprising result was that all participants improved in terms of both knee pain and function, and the sham surgery group improved just as much as the treatment groups. According to the researchers, “This study provides strong evidence that arthroscopic lavage with or without débridement [the surgical procedures used] is not better than and appears to be equivalent to a placebo procedure in improving knee pain and self-reported function” (p. 85).

Research has shown that patients with osteoarthritis of the knee who receive a “sham surgery” experience reductions in pain and improvement in knee function similar to those of patients who receive a real surgery.

Army Medicine – Surgery – CC BY 2.0.

Within-Subjects Experiments

In a within-subjects experiment, each participant is tested under all conditions. Consider an experiment on the effect of a defendant’s physical attractiveness on judgments of his guilt. Again, in a between-subjects experiment, one group of participants would be shown an attractive defendant and asked to judge his guilt, and another group of participants would be shown an unattractive defendant and asked to judge his guilt. In a within-subjects experiment, however, the same group of participants would judge the guilt of both an attractive and an unattractive defendant.

The primary advantage of this approach is that it provides maximum control of extraneous participant variables. Participants in all conditions have the same mean IQ, same socioeconomic status, same number of siblings, and so on—because they are the very same people. Within-subjects experiments also make it possible to use statistical procedures that remove the effect of these extraneous participant variables on the dependent variable and therefore make the data less “noisy” and the effect of the independent variable easier to detect. We will look more closely at this idea later in the book.

Carryover Effects and Counterbalancing

The primary disadvantage of within-subjects designs is that they can result in carryover effects. A carryover effect is an effect of being tested in one condition on participants’ behavior in later conditions. One type of carryover effect is a practice effect, where participants perform a task better in later conditions because they have had a chance to practice it. Another type is a fatigue effect, where participants perform a task worse in later conditions because they become tired or bored. Being tested in one condition can also change how participants perceive stimuli or interpret their task in later conditions. This is called a context effect. For example, an average-looking defendant might be judged more harshly when participants have just judged an attractive defendant than when they have just judged an unattractive defendant. Within-subjects experiments also make it easier for participants to guess the hypothesis. For example, a participant who is asked to judge the guilt of an attractive defendant and then is asked to judge the guilt of an unattractive defendant is likely to guess that the hypothesis is that defendant attractiveness affects judgments of guilt. This could lead the participant to judge the unattractive defendant more harshly because he thinks this is what he is expected to do. Or it could make participants judge the two defendants similarly in an effort to be “fair.”

Carryover effects can be interesting in their own right. (Does the attractiveness of one person depend on the attractiveness of other people that we have seen recently?) But when they are not the focus of the research, carryover effects can be problematic. Imagine, for example, that participants judge the guilt of an attractive defendant and then judge the guilt of an unattractive defendant. If they judge the unattractive defendant more harshly, this might be because of his unattractiveness. But it could be instead that they judge him more harshly because they are becoming bored or tired. In other words, the order of the conditions is a confounding variable. The attractive condition is always the first condition and the unattractive condition the second. Thus any difference between the conditions in terms of the dependent variable could be caused by the order of the conditions and not the independent variable itself.

There is a solution to the problem of order effects, however, that can be used in many situations. It is counterbalancing, which means testing different participants in different orders. For example, some participants would be tested in the attractive defendant condition followed by the unattractive defendant condition, and others would be tested in the unattractive condition followed by the attractive condition. With three conditions, there would be six different orders (ABC, ACB, BAC, BCA, CAB, and CBA), so some participants would be tested in each of the six orders. With counterbalancing, participants are assigned to orders randomly, using the techniques we have already discussed. Thus random assignment plays an important role in within-subjects designs just as in between-subjects designs. Here, instead of being randomly assigned to conditions, participants are randomly assigned to different orders of conditions. In fact, it can safely be said that if a study does not involve random assignment in one form or another, it is not an experiment.

There are two ways to think about what counterbalancing accomplishes. One is that it controls the order of conditions so that it is no longer a confounding variable. Instead of the attractive condition always being first and the unattractive condition always being second, the attractive condition comes first for some participants and second for others. Likewise, the unattractive condition comes first for some participants and second for others. Thus any overall difference in the dependent variable between the two conditions cannot have been caused by the order of conditions. A second way to think about what counterbalancing accomplishes is that if there are carryover effects, it makes it possible to detect them. One can analyze the data separately for each order to see whether it had an effect.

When 9 Is “Larger” Than 221

Researcher Michael Birnbaum has argued that the lack of context provided by between-subjects designs is often a bigger problem than the context effects created by within-subjects designs. To demonstrate this, he asked one group of participants to rate how large the number 9 was on a 1-to-10 rating scale and another group to rate how large the number 221 was on the same 1-to-10 rating scale (Birnbaum, 1999). Participants in this between-subjects design gave the number 9 a mean rating of 5.13 and the number 221 a mean rating of 3.10. In other words, they rated 9 as larger than 221! According to Birnbaum, this is because participants spontaneously compared 9 with other one-digit numbers (in which case it is relatively large) and compared 221 with other three-digit numbers (in which case it is relatively small).

Simultaneous Within-Subjects Designs

So far, we have discussed an approach to within-subjects designs in which participants are tested in one condition at a time. There is another approach, however, that is often used when participants make multiple responses in each condition. Imagine, for example, that participants judge the guilt of 10 attractive defendants and 10 unattractive defendants. Instead of having people make judgments about all 10 defendants of one type followed by all 10 defendants of the other type, the researcher could present all 20 defendants in a sequence that mixed the two types. The researcher could then compute each participant’s mean rating for each type of defendant. Or imagine an experiment designed to see whether people with social anxiety disorder remember negative adjectives (e.g., “stupid,” “incompetent”) better than positive ones (e.g., “happy,” “productive”). The researcher could have participants study a single list that includes both kinds of words and then have them try to recall as many words as possible. The researcher could then count the number of each type of word that was recalled. There are many ways to determine the order in which the stimuli are presented, but one common way is to generate a different random order for each participant.
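The mixed-presentation procedure described above can be sketched in a few lines of Python. This is only an illustrative sketch: the function names `mixed_stimulus_order` and `mean_rating_by_type`, the stimulus labels, and the ratings in the usage lines are hypothetical and not taken from any real study.

```python
import random

def mixed_stimulus_order(stimuli, seed=None):
    """Return the stimuli in a fresh random order for one participant."""
    rng = random.Random(seed)
    order = list(stimuli)   # copy so the caller's list is left untouched
    rng.shuffle(order)
    return order

def mean_rating_by_type(responses):
    """Average the ratings separately for each stimulus type.

    responses is a list of (stimulus_type, rating) pairs.
    """
    grouped = {}
    for stimulus_type, rating in responses:
        grouped.setdefault(stimulus_type, []).append(rating)
    return {t: sum(r) / len(r) for t, r in grouped.items()}

# Hypothetical stimulus set: 10 attractive and 10 unattractive defendants.
stimuli = [("attractive", i) for i in range(10)] + [("unattractive", i) for i in range(10)]
order_for_participant = mixed_stimulus_order(stimuli, seed=7)

# Hypothetical guilt ratings from one participant.
ratings = [("attractive", 2), ("unattractive", 5), ("attractive", 4), ("unattractive", 7)]
print(mean_rating_by_type(ratings))
```

Using a different seed (or no seed) for each participant gives each person a different random sequence, which is the common approach mentioned above.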

Between-Subjects or Within-Subjects?

Almost every experiment can be conducted using either a between-subjects design or a within-subjects design. This means that researchers must choose between the two approaches based on their relative merits for the particular situation.

Between-subjects experiments have the advantage of being conceptually simpler and requiring less testing time per participant. They also avoid carryover effects without the need for counterbalancing. Within-subjects experiments have the advantage of controlling extraneous participant variables, which generally reduces noise in the data and makes it easier to detect a relationship between the independent and dependent variables.

A good rule of thumb, then, is that if it is possible to conduct a within-subjects experiment (with proper counterbalancing) in the time that is available per participant—and you have no serious concerns about carryover effects—this is probably the best option. If a within-subjects design would be difficult or impossible to carry out, then you should consider a between-subjects design instead. For example, if you were testing participants in a doctor’s waiting room or shoppers in line at a grocery store, you might not have enough time to test each participant in all conditions and therefore would opt for a between-subjects design. Or imagine you were trying to reduce people’s level of prejudice by having them interact with someone of another race. A within-subjects design with counterbalancing would require testing some participants in the treatment condition first and then in a control condition. But if the treatment works and reduces people’s level of prejudice, then they would no longer be suitable for testing in the control condition. This is true for many designs that involve a treatment meant to produce long-term change in participants’ behavior (e.g., studies testing the effectiveness of psychotherapy). Clearly, a between-subjects design would be necessary here.

Remember also that using one type of design does not preclude using the other type in a different study. There is no reason that a researcher could not use both a between-subjects design and a within-subjects design to answer the same research question. In fact, professional researchers often do exactly this.

Key Takeaways

- Experiments can be conducted using either between-subjects or within-subjects designs. Deciding which to use in a particular situation requires careful consideration of the pros and cons of each approach.

- Random assignment to conditions in between-subjects experiments or to orders of conditions in within-subjects experiments is a fundamental element of experimental research. Its purpose is to control extraneous variables so that they do not become confounding variables.

- Experimental research on the effectiveness of a treatment requires both a treatment condition and a control condition, which can be a no-treatment control condition, a placebo control condition, or a waitlist control condition. Experimental treatments can also be compared with the best available alternative.

Discussion: For each of the following topics, list the pros and cons of a between-subjects and within-subjects design and decide which would be better.

- You want to test the relative effectiveness of two training programs for running a marathon.

- Using photographs of people as stimuli, you want to see if smiling people are perceived as more intelligent than people who are not smiling.

- In a field experiment, you want to see if the way a panhandler is dressed (neatly vs. sloppily) affects whether or not passersby give him any money.

- You want to see if concrete nouns (e.g., dog) are recalled better than abstract nouns (e.g., truth).

Discussion: Imagine that an experiment shows that participants who receive psychodynamic therapy for a dog phobia improve more than participants in a no-treatment control group. Explain a fundamental problem with this research design and at least two ways that it might be corrected.

Birnbaum, M. H. (1999). How to show that 9 > 221: Collect judgments in a between-subjects design. Psychological Methods, 4, 243–249.

Moseley, J. B., O’Malley, K., Petersen, N. J., Menke, T. J., Brody, B. A., Kuykendall, D. H., … Wray, N. P. (2002). A controlled trial of arthroscopic surgery for osteoarthritis of the knee. The New England Journal of Medicine, 347, 81–88.

Price, D. D., Finniss, D. G., & Benedetti, F. (2008). A comprehensive review of the placebo effect: Recent advances and current thought. Annual Review of Psychology, 59, 565–590.

Shapiro, A. K., & Shapiro, E. (1999). The powerful placebo: From ancient priest to modern physician. Baltimore, MD: Johns Hopkins University Press.

Research Methods in Psychology Copyright © 2016 by University of Minnesota is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

5.2 Experimental Design

Learning objectives.

- Explain the difference between between-subjects and within-subjects experiments, list some of the pros and cons of each approach, and decide which approach to use to answer a particular research question.

- Define random assignment, distinguish it from random sampling, explain its purpose in experimental research, and use some simple strategies to implement it.

- Define several types of carryover effect, give examples of each, and explain how counterbalancing helps to deal with them.

In this section, we look at some different ways to design an experiment. The primary distinction we will make is between approaches in which each participant experiences one level of the independent variable and approaches in which each participant experiences all levels of the independent variable. The former are called between-subjects experiments and the latter are called within-subjects experiments.

Between-Subjects Experiments

In a between-subjects experiment, each participant is tested in only one condition. For example, a researcher with a sample of 100 university students might assign half of them to write about a traumatic event and the other half to write about a neutral event. Or a researcher with a sample of 60 people with severe agoraphobia (fear of open spaces) might assign 20 of them to receive each of three different treatments for that disorder. It is essential in a between-subjects experiment that the researcher assigns participants to conditions so that the different groups are, on average, highly similar to each other. Those in a trauma condition and a neutral condition, for example, should include a similar proportion of men and women, and they should have similar average intelligence quotients (IQs), similar average levels of motivation, similar average numbers of health problems, and so on. This matching is a matter of controlling these extraneous participant variables across conditions so that they do not become confounding variables.

Random Assignment

The primary way that researchers accomplish this kind of control of extraneous variables across conditions is called random assignment, which means using a random process to decide which participants are tested in which conditions. Do not confuse random assignment with random sampling. Random sampling is a method for selecting a sample from a population, and it is rarely used in psychological research. Random assignment is a method for assigning participants in a sample to the different conditions, and it is an important element of all experimental research in psychology and other fields too.

In its strictest sense, random assignment should meet two criteria. One is that each participant has an equal chance of being assigned to each condition (e.g., a 50% chance of being assigned to each of two conditions). The second is that each participant is assigned to a condition independently of other participants. Thus one way to assign participants to two conditions would be to flip a coin for each one. If the coin lands heads, the participant is assigned to Condition A, and if it lands tails, the participant is assigned to Condition B. For three conditions, one could use a computer to generate a random integer from 1 to 3 for each participant. If the integer is 1, the participant is assigned to Condition A; if it is 2, the participant is assigned to Condition B; and if it is 3, the participant is assigned to Condition C. In practice, a full sequence of conditions—one for each participant expected to be in the experiment—is usually created ahead of time, and each new participant is assigned to the next condition in the sequence as he or she is tested. When the procedure is computerized, the computer program often handles the random assignment.
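As a rough illustration of the strict procedure just described, the following Python sketch draws a condition for each participant independently and with equal probability, and builds the full sequence ahead of time. The function name `simple_random_assignment` and the condition labels are hypothetical, chosen only for this example.

```python
import random

def simple_random_assignment(n_participants, conditions, seed=None):
    """Build a sequence of conditions, one per expected participant.

    Each draw is independent and every condition is equally likely,
    which matches the two strict criteria for random assignment.
    """
    rng = random.Random(seed)
    return [rng.choice(conditions) for _ in range(n_participants)]

# Sequence for 12 expected participants and three conditions.
sequence = simple_random_assignment(12, ["A", "B", "C"], seed=1)
print(sequence)
```

Each new participant would then be given the next condition in `sequence` as he or she is tested. Because the draws are independent, nothing forces the three conditions to occur equally often.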

One problem with coin flipping and other strict procedures for random assignment is that they are likely to result in unequal sample sizes in the different conditions. Unequal sample sizes are generally not a serious problem, and you should never throw away data you have already collected to achieve equal sample sizes. However, for a fixed number of participants, it is statistically most efficient to divide them into equal-sized groups. It is standard practice, therefore, to use a kind of modified random assignment that keeps the number of participants in each group as similar as possible. One approach is block randomization. In block randomization, all the conditions occur once in the sequence before any of them is repeated. Then they all occur again before any of them is repeated again. Within each of these “blocks,” the conditions occur in a random order. Again, the sequence of conditions is usually generated before any participants are tested, and each new participant is assigned to the next condition in the sequence. Table 5.2 shows such a sequence for assigning nine participants to three conditions. The Research Randomizer website (http://www.randomizer.org) will generate block randomization sequences for any number of participants and conditions. Again, when the procedure is computerized, the computer program often handles the block randomization.
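Block randomization as described above is straightforward to sketch in Python. The function name `block_randomization` and the condition labels are hypothetical; this is a minimal illustration, not the procedure used by any particular tool.

```python
import random

def block_randomization(n_participants, conditions, seed=None):
    """Return a sequence in which every condition occurs once per block.

    Within each block the conditions appear in a random order. If the
    number of participants is not a multiple of the number of
    conditions, the last block is cut short.
    """
    rng = random.Random(seed)
    sequence = []
    while len(sequence) < n_participants:
        block = list(conditions)
        rng.shuffle(block)        # random order inside this block
        sequence.extend(block)
    return sequence[:n_participants]

# Nine participants, three conditions: three blocks of three.
sequence = block_randomization(9, ["A", "B", "C"], seed=3)
print(sequence)
```

With nine participants and three conditions, each condition is used exactly three times, so the group sizes are equal by construction.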

Random assignment is not guaranteed to control all extraneous variables across conditions. The process is random, so it is always possible that just by chance, the participants in one condition might turn out to be substantially older, less tired, more motivated, or less depressed on average than the participants in another condition. However, there are some reasons that this possibility is not a major concern. One is that random assignment works better than one might expect, especially for large samples. Another is that the inferential statistics that researchers use to decide whether a difference between groups reflects a difference in the population take the “fallibility” of random assignment into account. Yet another reason is that even if random assignment does result in a confounding variable and therefore produces misleading results, this confound is likely to be detected when the experiment is replicated. The upshot is that random assignment to conditions, although not infallible in terms of controlling extraneous variables, is always considered a strength of a research design.
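The claim that random assignment works better for large samples can be explored with a small simulation. The sketch below is purely illustrative: the function name `mean_abs_age_gap` is hypothetical, and the assumption that ages are normally distributed with a mean of 40 and a standard deviation of 10 is made up for the example.

```python
import random

def mean_abs_age_gap(n_participants, n_experiments=200, seed=0):
    """Average absolute difference in mean age between two random groups.

    Ages are simulated (an assumption for illustration only) and the
    sample is split at random into two equal-sized conditions.
    """
    rng = random.Random(seed)
    gaps = []
    half = n_participants // 2
    for _ in range(n_experiments):
        ages = [rng.gauss(40, 10) for _ in range(n_participants)]
        rng.shuffle(ages)                     # random assignment to two groups
        group_a, group_b = ages[:half], ages[half:]
        gap = abs(sum(group_a) / len(group_a) - sum(group_b) / len(group_b))
        gaps.append(gap)
    return sum(gaps) / len(gaps)

small = mean_abs_age_gap(20)
large = mean_abs_age_gap(2000)
print(f"typical age gap with 20 participants:   {small:.2f} years")
print(f"typical age gap with 2000 participants: {large:.2f} years")
```

Under these assumptions the chance difference between groups shrinks as the sample grows, which is the sense in which random assignment is more reliable for large samples.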

Matched Groups

An alternative to simple random assignment of participants to conditions is the use of a matched-groups design. Using this design, participants in the various conditions are matched on the dependent variable or on some extraneous variable(s) prior to the manipulation of the independent variable. This guarantees that these variables will not be confounded across the experimental conditions. For instance, if we want to determine whether expressive writing affects people’s health, then we could start by measuring various health-related variables in our prospective research participants. We could then use that information to rank-order participants according to how healthy or unhealthy they are. Next, the two healthiest participants would be randomly assigned to complete different conditions (one would be randomly assigned to the traumatic experiences writing condition and the other to the neutral writing condition). The next two healthiest participants would then be randomly assigned to complete different conditions, and so on until the two least healthy participants. This method would ensure that participants in the traumatic experiences writing condition are matched to participants in the neutral writing condition with respect to health at the beginning of the study. If at the end of the experiment, a difference in health was detected across the two conditions, then we would know that it is due to the writing manipulation and not to pre-existing differences in health.
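The rank-and-pair procedure for two conditions can be sketched as follows. The function name `matched_pairs_assignment`, the participant IDs, and the baseline health scores are all hypothetical, and the sketch assumes that higher scores mean better health.

```python
import random

def matched_pairs_assignment(baseline, conditions=("trauma", "neutral"), seed=None):
    """Pair participants by baseline rank, then randomize within each pair.

    baseline maps participant ID to a health score measured before the
    manipulation. With an odd number of participants, the lowest-ranked
    person is left unassigned in this sketch.
    """
    rng = random.Random(seed)
    ranked = sorted(baseline, key=baseline.get, reverse=True)  # healthiest first
    assignment = {}
    for i in range(0, len(ranked) - 1, 2):
        pair = ranked[i:i + 2]
        order = list(conditions)
        rng.shuffle(order)            # coin flip for which member gets which condition
        for participant, condition in zip(pair, order):
            assignment[participant] = condition
    return assignment

# Hypothetical baseline health scores for six participants.
baseline = {"p1": 82, "p2": 64, "p3": 91, "p4": 70, "p5": 55, "p6": 77}
assignment = matched_pairs_assignment(baseline, seed=5)
print(assignment)
```

Each adjacent pair in the health ranking contributes one participant to each condition, so the two groups start out closely matched on baseline health.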

Within-Subjects Experiments

In a within-subjects experiment, each participant is tested under all conditions. Consider an experiment on the effect of a defendant’s physical attractiveness on judgments of his guilt. Again, in a between-subjects experiment, one group of participants would be shown an attractive defendant and asked to judge his guilt, and another group of participants would be shown an unattractive defendant and asked to judge his guilt. In a within-subjects experiment, however, the same group of participants would judge the guilt of both an attractive and an unattractive defendant.

The primary advantage of this approach is that it provides maximum control of extraneous participant variables. Participants in all conditions have the same mean IQ, same socioeconomic status, same number of siblings, and so on, because they are the very same people. Within-subjects experiments also make it possible to use statistical procedures that remove the effect of these extraneous participant variables on the dependent variable and therefore make the data less “noisy” and the effect of the independent variable easier to detect. We will look more closely at this idea later in the book. However, not all experiments can use a within-subjects design, nor would it be desirable to do so.

One disadvantage of within-subjects experiments is that they make it easier for participants to guess the hypothesis. For example, a participant who is asked to judge the guilt of an attractive defendant and then is asked to judge the guilt of an unattractive defendant is likely to guess that the hypothesis is that defendant attractiveness affects judgments of guilt. This knowledge could lead the participant to judge the unattractive defendant more harshly because he thinks this is what he is expected to do. Or it could make participants judge the two defendants similarly in an effort to be “fair.”

Carryover Effects and Counterbalancing

The primary disadvantage of within-subjects designs is that they can result in order effects. An order effect occurs when participants’ responses in the various conditions are affected by the order of conditions to which they were exposed. One type of order effect is a carryover effect. A carryover effect is an effect of being tested in one condition on participants’ behavior in later conditions. One type of carryover effect is a practice effect, where participants perform a task better in later conditions because they have had a chance to practice it. Another type is a fatigue effect, where participants perform a task worse in later conditions because they become tired or bored. Being tested in one condition can also change how participants perceive stimuli or interpret their task in later conditions. This type of effect is called a context effect (or contrast effect). For example, an average-looking defendant might be judged more harshly when participants have just judged an attractive defendant than when they have just judged an unattractive defendant. Within-subjects experiments also make it easier for participants to guess the hypothesis. For example, a participant who is asked to judge the guilt of an attractive defendant and then is asked to judge the guilt of an unattractive defendant is likely to guess that the hypothesis is that defendant attractiveness affects judgments of guilt.

Carryover effects can be interesting in their own right. (Does the attractiveness of one person depend on the attractiveness of other people that we have seen recently?) But when they are not the focus of the research, carryover effects can be problematic. Imagine, for example, that participants judge the guilt of an attractive defendant and then judge the guilt of an unattractive defendant. If they judge the unattractive defendant more harshly, this might be because of his unattractiveness. But it could be instead that they judge him more harshly because they are becoming bored or tired. In other words, the order of the conditions is a confounding variable. The attractive condition is always the first condition and the unattractive condition the second. Thus any difference between the conditions in terms of the dependent variable could be caused by the order of the conditions and not the independent variable itself.

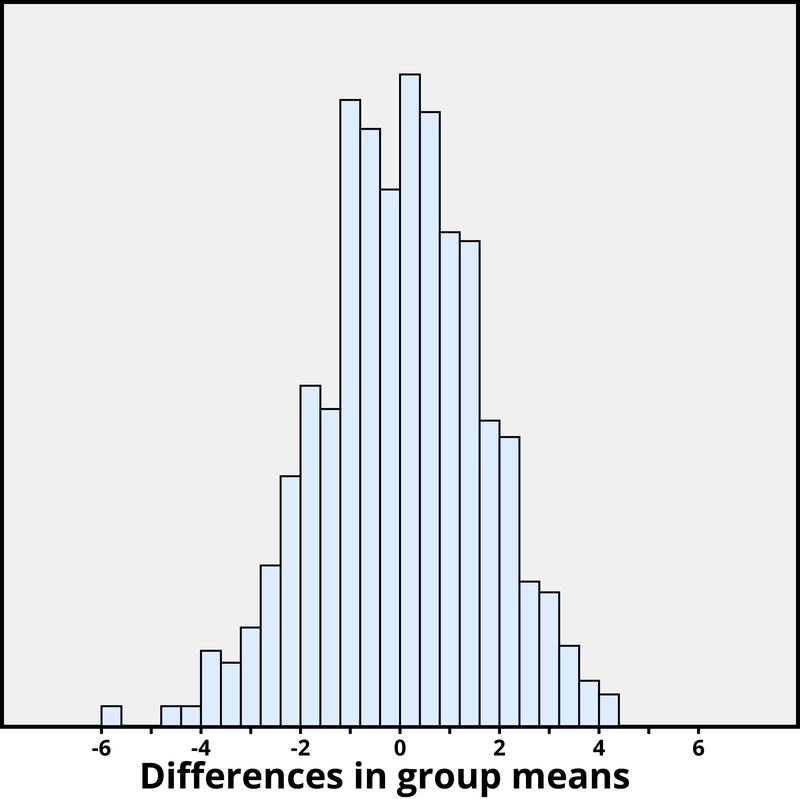

There is a solution to the problem of order effects, however, that can be used in many situations. It is counterbalancing, which means testing different participants in different orders. The best method of counterbalancing is complete counterbalancing, in which an equal number of participants complete each possible order of conditions. For example, half of the participants would be tested in the attractive defendant condition followed by the unattractive defendant condition, and the other half would be tested in the unattractive condition followed by the attractive condition. With three conditions, there would be six different orders (ABC, ACB, BAC, BCA, CAB, and CBA), so some participants would be tested in each of the six orders. With four conditions, there would be 24 different orders; with five conditions, there would be 120 possible orders. With counterbalancing, participants are assigned to orders randomly, using the techniques we have already discussed. Thus, random assignment plays an important role in within-subjects designs just as in between-subjects designs. Here, instead of being randomly assigned to conditions, participants are randomly assigned to different orders of conditions. In fact, it can safely be said that if a study does not involve random assignment in one form or another, it is not an experiment.
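Complete counterbalancing can be sketched with the Python standard library, which also confirms the order counts given above (6, 24, and 120). The function name `complete_counterbalancing` and the participant IDs are hypothetical, and the sketch assumes the number of participants is a multiple of the number of possible orders.

```python
import itertools
import random

def complete_counterbalancing(participants, conditions, seed=None):
    """Randomly assign participants to orders, using every order equally often."""
    orders = list(itertools.permutations(conditions))
    if len(participants) % len(orders) != 0:
        raise ValueError("participants must be a multiple of the number of orders")
    rng = random.Random(seed)
    pool = orders * (len(participants) // len(orders))  # equal copies of each order
    rng.shuffle(pool)                                   # random assignment to orders
    return dict(zip(participants, pool))

# The number of possible orders grows as the factorial of the number of conditions.
for n in (3, 4, 5):
    print(n, "conditions:", len(list(itertools.permutations(range(n)))), "orders")

participants = [f"p{i}" for i in range(1, 13)]   # 12 hypothetical participants
assignment = complete_counterbalancing(participants, ["A", "B", "C"], seed=2)
print(assignment["p1"])
```

With 12 participants and three conditions, each of the six orders is completed by exactly two participants.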

A more efficient way of counterbalancing is through a Latin square design, which has an equal number of rows and columns. For example, if you have four treatments, you must have four versions. Like a Sudoku puzzle, no treatment can repeat in a row or column. For four versions of four treatments, the Latin square design would look like:

You can see in the diagram above that the square has been constructed to ensure that each condition appears at each ordinal position (A appears first once, second once, third once, and fourth once) and each condition precedes and follows each other condition one time. A Latin square for an experiment with 6 conditions would be 6 × 6 in dimension, one for an experiment with 8 conditions would be 8 × 8 in dimension, and so on. So while complete counterbalancing of 6 conditions would require 720 orders, a Latin square would only require 6 orders.
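One way to build a square with both of these properties is the standard "balanced" Latin square construction, sketched below in Python. It is offered as an illustration rather than as the specific square shown in the original diagram; the function name `balanced_latin_square` is hypothetical, and this simple construction only works for an even number of conditions.

```python
def balanced_latin_square(conditions):
    """Build a balanced Latin square for an even number of conditions.

    Each row is one order of conditions. Every condition appears once
    in each ordinal position, and every condition immediately precedes
    every other condition exactly once.
    """
    n = len(conditions)
    if n % 2 != 0:
        raise ValueError("this construction requires an even number of conditions")
    # First row follows the index pattern 0, 1, n-1, 2, n-2, ...
    first = [0]
    low, high = 1, n - 1
    for k in range(1, n):
        if k % 2 == 1:
            first.append(low)
            low += 1
        else:
            first.append(high)
            high -= 1
    # Each later row shifts every index in the first row by one more step.
    return [[conditions[(x + r) % n] for x in first] for r in range(n)]

square = balanced_latin_square(["A", "B", "C", "D"])
for row in square:
    print(" ".join(row))
```

For four conditions this yields four orders instead of the 24 needed for complete counterbalancing, and for six conditions it yields six orders instead of 720.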

Finally, when the number of conditions is large, experiments can use random counterbalancing, in which the order of the conditions is randomly determined for each participant. Using this technique, every possible order of conditions is determined and then one of these orders is randomly selected for each participant. This is not as powerful a technique as complete counterbalancing or partial counterbalancing using a Latin square design. Use of random counterbalancing will result in more random error, but if order effects are likely to be small and the number of conditions is large, this is an option available to researchers.
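Random counterbalancing can be sketched in a few lines of Python. This sketch assumes that drawing a random permutation directly is an acceptable stand-in for listing every possible order and picking one at random; the two give every order the same chance of selection, but shuffling avoids enumerating, say, all 720 orders of six conditions. The function name `random_counterbalance` and the participant IDs are hypothetical.

```python
import random


def random_counterbalance(conditions, participants, seed=None):
    """Give each participant an independently drawn random order of conditions."""
    rng = random.Random(seed)
    conditions = list(conditions)
    # rng.sample with k == len(conditions) returns a random permutation.
    return {
        p: tuple(rng.sample(conditions, k=len(conditions)))
        for p in participants
    }


# Hypothetical example: 20 participants, six conditions.
orders = random_counterbalance("ABCDEF", range(1, 21), seed=1)
```

Unlike complete counterbalancing, nothing here guarantees that the orders are used equally often, which is the source of the extra random error mentioned above.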

There are two ways to think about what counterbalancing accomplishes. One is that it controls the order of conditions so that it is no longer a confounding variable. Instead of the attractive condition always being first and the unattractive condition always being second, the attractive condition comes first for some participants and second for others. Likewise, the unattractive condition comes first for some participants and second for others. Thus any overall difference in the dependent variable between the two conditions cannot have been caused by the order of conditions. A second way to think about what counterbalancing accomplishes is that if there are carryover effects, it makes it possible to detect them. One can analyze the data separately for each order to see whether it had an effect.

When 9 Is “Larger” Than 221

Researcher Michael Birnbaum has argued that the lack of context provided by between-subjects designs is often a bigger problem than the context effects created by within-subjects designs. To demonstrate this problem, he asked participants to rate two numbers on how large they were on a scale of 1 to 10, where 1 was “very very small” and 10 was “very very large”. One group of participants was asked to rate the number 9 and another group was asked to rate the number 221 (Birnbaum, 1999) [1]. Participants in this between-subjects design gave the number 9 a mean rating of 5.13 and the number 221 a mean rating of 3.10. In other words, they rated 9 as larger than 221! According to Birnbaum, this difference is because participants spontaneously compared 9 with other one-digit numbers (in which case it is relatively large) and compared 221 with other three-digit numbers (in which case it is relatively small).

Simultaneous Within-Subjects Designs

So far, we have discussed an approach to within-subjects designs in which participants are tested in one condition at a time. There is another approach, however, that is often used when participants make multiple responses in each condition. Imagine, for example, that participants judge the guilt of 10 attractive defendants and 10 unattractive defendants. Instead of having people make judgments about all 10 defendants of one type followed by all 10 defendants of the other type, the researcher could present all 20 defendants in a sequence that mixed the two types. The researcher could then compute each participant’s mean rating for each type of defendant. Or imagine an experiment designed to see whether people with social anxiety disorder remember negative adjectives (e.g., “stupid,” “incompetent”) better than positive ones (e.g., “happy,” “productive”). The researcher could have participants study a single list that includes both kinds of words and then have them try to recall as many words as possible. The researcher could then count the number of each type of word that was recalled.

Between-Subjects or Within-Subjects?

Almost every experiment can be conducted using either a between-subjects design or a within-subjects design. This possibility means that researchers must choose between the two approaches based on their relative merits for the particular situation.

Between-subjects experiments have the advantage of being conceptually simpler and requiring less testing time per participant. They also avoid carryover effects without the need for counterbalancing. Within-subjects experiments have the advantage of controlling extraneous participant variables, which generally reduces noise in the data and makes it easier to detect a relationship between the independent and dependent variables.

A good rule of thumb, then, is that if it is possible to conduct a within-subjects experiment (with proper counterbalancing) in the time that is available per participant—and you have no serious concerns about carryover effects—this design is probably the best option. If a within-subjects design would be difficult or impossible to carry out, then you should consider a between-subjects design instead. For example, if you were testing participants in a doctor’s waiting room or shoppers in line at a grocery store, you might not have enough time to test each participant in all conditions and therefore would opt for a between-subjects design. Or imagine you were trying to reduce people’s level of prejudice by having them interact with someone of another race. A within-subjects design with counterbalancing would require testing some participants in the treatment condition first and then in a control condition. But if the treatment works and reduces people’s level of prejudice, then they would no longer be suitable for testing in the control condition. This difficulty is true for many designs that involve a treatment meant to produce long-term change in participants’ behavior (e.g., studies testing the effectiveness of psychotherapy). Clearly, a between-subjects design would be necessary here.

Remember also that using one type of design does not preclude using the other type in a different study. There is no reason that a researcher could not use both a between-subjects design and a within-subjects design to answer the same research question. In fact, professional researchers often take exactly this type of mixed methods approach.

Key Takeaways

- Experiments can be conducted using either between-subjects or within-subjects designs. Deciding which to use in a particular situation requires careful consideration of the pros and cons of each approach.

- Random assignment to conditions in between-subjects experiments or counterbalancing of orders of conditions in within-subjects experiments is a fundamental element of experimental research. The purpose of these techniques is to control extraneous variables so that they do not become confounding variables.

- You want to test the relative effectiveness of two training programs for running a marathon.

- Using photographs of people as stimuli, you want to see if smiling people are perceived as more intelligent than people who are not smiling.

- In a field experiment, you want to see if the way a panhandler is dressed (neatly vs. sloppily) affects whether or not passersby give him any money.

- You want to see if concrete nouns (e.g., dog) are recalled better than abstract nouns (e.g., truth).

- Birnbaum, M. H. (1999). How to show that 9 > 221: Collect judgments in a between-subjects design. Psychological Methods, 4(3), 243-249.

Types of Experiment: Overview

Last updated 6 Sept 2022

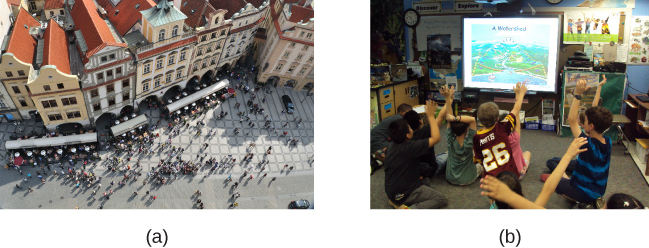

Different types of methods are used in research, which loosely fall into one of two categories:

Experimental (laboratory, field and natural) and non-experimental (correlations, observations, interviews, questionnaires and case studies).

All three types of experiment have characteristics in common. They all have:

- an independent variable (I.V.) which is manipulated or a naturally occurring variable

- a dependent variable (D.V.) which is measured

- at least two conditions in which participants produce data.

Note – the terms natural experiment and quasi experiment are often used synonymously, but they are not strictly the same: in a quasi experiment, participants cannot be randomly assigned to conditions.

Laboratory Experiments

These are conducted under controlled conditions, in which the researcher deliberately changes something (I.V.) to see the effect of this on something else (D.V.).

Control – lab experiments have a high degree of control over the environment and other extraneous variables, which means that the researcher can accurately assess the effects of the I.V., so they have higher internal validity.

Replicable – due to the researcher’s high levels of control, research procedures can be repeated so that the reliability of results can be checked.

Limitations

Lacks ecological validity – due to the involvement of the researcher in manipulating and controlling variables, findings cannot be easily generalised to other (real life) settings, resulting in poor external validity.

Field Experiments

These are carried out in a natural setting, in which the researcher manipulates something (I.V.) to see the effect of this on something else (D.V.).

Validity – field experiments have some degree of control but also are conducted in a natural environment, so can be seen to have reasonable internal and external validity.

Less control than lab experiments and therefore extraneous variables are more likely to distort findings and so internal validity is likely to be lower.

Natural / Quasi Experiments

These are typically carried out in a natural setting, in which the researcher measures the effect of something that is already occurring (I.V.) on something else (D.V.). Note that in this case there is no deliberate manipulation of a variable; it is already changing naturally, which means the researcher is merely measuring the effect of something that is already happening.

High ecological validity – due to the lack of involvement of the researcher; variables are naturally occurring so findings can be easily generalised to other (real life) settings, resulting in high external validity.

Lack of control – natural experiments have no control over the environment and other extraneous variables, which means that the researcher cannot always accurately assess the effects of the I.V., so they have low internal validity.

Not replicable – due to the researcher’s lack of control, research procedures cannot be repeated, so the reliability of results cannot be checked.

Experimental Research

In the late 1960s, social psychologists John Darley and Bibb Latané proposed a counter-intuitive hypothesis. The more witnesses there are to an accident or a crime, the less likely any of them is to help the victim (Darley & Latané, 1968) [1].

They also suggested the theory that this phenomenon occurs because each witness feels less responsible for helping—a process referred to as the “diffusion of responsibility.” Darley and Latané noted that their ideas were consistent with many real-world cases. For example, a New York woman named Catherine “Kitty” Genovese was assaulted and murdered while several witnesses evidently failed to help. But Darley and Latané also understood that such isolated cases did not provide convincing evidence for their hypothesized “bystander effect.” There was no way to know, for example, whether any of the witnesses to Kitty Genovese’s murder would have helped had there been fewer of them.

So to test their hypothesis, Darley and Latané created a simulated emergency situation in a laboratory. Each of their university student participants was isolated in a small room and told that they would be having a discussion about university life with other students via an intercom system. Early in the discussion, however, one of the students began having what seemed to be an epileptic seizure. Over the intercom came the following: “I could really-er-use some help so if somebody would-er-give me a little h-help-uh-er-er-er-er-er c-could somebody-er-er-help-er-uh-uh-uh (choking sounds)…I’m gonna die-er-er-I’m…gonna die-er-help-er-er-seizure-er- [chokes, then quiet]” (Darley & Latané, 1968, p. 379).

In actuality, there were no other students. These comments had been prerecorded and were played back to create the appearance of a real emergency. The key to the study was that some participants were told that the discussion involved only one other student (the victim), others were told that it involved two other students, and still others were told that it included five other students. Because this was the only difference between these three groups of participants, any difference in their tendency to help the victim would have to have been caused by it. And sure enough, the likelihood that the participant left the room to seek help for the “victim” decreased from 85% to 62% to 31% as the number of “witnesses” increased.

The Parable of the 38 Witnesses