
45 survey questions to understand student engagement in online learning.

In our work with K-12 school districts during the COVID-19 pandemic, countless district leaders and school administrators have told us how challenging it's been to build student engagement outside of the traditional classroom.

Not only that, but the challenges associated with online learning may have the largest impact on students from marginalized communities. Research suggests that some groups of students experience more difficulty with academic performance and engagement when course content is delivered online vs. face-to-face.

As you look to improve the online learning experience for students, take a moment to understand how students, caregivers, and staff are currently experiencing virtual learning. Where are the areas for improvement? How supported do students feel in their online coursework? Do teachers feel equipped to support students through synchronous and asynchronous facilitation? How confident do families feel in supporting their children at home?

Below, we've compiled a bank of 45 questions to understand student engagement in online learning.

45 Questions to Understand Student Engagement in Online Learning

For Students (Grades 3-5 and 6-12):

1. How excited are you about going to your classes?

2. How often do you get so focused on activities in your classes that you lose track of time?

3. In your classes, how eager are you to participate?

4. When you are not in school, how often do you talk about ideas from your classes?

5. Overall, how interested are you in your classes?

6. What are the most engaging activities that happen in this class?

7. Which aspects of class have you found least engaging?

8. If you were teaching class, what is the one thing you would do to make it more engaging for all students?

9. How do you know when you are feeling engaged in class?

10. What projects/assignments/activities do you find most engaging in this class?

11. What does this teacher do to make this class engaging?

12. How much effort are you putting into your classes right now?

13. How difficult or easy is it for you to try hard on your schoolwork right now?

14. How difficult or easy is it for you to stay focused on your schoolwork right now?

15. If you have missed in-person school recently, why did you miss school?

16. If you have missed online classes recently, why did you miss class?

17. How would you like to be learning right now?

18. How happy are you with the amount of time you spend speaking with your teacher?

19. How difficult or easy is it to use the distance learning technology (computer, tablet, video calls, learning applications, etc.)?

20. What do you like about school right now?

21. What do you not like about school right now?

22. When you have online schoolwork, how often do you have the technology (laptop, tablet, computer, etc.) you need?

23. How difficult or easy is it for you to connect to the internet to access your schoolwork?

24. What has been the hardest part about completing your schoolwork?

25. How happy are you with how much time you spend in specials or enrichment (art, music, PE, etc.)?

26. Are you getting all the help you need with your schoolwork right now?

27. How sure are you that you can do well in school right now?

28. Are there adults at your school you can go to for help if you need it right now?

29. If you are participating in distance learning, how often do you hear from your teachers individually?

For Families, Parents, and Caregivers:

30. How satisfied are you with the way learning is structured at your child’s school right now?

31. Do you think your child should spend less or more time learning in person at school right now?

32. How difficult or easy is it for your child to use the distance learning tools (video calls, learning applications, etc.)?

33. How confident are you in your ability to support your child's education during distance learning?

34. How confident are you that teachers can motivate students to learn in the current model?

35. What is working well with your child’s education that you would like to see continued?

36. What is challenging with your child’s education that you would like to see improved?

37. Does your child have their own tablet, laptop, or computer available for schoolwork when they need it?

38. What best describes your child's typical internet access?

39. Is there anything else you would like us to know about your family’s needs at this time?

For Teachers and Staff:

40. In the past week, how many of your students regularly participated in your virtual classes?

41. In the past week, how engaged have students been in your virtual classes?

42. In the past week, how engaged have students been in your in-person classes?

43. Is there anything else you would like to share about student engagement at this time?

44. What is working well with the current learning model that you would like to see continued?

45. What is challenging about the current learning model that you would like to see improved?

Elevate Student, Family, and Staff Voices This Year With Panorama

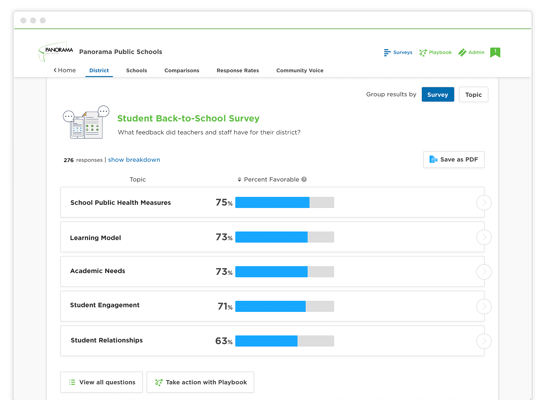

Schools and districts can use Panorama’s leading survey administration and analytics platform to quickly gather and take action on information from students, families, teachers, and staff. The questions are applicable to all types of K-12 school settings and grade levels, as well as to communities serving students from a range of socioeconomic backgrounds.

In the Panorama platform, educators can view and disaggregate results by topic, question, demographic group, grade level, school, and more to inform priority areas and action plans. Districts may use the data to improve teaching and learning models, build stronger academic and social-emotional support systems, improve stakeholder communication, and inform staff professional development.
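As a rough illustration of what disaggregating results by group involves, the sketch below averages responses to a single question by grade band and by school. It is a minimal example under stated assumptions, not Panorama's implementation: the `disaggregate` helper, the field names, and the 1-5 response values are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def disaggregate(responses, group_key, score_key="score"):
    """Return the mean score for each value of group_key (hypothetical helper)."""
    buckets = defaultdict(list)
    for row in responses:
        buckets[row[group_key]].append(row[score_key])
    return {group: mean(scores) for group, scores in buckets.items()}


# Hypothetical responses to one survey question on a 1-5 scale.
responses = [
    {"grade": "3-5", "school": "A", "score": 4},
    {"grade": "3-5", "school": "B", "score": 2},
    {"grade": "6-12", "school": "A", "score": 5},
    {"grade": "6-12", "school": "B", "score": 3},
]

by_grade = disaggregate(responses, "grade")
by_school = disaggregate(responses, "school")
```

The same helper can group by any field present in the response records, which is the basic idea behind viewing results by demographic group, grade level, or school.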

To learn more about Panorama's survey platform, get in touch with our team.


A Systematic Review of the Research Topics in Online Learning During COVID-19: Documenting the Sudden Shift

- Min Young Doo Kangwon National University http://orcid.org/0000-0003-3565-2159

- Meina Zhu Wayne State University

- Curtis J. Bonk Indiana University Bloomington

Since most schools and learners had no choice but to learn online during the pandemic, online learning became the mainstream learning mode rather than a substitute for traditional face-to-face learning. Given this enormous change in online learning, we conducted a systematic review of 191 of the most recent online learning studies published during the COVID-19 era. The systematic review results indicated that the themes regarding “courses and instructors” became popular during the pandemic, whereas most online learning research has focused on “learners” pre-COVID-19. Notably, the research topics “course and instructors” and “course technology” received more attention than prior to COVID-19. We found that “engagement” remained the most common research theme even after the pandemic. New research topics included parents, technology acceptance or adoption of online learning, and learners’ and instructors’ perceptions of online learning.


As a condition of publication, the author agrees to apply the Creative Commons – Attribution International 4.0 (CC-BY) License to OLJ articles. See: https://creativecommons.org/licenses/by/4.0/ .

This licence allows anyone to reproduce OLJ articles at no cost and without further permission as long as they attribute the author and the journal. This permission includes printing, sharing and other forms of distribution.

Author(s) hold copyright in their work, and retain publishing rights without restrictions


The effects of online education on academic success: A meta-analysis study

- Published: 06 September 2021

- Volume 27, pages 429–450 (2022)


- Hakan Ulum ORCID: orcid.org/0000-0002-1398-6935


The purpose of this study is to analyze the effect on student achievement of online education, which has been used extensively since the beginning of the pandemic. To this end, a meta-analysis was carried out of studies, conducted in several countries between 2010 and 2021, that focus on the effect of online education on students’ academic achievement. The study also provides a source to help future studies compare the effect of online education on academic achievement before and after the pandemic. The meta-analysis comprises 27 studies conducted in the USA, Taiwan, Turkey, China, the Philippines, Ireland, and Georgia. All included studies are experimental, and the total sample size is 1772. A funnel plot, Duval and Tweedie’s Trim and Fill Analysis, Orwin’s Fail-Safe N Analysis, and Egger’s Regression Test were used to assess publication bias, which was found to be quite low. Hedges’ g was used to measure the effect size for the difference between means, computed under the random effects model. The results show that the effect size of online education on academic achievement is at a medium level. The heterogeneity tests show that the effect size does not differ by class level, country, online education approach, or lecture moderators.


1 Introduction

Information and communication technologies have become a powerful force in transforming educational settings around the world. The pandemic has been an important factor in moving traditional physical classrooms toward information and communication technologies, and it has accelerated that transformation. The literature suggests that learning environments built on information and communication technologies are highly satisfying for students, so interest in technology-based learning environments needs to be sustained. Clearly, technology has had a huge impact on young people's online lives. This digital revolution can synergize the educational ambitions and interests of digitally addicted students. In essence, COVID-19 has provided an opportunity to embrace online learning, as education systems have to keep up with the rapid emergence of new technologies.

Information and communication technologies, which affect all spheres of life, are also actively used in education. With recent developments, using technology in education has become inevitable for personal and social reasons (Usta, 2011a). Online education is one example of the use of information and communication technologies that has followed from these developments. Online learning is also a popular way of obtaining instruction (Demiralay et al., 2016; Pillay et al., 2007). Horton (2000) defines it as education carried out through a web browser or an online application without requiring extra software or a learning source. Online learning is further described as using the internet to obtain learning sources during the learning process, to interact with the content, the teacher, and other learners, and to get support throughout the learning process (Ally, 2004). Its benefits include learning independently at any time and place (Vrasidas & McIsaac, 2000), convenience (Poole, 2000), flexibility (Chizmar & Walbert, 1999), self-regulation skills (Usta, 2011b), collaborative learning, and the opportunity to plan one's own learning process.

Even though online education practices were not as comprehensive as they are now, the internet and computers have long been used in education as alternative learning tools, in step with advances in technology. The first distance education attempt in the world was initiated by the ‘Steno Courses’ announcement published in a Boston newspaper in 1728. Furthermore, in the nineteenth century, Sweden University started the “Correspondence Composition Courses” for women, and University Correspondence College was afterwards founded for correspondence courses in 1843 (Arat & Bakan, 2011). More recently, distance education has been delivered through computers with the support of internet technologies, and it has since evolved into a mobile education practice driven by faster internet connections and the development of mobile devices.

With the emergence of the COVID-19 pandemic, face-to-face education almost came to a halt, and online education gained significant importance. The Microsoft management team reported 750 users involved in online education activities on March 10, just before the pandemic; by March 24, it reported that the number had increased significantly, reaching 138,698 users (OECD, 2020). This supports the view that online education is better used widely than merely as an alternative educational tool for when students cannot have face-to-face education (Geostat, 2019). The COVID-19 pandemic emerged as a sudden period of limited opportunities, during which face-to-face education stopped for a long time. The global spread of COVID-19 affected more than 850 million students around the world and caused the suspension of face-to-face education. Countries proposed various solutions to maintain education during the pandemic. Schools had to change their curricula, and many countries supported online education practices soon after the pandemic began. In other words, traditional education gave way to online education practices. At least 96 countries have been motivated to access online libraries, TV broadcasts, instructions, sources, video lectures, and online channels (UNESCO, 2020). In such a painful period, educational institutions moved to online education with the help of large companies and platforms such as Microsoft, Google, Zoom, Skype, FaceTime, and Slack. As a result, online education has been discussed on the education agenda more intensively than ever before.

Although online education approaches were not used as comprehensively as they have been recently, they have long served as an alternative learning approach, developing in parallel with technology, the internet, and computers. Online education approaches are often employed with the aim of promoting students’ academic achievement. In this regard, academics in various countries have conducted many studies evaluating online education approaches and have published the results (Chiao et al., 2018). However, the accumulation of scientific data on online education approaches makes it difficult to keep, organize, and synthesize the findings. Studies in this area are being conducted at an increasing rate, making it difficult for scientists to be aware of all the research outside their expertise. Another problem is that online education studies are repetitive: studies often use slightly different methods, measures, and/or samples to avoid duplication. This makes it difficult to interpret differences among results. In other words, if the results of the studies differ significantly, it may be difficult to say which variation explains the differences. One obvious solution is to systematically review the results of various studies and uncover the sources of variation. One method for such systematic synthesis is meta-analysis, a methodological and statistical approach to drawing conclusions from the literature.

It is important to evaluate the results of the studies published so far and of those yet to be published. How effective online education applications are in increasing academic success is a key question. Has online education, which is likely to be encountered frequently as the pandemic continues, been successful over the last ten years? If so, how large was the impact? Did different variables affect this impact? What should be considered in future online education practices? These questions motivated this study. We have conducted a comprehensive meta-analysis that aims to provide a basis for discussion among educators and policy makers on how to develop efficient online programs, by reviewing the related studies on online education, presenting the effect size, and revealing the effect of diverse variables on the general impact.

There have been many critical discussions and comprehensive studies on the differences between online and face-to-face learning; however, this paper differs in that it clarifies the magnitude of the effect of the online education and teaching process and identifies which factors should be controlled to help increase the effect size. Indeed, the purpose here is to support informed decisions in the implementation of the online education process.

This study examines the general impact of online education on academic achievement. It thereby provides a general overview of online education, which has been practiced and discussed intensively during the pandemic period. Moreover, this general impact is analyzed with respect to different variables. In other words, the current study makes it possible to evaluate the results in the related literature as a whole and to analyze them across several cultures, lectures, and class levels. Considering all the related points, this study seeks to answer the following research questions:

What is the effect size of online education on academic achievement?

How do the effect sizes of online education on academic achievement change according to the moderator variable of the country?

How do the effect sizes of online education on academic achievement change according to the moderator variable of the class level?

How do the effect sizes of online education on academic achievement change according to the moderator variable of the lecture?

How do the effect sizes of online education on academic achievement change according to the moderator variable of the online education approaches?

This study aims at determining the effect size of online education, which has been highly used since the beginning of the pandemic, on students’ academic achievement in different courses by using a meta-analysis method. Meta-analysis is a synthesis method that enables gathering of several study results accurately and efficiently, and getting the total results in the end (Tsagris & Fragkos, 2018 ).

2.1 Selecting and coding the data (studies)

The required literature for the meta-analysis study was reviewed in July, 2020, and the follow-up review was conducted in September, 2020. The purpose of the follow-up review was to include the studies which were published in the conduction period of this study, and which met the related inclusion criteria. However, no study was encountered to be included in the follow-up review.

In order to access the studies in the meta-analysis, the databases of Web of Science, ERIC, and SCOPUS were reviewed by utilizing the keywords ‘online learning and online education’. Not every database has a search engine that grants access to the studies by writing the keywords, and this obstacle was considered to be an important problem to be overcome. Therefore, a platform that has a special design was utilized by the researcher. With this purpose, through the open access system of Cukurova University Library, detailed reviews were practiced using EBSCO Information Services (EBSCO) that allow reviewing the whole collection of research through a sole searching box. Since the fundamental variables of this study are online education and online learning, the literature was systematically reviewed in the related databases (Web of Science, ERIC, and SCOPUS) by referring to the keywords. Within this scope, 225 articles were accessed, and the studies were included in the coding key list formed by the researcher. The name of the researchers, the year, the database (Web of Science, ERIC, and SCOPUS), the sample group and size, the lectures that the academic achievement was tested in, the country that the study was conducted in, and the class levels were all included in this coding key.

The following criteria were identified to include 225 research studies which were coded based on the theoretical basis of the meta-analysis study: (1) The studies should be published in the refereed journals between the years 2020 and 2021, (2) The studies should be experimental studies that try to determine the effect of online education and online learning on academic achievement, (3) The values of the stated variables or the required statistics to calculate these values should be stated in the results of the studies, and (4) The sample group of the study should be at a primary education level. These criteria were also used as the exclusion criteria in the sense that the studies that do not meet the required criteria were not included in the present study.

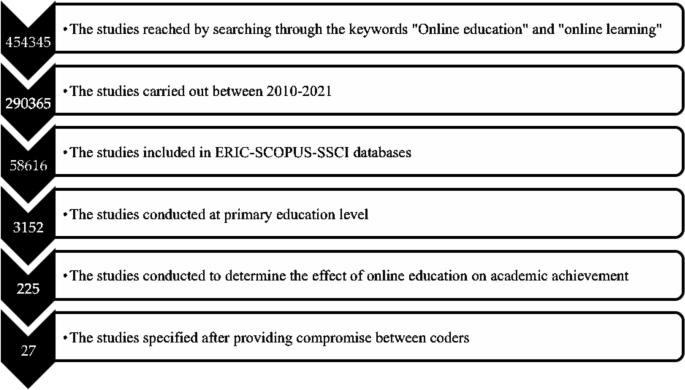

After the inclusion criteria were determined, a systematic review process was conducted, following the year criterion of the study by means of EBSCO. Within this scope, 290,365 studies that analyze the effect of online education and online learning on academic achievement were accordingly accessed. The database (Web of Science, ERIC, and SCOPUS) was also used as a filter by analyzing the inclusion criteria. Hence, the number of the studies that were analyzed was 58,616. Afterwards, the keyword ‘primary education’ was used as the filter and the number of studies included in the study decreased to 3152. Lastly, the literature was reviewed by using the keyword ‘academic achievement’ and 225 studies were accessed. All the information of 225 articles was included in the coding key.

It is necessary for the coders to review the related studies accurately and control the validity, safety, and accuracy of the studies (Stewart & Kamins, 2001 ). Within this scope, the studies that were determined based on the variables used in this study were first reviewed by three researchers from primary education field, then the accessed studies were combined and processed in the coding key by the researcher. All these studies that were processed in the coding key were analyzed in accordance with the inclusion criteria by all the researchers in the meetings, and it was decided that 27 studies met the inclusion criteria (Atici & Polat, 2010 ; Carreon, 2018 ; Ceylan & Elitok Kesici, 2017 ; Chae & Shin, 2016 ; Chiang et al. 2014 ; Ercan, 2014 ; Ercan et al., 2016 ; Gwo-Jen et al., 2018 ; Hayes & Stewart, 2016 ; Hwang et al., 2012 ; Kert et al., 2017 ; Lai & Chen, 2010 ; Lai et al., 2015 ; Meyers et al., 2015 ; Ravenel et al., 2014 ; Sung et al., 2016 ; Wang & Chen, 2013 ; Yu, 2019 ; Yu & Chen, 2014 ; Yu & Pan, 2014 ; Yu et al., 2010 ; Zhong et al., 2017 ). The data from the studies meeting the inclusion criteria were independently processed in the second coding key by three researchers, and consensus meetings were arranged for further discussion. After the meetings, researchers came to an agreement that the data were coded accurately and precisely. Having identified the effect sizes and heterogeneity of the study, moderator variables that will show the differences between the effect sizes were determined. The data related to the determined moderator variables were added to the coding key by three researchers, and a new consensus meeting was arranged. After the meeting, researchers came to an agreement that moderator variables were coded accurately and precisely.

2.2 Study group

27 studies are included in the meta-analysis. The total sample size of the studies that are included in the analysis is 1772. The characteristics of the studies included are given in Table 1 .

2.3 Publication bias

Publication bias is the limited capacity of published studies on a research subject to represent all completed studies on the same subject (Card, 2011; Littell et al., 2008). Put differently, publication bias exists when the probability that a study is published is related to the effect size and significance it produces. Within this scope, publication bias may occur when researchers choose not to publish a study because it failed to yield the expected results, or when a study is not accepted by scientific journals, and is consequently not included in the research synthesis (Makowski et al., 2019). A high possibility of publication bias in a meta-analysis negatively affects the accuracy of the combined effect size (Pecoraro, 2018), causing the average effect size to be reported differently than it should be (Borenstein et al., 2009). For this reason, the possibility of publication bias in the included studies was tested before determining the effect sizes of the relationships between the stated variables. The possibility of publication bias in this meta-analysis was analyzed using the funnel plot, Orwin's Fail-Safe N analysis, Duval and Tweedie's Trim and Fill analysis, and Egger's Regression Test.
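As an illustration of how one of these checks works, the following is a minimal sketch of Egger's regression test, assuming each study supplies an effect size and its standard error. The standardized effect (effect divided by standard error) is regressed on precision (one over the standard error), and an intercept far from zero suggests funnel plot asymmetry. The function name `egger_test` and the sample numbers are hypothetical and are not taken from the studies analyzed here.

```python
import math

def egger_test(effects, std_errors):
    """Egger's regression: regress effect/SE on 1/SE with ordinary least squares.

    Returns (intercept, standard error of the intercept, t statistic).
    The t statistic has n - 2 degrees of freedom.
    """
    if len(effects) != len(std_errors) or len(effects) < 3:
        raise ValueError("need at least three studies with matching inputs")
    y = [g / se for g, se in zip(effects, std_errors)]  # standardized effects
    x = [1.0 / se for se in std_errors]                 # precisions
    n = len(y)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    sigma2 = residual_ss / (n - 2)
    se_intercept = math.sqrt(sigma2 * (1.0 / n + mean_x ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept

# Hypothetical effect sizes and standard errors, for illustration only.
example_effects = [0.35, 0.52, 0.41, 0.28, 0.60, 0.44]
example_ses = [0.12, 0.20, 0.15, 0.10, 0.25, 0.18]
intercept, se_intercept, t_stat = egger_test(example_effects, example_ses)
print(f"intercept={intercept:.3f}, SE={se_intercept:.3f}, t={t_stat:.3f}")
```

The t statistic would then be compared against a t distribution with n - 2 degrees of freedom; a non-significant intercept is read as no evidence of small-study asymmetry.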

2.4 Selecting the model

After determining the probability of publication bias of this meta-analysis study, the statistical model used to calculate the effect sizes was selected. The main approaches used in the effect size calculations according to the differentiation level of inter-study variance are fixed and random effects models (Pigott, 2012 ). Fixed effects model refers to the homogeneity of the characteristics of combined studies apart from the sample sizes, while random effects model refers to the parameter diversity between the studies (Cumming, 2012 ). While calculating the average effect size in the random effects model (Deeks et al., 2008 ) that is based on the assumption that effect predictions of different studies are only the result of a similar distribution, it is necessary to consider several situations such as the effect size apart from the sample error of combined studies, characteristics of the participants, duration, scope, and pattern of the study (Littell et al., 2008 ). While deciding the model in the meta-analysis study, the assumptions on the sample characteristics of the studies included in the analysis and the inferences that the researcher aims to make should be taken into consideration. The fact that the sample characteristics of the studies conducted in the field of social sciences are affected by various parameters shows that using random effects model is more appropriate in this sense. Besides, it is stated that the inferences made with the random effects model are beyond the studies included in the meta-analysis (Field, 2003 ; Field & Gillett, 2010 ). Therefore, using random effects model also contributes to the generalization of research data. The specified criteria for the statistical model selection show that according to the nature of the meta-analysis study, the model should be selected just before the analysis (Borenstein et al., 2007 ; Littell et al., 2008 ). 
Within this framework, it was decided to make use of the random effects model, considering that the students who are the samples of the studies included in the meta-analysis are from different countries and cultures, the sample characteristics of the studies differ, and the patterns and scopes of the studies vary as well.
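To make the difference between the two models concrete, here is a minimal sketch that pools the same effect sizes under a fixed effects model (inverse-variance weights) and under a random effects model. It assumes the DerSimonian-Laird estimator for the between-study variance, which is one common choice; the text above does not state which estimator was used. The function name `pool_effects` and the input values are hypothetical.

```python
def pool_effects(effects, variances):
    """Pool effect sizes under fixed and random effects models.

    Fixed effects use weights 1/v_i. Random effects use weights
    1/(v_i + tau2), where tau2 is the DerSimonian-Laird estimate of
    the between-study variance (assumed estimator).
    """
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * g for w, g in zip(weights, effects)) / sum_w
    q = sum(w * (g - fixed) ** 2 for w, g in zip(weights, effects))
    df = len(effects) - 1
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    re_weights = [1.0 / (v + tau2) for v in variances]
    random = sum(w * g for w, g in zip(re_weights, effects)) / sum(re_weights)
    return {"fixed": fixed, "random": random, "tau2": tau2, "q": q}

# Hypothetical effect sizes and sampling variances, for illustration only.
result = pool_effects([0.10, 0.45, 0.80, 0.30], [0.02, 0.05, 0.04, 0.03])
print(result)
```

When the estimated between-study variance is zero, the two sets of weights coincide and both models return the same pooled effect, which is why low heterogeneity makes the model choice less consequential.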

2.5 Heterogeneity

Meta-analysis facilitates analyzing the research subject with different parameters by showing the level of diversity between the included studies. Within this frame, the present study evaluated whether the included studies are heterogeneously distributed. The heterogeneity of the studies combined in this meta-analysis was determined through the Q and I² tests. The Q test evaluates the probability that the differences between the observed results are due to random distribution (Deeks et al., 2008). A Q value exceeding the χ² value calculated according to the degrees of freedom and significance level indicates heterogeneity of the combined effect sizes (Card, 2011). The I² test, which complements the Q test, shows the amount of heterogeneity in the effect sizes (Cleophas & Zwinderman, 2017). An I² value higher than 75% is interpreted as a high level of heterogeneity.
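For reference, the two statistics are commonly written as follows. The notation is an assumption introduced here for illustration: $g_i$ is the effect size of study $i$, $v_i$ its sampling variance, and $k$ the number of studies.

```latex
\[
\bar{g} = \frac{\sum_{i=1}^{k} w_i g_i}{\sum_{i=1}^{k} w_i},
\qquad w_i = \frac{1}{v_i}
\]
\[
Q = \sum_{i=1}^{k} w_i \left(g_i - \bar{g}\right)^2,
\qquad df = k - 1
\]
\[
I^2 = \max\!\left(0,\ \frac{Q - df}{Q}\right) \times 100\%
\]
```

Under the null hypothesis of homogeneity, $Q$ follows a χ² distribution with $k - 1$ degrees of freedom, which is why it is compared against the χ² critical value.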

In case of encountering heterogeneity in the studies included in the meta-analysis, the reasons of heterogeneity can be analyzed by referring to the study characteristics. The study characteristics which may be related to the heterogeneity between the included studies can be interpreted through subgroup analysis or meta-regression analysis (Deeks et al., 2008 ). While determining the moderator variables, the sufficiency of the number of variables, the relationship between the moderators, and the condition to explain the differences between the results of the studies have all been considered in the present study. Within this scope, it was predicted in this meta-analysis study that the heterogeneity can be explained with the country, class level, and lecture moderator variables of the study in terms of the effect of online education, which has been highly used since the beginning of the pandemic, and it has an impact on the students’ academic achievement in different lectures. Some subgroups were evaluated and categorized together, considering that the number of effect sizes of the sub-dimensions of the specified variables is not sufficient to perform moderator analysis (e.g. the countries where the studies were conducted).
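A subgroup (moderator) analysis of this kind can be sketched as a partition of the total Q statistic into a within-group part and a between-group part. The sketch below assumes fixed effects weights within each subgroup, which is one common variant; the function names and the grouped data are hypothetical.

```python
def q_statistic(studies):
    """Return (pooled effect, Q) for a list of (effect, variance) pairs."""
    weights = [1.0 / v for _, v in studies]
    pooled = sum(w * g for w, (g, _) in zip(weights, studies)) / sum(weights)
    q = sum(w * (g - pooled) ** 2 for w, (g, _) in zip(weights, studies))
    return pooled, q

def subgroup_q_between(groups):
    """Partition heterogeneity: Q_between = Q_total - sum of Q_within.

    `groups` maps a subgroup label (e.g. a country) to its list of
    (effect, variance) pairs. Returns (Q_between, degrees of freedom).
    """
    all_studies = [study for studies in groups.values() for study in studies]
    _, q_total = q_statistic(all_studies)
    q_within = sum(q_statistic(studies)[1] for studies in groups.values())
    return q_total - q_within, len(groups) - 1

# Hypothetical subgroups, for illustration only.
example_groups = {
    "Country A": [(0.30, 0.04), (0.45, 0.05), (0.38, 0.03)],
    "Country B": [(0.50, 0.06), (0.42, 0.04)],
}
q_between, df_between = subgroup_q_between(example_groups)
print(f"Q_between={q_between:.3f} with {df_between} degree(s) of freedom")
```

A Q_between value below the χ² critical value for its degrees of freedom means the subgroup effect sizes do not differ significantly, which is how the moderator results in the tables are read.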

2.6 Interpreting the effect sizes

Effect size is a factor that shows how much the independent variable affects the dependent variable, positively or negatively, in each study included in the meta-analysis (Dinçer, 2014). The classifications of Cohen et al. (2007) were used to interpret the effect sizes obtained from the meta-analysis. Whether the specified relationships differ according to the country, class level, and school subject variables was identified through the Q test, degrees of freedom, and p significance value (Figs. 1 and 2).
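As a worked illustration of the effect size metric reported in this study (Hedges' g), the sketch below computes g, its variance, and a 95% confidence interval from the group means, standard deviations, and sample sizes of a single two-group experiment. It uses the standard small-sample correction factor J = 1 - 3/(4df - 1). The function name and the input numbers are hypothetical.

```python
import math

def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, variance of g, 95% confidence interval).

    The standardized mean difference d uses the pooled standard deviation
    and is multiplied by J = 1 - 3 / (4 * df - 1) to correct small-sample bias.
    """
    df = n_t + n_c - 2
    pooled_sd = math.sqrt(((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / df)
    d = (mean_t - mean_c) / pooled_sd
    j = 1.0 - 3.0 / (4.0 * df - 1.0)
    g = j * d
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2.0 * (n_t + n_c))
    var_g = j ** 2 * var_d
    se_g = math.sqrt(var_g)
    return g, var_g, (g - 1.96 * se_g, g + 1.96 * se_g)

# Hypothetical experiment: online group vs. traditional group test scores.
g, var_g, ci = hedges_g(mean_t=75.0, sd_t=10.0, n_t=30,
                        mean_c=70.0, sd_c=10.0, n_c=30)
print(f"g={g:.3f}, variance={var_g:.4f}, 95% CI=({ci[0]:.3f}, {ci[1]:.3f})")
```

A positive g favors the first (treatment) group, and the confidence interval corresponds to the horizontal line drawn for each study in a forest graph.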

3 Findings and results

The purpose of this study is to determine the effect size of online education on academic achievement. Before determining the effect sizes, the probability of publication bias in this meta-analysis was analyzed using the funnel plot, Orwin's Fail-Safe N analysis, Duval and Tweedie's Trim and Fill analysis, and Egger's Regression Test.

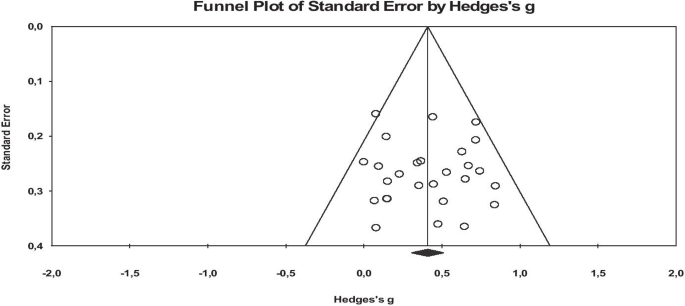

When the funnel plots are examined, the studies included in the analysis are seen to be distributed symmetrically on both sides of the combined effect size axis, and they are generally clustered in the middle and lower sections. According to the plots, the probability of publication bias is low. However, since funnel scatter plots are open to subjective interpretation, they were supported by additional analyses (Littell et al., 2008). Therefore, to provide further evidence on the probability of publication bias, it was also analyzed through Orwin's Fail-Safe N analysis, Duval and Tweedie's Trim and Fill analysis, and Egger's Regression Test (Table 2).

Table 2 presents the results of the publication bias analyses conducted before calculating the effect size of online education on academic achievement. According to the table, the Orwin Fail-Safe N analysis results show that it is not necessary to add new studies to the meta-analysis in order for Hedges' g to reach a value outside the range of ± 0.01. The Duval and Tweedie test shows that excluding the studies that negatively affect the symmetry of the funnel scatter plots, or adding their exact symmetrical equivalents, does not significantly change the calculated effect size. The non-significant result of the Egger test indicates that there is no publication bias in the meta-analysis. The results of these analyses indicate the high internal validity of the effect sizes and their adequacy in representing the studies conducted on the relevant subject.
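The logic of Orwin's Fail-Safe N can be sketched in a few lines: it estimates how many unpublished studies with a given mean effect (zero by default) would have to be added to pull the combined effect down to a chosen trivial criterion. The example below plugs in the number of studies (27) and the combined effect (0.409) reported in this study, with 0.01 assumed as the criterion value. It is illustrative only and is not a reproduction of Table 2; the function name is hypothetical.

```python
def orwin_fail_safe_n(k, mean_effect, criterion, mean_missing=0.0):
    """Orwin's Fail-Safe N.

    k            : number of studies in the meta-analysis
    mean_effect  : combined effect size of those studies
    criterion    : trivial effect size the combined effect would drop to
    mean_missing : assumed mean effect of the missing studies
    """
    if criterion <= mean_missing:
        raise ValueError("criterion must exceed the mean effect of missing studies")
    return k * (mean_effect - criterion) / (criterion - mean_missing)

# Assumed inputs: 27 studies, g = 0.409, criterion 0.01, missing studies at 0.
n_missing = orwin_fail_safe_n(k=27, mean_effect=0.409, criterion=0.01)
print(round(n_missing))  # about 1077 additional null studies
```

A large value relative to the number of included studies is usually read as a sign that the combined effect is robust to unpublished null results.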

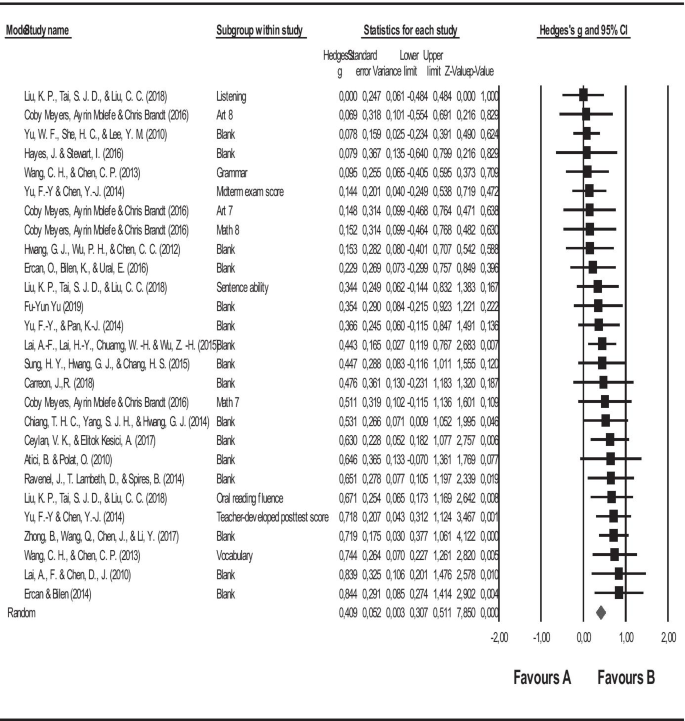

In this study, it was aimed to determine the effect size of online education on academic achievement after testing the publication bias. In line with the first purpose of the study, the forest graph regarding the effect size of online education on academic achievement is shown in Fig. 3 , and the statistics regarding the effect size are given in Table 3 .

Fig. 1 The flow chart of the scanning and selection process of the studies

Fig. 2 Funnel plot graphics representing the effect size of the effects of online education on academic success

Fig. 3 Forest graph related to the effect size of online education on academic success

The square symbols in the forest graph in Fig. 3 represent the effect sizes, while the horizontal lines show the intervals in 95% confidence of the effect sizes, and the diamond symbol shows the overall effect size. When the forest graph is analyzed, it is seen that the lower and upper limits of the combined effect sizes are generally close to each other, and the study loads are similar. This similarity in terms of study loads indicates the similarity of the contribution of the combined studies to the overall effect size.

Figure 3 shows that the study of Liu et al. (2018) has the lowest effect size and the study of Ercan and Bilen (2014) has the highest. The forest graph shows that all the combined studies and the overall effect are positive. Furthermore, the forest graph in Fig. 3 and the effect size statistics in Table 3 show that the meta-analysis, conducted with 27 studies on the effect of online education on academic achievement, yields an effect at an average level (g = 0.409).

After the analysis of the effect size, the study also examined whether the included studies are heterogeneously distributed. The heterogeneity of the combined studies was determined through the Q and I² tests. The heterogeneity test yielded a Q statistic of 29.576. With 26 degrees of freedom at the 95% confidence level (α = 0.05), the critical value in the chi-square table is 38.885. The Q statistic (29.576) calculated in this study is lower than the critical value of 38.885. The I² value, which complements the Q statistic, is 12.100%. This value indicates that the true heterogeneity, that is, the share of total variability that can be attributed to variability between the studies, is 12%. In addition, the p value (0.285) is higher than 0.05. All these values [Q(26) = 29.579, p = 0.285; I² = 12.100] indicate that the effect sizes are homogeneously distributed and that the fixed effects model should be used to interpret them. However, some researchers argue that even if the heterogeneity is low, it should be evaluated based on the random effects model (Borenstein et al., 2007). Therefore, this study gives information about both models. An attempt was made to explain the heterogeneity of the combined studies through the characteristics of the studies included in the analysis. In this context, the final purpose of the study is to determine the effect of the country, academic level, and year variables on the findings. Accordingly, the statistics regarding the comparison of the stated relations according to the countries where the studies were conducted are given in Table 4.
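The arithmetic behind these heterogeneity figures can be checked with a short sketch. It takes the Q statistic, degrees of freedom, and chi-square critical value exactly as reported above (no critical value is computed here), and the function name `i_squared` is hypothetical.

```python
def i_squared(q, df):
    """Return I² as a percentage: max(0, (Q - df) / Q) * 100."""
    if q <= 0:
        return 0.0
    return max(0.0, (q - df) / q) * 100.0

# Values as reported in the text above.
q_reported = 29.576
df_reported = 26
critical_value = 38.885  # chi-square critical value quoted in the text

print(round(i_squared(q_reported, df_reported), 1))  # 12.1
print(q_reported > critical_value)                   # False: homogeneity not rejected
```

Because Q falls below the critical value and I² is well under the 75% threshold mentioned earlier, both checks point to low heterogeneity.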

As seen in Table 4 , the effect of online education on academic achievement does not differ significantly according to the countries where the studies were conducted in. Q test results indicate the heterogeneity of the relationships between the variables in terms of countries where the studies were conducted in. According to the table, the effect of online education on academic achievement was reported as the highest in other countries, and the lowest in the US. The statistics regarding the comparison of the stated relations according to the class levels are given in Table 5 .

As seen in Table 5, the effect of online education on academic achievement does not differ according to the class level. However, the effect of online education on academic achievement is the highest in the 4th class. The statistics regarding the comparison of the stated relations according to the school subjects are given in Table 6.

As seen in Table 6 , the effect of online education on academic achievement does not differ according to the school subjects included in the studies. However, the effect of online education on academic achievement is the highest in ICT subject.

The effect size obtained in this study is based on findings from primary studies conducted in 7 different countries. In addition, these studies cover different approaches to online education (online learning environments, social networks, blended learning, etc.). In this respect, questions may be raised about the validity and generalizability of the results. However, the moderator analyses, whether for the country variable or for the approaches covered by online education, did not reveal significant differences in the effect sizes. Had significant differences in effect sizes occurred, comparisons made across countries under the umbrella of online education would raise doubts about generalizability. Moreover, no study was found in the literature that was conducted under the name of online education alone, without being based on a particular approach or containing a specific technique. For instance, one commonly used definition is blended education, which is defined as an educational model in which online education is combined with traditional education methods (Colis & Moonen, 2001). Similarly, Rasmussen (2003) defines blended learning as “a distance education method that combines technology (high technology such as television, internet, or low technology such as voice e-mail, conferences) with traditional education and training.” Further, Kerres and Witt (2003) define blended learning as “combining face-to-face learning with technology-assisted learning.” As can be clearly seen, online education, which has a wider scope, includes many approaches.

As seen in Table 7 , the effect of online education on academic achievement does not differ according to online education approaches included in the studies. However, the effect of online education on academic achievement is the highest in Web Based Problem Solving Approach.

4 Conclusions and discussion

Considering the developments during the pandemic, it is thought that the diversity of online education applications, as an interdisciplinary and pragmatic field, will increase, and that learning content and processes will be enriched by the integration of new technologies into online education. Another prediction is that more flexible and accessible learning opportunities will be created in online education, thereby strengthening lifelong learning. As a result, it is predicted that in the near future online education, or digital learning under a newer name, will become the main ground of education rather than an alternative or a support to face-to-face learning. The lessons learned from the early online learning experience, which required rapid adaptation due to the COVID-19 pandemic, will help develop this method all over the world, and in the near future online learning will become the main learning structure as new technologies and systems increase its functionality. From this point of view, there is a need to strengthen online education.

In this study, the effect of online learning on academic achievement is at a moderate level. To increase this effect, the implementation of online learning requires support from teachers to prepare learning materials, to design learning appropriately, and to use various digital media such as websites, software, and other tools that support the effectiveness of online learning (Rolisca & Achadiyah, 2014). According to research by Rahayu et al. (2017), the use of various types of software increases the effectiveness and quality of online learning. Implementing online learning can affect students' ability to adapt to technological developments because it leads them to use various learning resources on the internet to access different types of information and helps them get used to inquiry learning and active learning (Hart et al., 2019; Prestiadi et al., 2019). In addition, there may be many reasons why the effect in this study is not higher. The moderator variables examined in this study could serve as a guide for increasing the practical effect. However, the effect size did not differ significantly for any of the moderator variables. Different moderator analyses could be evaluated in order to increase the impact of online education on academic success. If confounding variables that significantly change the effect level are detected, more precise recommendations for increasing this level can be made. In addition to technical and financial problems, the level of impact will increase if a few other difficulties are eliminated, such as students' lack of interaction with the instructor, response time, and the lack of traditional classroom socialization.

In addition, COVID-19 pandemic related social distancing has posed extreme difficulties for all stakeholders to get online as they have to work in time constraints and resource constraints. Adopting the online learning environment is not just a technical issue, it is a pedagogical and instructive challenge as well. Therefore, extensive preparation of teaching materials, curriculum, and assessment is vital in online education. Technology is the delivery tool and requires close cross-collaboration between teaching, content and technology teams (CoSN, 2020 ).

Online education applications have been used for many years. However, they have come to the fore during the pandemic. This necessity has prompted discussion of using online education instead of traditional education methods in the future. However, this research has shown that online education applications are moderately effective. Using online education in place of face-to-face education is only feasible if the level of success increases. The experience and knowledge gained during the pandemic may have made this possible. Therefore, meta-analyses of experimental studies conducted in the coming years will guide us. In this context, experimental studies using online education applications should be analyzed carefully. It would be useful to identify, through different moderators, the variables that can change the level of impact. Moderator analyses are valuable in meta-analysis studies (for example, the role of moderators in Karl Pearson's typhoid vaccine studies). In this context, each analysis sheds light on future studies. In future meta-analyses of online education, it would be beneficial to go beyond the moderators examined in this study. This would increase the contribution of similar studies to the field.

The purpose of this study is to determine the effect of online education on academic achievement. In line with this purpose, the studies that analyze the effect of online education approaches on academic achievement have been included in the meta-analysis. The total sample size of the studies included in the meta-analysis is 1772. While the studies included in the meta-analysis were conducted in the US, Taiwan, Turkey, China, Philippines, Ireland, and Georgia, the studies carried out in Europe could not be reached. One possible reason is that quantitative research methods from a positivist perspective may be used more in countries with an American academic tradition. As a result of the study, it was found that the effect size of online education on academic achievement (g = 0.409) was moderate. In the studies included in the present research, we found that online education approaches were more effective than traditional ones. However, contrary to the present study, comparisons between online and traditional education in some studies show that face-to-face traditional learning is still considered more effective than online learning (Ahmad et al., 2016; Hamdani & Priatna, 2020; Wei & Chou, 2020). Online education has advantages and disadvantages. One advantage of online learning over face-to-face classroom learning is the flexibility of learning time: it is not tied to a single program and can be shaped according to circumstances (Lai et al., 2019). Another advantage is the ease of collecting assignments from students, as this can be done without having to talk to the teacher. Despite this, online education has several weaknesses, such as students having difficulty understanding the material, teachers' inability to control students, and students still having difficulty interacting with teachers when the internet connection is cut (Swan, 2007).
According to Astuti et al. (2019), students still consider the face-to-face education method better than e-learning because it is easier to understand the material and easier to interact with teachers. The results of the study showed that the effect size (g = 0.409) of online education on academic achievement is at a medium level. Moreover, the results of the moderator analysis showed that the effect of online education on academic achievement does not differ in terms of the country, lecture, class level, and online education approach variables. A review of the literature shows that several meta-analyses on online education have been published (Bernard et al., 2004; Machtmes & Asher, 2000; Zhao et al., 2005). Typically, these meta-analyses also include studies of older-generation technologies such as audio, video, or satellite transmission. One of the most comprehensive studies on online education was conducted by Bernard et al. (2004). In this study, 699 independent effect sizes from 232 studies published from 1985 to 2001 were analyzed, and face-to-face education was compared to online education with respect to the success criteria and attitudes of various learners, from young children to adults. In this meta-analysis, an overall effect size close to zero was found for the students' achievement (g+ = 0.01).

In another meta-analysis study carried out by Zhao et al. ( 2005 ), 98 effect sizes were examined, including 51 studies on online education conducted between 1996 and 2002. According to the study of Bernard et al. ( 2004 ), this meta-analysis focuses on the activities done in online education lectures. As a result of the research, an overall effect size close to zero was found for online education utilizing more than one generation technology for students at different levels. However, the salient point of the meta-analysis study of Zhao et al. is that it takes the average of different types of results used in a study to calculate an overall effect size. This practice is problematic because the factors that develop one type of learner outcome (e.g. learner rehabilitation), particularly course characteristics and practices, may be quite different from those that develop another type of outcome (e.g. learner's achievement), and it may even cause damage to the latter outcome. While mixing the studies with different types of results, this implementation may obscure the relationship between practices and learning.

Some meta-analytical studies have focused on the effectiveness of new-generation distance learning courses accessed through the internet for specific student populations. For instance, Sitzmann et al. (2006) reviewed 96 studies published from 1996 to 2005, comparing web-based education in job-related knowledge or skills with face-to-face education. The researchers found that web-based education in general was slightly more effective than face-to-face education, but that it was insufficient in terms of applicability ("knowing how to apply"). In addition, Sitzmann et al. (2006) revealed that Internet-based education has a positive effect on theoretical knowledge in quasi-experimental studies; however, the results favor face-to-face education in experimental studies performed with random assignment. This moderator analysis emphasizes the need to pay attention to the designs of the studies included in a meta-analysis. The designs of the studies included in the present meta-analysis were not taken into account. Considering study design can therefore be suggested for future studies.

Another meta-analysis focusing on online education was conducted by Cavanaugh et al. (2004). In this study of internet-based distance education programs for K-12 students, the researchers combined 116 results from 14 studies published between 1999 and 2004 and calculated an overall effect that was not statistically different from zero. The moderator analysis carried out in this study showed that no factor significantly affected students' success. This meta-analysis used multiple results from the same study, ignoring the fact that different results from the same students are not independent of each other.
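The dependence problem described above can be illustrated with a minimal sketch. One common remedy, which is not necessarily the one used in any of the cited studies, is to average the effect sizes within each study before pooling, so that each study contributes a single value. The function name and all numbers below are hypothetical and serve only as an illustration; the averaging is unweighted for simplicity.

```python
from collections import defaultdict
from statistics import mean


def average_within_study(effects):
    """Collapse (study_id, effect_size) pairs to one mean effect per study."""
    by_study = defaultdict(list)
    for study_id, d in effects:
        by_study[study_id].append(d)
    return {study_id: mean(ds) for study_id, ds in by_study.items()}


# Hypothetical values for illustration only; not data from any cited study.
# Study "A" reports three outcomes for the same students, study "B" reports one.
effects = [("A", 0.10), ("A", 0.30), ("A", 0.20), ("B", -0.40)]

# Naive approach: treat all four results as if they were independent.
naive_mean = mean(d for _, d in effects)

# Study-level approach: one averaged effect per study, then combine.
per_study = average_within_study(effects)
study_level_mean = mean(per_study.values())
```

In this made-up example the naive mean is pulled toward study "A" simply because that study reports more outcomes, while the study-level mean gives both studies equal say. A full analysis would also weight each study by its precision.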

In conclusion, some meta-analytical studies analyzed the consequences of online education for a wide range of students (Bernard et al., 2004; Zhao et al., 2005), and the effect sizes in these studies were generally low. Furthermore, none of the large-scale meta-analyses considered moderators, database quality standards, or class levels in selecting studies, while some referred only to the country and lecture moderators. Advances in internet-based learning tools, the pandemic, and the increasing popularity of online learning in different learning contexts call for a precise meta-analysis of students' learning outcomes through online learning. Previous meta-analyses were typically based on studies involving a narrow range of confounding variables. In the present study, common but significant moderators such as class level and lectures during the pandemic were examined. For instance, problems have been experienced during the pandemic, especially regarding the suitability of online education platforms for different class levels. There is a need to study, and make suggestions on, whether online education can meet the needs of teachers and students.

Besides, in the past the main forms of online education were watching the open lectures of famous universities and the educational videos of institutions. During the pandemic, by contrast, online education has mainly been classroom-based teaching delivered by teachers in their own schools, as an extension of regular school education. This meta-analysis will serve as a source for comparing the effect size of the online education forms of the past decade with what is done today and what will be done in the future.

Lastly, the heterogeneity test results of the meta-analysis show that the effect size does not differ by class level, country, online education approach, or lecture moderators.
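A between-subgroup heterogeneity test of the kind referred to above can be sketched as follows. The text does not state how the statistic was computed, so this is an assumption: a fixed-effect, inverse-variance version of the between-groups Q statistic, with hypothetical function names and made-up numbers.

```python
from math import fsum


def pooled(effects, variances):
    """Return the inverse-variance weighted mean and the total weight."""
    weights = [1.0 / v for v in variances]
    total = fsum(weights)
    return fsum(w * d for w, d in zip(weights, effects)) / total, total


def q_between(groups):
    """Between-subgroup Q statistic and its degrees of freedom.

    `groups` maps a moderator level to a pair (effects, variances).
    """
    summaries = [pooled(d, v) for d, v in groups.values()]
    grand_weight = fsum(w for _, w in summaries)
    grand_mean = fsum(m * w for m, w in summaries) / grand_weight
    q = fsum(w * (m - grand_mean) ** 2 for m, w in summaries)
    return q, len(summaries) - 1


# Hypothetical effect sizes and variances; not data from the present study.
groups = {
    "primary": ([0.0, 0.0], [0.04, 0.04]),
    "secondary": ([0.4, 0.4], [0.04, 0.04]),
}
q, df = q_between(groups)  # q is about 4.0 with df = 1 for these numbers
```

The statistic is compared with a chi-square distribution with `df` degrees of freedom. A value below the critical value is read as no detectable difference between the moderator levels, which is the pattern reported above.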

*Studies included in meta-analysis

Ahmad, S., Sumardi, K., & Purnawan, P. (2016). Komparasi Peningkatan Hasil Belajar Antara Pembelajaran Menggunakan Sistem Pembelajaran Online Terpadu Dengan Pembelajaran Klasikal Pada Mata Kuliah Pneumatik Dan Hidrolik. Journal of Mechanical Engineering Education, 2 (2), 286–292.

Ally, M. (2004). Foundations of educational theory for online learning. Theory and Practice of Online Learning, 2, 15–44. Retrieved on the 11th of September, 2020 from https://eddl.tru.ca/wp-content/uploads/2018/12/01_Anderson_2008-Theory_and_Practice_of_Online_Learning.pdf

Arat, T., & Bakan, Ö. (2011). Uzaktan eğitim ve uygulamaları. Selçuk Üniversitesi Sosyal Bilimler Meslek Yüksek Okulu Dergisi, 14 (1–2), 363–374. https://doi.org/10.29249/selcuksbmyd.540741

Astuti, C. C., Sari, H. M. K., & Azizah, N. L. (2019). Perbandingan Efektifitas Proses Pembelajaran Menggunakan Metode E-Learning dan Konvensional. Proceedings of the ICECRS, 2 (1), 35–40.

*Atici, B., & Polat, O. C. (2010). Influence of the online learning environments and tools on the student achievement and opinions. Educational Research and Reviews, 5 (8), 455–464. Retrieved on the 11th of October, 2020 from https://academicjournals.org/journal/ERR/article-full-text-pdf/4C8DD044180.pdf

Bernard, R. M., Abrami, P. C., Lou, Y., Borokhovski, E., Wade, A., Wozney, L., et al. (2004). How does distance education compare with classroom instruction? A meta-analysis of the empirical literature. Review of Educational Research, 74 (3), 379–439. https://doi.org/10.3102/00346543074003379

Borenstein, M., Hedges, L. V., Higgins, J. P. T., & Rothstein, H. R. (2009). Introduction to meta-analysis. Wiley.

Borenstein, M., Hedges, L., & Rothstein, H. (2007). Meta-analysis: Fixed effect vs. random effects. UK: Wiley.

Card, N. A. (2011). Applied meta-analysis for social science research: Methodology in the social sciences. Guilford.

*Carreon, J. R. (2018). Facebook as integrated blended learning tool in technology and livelihood education exploratory. Retrieved on the 1st of October, 2020 from https://files.eric.ed.gov/fulltext/EJ1197714.pdf

Cavanaugh, C., Gillan, K. J., Kromrey, J., Hess, M., & Blomeyer, R. (2004). The effects of distance education on K-12 student outcomes: A meta-analysis. Learning Point Associates/North Central Regional Educational Laboratory (NCREL). Retrieved on the 11th of September, 2020 from https://files.eric.ed.gov/fulltext/ED489533.pdf

*Ceylan, V. K., & Elitok Kesici, A. (2017). Effect of blended learning to academic achievement. Journal of Human Sciences, 14 (1), 308. https://doi.org/10.14687/jhs.v14i1.4141

*Chae, S. E., & Shin, J. H. (2016). Tutoring styles that encourage learner satisfaction, academic engagement, and achievement in an online environment. Interactive Learning Environments, 24(6), 1371–1385. https://doi.org/10.1080/10494820.2015.1009472

*Chiang, T. H. C., Yang, S. J. H., & Hwang, G. J. (2014). An augmented reality-based mobile learning system to improve students’ learning achievements and motivations in natural science inquiry activities. Educational Technology and Society, 17 (4), 352–365. Retrieved on the 11th of September, 2020 from https://www.researchgate.net/profile/Gwo_Jen_Hwang/publication/287529242_An_Augmented_Reality-based_Mobile_Learning_System_to_Improve_Students'_Learning_Achievements_and_Motivations_in_Natural_Science_Inquiry_Activities/links/57198c4808ae30c3f9f2c4ac.pdf

Chiao, H. M., Chen, Y. L., & Huang, W. H. (2018). Examining the usability of an online virtual tour-guiding platform for cultural tourism education. Journal of Hospitality, Leisure, Sport & Tourism Education, 23, 29–38. https://doi.org/10.1016/j.jhlste.2018.05.002

Chizmar, J. F., & Walbert, M. S. (1999). Web-based learning environments guided by principles of good teaching practice. Journal of Economic Education, 30 (3), 248–264. https://doi.org/10.2307/1183061

Cleophas, T. J., & Zwinderman, A. H. (2017). Modern meta-analysis: Review and update of methodologies. Switzerland: Springer. https://doi.org/10.1007/978-3-319-55895-0

Cohen, L., Manion, L., & Morrison, K. (2007). Observation. Research Methods in Education, 6 , 396–412. Retrieved on the 11th of September, 2020 from https://www.researchgate.net/profile/Nabil_Ashraf2/post/How_to_get_surface_potential_Vs_Voltage_curve_from_CV_and_GV_measurements_of_MOS_capacitor/attachment/5ac6033cb53d2f63c3c405b4/AS%3A612011817844736%401522926396219/download/Very+important_C-V+characterization+Lehigh+University+thesis.pdf

Colis, B., & Moonen, J. (2001). Flexible Learning in a Digital World: Experiences and Expectations. Open & Distance Learning Series. Stylus Publishing.

CoSN. (2020). COVID-19 Response: Preparing to Take School Online. Retrieved on the 3rd of September, 2021 from https://www.cosn.org/sites/default/files/COVID-19%20Member%20Exclusive_0.pdf

Cumming, G. (2012). Understanding new statistics: Effect sizes, confidence intervals, and meta-analysis. New York, USA: Routledge. https://doi.org/10.4324/9780203807002

Deeks, J. J., Higgins, J. P. T., & Altman, D. G. (2008). Analysing data and undertaking meta-analyses. In J. P. T. Higgins & S. Green (Eds.), Cochrane handbook for systematic reviews of interventions (pp. 243–296). Sussex: John Wiley & Sons. https://doi.org/10.1002/9780470712184.ch9

Demiralay, R., Bayır, E. A., & Gelibolu, M. F. (2016). Öğrencilerin bireysel yenilikçilik özellikleri ile çevrimiçi öğrenmeye hazır bulunuşlukları ilişkisinin incelenmesi. Eğitim ve Öğretim Araştırmaları Dergisi, 5 (1), 161–168. https://doi.org/10.23891/efdyyu.2017.10

Dinçer, S. (2014). Eğitim bilimlerinde uygulamalı meta-analiz. Pegem Atıf İndeksi, 2014(1), 1–133. https://doi.org/10.14527/pegem.001

*Durak, G., Cankaya, S., Yunkul, E., & Ozturk, G. (2017). The effects of a social learning network on students’ performances and attitudes. European Journal of Education Studies, 3 (3), 312–333. https://doi.org/10.5281/zenodo.292951

*Ercan, O. (2014). Effect of web assisted education supported by six thinking hats on students’ academic achievement in science and technology classes. European Journal of Educational Research, 3 (1), 9–23. https://doi.org/10.12973/eu-jer.3.1.9

Ercan, O., & Bilen, K. (2014). Effect of web assisted education supported by six thinking hats on students’ academic achievement in science and technology classes. European Journal of Educational Research, 3 (1), 9–23.

*Ercan, O., Bilen, K., & Ural, E. (2016). “Earth, sun and moon”: Computer assisted instruction in secondary school science - Achievement and attitudes. Issues in Educational Research, 26 (2), 206–224. https://doi.org/10.12973/eu-jer.3.1.9

Field, A. P. (2003). The problems in using fixed-effects models of meta-analysis on real-world data. Understanding Statistics, 2 (2), 105–124. https://doi.org/10.1207/s15328031us0202_02

Field, A. P., & Gillett, R. (2010). How to do a meta-analysis. British Journal of Mathematical and Statistical Psychology, 63 (3), 665–694. https://doi.org/10.1348/00071010x502733

Geostat. (2019). ‘Share of households with internet access’, National statistics office of Georgia. Retrieved on the 2nd of September, 2020 from https://www.geostat.ge/en/modules/categories/106/information-and-communication-technologies-usage-in-households

*Gwo-Jen, H., Nien-Ting, T., & Xiao-Ming, W. (2018). Creating interactive e-books through learning by design: The impacts of guided peer-feedback on students’ learning achievements and project outcomes in science courses. Journal of Educational Technology & Society, 21 (1), 25–36. Retrieved on the 2nd of October, 2020 from https://ae-uploads.uoregon.edu/ISTE/ISTE2019/PROGRAM_SESSION_MODEL/HANDOUTS/112172923/CreatingInteractiveeBooksthroughLearningbyDesignArticle2018.pdf

Hamdani, A. R., & Priatna, A. (2020). Efektifitas implementasi pembelajaran daring (full online) dimasa pandemi Covid-19 pada jenjang Sekolah Dasar di Kabupaten Subang. Didaktik: Jurnal Ilmiah PGSD STKIP Subang, 6 (1), 1–9.

Hart, C. M., Berger, D., Jacob, B., Loeb, S., & Hill, M. (2019). Online learning, offline outcomes: Online course taking and high school student performance. Aera Open, 5(1).

*Hayes, J., & Stewart, I. (2016). Comparing the effects of derived relational training and computer coding on intellectual potential in school-age children. The British Journal of Educational Psychology, 86 (3), 397–411. https://doi.org/10.1111/bjep.12114

Horton, W. K. (2000). Designing web-based training: How to teach anyone anything anywhere anytime (Vol. 1). Wiley Publishing.

*Hwang, G. J., Wu, P. H., & Chen, C. C. (2012). An online game approach for improving students’ learning performance in web-based problem-solving activities. Computers and Education, 59 (4), 1246–1256. https://doi.org/10.1016/j.compedu.2012.05.009

*Kert, S. B., Köşkeroğlu Büyükimdat, M., Uzun, A., & Çayiroğlu, B. (2017). Comparing active game-playing scores and academic performances of elementary school students. Education 3–13, 45 (5), 532–542. https://doi.org/10.1080/03004279.2016.1140800

*Lai, A. F., & Chen, D. J. (2010). Web-based two-tier diagnostic test and remedial learning experiment. International Journal of Distance Education Technologies, 8 (1), 31–53. https://doi.org/10.4018/jdet.2010010103

*Lai, A. F., Lai, H. Y., Chuang, W. H., & Wu, Z. H. (2015). Developing a mobile learning management system for outdoors nature science activities based on 5e learning cycle. Proceedings of the International Conference on e-Learning, ICEL. Proceedings of the International Association for Development of the Information Society (IADIS) International Conference on e-Learning (Las Palmas de Gran Canaria, Spain, July 21–24, 2015). Retrieved on the 14th of November, 2020 from https://files.eric.ed.gov/fulltext/ED562095.pdf

Lai, C. H., Lin, H. W., Lin, R. M., & Tho, P. D. (2019). Effect of peer interaction among online learning community on learning engagement and achievement. International Journal of Distance Education Technologies (IJDET), 17 (1), 66–77.

Littell, J. H., Corcoran, J., & Pillai, V. (2008). Systematic reviews and meta-analysis. Oxford University.

*Liu, K. P., Tai, S. J. D., & Liu, C. C. (2018). Enhancing language learning through creation: the effect of digital storytelling on student learning motivation and performance in a school English course. Educational Technology Research and Development, 66 (4), 913–935. https://doi.org/10.1007/s11423-018-9592-z