
10.29: Hypothesis Test for a Difference in Two Population Means (1 of 2)


Learning Objectives

- Under appropriate conditions, conduct a hypothesis test about a difference between two population means. State a conclusion in context.

Using the Hypothesis Test for a Difference in Two Population Means

The general steps of this hypothesis test are the same as always. As expected, the details of the conditions for use of the test and the test statistic are unique to this test (but similar in many ways to what we have seen before).

Step 1: Determine the hypotheses.

The hypotheses for a difference in two population means are similar to those for a difference in two population proportions. The null hypothesis, H 0 , is again a statement of “no effect” or “no difference.”

- H 0 : μ 1 – μ 2 = 0, which is the same as H 0 : μ 1 = μ 2

The alternative hypothesis, H a , can be any one of the following.

- H a : μ 1 – μ 2 < 0, which is the same as H a : μ 1 < μ 2

- H a : μ 1 – μ 2 > 0, which is the same as H a : μ 1 > μ 2

- H a : μ 1 – μ 2 ≠ 0, which is the same as H a : μ 1 ≠ μ 2

Step 2: Collect the data.

As usual, how we collect the data determines whether we can use it in the inference procedure. We have our usual two requirements for data collection.

- Samples must be random to remove or minimize bias.

- Samples must be representative of the populations in question.

We use this hypothesis test when the data meets the following conditions.

- The two random samples are independent.

- The variable is normally distributed in both populations. If this is not known, samples of more than 30 will have a difference in sample means that can be modeled adequately by the t-distribution. As we discussed in “Hypothesis Test for a Population Mean,” t-procedures are robust even when the variable is not normally distributed in the population. If checking normality in the populations is impossible, then we look at the distribution in the samples. If a histogram or dotplot of the data does not show extreme skew or outliers, we take it as a sign that the variable is not heavily skewed in the populations, and we use the inference procedure. (Note: This is the same condition we used for the one-sample t-test in “Hypothesis Test for a Population Mean.”)

Step 3: Assess the evidence.

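If the conditions are met, then we calculate the t-test statistic. The t-test statistic has a familiar form.

[latex]T = \displaystyle \frac{(\bar{x_1} - \bar{x_2}) - (\mu_1 - \mu_2)}{\sqrt{\displaystyle \frac{s_1^2}{n_1} + \displaystyle \frac{s_2^2}{n_2}}}[/latex]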

Since the null hypothesis assumes there is no difference in the population means, the expression (μ 1 – μ 2 ) is always zero.

As we learned in “Estimating a Population Mean,” the t-distribution depends on the degrees of freedom (df) . In the one-sample and matched-pair cases df = n – 1. For the two-sample t-test, determining the correct df is based on a complicated formula that we do not cover in this course. We will either give the df or use technology to find the df . With the t-test statistic and the degrees of freedom, we can use the appropriate t-model to find the P-value, just as we did in “Hypothesis Test for a Population Mean.” We can even use the same simulation.

Step 4: State a conclusion.

To state a conclusion, we follow what we have done with other hypothesis tests. We compare our P-value to a stated level of significance.

- If the P-value ≤ α, we reject the null hypothesis in favor of the alternative hypothesis.

- If the P-value > α, we fail to reject the null hypothesis. We do not have enough evidence to support the alternative hypothesis.

As always, we state our conclusion in context, usually by referring to the alternative hypothesis.

“Context and Calories”

Does the company you keep impact what you eat? This example comes from an article titled “Impact of Group Settings and Gender on Meals Purchased by College Students” (Allen-O’Donnell, M., T. C. Nowak, K. A. Snyder, and M. D. Cottingham, Journal of Applied Social Psychology 41(9), 2011, onlinelibrary.wiley.com/doi/10.1111/j.1559-1816.2011.00804.x/full). In this study, researchers examined this issue in the context of gender-related theories in their field. For our purposes, we look at this research more narrowly.

Step 1: Stating the hypotheses.

In the article, the authors make the following hypothesis. “The attempt to appear feminine will be empirically demonstrated by the purchase of fewer calories by women in mixed-gender groups than by women in same-gender groups.” We translate this into a simpler and narrower research question: Do women purchase fewer calories when they eat with men compared to when they eat with women?

Here the two populations are “women eating with women” (population 1) and “women eating with men” (population 2). The variable is the calories in the meal. We test the following hypotheses at the 5% level of significance.

The null hypothesis is always H 0 : μ 1 – μ 2 = 0, which is the same as H 0 : μ 1 = μ 2 .

The alternative hypothesis is H a : μ 1 – μ 2 > 0, which is the same as H a : μ 1 > μ 2 .

Here μ 1 represents the mean number of calories ordered by women when they were eating with other women, and μ 2 represents the mean number of calories ordered by women when they were eating with men.

Note: It does not matter which population we label as 1 or 2, but once we decide, we have to stay consistent throughout the hypothesis test. Since we expect the number of calories to be greater for the women eating with other women, the difference is positive if “women eating with women” is population 1. If you prefer to work with positive numbers, choose the group with the larger expected mean as population 1. This is a good general tip.

Step 2: Collect Data.

As usual, there are two major things to keep in mind when considering the collection of data.

- Samples need to be representative of the population in question.

- Samples need to be random in order to remove or minimize bias.

Representative Samples?

The researchers state their hypothesis in terms of “women.” We did the same. But the researchers gathered data by watching people eat at the HUB Rock Café II on the campus of Indiana University of Pennsylvania during the Spring semester of 2006. Almost all of the women in the data set were white undergraduates between the ages of 18 and 24, so there are some definite limitations on the scope of this study. These limitations will affect our conclusion (and the specific definition of the population means in our hypotheses).

Random Samples?

The observations were collected on February 13, 2006, through February 22, 2006, between 11 a.m. and 7 p.m. We can see that the researchers included both lunch and dinner. They also made observations on all days of the week to ensure that weekly customer patterns did not confound their findings. The authors state that “since the time period for observations and the place where [they] observed students were limited, the sample was a convenience sample.” Despite these limitations, the researchers conducted inference procedures with the data, and the results were published in a reputable journal. We will also conduct inference with this data, and, as the authors did, we include a discussion of the limitations of the study with our conclusion.

Do the data meet the conditions for use of a t-test?

The researchers reported the following sample statistics.

- In a sample of 45 women dining with other women, the average number of calories ordered was 850, and the standard deviation was 252.

- In a sample of 27 women dining with men, the average number of calories ordered was 719, and the standard deviation was 322.

One of the samples has fewer than 30 women. We need to make sure the distribution of calories in this sample is not heavily skewed and has no outliers, but we do not have access to a spreadsheet of the actual data. Since the researchers conducted a t-test with this data, we will assume that the conditions are met. This includes the assumption that the samples are independent.

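To compute the t-test statistic, make sure sample 1 corresponds to population 1. Here our population 1 is “women eating with other women,” so [latex]\bar{x_1} = 850[/latex], [latex]s_1 = 252[/latex], and [latex]n_1 = 45[/latex]. Sample 2 is “women eating with men,” so [latex]\bar{x_2} = 719[/latex], [latex]s_2 = 322[/latex], and [latex]n_2 = 27[/latex].

[latex]T = \displaystyle \frac{(850 - 719) - 0}{\sqrt{\displaystyle \frac{252^2}{45} + \displaystyle \frac{322^2}{27}}} \approx 1.81[/latex]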

Using technology, we determined that the degrees of freedom are about 45 for this data. To find the P-value, we use our familiar simulation of the t-distribution. Since the alternative hypothesis is a “greater than” statement, we look for the area to the right of T = 1.81. The P-value is 0.0385.
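The numbers in this step can be checked with a short script. The sketch below is only an illustration, not the tool used in the course or by the researchers. It assumes the unpooled standard error, which reproduces T = 1.81, and it assumes that "technology" finds the degrees of freedom with the Welch-Satterthwaite approximation. It also replaces the simulation with a numerical integration of the t density. All function names are hypothetical.

```python
import math

def welch_t_and_df(mean1, sd1, n1, mean2, sd2, n2):
    """Two-sample t statistic (H0: mu1 - mu2 = 0) and Welch-Satterthwaite df."""
    v1 = sd1 ** 2 / n1  # estimated variance of sample mean 1
    v2 = sd2 ** 2 / n2  # estimated variance of sample mean 2
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df

def t_density(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_const = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                 - 0.5 * math.log(df * math.pi))
    return math.exp(log_const - (df + 1) / 2 * math.log1p(x * x / df))

def right_tail_area(t, df, steps=2000):
    """P(T > t) via Simpson's rule on [0, |t|] plus symmetry of the density."""
    width = abs(t)
    h = width / steps  # steps must be even for Simpson's rule
    total = t_density(0.0, df) + t_density(width, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_density(i * h, df)
    area_0_to_t = total * h / 3
    return 0.5 - area_0_to_t if t >= 0 else 0.5 + area_0_to_t

# Summary statistics reported for the two samples (assumed inputs).
t_stat, df = welch_t_and_df(850, 252, 45, 719, 322, 27)
p_value = right_tail_area(t_stat, df)
print(f"T = {t_stat:.2f}, df = {df:.1f}, P-value = {p_value:.3f}")
```

The script gives values that round to T = 1.81 and df = 45. Its P-value is close to the 0.0385 reported above; a small difference is expected because the script does not round T and df before finding the tail area.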

Generic Conclusion

The hypotheses for this test are H 0 : μ 1 – μ 2 = 0 and H a : μ 1 – μ 2 > 0. Since the P-value is less than the significance level (0.0385 < 0.05), we reject H 0 and accept H a .

Conclusion in context

At Indiana University of Pennsylvania, the mean number of calories ordered by undergraduate women eating with other women is greater than the mean number of calories ordered by undergraduate women eating with men (P-value = 0.0385).

Comment about Conclusions

In the conclusion above, we did not generalize the findings to all women. Since the samples included only undergraduate women at one university, we included this information in our conclusion. But our conclusion is a cautious statement of the findings. The authors see the results more broadly in the context of theories in the field of social psychology. In the context of these theories, they write, “Our findings support the assertion that meal size is a tool for influencing the impressions of others. For traditional-age, predominantly White college women, diminished meal size appears to be an attempt to assert femininity in groups that include men.” This viewpoint is echoed in the following summary of the study for the general public on National Public Radio (npr.org).

- Both men and women appear to choose larger portions when they eat with women, and both men and women choose smaller portions when they eat in the company of men, according to new research published in the Journal of Applied Social Psychology . The study, conducted among a sample of 127 college students, suggests that both men and women are influenced by unconscious scripts about how to behave in each other’s company. And these scripts change the way men and women eat when they eat together and when they eat apart.

Should we be concerned that the findings of this study are generalized in this way? Perhaps. But the authors of the article address this concern by including the following disclaimer with their findings: “While the results of our research are suggestive, they should be replicated with larger, representative samples. Studies should be done not only with primarily White, middle-class college students, but also with students who differ in terms of race/ethnicity, social class, age, sexual orientation, and so forth.” This is an example of good statistical practice. It is often very difficult to select truly random samples from the populations of interest. Researchers therefore discuss the limitations of their sampling design when they discuss their conclusions.

In the following activities, you will have the opportunity to practice parts of the hypothesis test for a difference in two population means. On the next page, the activities focus on the entire process and also incorporate technology.


Contributors and Attributions

- Concepts in Statistics. Provided by : Open Learning Initiative. Located at : http://oli.cmu.edu . License : CC BY: Attribution


Two Sample t-test: Definition, Formula, and Example

A two sample t-test is used to determine whether or not two population means are equal.

This tutorial explains the following:

- The motivation for performing a two sample t-test.

- The formula to perform a two sample t-test.

- The assumptions that should be met to perform a two sample t-test.

- An example of how to perform a two sample t-test.

Two Sample t-test: Motivation

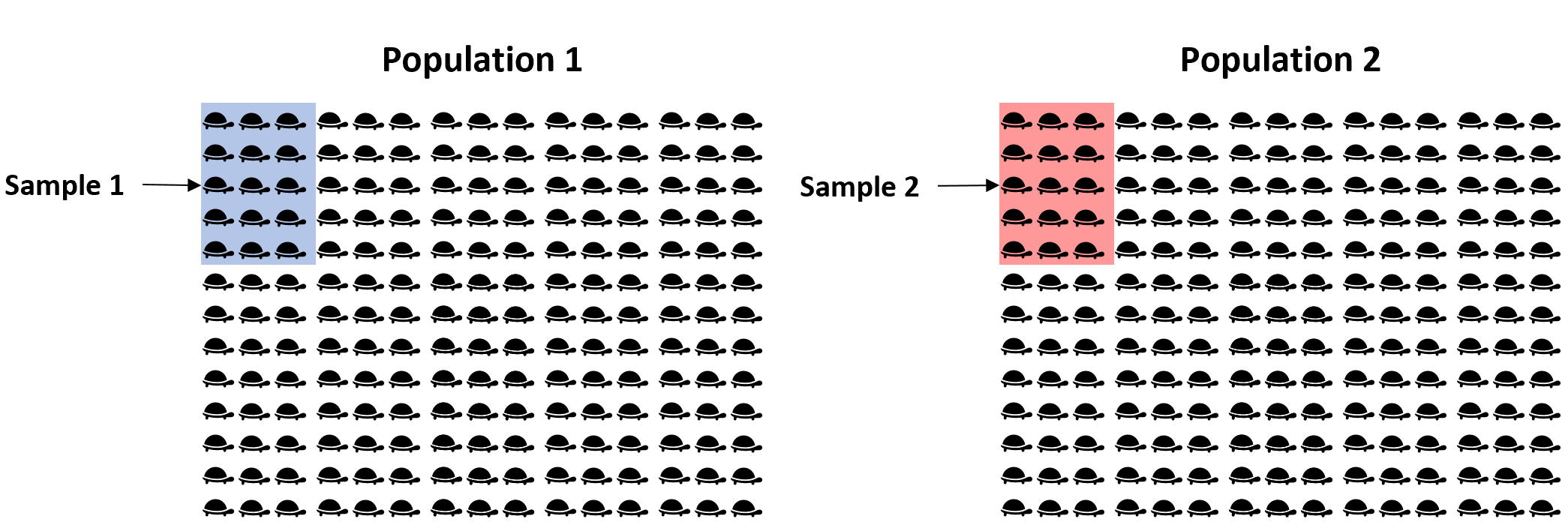

Suppose we want to know whether or not the mean weight between two different species of turtles is equal. Since there are thousands of turtles in each population, it would be too time-consuming and costly to go around and weigh each individual turtle.

Instead, we might take a simple random sample of 15 turtles from each population and use the mean weight in each sample to determine if the mean weight is equal between the two populations:

However, it’s virtually guaranteed that the mean weight between the two samples will be at least a little different. The question is whether or not this difference is statistically significant . Fortunately, a two sample t-test allows us to answer this question.

Two Sample t-test: Formula

A two-sample t-test always uses the following null hypothesis:

- H 0 : μ 1 = μ 2 (the two population means are equal)

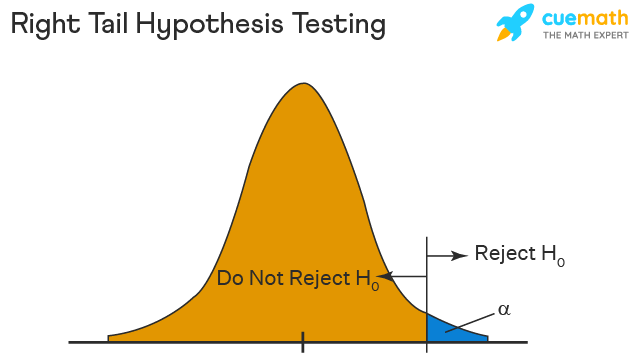

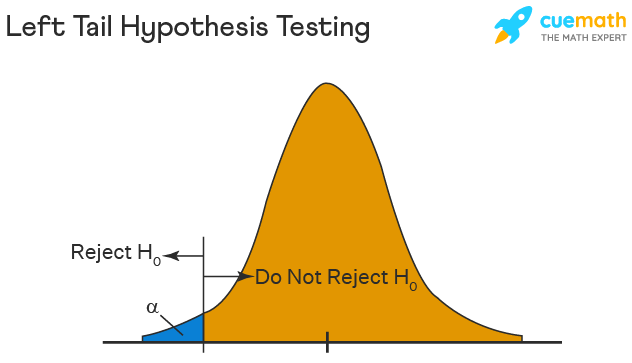

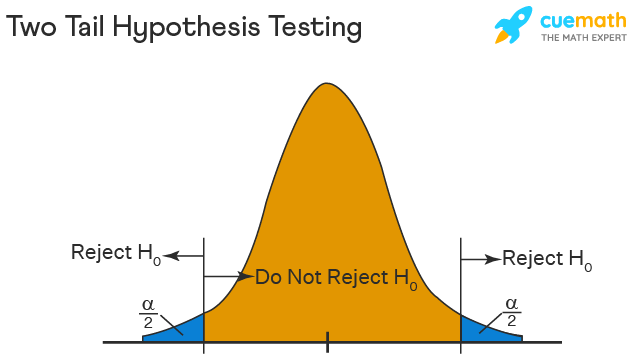

The alternative hypothesis can be either two-tailed, left-tailed, or right-tailed:

- H 1 (two-tailed): μ 1 ≠ μ 2 (the two population means are not equal)

- H 1 (left-tailed): μ 1 < μ 2 (population 1 mean is less than population 2 mean)

- H 1 (right-tailed): μ 1 > μ 2 (population 1 mean is greater than population 2 mean)

We use the following formula to calculate the test statistic t:

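Test statistic: [latex]t = \displaystyle \frac{\bar{x_1} - \bar{x_2}}{s_p \sqrt{\displaystyle \frac{1}{n_1} + \displaystyle \frac{1}{n_2}}}[/latex]

where [latex]\bar{x_1}[/latex] and [latex]\bar{x_2}[/latex] are the sample means, [latex]n_1[/latex] and [latex]n_2[/latex] are the sample sizes, and where [latex]s_p[/latex] is calculated as:

[latex]s_p = \sqrt{\displaystyle \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}}[/latex]

where [latex]s_1^2[/latex] and [latex]s_2^2[/latex] are the sample variances.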

If the p-value that corresponds to the test statistic t with (n 1 +n 2 -2) degrees of freedom is less than your chosen significance level (common choices are 0.10, 0.05, and 0.01) then you can reject the null hypothesis.

Two Sample t-test: Assumptions

For the results of a two sample t-test to be valid, the following assumptions should be met:

- The observations in one sample should be independent of the observations in the other sample.

- The data should be approximately normally distributed.

- The two samples should have approximately the same variance. If this assumption is not met, you should instead perform Welch’s t-test .

- The data in both samples was obtained using a random sampling method .

Two Sample t-test : Example

Suppose we want to know whether or not the mean weight between two different species of turtles is equal. To test this, we will perform a two sample t-test at significance level α = 0.05 using the following steps:

Step 1: Gather the sample data.

Suppose we collect a random sample of turtles from each population with the following information:

- Sample size n 1 = 40

- Sample mean weight x 1 = 300

- Sample standard deviation s 1 = 18.5

- Sample size n 2 = 38

- Sample mean weight x 2 = 305

- Sample standard deviation s 2 = 16.7

Step 2: Define the hypotheses.

We will perform the two sample t-test with the following hypotheses:

- H 0 : μ 1 = μ 2 (the two population means are equal)

- H 1 : μ 1 ≠ μ 2 (the two population means are not equal)

Step 3: Calculate the test statistic t .

First, we will calculate the pooled standard deviation s p :

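[latex]s_p = \sqrt{\displaystyle \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}} = \sqrt{\displaystyle \frac{(40 - 1)18.5^2 + (38 - 1)16.7^2}{40 + 38 - 2}} = 17.647[/latex]

Next, we will calculate the test statistic t :

[latex]t = \displaystyle \frac{\bar{x_1} - \bar{x_2}}{s_p \sqrt{\displaystyle \frac{1}{n_1} + \displaystyle \frac{1}{n_2}}} = \displaystyle \frac{300 - 305}{17.647 \sqrt{\displaystyle \frac{1}{40} + \displaystyle \frac{1}{38}}} = -1.2508[/latex]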
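The arithmetic in Step 3 can be reproduced in a few lines. This is a minimal sketch that uses only the summary statistics from Step 1; the function name `pooled_t_statistic` is hypothetical and is not part of any statistics package.

```python
import math

def pooled_t_statistic(mean1, sd1, n1, mean2, sd2, n2):
    """Pooled standard deviation, t statistic, and df for an equal-variance test."""
    df = n1 + n2 - 2
    # Pooled standard deviation: each sample variance weighted by its df.
    sp = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    # Test statistic under H0: mu1 = mu2.
    t = (mean1 - mean2) / (sp * math.sqrt(1 / n1 + 1 / n2))
    return sp, t, df

# Turtle example: sample 1 (n=40, mean=300, sd=18.5), sample 2 (n=38, mean=305, sd=16.7).
sp, t_stat, df = pooled_t_statistic(300, 18.5, 40, 305, 16.7, 38)
print(f"s_p = {sp:.3f}, t = {t_stat:.4f}, df = {df}")
# prints: s_p = 17.647, t = -1.2508, df = 76
```

Working from summary statistics keeps the sketch independent of the raw measurements, which are not listed in this example.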

Step 4: Calculate the p-value of the test statistic t .

According to the T Score to P Value Calculator , the p-value associated with t = -1.2508 and degrees of freedom = n 1 +n 2 -2 = 40+38-2 = 76 is 0.21484 .

Step 5: Draw a conclusion.

Since this p-value is not less than our significance level α = 0.05, we fail to reject the null hypothesis. We do not have sufficient evidence to say that the mean weight of turtles between these two populations is different.

Note: You can also perform this entire two sample t-test by simply using the Two Sample t-test Calculator .

Additional Resources

The following tutorials explain how to perform a two-sample t-test using different statistical programs:

- How to Perform a Two Sample t-test in Excel

- How to Perform a Two Sample t-test in SPSS

- How to Perform a Two Sample t-test in Stata

- How to Perform a Two Sample t-test in R

- How to Perform a Two Sample t-test in Python

- How to Perform a Two Sample t-test on a TI-84 Calculator

Published by Zach


Inference for Comparing 2 Population Means (HT for 2 Means, independent samples)

More of the good stuff! We will need to know how to label the null and alternative hypothesis, calculate the test statistic, and then reach our conclusion using the critical value method or the p-value method.

The Test Statistic for a Test of 2 Means from Independent Samples:

[latex]t = \displaystyle \frac{(\bar{x_1} - \bar{x_2}) - (\mu_1 - \mu_2)}{\sqrt{\displaystyle \frac{s_1^2}{n_1} + \displaystyle \frac{s_2^2}{n_2}}}[/latex]

What the different symbols mean:

[latex]n_1[/latex] is the sample size for the first group

[latex]n_2[/latex] is the sample size for the second group

[latex]df[/latex], the degrees of freedom, is the smaller of [latex]n_1 - 1[/latex] and [latex]n_2 - 1[/latex]

[latex]\mu_1[/latex] is the population mean from the first group

[latex]\mu_2[/latex] is the population mean from the second group

[latex]\bar{x_1}[/latex] is the sample mean for the first group

[latex]\bar{x_2}[/latex] is the sample mean for the second group

[latex]s_1[/latex] is the sample standard deviation for the first group

[latex]s_2[/latex] is the sample standard deviation for the second group

[latex]\alpha[/latex] is the significance level , usually given within the problem, or if not given, we assume it to be 5% or 0.05

Assumptions when conducting a Test for 2 Means from Independent Samples:

- We do not know the population standard deviations, and we do not assume they are equal

- The two samples or groups are independent

- Both samples are simple random samples

- Both populations are Normally distributed OR both samples are large ([latex]n_1 > 30[/latex] and [latex]n_2 > 30[/latex])

Steps to conduct the Test for 2 Means from Independent Samples:

- Identify all the symbols listed above (all the stuff that will go into the formulas). This includes [latex]n_1[/latex] and [latex]n_2[/latex], [latex]df[/latex], [latex]\mu_1[/latex] and [latex]\mu_2[/latex], [latex]\bar{x_1}[/latex] and [latex]\bar{x_2}[/latex], [latex]s_1[/latex] and [latex]s_2[/latex], and [latex]\alpha[/latex]

- Identify the null and alternative hypotheses

- Calculate the test statistic, [latex]t = \displaystyle \frac{(\bar{x_1} - \bar{x_2}) - (\mu_1 - \mu_2)}{\sqrt{\displaystyle \frac{s_1^2}{n_1} + \displaystyle \frac{s_2^2}{n_2}}}[/latex]

- Find the critical value(s) OR the p-value OR both

- Apply the Decision Rule

- Write up a conclusion for the test

Example 1: Study on the effectiveness of stents for stroke patients [1]

In this study , researchers randomly assigned stroke patients to two groups: one received the current standard care (control) and the other received a stent surgery in addition to the standard care (stent treatment). If the stents work, the treatment group should have a lower average disability score . Do the results give convincing statistical evidence that the stent treatment reduces the average disability from stroke?

Since we are being asked for convincing statistical evidence, a hypothesis test should be conducted. In this case, we are dealing with averages from two samples or groups (the patients with stent treatment and patients receiving the standard care), so we will conduct a Test of 2 Means.

- [latex]n_1 = 98[/latex] is the sample size for the first group

- [latex]n_2 = 93[/latex] is the sample size for the second group

- [latex]df[/latex], the degrees of freedom, is the smaller of [latex]98 - 1 = 97[/latex] and [latex]93 - 1 = 92[/latex], so [latex]df = 92[/latex]

- [latex]\bar{x_1} = 2.26[/latex] is the sample mean for the first group

- [latex]\bar{x_2} = 3.23[/latex] is the sample mean for the second group

- [latex]s_1 = 1.78[/latex] is the sample standard deviation for the first group

- [latex]s_2 = 1.78[/latex] is the sample standard deviation for the second group

- [latex]\alpha = 0.05[/latex] (we were not told a specific value in the problem, so we are assuming it is 5%)

- One additional assumption we extend from the null hypothesis is that [latex]\mu_1 - \mu_2 = 0[/latex]; this means that in our formula, those variables cancel out

- [latex]H_{0}: \mu_1 = \mu_2[/latex]

- [latex]H_{A}: \mu_1 < \mu_2[/latex]

- [latex]t = \displaystyle \frac{(\bar{x_1} - \bar{x_2}) - (\mu_1 - \mu_2)}{\sqrt{\displaystyle \frac{s_1^2}{n_1} + \displaystyle \frac{s_2^2}{n_2}}} = \displaystyle \frac{(2.26 - 3.23) - 0}{\sqrt{\displaystyle \frac{1.78^2}{98} + \displaystyle \frac{1.78^2}{93}}} = -3.76[/latex]

- StatDisk : We can conduct this test using StatDisk. The nice thing about StatDisk is that it will also compute the test statistic. From the main menu above we click on Analysis, Hypothesis Testing, and then Mean Two Independent Samples. From there enter the 0.05 significance, along with the specific values as outlined in the picture below in Step 2. Notice the alternative hypothesis is the [latex]<[/latex] option. Enter the sample size, mean, and standard deviation for each group, and make sure that unequal variances is selected. Now we click on Evaluate. If you check the values, the test statistic is reported in the Step 3 display, as well as the P-Value of 0.00011.

- Applying the Decision Rule: We now compare the p-value to our significance level, which is 0.05. If the p-value is less than or equal to the alpha level, we have enough evidence for our claim; otherwise, we do not. Here, the p-value is [latex]0.00011[/latex], which is definitely smaller than [latex]\alpha = 0.05[/latex], so we have enough evidence for the alternative hypothesis…but what does this mean?

- Conclusion: Because our p-value of [latex]0.00011[/latex] is less than our [latex]\alpha[/latex] level of [latex]0.05[/latex], we reject [latex]H_{0}[/latex]. We have convincing statistical evidence that the stent treatment reduces the average disability from stroke.
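The stent example can be retraced in code as a check on the arithmetic. The sketch below is an illustration under stated assumptions: the function names are hypothetical, the degrees of freedom follow the "smaller of n1 - 1 and n2 - 1" rule from the symbol list, and the P-value is simply the value reported by StatDisk above rather than something the script computes.

```python
import math

def unpooled_t(mean1, sd1, n1, mean2, sd2, n2, null_diff=0.0):
    """Test statistic for two means from independent samples (variances not pooled)."""
    standard_error = math.sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    return ((mean1 - mean2) - null_diff) / standard_error

def conservative_df(n1, n2):
    """Degrees of freedom as the smaller of n1 - 1 and n2 - 1."""
    return min(n1 - 1, n2 - 1)

# Group 1 and group 2 summary statistics as listed in Example 1.
t_stat = unpooled_t(2.26, 1.78, 98, 3.23, 1.78, 93)
df = conservative_df(98, 93)

alpha = 0.05
p_value = 0.00011  # reported by StatDisk in the example, not computed here
reject_h0 = p_value <= alpha  # decision rule: reject H0 when p-value <= alpha

print(f"t = {t_stat:.2f}, df = {df}, reject H0: {reject_h0}")
# prints: t = -3.76, df = 92, reject H0: True
```

Keeping `null_diff` as a parameter mirrors the [latex](\mu_1 - \mu_2)[/latex] term in the formula, which is zero under the null hypothesis used here.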

Example 2: Home Run Distances

In 1998, Sammy Sosa and Mark McGwire (2 players in Major League Baseball) were on pace to set a new home run record. At the end of the season McGwire ended up with 70 home runs, and Sosa ended up with 66. The home run distances were recorded and compared (sometimes a player’s home run distance is used to measure their “power”). Do the results give convincing statistical evidence that the home run distances are different from each other? Who would you say “hit the ball farther” in this comparison?

Since we are being asked for convincing statistical evidence, a hypothesis test should be conducted. In this case, we are dealing with averages from two samples or groups (the home run distances), so we will conduct a Test of 2 Means.

- [latex]n_1 = 70[/latex] is the sample size for the first group

- [latex]n_2 = 66[/latex] is the sample size for the second group

- [latex]df[/latex], the degrees of freedom, is the smaller of [latex]70 - 1 = 69[/latex] and [latex]66 - 1 = 65[/latex], so [latex]df = 65[/latex]

- [latex]\bar{x_1} = 418.5[/latex] is the sample mean for the first group

- [latex]\bar{x_2} = 404.8[/latex] is the sample mean for the second group

- [latex]s_1 = 45.5[/latex] is the sample standard deviation for the first group

- [latex]s_2 = 35.7[/latex] is the sample standard deviation for the second group

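- [latex]\alpha = 0.05[/latex] (we were not told a specific value in the problem, so we are assuming it is 5%)

- [latex]H_{0}: \mu_1 = \mu_2[/latex]

- [latex]H_{A}: \mu_1 \neq \mu_2[/latex]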

- [latex]t = \displaystyle \frac{(\bar{x_1} - \bar{x_2}) - (\mu_1 - \mu_2)}{\sqrt{\displaystyle \frac{s_1^2}{n_1} + \displaystyle \frac{s_2^2}{n_2}}} = \displaystyle \frac{(418.5 - 404.8) - 0}{\sqrt{\displaystyle \frac{45.5^2}{70} + \displaystyle \frac{35.7^2}{66}}} = 1.96[/latex]

- StatDisk : We can conduct this test using StatDisk. The nice thing about StatDisk is that it will also compute the test statistic. From the main menu above we click on Analysis, Hypothesis Testing, and then Mean Two Independent Samples. From there enter the 0.05 significance, along with the specific values as outlined in the picture below in Step 2. Notice the alternative hypothesis is the [latex]\neq[/latex] option. Enter the sample size, mean, and standard deviation for each group, and make sure that unequal variances is selected. Now we click on Evaluate. If you check the values, the test statistic is reported in the Step 3 display, as well as the P-Value of 0.05221.

- Applying the Decision Rule: We now compare the p-value to our significance level, which is 0.05. If the p-value is less than or equal to the alpha level, we have enough evidence for our claim; otherwise, we do not. Here, the p-value is [latex]0.05221[/latex], which is larger than [latex]\alpha = 0.05[/latex], so we do not have enough evidence for the alternative hypothesis…but what does this mean?

- Conclusion: Because our p-value of [latex]0.05221[/latex] is larger than our [latex]\alpha[/latex] level of [latex]0.05[/latex], we fail to reject [latex]H_{0}[/latex]. We do not have convincing statistical evidence that the home run distances are different.

- Follow-up commentary: But what does this mean? There actually was a difference, right? If we take McGwire’s average and subtract Sosa’s average we get a difference of 13.7. What this result indicates is that the difference is not statistically significant; it could be due more to random chance than something meaningful. Other factors, such as sample size, could also be a determining factor (with a larger sample size, the difference may have been more meaningful).

- Adapted from the Skew The Script curriculum (skewthescript.org), licensed under CC BY-NC-SA 4.0

Basic Statistics Copyright © by Allyn Leon is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License , except where otherwise noted.


Two-Sample t-test for Comparing Two Means


Requirements : Two normally distributed but independent populations, σ is unknown

Hypothesis test

An experiment is conducted to determine whether intensive tutoring (covering a great deal of material in a fixed amount of time) is more effective than paced tutoring (covering less material in the same amount of time). Two randomly chosen groups are tutored separately and then administered proficiency tests. Use a significance level of α = 0.05.

Let μ 1 represent the population mean for the intensive tutoring group and μ 2 represent the population mean for the paced tutoring group.

null hypothesis : H 0 : μ 1 = μ 2

or H 0 : μ 1 – μ 2 = 0

alternative hypothesis : H a : μ 1 > μ 2

or: H a : μ 1 – μ 2 > 0

The degrees of freedom parameter is the smaller of (12 – 1) and (10 – 1), or 9. Because this is a one-tailed test, the alpha level (0.05) is not divided by two. The next step is to look up [latex]t_{.05,9}[/latex] in the t-table (Table 3 in "Statistics Tables"), which gives a critical value of 1.833. The computed t of 1.166 does not exceed the tabled value, so the null hypothesis cannot be rejected. This test has not provided statistically significant evidence that intensive tutoring is superior to paced tutoring.

Estimate a 90 percent confidence interval for the difference between the number of raisins per box in two brands of breakfast cereal.

The interval is (–2.81, 19.81).

You can be 90 percent confident that Brand A cereal has between 2.81 fewer and 19.81 more raisins per box than Brand B. The fact that the interval contains 0 means that if you had performed a test of the hypothesis that the two population means are different (using the same significance level), you would not have been able to reject the null hypothesis of no difference.
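The calculation behind this interval is not shown above. As a hedged reminder, and not the source's own working, the usual unpooled form of a confidence interval for a difference in two means is sketched below; treating this example as unpooled is an assumption.

```latex
(\bar{x}_1 - \bar{x}_2) \;\pm\; t_{\alpha/2,\,df}\,\sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}
```

For the reported interval (–2.81, 19.81), the center is (–2.81 + 19.81)/2 = 8.50 raisins and the margin of error is 19.81 – 8.50 = 11.31 raisins. For a 90 percent interval, α/2 = 0.05.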

If the two population distributions can be assumed to have the same variance—and, therefore, the same standard deviation— s 1 and s 2 can be pooled together, each weighted by the number of cases in each sample. Although using pooled variance in a t‐ test is generally more likely to yield significant results than using separate variances, it is often hard to know whether the variances of the two populations are equal. For this reason, the pooled variance method should be used with caution. The formula for the pooled estimator of σ 2 is

$$s_p^2 = \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}$$

where s 1 and s 2 are the standard deviations of the two samples and n 1 and n 2 are the sizes of the two samples.

The formula for comparing the means of two populations using pooled variance is

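$$t = \frac{\bar{x}_1 - \bar{x}_2}{\sqrt{s_p^2\left(\frac{1}{n_1} + \frac{1}{n_2}\right)}}$$

with degrees of freedom df = n 1 + n 2 – 2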

Does right‐ or left‐handedness affect how fast people type? Random samples of students from a typing class are given a typing speed test (words per minute), and the results are compared. Significance level for the test: 0.10. Because you are looking for a difference between the groups in either direction (right‐handed faster than left, or vice versa), this is a two‐tailed test.

null hypothesis : H 0 : μ 1 = μ 2

or: H 0 : μ 1 – μ 2 = 0

alternative hypothesis : H a : μ 1 ≠ μ 2

or: H a : μ 1 – μ 2 ≠ 0

First, calculate the pooled variance:

Next, calculate the t‐ value:

The degrees‐of‐freedom parameter is 16 + 9 – 2, or 23. This test is a two‐tailed one, so you divide the alpha level (0.10) by two. Next, you look up t .05,23 in the t‐table (Table 3 in "Statistics Tables"), which gives a critical value of 1.714. This value is larger than the absolute value of the computed t of –1.598, so the null hypothesis of equal population means cannot be rejected. This test provides no statistically significant evidence that right‐ or left‐handedness has any effect on typing speed.


Two Sample t-test: Definition, Formula, and Example

A two sample t-test is used to determine whether or not two population means are equal.

This tutorial explains the following:

- The motivation for performing a two sample t-test.

- The formula to perform a two sample t-test.

- The assumptions that should be met to perform a two sample t-test.

- An example of how to perform a two sample t-test.

Two Sample t-test: Motivation

Suppose we want to know whether or not the mean weight between two different species of turtles is equal. Since there are thousands of turtles in each population, it would be too time-consuming and costly to go around and weigh each individual turtle.

Instead, we might take a simple random sample of 15 turtles from each population and use the mean weight in each sample to determine if the mean weight is equal between the two populations.

However, it’s virtually guaranteed that the mean weight between the two samples will be at least a little different. The question is whether or not this difference is statistically significant . Fortunately, a two sample t-test allows us to answer this question.

Two Sample t-test: Formula

A two-sample t-test always uses the following null hypothesis:

- H 0 : μ 1 = μ 2 (the two population means are equal)

The alternative hypothesis can be either two-tailed, left-tailed, or right-tailed:

- H 1 (two-tailed): μ 1 ≠ μ 2 (the two population means are not equal)

- H 1 (left-tailed): μ 1 < μ 2 (population 1 mean is less than population 2 mean)

- H 1 (right-tailed): μ 1 > μ 2 (population 1 mean is greater than population 2 mean)

We use the following formula to calculate the test statistic t:

Test statistic: $$t = \frac{\bar{x}_1 - \bar{x}_2}{s_p\sqrt{\frac{1}{n_1} + \frac{1}{n_2}}}$$

where $\bar{x}_1$ and $\bar{x}_2$ are the sample means, $n_1$ and $n_2$ are the sample sizes, and where $s_p$ is calculated as:

$$s_p = \sqrt{\frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}}$$

where $s_1^2$ and $s_2^2$ are the sample variances.

If the p-value that corresponds to the test statistic t with (n 1 +n 2 -2) degrees of freedom is less than your chosen significance level (common choices are 0.10, 0.05, and 0.01) then you can reject the null hypothesis.

Two Sample t-test: Assumptions

For the results of a two sample t-test to be valid, the following assumptions should be met:

- The observations in one sample should be independent of the observations in the other sample.

- The data should be approximately normally distributed.

- The two samples should have approximately the same variance. If this assumption is not met, you should instead perform Welch’s t-test .

- The data in both samples was obtained using a random sampling method .
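When the equal-variance assumption is doubtful, the list above points to Welch's t-test. As a rough sketch of what that alternative computes, the block below uses the standard Welch statistic and the Welch–Satterthwaite approximation for the degrees of freedom; the function name `welch_t` is hypothetical, and the summary statistics are borrowed from this tutorial's turtle example.

```python
import math

def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """Welch's t statistic and approximate degrees of freedom.

    Unlike the pooled test, each sample keeps its own variance estimate.
    """
    v1 = sd1 ** 2 / n1   # squared standard error contributed by sample 1
    v2 = sd2 ** 2 / n2   # squared standard error contributed by sample 2
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    # Welch-Satterthwaite approximation; usually not a whole number.
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df

t_welch, df_welch = welch_t(300, 18.5, 40, 305, 16.7, 38)
print(round(t_welch, 4), round(df_welch, 2))
```

With sample standard deviations this similar, the Welch statistic and degrees of freedom land very close to the pooled values, so the choice of test matters little for these data.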

Two Sample t-test : Example

Suppose we want to know whether or not the mean weight between two different species of turtles is equal. To test this, we will perform a two sample t-test at significance level α = 0.05 using the following steps:

Step 1: Gather the sample data.

Suppose we collect a random sample of turtles from each population with the following information:

- Sample size n 1 = 40

- Sample mean weight x 1 = 300

- Sample standard deviation s 1 = 18.5

- Sample size n 2 = 38

- Sample mean weight x 2 = 305

- Sample standard deviation s 2 = 16.7

Step 2: Define the hypotheses.

We will perform the two sample t-test with the following hypotheses:

- H 0 : μ 1 = μ 2 (the two population means are equal)

- H 1 : μ 1 ≠ μ 2 (the two population means are not equal)

Step 3: Calculate the test statistic t .

First, we will calculate the pooled standard deviation s p :

$$s_p = \sqrt{\frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}} = \sqrt{\frac{(40 - 1)\,18.5^2 + (38 - 1)\,16.7^2}{40 + 38 - 2}} = 17.647$$

Next, we will calculate the test statistic t :

$$t = \frac{\bar{x}_1 - \bar{x}_2}{s_p\sqrt{\frac{1}{n_1} + \frac{1}{n_2}}} = \frac{300 - 305}{17.647\sqrt{\frac{1}{40} + \frac{1}{38}}} = -1.2508$$
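The arithmetic in Step 3 can be reproduced with a short script. This is only a sketch with illustrative function names (`pooled_sd`, `pooled_t`); the inputs are the summary statistics from Step 1.

```python
import math

def pooled_sd(sd1, n1, sd2, n2):
    """Pooled standard deviation, weighting each variance by its df."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return math.sqrt(pooled_var)

def pooled_t(mean1, sd1, n1, mean2, sd2, n2):
    """Two-sample t statistic under the equal-variance assumption."""
    sp = pooled_sd(sd1, n1, sd2, n2)
    return (mean1 - mean2) / (sp * math.sqrt(1 / n1 + 1 / n2))

sp = pooled_sd(18.5, 40, 16.7, 38)
t_stat = pooled_t(300, 18.5, 40, 305, 16.7, 38)
print(round(sp, 3), round(t_stat, 4))
```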

Step 4: Calculate the p-value of the test statistic t .

According to the T Score to P Value Calculator , the p-value associated with t = -1.2508 and degrees of freedom = n 1 +n 2 -2 = 40+38-2 = 76 is 0.21484 .
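If an online calculator is not at hand, the p-value can be approximated directly from the t density. The sketch below is one possible approach using only the standard library: it integrates the density numerically with Simpson's rule. The function names are illustrative, and a statistics package would normally be used instead.

```python
import math

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

def two_sided_p(t, df, steps=2000):
    """Two-tailed p-value: P(|T| >= |t|), via Simpson's rule on [0, |t|]."""
    t = abs(t)
    h = t / steps
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central_half = total * h / 3        # area between 0 and |t|
    return 2 * (0.5 - central_half)     # both tails beyond |t|

p_value = two_sided_p(-1.2508, 40 + 38 - 2)
print(round(p_value, 4))
```

The result should agree with the calculator's value to about three decimal places.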

Step 5: Draw a conclusion.

Since this p-value is not less than our significance level α = 0.05, we fail to reject the null hypothesis. We do not have sufficient evidence to say that the mean weight of turtles between these two populations is different.

Note: You can also perform this entire two sample t-test by simply using the Two Sample t-test Calculator .

Additional Resources

The following tutorials explain how to perform a two-sample t-test using different statistical programs:

- How to Perform a Two Sample t-test in Excel

- How to Perform a Two Sample t-test in SPSS

- How to Perform a Two Sample t-test in Stata

- How to Perform a Two Sample t-test in R

- How to Perform a Two Sample t-test in Python

- How to Perform a Two Sample t-test on a TI-84 Calculator


Hypothesis Testing for Means & Proportions

Lisa Sullivan, PhD

Professor of Biostatistics

Boston University School of Public Health

Introduction

This is the first of three modules that address the second area of statistical inference, hypothesis testing, in which a specific statement or hypothesis is generated about a population parameter, and sample statistics are used to assess the likelihood that the hypothesis is true. The hypothesis is based on available information and the investigator's belief about the population parameters. The process of hypothesis testing involves setting up two competing hypotheses, the null hypothesis and the alternate hypothesis. One selects a random sample (or multiple samples when there are more comparison groups), computes summary statistics and then assesses the likelihood that the sample data support the research or alternative hypothesis. Similar to estimation, the process of hypothesis testing is based on probability theory and the Central Limit Theorem.

This module will focus on hypothesis testing for means and proportions. The next two modules in this series will address analysis of variance and chi-squared tests.

Learning Objectives

After completing this module, the student will be able to:

- Define null and research hypothesis, test statistic, level of significance and decision rule

- Distinguish between Type I and Type II errors and discuss the implications of each

- Explain the difference between one and two sided tests of hypothesis

- Estimate and interpret p-values

- Explain the relationship between confidence interval estimates and p-values in drawing inferences

- Differentiate hypothesis testing procedures based on type of outcome variable and number of samples

Introduction to Hypothesis Testing

Techniques for Hypothesis Testing

The techniques for hypothesis testing depend on

- the type of outcome variable being analyzed (continuous, dichotomous, discrete)

- the number of comparison groups in the investigation

- whether the comparison groups are independent (i.e., physically separate such as men versus women) or dependent (i.e., matched or paired such as pre- and post-assessments on the same participants).

In estimation we focused explicitly on techniques for one and two samples and discussed estimation for a specific parameter (e.g., the mean or proportion of a population), for differences (e.g., difference in means, the risk difference) and ratios (e.g., the relative risk and odds ratio). Here we will focus on procedures for one and two samples when the outcome is either continuous (and we focus on means) or dichotomous (and we focus on proportions).

General Approach: A Simple Example

The Centers for Disease Control (CDC) reported on trends in weight, height and body mass index from the 1960's through 2002. 1 The general trend was that Americans were much heavier and slightly taller in 2002 as compared to 1960; both men and women gained approximately 24 pounds, on average, between 1960 and 2002. In 2002, the mean weight for men was reported at 191 pounds. Suppose that an investigator hypothesizes that weights are even higher in 2006 (i.e., that the trend continued over the subsequent 4 years). The research hypothesis is that the mean weight in men in 2006 is more than 191 pounds. The null hypothesis is that there is no change in weight, and therefore the mean weight is still 191 pounds in 2006.

In order to test the hypotheses, we select a random sample of American males in 2006 and measure their weights. Suppose we have resources available to recruit n=100 men into our sample. We weigh each participant and compute summary statistics on the sample data. Suppose in the sample we determine the following:

Do the sample data support the null or research hypothesis? The sample mean of 197.1 is numerically higher than 191. However, is this difference more than would be expected by chance? In hypothesis testing, we assume that the null hypothesis holds until proven otherwise. We therefore need to determine the likelihood of observing a sample mean of 197.1 or higher when the true population mean is 191 (i.e., if the null hypothesis is true or under the null hypothesis). We can compute this probability using the Central Limit Theorem. Specifically,

(Notice that we use the sample standard deviation in computing the Z score. This is generally an appropriate substitution as long as the sample size is large, n > 30.) Thus, there is less than a 1% probability of observing a sample mean as large as 197.1 when the true population mean is 191. Do you think that the null hypothesis is likely true? Based on how unlikely it is to observe a sample mean of 197.1 under the null hypothesis (i.e., <1% probability), we might infer, from our data, that the null hypothesis is probably not true.

Suppose that the sample data had turned out differently. Suppose that we instead observed the following in 2006:

How likely is it to observe a sample mean of 192.1 or higher when the true population mean is 191 (i.e., if the null hypothesis is true)? We can again compute this probability using the Central Limit Theorem. Specifically,

There is a 33.4% probability of observing a sample mean as large as 192.1 when the true population mean is 191. Do you think that the null hypothesis is likely true?

Neither of the sample means that we obtained allows us to know with certainty whether the null hypothesis is true or not. However, our computations suggest that, if the null hypothesis were true, the probability of observing a sample mean >197.1 is less than 1%. In contrast, if the null hypothesis were true, the probability of observing a sample mean >192.1 is about 33%. We can't know whether the null hypothesis is true, but the sample that provided a mean value of 197.1 provides much stronger evidence in favor of rejecting the null hypothesis, than the sample that provided a mean value of 192.1. Note that this does not mean that a sample mean of 192.1 indicates that the null hypothesis is true; it just doesn't provide compelling evidence to reject it.
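The two tail probabilities discussed above can be reproduced with the standard normal distribution. The sketch below makes one loudly labeled assumption: the sample standard deviation does not appear in the surviving text, so `ASSUMED_SD = 25.6` is a value back-derived from the reported Z score of 2.38 with n = 100, not a figure taken from the source. The function name is illustrative.

```python
from math import sqrt
from statistics import NormalDist

def upper_tail_probability(sample_mean, null_mean, sd, n):
    """Z score and P(sample mean >= observed) when the null mean is true."""
    z = (sample_mean - null_mean) / (sd / sqrt(n))
    return z, 1 - NormalDist().cdf(z)

# ASSUMPTION: s = 25.6 is inferred from the reported Z scores, not quoted
# from the text.
ASSUMED_SD = 25.6

z1, p1 = upper_tail_probability(197.1, 191, ASSUMED_SD, 100)
z2, p2 = upper_tail_probability(192.1, 191, ASSUMED_SD, 100)
print(round(z1, 2), round(p1, 4))
print(round(z2, 2), round(p2, 4))
```

Under that assumption the first sample gives a probability below 1% and the second a probability of roughly one third, matching the contrast drawn in the text.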

In essence, hypothesis testing is a procedure to compute a probability that reflects the strength of the evidence (based on a given sample) for rejecting the null hypothesis. In hypothesis testing, we determine a threshold or cut-off point (called the critical value) to decide when to believe the null hypothesis and when to believe the research hypothesis. It is important to note that it is possible to observe any sample mean when the null hypothesis is true (in this example, when the population mean equals 191), but some sample means are very unlikely. Based on the two samples above it would seem reasonable to believe the research hypothesis when x̄ = 197.1, but to believe the null hypothesis when x̄ = 192.1. What we need is a threshold value such that if x̄ is above that threshold then we believe that H 1 is true and if x̄ is below that threshold then we believe that H 0 is true. The difficulty in determining a threshold for x̄ is that it depends on the scale of measurement. In this example, the threshold, sometimes called the critical value, might be 195 (i.e., if the sample mean is 195 or more then we believe that H 1 is true and if the sample mean is less than 195 then we believe that H 0 is true). If we were instead interested in assessing an increase in blood pressure over time, the critical value would be different because blood pressures are measured in millimeters of mercury (mmHg) rather than in pounds. In the following we will explain how the critical value is determined and how we handle the issue of scale.

First, to address the issue of scale in determining the critical value, we convert our sample data (in particular the sample mean) into a Z score. We know from the module on probability that the center of the Z distribution is zero and extreme values are those that exceed 2 or fall below -2. Z scores above 2 and below -2 represent approximately 5% of all Z values. If the observed sample mean is close to the mean specified in H 0 (here μ = 191), then Z will be close to zero. If the observed sample mean is much larger than the mean specified in H 0 , then Z will be large.

In hypothesis testing, we select a critical value from the Z distribution. This is done by first determining what is called the level of significance, denoted α ("alpha"). What we are doing here is drawing a line at extreme values. The level of significance is the probability that we reject the null hypothesis (in favor of the alternative) when it is actually true and is also called the Type I error rate.

α = Level of significance = P(Type I error) = P(Reject H 0 | H 0 is true).

Because α is a probability, it ranges between 0 and 1. The most commonly used value in the medical literature for α is 0.05, or 5%. Thus, if an investigator selects α=0.05, then they are allowing a 5% probability of incorrectly rejecting the null hypothesis in favor of the alternative when the null is in fact true. Depending on the circumstances, one might choose to use a level of significance of 1% or 10%. For example, if an investigator wanted to reject the null only if there were even stronger evidence than that ensured with α=0.05, they could choose α=0.01 as their level of significance. The typical values for α are 0.01, 0.05 and 0.10, with α=0.05 the most commonly used value.

Suppose in our weight study we select α=0.05. We need to determine the value of Z that holds 5% of the values above it (see below).

The critical value of Z for α =0.05 is Z = 1.645 (i.e., 5% of the distribution is above Z=1.645). With this value we can set up what is called our decision rule for the test. The rule is to reject H 0 if the Z score is 1.645 or more.

With the first sample we have

Because 2.38 > 1.645, we reject the null hypothesis. (The same conclusion can be drawn by comparing the 0.0087 probability of observing a sample mean as extreme as 197.1 to the level of significance of 0.05. If the observed probability is smaller than the level of significance we reject H 0 ). Because the Z score exceeds the critical value, we conclude that the mean weight for men in 2006 is more than 191 pounds, the value reported in 2002. If we observed the second sample (i.e., sample mean =192.1), we would not be able to reject the null hypothesis because the Z score is 0.43 which is not in the rejection region (i.e., the region in the tail end of the curve above 1.645). With the second sample we do not have sufficient evidence (because we set our level of significance at 5%) to conclude that weights have increased. Again, the same conclusion can be reached by comparing probabilities. The probability of observing a sample mean as extreme as 192.1 is 33.4% which is not below our 5% level of significance.

Hypothesis Testing: Upper-, Lower-, and Two-Tailed Tests

The procedure for hypothesis testing is based on the ideas described above. Specifically, we set up competing hypotheses, select a random sample from the population of interest and compute summary statistics. We then determine whether the sample data supports the null or alternative hypotheses. The procedure can be broken down into the following five steps.

- Step 1. Set up hypotheses and select the level of significance α.

H 0 : Null hypothesis (no change, no difference);

H 1 : Research hypothesis (investigator's belief); α =0.05

- Step 2. Select the appropriate test statistic.

The test statistic is a single number that summarizes the sample information. An example of a test statistic is the Z statistic computed as follows:

$$Z = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}$$

When the sample size is small, we will use t statistics (just as we did when constructing confidence intervals for small samples). As we present each scenario, alternative test statistics are provided along with conditions for their appropriate use.

- Step 3. Set up decision rule.

The decision rule is a statement that tells under what circumstances to reject the null hypothesis. The decision rule is based on specific values of the test statistic (e.g., reject H 0 if Z > 1.645). The decision rule for a specific test depends on 3 factors: the research or alternative hypothesis, the test statistic and the level of significance. Each is discussed below.

- The decision rule depends on whether an upper-tailed, lower-tailed, or two-tailed test is proposed. In an upper-tailed test the decision rule has investigators reject H 0 if the test statistic is larger than the critical value. In a lower-tailed test the decision rule has investigators reject H 0 if the test statistic is smaller than the critical value. In a two-tailed test the decision rule has investigators reject H 0 if the test statistic is extreme, either larger than an upper critical value or smaller than a lower critical value.

- The exact form of the test statistic is also important in determining the decision rule. If the test statistic follows the standard normal distribution (Z), then the decision rule will be based on the standard normal distribution. If the test statistic follows the t distribution, then the decision rule will be based on the t distribution. The appropriate critical value will be selected from the t distribution again depending on the specific alternative hypothesis and the level of significance.

- The third factor is the level of significance. The level of significance which is selected in Step 1 (e.g., α =0.05) dictates the critical value. For example, in an upper tailed Z test, if α =0.05 then the critical value is Z=1.645.

The following figures illustrate the rejection regions defined by the decision rule for upper-, lower- and two-tailed Z tests with α=0.05. Notice that the rejection regions are in the upper, lower and both tails of the curves, respectively. The decision rules are written below each figure.

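Rejection Region for Upper-Tailed Z Test (H 1 : μ > μ 0 ) with α =0.05

The decision rule is: Reject H 0 if Z > 1.645.

Rejection Region for Lower-Tailed Z Test (H 1 : μ < μ 0 ) with α =0.05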

The decision rule is: Reject H 0 if Z < -1.645.

Rejection Region for Two-Tailed Z Test (H 1 : μ ≠ μ 0 ) with α =0.05

The decision rule is: Reject H 0 if Z < -1.960 or if Z > 1.960.
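The critical values in these decision rules come from the inverse of the standard normal distribution. As a minimal sketch, the block below derives them with Python's standard library and wraps the three rules in a hypothetical helper, `reject_h0`, whose name and `tail` labels are illustrative only.

```python
from statistics import NormalDist

std_normal = NormalDist()   # mean 0, standard deviation 1
alpha = 0.05

upper_crit = std_normal.inv_cdf(1 - alpha)        # upper-tailed test
lower_crit = std_normal.inv_cdf(alpha)            # lower-tailed test
two_tail_crit = std_normal.inv_cdf(1 - alpha / 2) # two-tailed test

def reject_h0(z, tail, alpha=0.05):
    """Apply the Z-test decision rule for the given alternative."""
    if tail == "upper":
        return z > std_normal.inv_cdf(1 - alpha)
    if tail == "lower":
        return z < std_normal.inv_cdf(alpha)
    if tail == "two-sided":
        return abs(z) > std_normal.inv_cdf(1 - alpha / 2)
    raise ValueError("tail must be 'upper', 'lower', or 'two-sided'")

print(round(upper_crit, 3), round(lower_crit, 3), round(two_tail_crit, 3))
```

Note that a two-tailed test splits α across both tails, which is why its critical value is larger in magnitude than the one-tailed value.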

The complete table of critical values of Z for upper, lower and two-tailed tests can be found in the table of Z values to the right in "Other Resources."

Critical values of t for upper, lower and two-tailed tests can be found in the table of t values in "Other Resources."

- Step 4. Compute the test statistic.

Here we compute the test statistic by substituting the observed sample data into the test statistic identified in Step 2.

- Step 5. Conclusion.

The final conclusion is made by comparing the test statistic (which is a summary of the information observed in the sample) to the decision rule. The final conclusion will be either to reject the null hypothesis (because the sample data are very unlikely if the null hypothesis is true) or not to reject the null hypothesis (because the sample data are not very unlikely).

If the null hypothesis is rejected, then an exact significance level is computed to describe the likelihood of observing the sample data assuming that the null hypothesis is true. The exact level of significance is called the p-value and it will be less than the chosen level of significance if we reject H 0 .

Statistical computing packages provide exact p-values as part of their standard output for hypothesis tests. In fact, when using a statistical computing package, the steps outlined above can be abbreviated. The hypotheses (step 1) should always be set up in advance of any analysis and the significance criterion should also be determined (e.g., α =0.05). Statistical computing packages will produce the test statistic (usually reporting the test statistic as t) and a p-value. The investigator can then determine statistical significance using the following: If p < α then reject H 0 .

- Step 1. Set up hypotheses and determine level of significance

H 0 : μ = 191 H 1 : μ > 191 α =0.05

The research hypothesis is that weights have increased, and therefore an upper tailed test is used.

- Step 2. Select the appropriate test statistic.

Because the sample size is large (n > 30) the appropriate test statistic is

$$Z = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}$$

- Step 3. Set up decision rule.

In this example, we are performing an upper tailed test (H 1 : μ> 191), with a Z test statistic and selected α =0.05. Reject H 0 if Z > 1.645.

We now substitute the sample data into the formula for the test statistic identified in Step 2.

We reject H 0 because 2.38 > 1.645. We have statistically significant evidence at α=0.05 to show that the mean weight in men in 2006 is more than 191 pounds. Because we rejected the null hypothesis, we now approximate the p-value which is the likelihood of observing the sample data if the null hypothesis is true. An alternative definition of the p-value is the smallest level of significance where we can still reject H 0 . In this example, we observed Z=2.38 and for α=0.05, the critical value was 1.645. Because 2.38 exceeded 1.645 we rejected H 0 . In our conclusion we reported a statistically significant increase in mean weight at a 5% level of significance. Using the table of critical values for upper tailed tests, we can approximate the p-value. If we select α=0.025, the critical value is 1.96, and we still reject H 0 because 2.38 > 1.960. If we select α=0.010 the critical value is 2.326, and we still reject H 0 because 2.38 > 2.326. However, if we select α=0.005, the critical value is 2.576, and we cannot reject H 0 because 2.38 < 2.576. Therefore, the smallest α in the table where we still reject H 0 is 0.010. This is the approximate p-value. A statistical computing package would produce a more precise p-value which would be in between 0.005 and 0.010. Here we are approximating the p-value and would report p < 0.010.
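The table-based approximation and the more precise p-value mentioned above can be compared side by side. This is a small sketch: the dictionary simply restates the four critical values quoted in the paragraph, and the variable names are illustrative.

```python
from statistics import NormalDist

z_observed = 2.38

# Upper-tailed critical values quoted in the text, keyed by alpha.
critical_values = {0.05: 1.645, 0.025: 1.960, 0.010: 2.326, 0.005: 2.576}

# Smallest tabled alpha at which H0 is still rejected.
smallest_alpha = min(a for a, crit in critical_values.items()
                     if z_observed > crit)

# The more precise upper-tail probability a software package would report.
exact_p = 1 - NormalDist().cdf(z_observed)

print(smallest_alpha, round(exact_p, 4))
```

The exact value falls between 0.005 and 0.010, so reporting p < 0.010 from the table is a conservative summary.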

Type I and Type II Errors

In all tests of hypothesis, there are two types of errors that can be committed. The first is called a Type I error and refers to the situation where we incorrectly reject H 0 when in fact it is true. This is also called a false positive result (as we incorrectly conclude that the research hypothesis is true when in fact it is not). When we run a test of hypothesis and decide to reject H 0 (e.g., because the test statistic exceeds the critical value in an upper tailed test) then either we make a correct decision because the research hypothesis is true or we commit a Type I error. The different conclusions are summarized in the table below. Note that we will never know whether the null hypothesis is really true or false (i.e., we will never know which row of the following table reflects reality).

Table - Conclusions in Test of Hypothesis

In the first step of the hypothesis test, we select a level of significance, α, and α= P(Type I error). Because we purposely select a small value for α, we control the probability of committing a Type I error. For example, if we select α=0.05, then when H 0 is in fact true there is a 5% probability that our test rejects it and we commit a Type I error. Most investigators are very comfortable with this and are confident when rejecting H 0 that the research hypothesis is true (as it is the more likely scenario when we reject H 0 ).

When we run a test of hypothesis and decide not to reject H 0 (e.g., because the test statistic is below the critical value in an upper tailed test) then either we make a correct decision because the null hypothesis is true or we commit a Type II error. Beta (β) represents the probability of a Type II error and is defined as follows: β=P(Type II error) = P(Do not Reject H 0 | H 0 is false). Unfortunately, we cannot choose β to be small (e.g., 0.05) to control the probability of committing a Type II error because β depends on several factors including the sample size, α, and the research hypothesis. When we do not reject H 0 , it may be very likely that we are committing a Type II error (i.e., failing to reject H 0 when in fact it is false). Therefore, when tests are run and the null hypothesis is not rejected we often make a weak concluding statement allowing for the possibility that we might be committing a Type II error. If we do not reject H 0 , we conclude that we do not have significant evidence to show that H 1 is true. We do not conclude that H 0 is true.

The most common reason for a Type II error is a small sample size.
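To see how β depends on sample size, the sketch below computes the Type II error rate of an upper-tailed Z test for a few sample sizes. Everything numeric here is hypothetical and chosen only for illustration: a null mean of 191, a supposed true mean of 195, and a standard deviation of 25.6 treated as known. The function name is also illustrative.

```python
from math import sqrt
from statistics import NormalDist

def type_ii_error(null_mean, true_mean, sd, n, alpha=0.05):
    """beta = P(do not reject H0 | true mean) for an upper-tailed Z test."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)
    # How far the true mean sits from the null mean, in standard errors.
    shift = (true_mean - null_mean) / (sd / sqrt(n))
    # Under the true mean, the Z statistic is centered at `shift`.
    return std_normal.cdf(z_crit - shift)

# HYPOTHETICAL scenario: true mean 195 versus null mean 191, sd 25.6.
betas = {n: type_ii_error(191, 195, 25.6, n) for n in (25, 100, 400)}
for n, beta in betas.items():
    print(n, round(beta, 3))
```

In this scenario β shrinks steadily as n grows, which is the sense in which a small sample makes a Type II error more likely.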

Tests with One Sample, Continuous Outcome

Hypothesis testing applications with a continuous outcome variable in a single population are performed according to the five-step procedure outlined above. A key component is setting up the null and research hypotheses. The objective is to compare the mean in a single population to a known mean (μ 0 ). The known value is generally derived from another study or report, for example a study in a similar, but not identical, population or a study performed some years ago. The latter is called a historical control. It is important in setting up the hypotheses in a one sample test that the mean specified in the null hypothesis is a fair and reasonable comparator. This will be discussed in the examples that follow.

Test Statistics for Testing H 0 : μ= μ 0

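- if n > 30: $Z = \dfrac{\bar{x} - \mu_0}{s/\sqrt{n}}$

- if n < 30: $t = \dfrac{\bar{x} - \mu_0}{s/\sqrt{n}}$, where df = n – 1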

Note that statistical computing packages will use the t statistic exclusively and make the necessary adjustments for comparing the test statistic to appropriate values from probability tables to produce a p-value.

The National Center for Health Statistics (NCHS) published a report in 2005 entitled Health, United States, containing extensive information on major trends in the health of Americans. Data are provided for the US population as a whole and for specific ages, sexes and races. The NCHS report indicated that in 2002 Americans paid an average of $3,302 per year on health care and prescription drugs. An investigator hypothesizes that in 2005 expenditures have decreased primarily due to the availability of generic drugs. To test the hypothesis, a sample of 100 Americans is selected and their expenditures on health care and prescription drugs in 2005 are measured. The sample data are summarized as follows: n=100, x̄ =$3,190 and s=$890. Is there statistical evidence of a reduction in expenditures on health care and prescription drugs in 2005? Is the sample mean of $3,190 evidence of a true reduction in the mean or is it within chance fluctuation? We will run the test using the five-step approach.

- Step 1. Set up hypotheses and determine level of significance

H 0 : μ = 3,302 H 1 : μ < 3,302 α =0.05

The research hypothesis is that expenditures have decreased, and therefore a lower-tailed test is used.

This is a lower tailed test, using a Z statistic and a 5% level of significance. Reject H 0 if Z < -1.645.

- Step 4. Compute the test statistic.

We do not reject H 0 because -1.26 > -1.645. We do not have statistically significant evidence at α=0.05 to show that the mean expenditures on health care and prescription drugs are lower in 2005 than the mean of $3,302 reported in 2002.
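The test statistic and decision for this example can be verified in a few lines. This is a sketch using the summary statistics given above; the variable names are illustrative, and the p-value is included only as a supplement to the critical-value comparison in the text.

```python
from math import sqrt
from statistics import NormalDist

n, sample_mean, sd = 100, 3190, 890
null_mean, alpha = 3302, 0.05

z = (sample_mean - null_mean) / (sd / sqrt(n))
critical = NormalDist().inv_cdf(alpha)   # lower-tailed critical value
reject = z < critical
p_value = NormalDist().cdf(z)            # lower-tail probability

print(round(z, 2), round(critical, 3), reject, round(p_value, 3))
```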

Recall that when we fail to reject H 0 in a test of hypothesis, either the null hypothesis is true (here the mean expenditures in 2005 are the same as those in 2002 and equal to $3,302) or we committed a Type II error (i.e., we failed to reject H 0 when in fact it is false). In summarizing this test, we conclude that we do not have sufficient evidence to reject H 0 . We do not conclude that H 0 is true, because there may be a moderate to high probability that we committed a Type II error. It is possible that the sample size is not large enough to detect a difference in mean expenditures.

The NCHS reported that the mean total cholesterol level in 2002 for all adults was 203. Total cholesterol levels in participants who attended the seventh examination of the Offspring in the Framingham Heart Study are summarized as follows: n=3,310, x̄ =200.3, and s=36.8. Is there statistical evidence of a difference in mean cholesterol levels in the Framingham Offspring?

Here we want to assess whether the sample mean of 200.3 in the Framingham sample is statistically significantly different from 203 (i.e., beyond what we would expect by chance). We will run the test using the five-step approach.

H 0 : μ= 203 H 1 : μ≠ 203 α=0.05

The research hypothesis is that cholesterol levels are different in the Framingham Offspring, and therefore a two-tailed test is used.

- Step 3. Set up decision rule.

This is a two-tailed test, using a Z statistic and a 5% level of significance. Reject H 0 if Z < -1.960 or if Z > 1.960.

We reject H 0 because -4.22 ≤ -1.960. We have statistically significant evidence at α=0.05 to show that the mean total cholesterol level in the Framingham Offspring is different from the national average of 203 reported in 2002. Because we reject H 0 , we also approximate a p-value. Using the two-sided significance levels, p < 0.0001.
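The arithmetic behind this result can be checked with a short sketch. It assumes the usual one-sample Z statistic, with the sample standard deviation s standing in for the population value; the function name `one_sample_z` is illustrative and not part of the original text.

```python
import math

def one_sample_z(sample_mean, null_mean, sample_sd, n):
    """Z statistic for H0: mu = null_mean, using s as the estimate of sigma."""
    standard_error = sample_sd / math.sqrt(n)
    return (sample_mean - null_mean) / standard_error

# Summary statistics from the Framingham cholesterol example
z = one_sample_z(sample_mean=200.3, null_mean=203, sample_sd=36.8, n=3310)

# Two-tailed decision rule at alpha = 0.05
critical_value = 1.960
reject_h0 = abs(z) > critical_value

# Two-sided p-value from the standard normal distribution:
# 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2))
p_value = math.erfc(abs(z) / math.sqrt(2))

print(f"Z = {z:.2f}, reject H0: {reject_h0}, p < 0.0001: {p_value < 0.0001}")
```

The computed statistic rounds to the -4.22 quoted above, and the p-value falls below 0.0001.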

Statistical Significance versus Clinical (Practical) Significance

This example raises an important concept of statistical versus clinical or practical significance. From a statistical standpoint, the total cholesterol levels in the Framingham sample are highly statistically significantly different from the national average with p < 0.0001 (i.e., if the null hypothesis were true, there would be less than a 0.01% chance of observing a sample mean at least this far from 203). However, the sample mean in the Framingham Offspring study is 200.3, less than 3 units different from the national mean of 203. The data are so highly statistically significant because of the very large sample size. It is always important to assess both statistical and clinical significance of data. This is particularly relevant when the sample size is large. Is a 3 unit difference in total cholesterol a meaningful difference?
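To see how much of the result is driven by sample size, the sketch below holds the observed difference (200.3 versus 203) and the standard deviation (36.8) fixed and recomputes the Z statistic for different sample sizes. The sample sizes 50 and 500 are hypothetical and chosen only for illustration; only n=3,310 comes from the example.

```python
import math

difference = 200.3 - 203  # sample mean minus hypothesized mean
sample_sd = 36.8

def z_for_n(n):
    """Z statistic for the same difference and s, at sample size n."""
    return difference / (sample_sd / math.sqrt(n))

for n in (50, 500, 3310):  # 50 and 500 are hypothetical sample sizes
    z = z_for_n(n)
    significant = abs(z) > 1.960  # two-tailed, alpha = 0.05
    print(f"n = {n:>5}: Z = {z:6.2f}, significant at 5%: {significant}")
```

Under these assumptions the same difference of under 3 units is not statistically significant at the smaller sample sizes and becomes significant only at n=3,310.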

Consider again the NCHS-reported mean total cholesterol level in 2002 for all adults of 203. Suppose a new drug is proposed to lower total cholesterol. A study is designed to evaluate the efficacy of the drug in lowering cholesterol. Fifteen patients are enrolled in the study and asked to take the new drug for 6 weeks. At the end of 6 weeks, each patient's total cholesterol level is measured and the sample statistics are as follows: n=15, x̄ =195.9 and s=28.7. Is there statistical evidence of a reduction in mean total cholesterol in patients after using the new drug for 6 weeks? We will run the test using the five-step approach.

H 0 : μ= 203 H 1 : μ< 203 α=0.05

- Step 2. Select the appropriate test statistic.

Because the sample size is small (n<30), the appropriate test statistic is

\[ t = \frac{\bar{x} - \mu_0}{s/\sqrt{n}} \]

This is a lower tailed test, using a t statistic and a 5% level of significance. In order to determine the critical value of t, we need degrees of freedom, df, defined as df=n-1. In this example df=15-1=14. The critical value for a lower tailed test with df=14 and α=0.05 is -1.761 and the decision rule is as follows: Reject H 0 if t < -1.761.

We do not reject H 0 because -0.96 > -1.761. We do not have statistically significant evidence at α=0.05 to show that the mean total cholesterol level is lower than the national mean in patients taking the new drug for 6 weeks. Again, because we failed to reject the null hypothesis we make a weaker concluding statement allowing for the possibility that we may have committed a Type II error (i.e., failed to reject H 0 when in fact the drug is efficacious).
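The test statistic in this example can be reproduced with a few lines. This is a minimal sketch assuming the standard one-sample t statistic with df=n-1; the function name `one_sample_t` is illustrative.

```python
import math

def one_sample_t(sample_mean, null_mean, sample_sd, n):
    """Return the one-sample t statistic and its degrees of freedom."""
    standard_error = sample_sd / math.sqrt(n)
    t = (sample_mean - null_mean) / standard_error
    return t, n - 1

# Summary statistics from the 15-patient drug study
t, df = one_sample_t(sample_mean=195.9, null_mean=203, sample_sd=28.7, n=15)
print(f"t = {t:.2f} with df = {df}")
```

The statistic rounds to the -0.96 used in the decision above. With only 15 patients the standard error is large, which is one reason a 7-unit drop does not reach significance.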

This example raises an important issue in terms of study design. In this example we assume in the null hypothesis that the mean cholesterol level is 203. This is taken to be the mean cholesterol level in patients without treatment. Is this an appropriate comparator? Alternative and potentially more efficient study designs to evaluate the effect of the new drug could involve two treatment groups, where one group receives the new drug and the other does not, or we could measure each patient's baseline or pre-treatment cholesterol level and then assess changes from baseline to 6 weeks post-treatment. These designs are also discussed here.


Tests with One Sample, Dichotomous Outcome

Hypothesis testing applications with a dichotomous outcome variable in a single population are also performed according to the five-step procedure. Similar to tests for means, a key component is setting up the null and research hypotheses. The objective is to compare the proportion of successes in a single population to a known proportion (p 0 ). That known proportion is generally derived from another study or report and is sometimes called a historical control. It is important in setting up the hypotheses in a one sample test that the proportion specified in the null hypothesis is a fair and reasonable comparator.

In one sample tests for a dichotomous outcome, we set up our hypotheses against an appropriate comparator. We select a sample and compute descriptive statistics on the sample data. Specifically, we compute the sample size (n) and the sample proportion, which is computed by taking the ratio of the number of successes to the sample size, \( \hat{p} = x/n \), where x is the number of successes.

We then determine the appropriate test statistic (Step 2) for the hypothesis test. The formula for the test statistic is given below.

Test Statistic for Testing H 0 : p = p 0

\[ z = \frac{\hat{p} - p_0}{\sqrt{\frac{p_0(1-p_0)}{n}}} \]

if min(np 0 , n(1-p 0 )) ≥ 5

The formula above is appropriate for large samples, defined when the smaller of np 0 and n(1-p 0 ) is at least 5. This is similar, but not identical, to the condition required for appropriate use of the confidence interval formula for a population proportion, i.e., min(np̂, n(1-p̂)) ≥ 5.

Here we use the proportion specified in the null hypothesis as the true proportion of successes rather than the sample proportion. If we fail to satisfy the condition, then alternative procedures, called exact methods, must be used to test the hypothesis about the population proportion.

Example:

The NCHS report indicated that in 2002 the prevalence of cigarette smoking among American adults was 21.1%. Data on prevalent smoking in n=3,536 participants who attended the seventh examination of the Offspring in the Framingham Heart Study indicated that 482/3,536 = 13.6% of the respondents were currently smoking at the time of the exam. Suppose we want to assess whether the prevalence of smoking is lower in the Framingham Offspring sample given the focus on cardiovascular health in that community. Is there evidence of a statistically lower prevalence of smoking in the Framingham Offspring study as compared to the prevalence among all Americans?

H 0 : p = 0.211 H 1 : p < 0.211 α=0.05

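We must first check that the sample size is adequate. Specifically, we need to check min(np 0 , n(1-p 0 )) = min(3,536(0.211), 3,536(1-0.211)) = min(746, 2790) = 746. The sample size is more than adequate so the following formula can be used:

\[ z = \frac{\hat{p} - p_0}{\sqrt{\frac{p_0(1-p_0)}{n}}} = \frac{0.136 - 0.211}{\sqrt{\frac{0.211(1-0.211)}{3536}}} = -10.93 \]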

This is a lower tailed test, using a Z statistic and a 5% level of significance. Reject H 0 if Z < -1.645.

We reject H 0 because -10.93 < -1.645. We have statistically significant evidence at α=0.05 to show that the prevalence of smoking in the Framingham Offspring is lower than the prevalence nationally (21.1%). Here, p < 0.0001.
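The sample size check and the test statistic for this example can be sketched as follows. The code assumes the large-sample Z statistic for a single proportion, with the null proportion in the standard error; the function names are illustrative. Note that the sample proportion is entered as 0.136, the rounded value used in the text, because the unrounded 482/3,536 gives a slightly different statistic.

```python
import math

def large_sample_ok(n, p0, threshold=5):
    """Check that the smaller of n*p0 and n*(1-p0) is at least the threshold."""
    return min(n * p0, n * (1 - p0)) >= threshold

def one_sample_proportion_z(p_hat, p0, n):
    """Z statistic for H0: p = p0, using p0 in the standard error."""
    standard_error = math.sqrt(p0 * (1 - p0) / n)
    return (p_hat - p0) / standard_error

n, p0 = 3536, 0.211
p_hat = 0.136  # 482/3,536 rounded to three decimals, as in the text

adequate = large_sample_ok(n, p0)
z = one_sample_proportion_z(p_hat, p0, n)
reject_h0 = z < -1.645  # lower-tailed test, alpha = 0.05

print(f"adequate sample: {adequate}, Z = {z:.2f}, reject H0: {reject_h0}")
```

If `large_sample_ok` returned False, the Z statistic would not be appropriate and an exact method would be needed instead.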

The NCHS report indicated that in 2002, 75% of children aged 2 to 17 saw a dentist in the past year. An investigator wants to assess whether use of dental services is similar in children living in the city of Boston. A sample of 125 children aged 2 to 17 living in Boston are surveyed and 64 reported seeing a dentist over the past 12 months. Is there a significant difference in use of dental services between children living in Boston and the national data?

Calculate this on your own before checking the answer.


Tests with Two Independent Samples, Continuous Outcome

There are many applications where it is of interest to compare two independent groups with respect to their mean scores on a continuous outcome. Here we compare means between groups, but rather than generating an estimate of the difference, we will test whether the observed difference (increase, decrease or difference) is statistically significant or not. Remember that hypothesis testing gives an assessment of statistical significance, whereas estimation gives an estimate of effect, and both are important.

Here we discuss the comparison of means when the two comparison groups are independent or physically separate. The two groups might be determined by a particular attribute (e.g., sex, diagnosis of cardiovascular disease) or might be set up by the investigator (e.g., participants assigned to receive an experimental treatment or placebo). The first step in the analysis involves computing descriptive statistics on each of the two samples. Specifically, we compute the sample size, mean and standard deviation in each sample and we denote these summary statistics as follows:

for sample 1: n₁, x̄₁, s₁

for sample 2: n₂, x̄₂, s₂

The designation of sample 1 and sample 2 is arbitrary. In a clinical trial setting the convention is to call the treatment group 1 and the control group 2. However, when comparing men and women, for example, either group can be 1 or 2.

In the two independent samples application with a continuous outcome, the parameter of interest in the test of hypothesis is the difference in population means, μ 1 -μ 2 . The null hypothesis is always that there is no difference between groups with respect to means, i.e., H 0 : μ 1 - μ 2 = 0.

The null hypothesis can also be written as follows: H 0 : μ 1 = μ 2 . In the research hypothesis, an investigator can hypothesize that the first mean is larger than the second (H 1 : μ 1 > μ 2 ), that the first mean is smaller than the second (H 1 : μ 1 < μ 2 ), or that the means are different (H 1 : μ 1 ≠ μ 2 ). The three different alternatives represent upper-, lower-, and two-tailed tests, respectively. The following test statistics are used to test these hypotheses.

Test Statistics for Testing H 0 : μ 1 = μ 2

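- \( z = \frac{\bar{x}_1 - \bar{x}_2}{S_p\sqrt{\frac{1}{n_1}+\frac{1}{n_2}}} \) if n 1 > 30 and n 2 > 30

- \( t = \frac{\bar{x}_1 - \bar{x}_2}{S_p\sqrt{\frac{1}{n_1}+\frac{1}{n_2}}} \) with df = n 1 + n 2 - 2, if n 1 < 30 or n 2 < 30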

NOTE: The formulas above assume equal variability in the two populations (i.e., the population variances are equal, or σ₁² = σ₂²). This means that the outcome is equally variable in each of the comparison populations. For analysis, we have samples from each of the comparison populations. If the sample variances are similar, then the assumption about variability in the populations is probably reasonable. As a guideline, if the ratio of the sample variances, s₁²/s₂², is between 0.5 and 2 (i.e., if one variance is no more than double the other), then the formulas above are appropriate. If the ratio of the sample variances is greater than 2 or less than 0.5, then alternative formulas must be used to account for the heterogeneity in variances.
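The guideline can be written as a small check. This sketch uses the sample standard deviations from the systolic blood pressure example later in this section (17.5 and 20.1); the function names and the default bounds of 0.5 and 2 simply encode the rule of thumb stated above.

```python
def variance_ratio(s1, s2):
    """Ratio of sample variances, s1^2 / s2^2."""
    return s1**2 / s2**2

def equal_variance_reasonable(s1, s2, low=0.5, high=2.0):
    """Apply the 0.5-to-2 guideline to the ratio of sample variances."""
    return low <= variance_ratio(s1, s2) <= high

# Standard deviations from the systolic blood pressure example
ratio = variance_ratio(17.5, 20.1)
print(f"ratio = {ratio:.2f}, pooled formulas appropriate: "
      f"{equal_variance_reasonable(17.5, 20.1)}")
```

Because the guideline is symmetric, swapping the group labels inverts the ratio but does not change the conclusion.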

The test statistics include Sp, which is the pooled estimate of the common standard deviation (again assuming that the variances in the populations are similar), computed from a weighted average of the sample variances as follows:

\[ S_p = \sqrt{\frac{(n_1-1)s_1^2 + (n_2-1)s_2^2}{n_1+n_2-2}} \]

Because we are assuming equal variances between groups, we pool the information on variability (sample variances) to generate an estimate of the variability in the population. (Note: Because Sp is based on a weighted average of the sample variances, Sp will always be in between s 1 and s 2 .)
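The pooled estimate and the resulting test statistic can be sketched as below, assuming the usual pooled-variance formula with weights n₁-1 and n₂-1. The sample sizes and means in the usage lines are hypothetical values chosen for illustration (they are not the Framingham data); only the two standard deviations are borrowed from the blood pressure example.

```python
import math

def pooled_sd(n1, s1, n2, s2):
    """Pooled standard deviation: root of the weighted average of variances."""
    pooled_variance = ((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2)
    return math.sqrt(pooled_variance)

def two_sample_statistic(n1, mean1, s1, n2, mean2, s2):
    """Return the equal-variance test statistic and df = n1 + n2 - 2."""
    sp = pooled_sd(n1, s1, n2, s2)
    statistic = (mean1 - mean2) / (sp * math.sqrt(1 / n1 + 1 / n2))
    return statistic, n1 + n2 - 2

# Hypothetical summary statistics (sample sizes and means are made up)
n1, mean1, s1 = 40, 130.0, 17.5
n2, mean2, s2 = 60, 125.0, 20.1

sp = pooled_sd(n1, s1, n2, s2)
statistic, df = two_sample_statistic(n1, mean1, s1, n2, mean2, s2)
print(f"Sp = {sp:.2f}, statistic = {statistic:.2f}, df = {df}")
```

The same statistic is referred to the Z distribution when both samples are large and to the t distribution with the returned df otherwise. In this hypothetical case Sp lands between 17.5 and 20.1, closer to the standard deviation of the larger group.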

Data measured on n=3,539 participants who attended the seventh examination of the Offspring in the Framingham Heart Study are shown below.

Suppose we now wish to assess whether there is a statistically significant difference in mean systolic blood pressures between men and women using a 5% level of significance.

H 0 : μ 1 = μ 2

H 1 : μ 1 ≠ μ 2 α=0.05

Because both samples are large ( > 30), we can use the Z test statistic as opposed to t. Note that statistical computing packages use t throughout. Before implementing the formula, we first check whether the assumption of equality of population variances is reasonable. The guideline suggests investigating the ratio of the sample variances, s₁²/s₂². Suppose we call the men group 1 and the women group 2. Again, this is arbitrary; it only needs to be noted when interpreting the results. The ratio of the sample variances is 17.5²/20.1² = 0.76, which falls between 0.5 and 2, suggesting that the assumption of equality of population variances is reasonable. The appropriate test statistic is

\[ z = \frac{\bar{x}_1 - \bar{x}_2}{S_p\sqrt{\frac{1}{n_1}+\frac{1}{n_2}}} \]

We now substitute the sample data into the formula for the test statistic identified in Step 2. Before substituting, we will first compute Sp, the pooled estimate of the common standard deviation.

Notice that the pooled estimate of the common standard deviation, Sp, falls in between the standard deviations in the comparison groups (i.e., 17.5 and 20.1). Sp is slightly closer in value to the standard deviation in the women (20.1) as there were slightly more women in the sample. Recall that Sp is based on a weighted average of the variability in the comparison groups, weighted by the respective sample sizes.

Now the test statistic:

We reject H 0 because 2.66 > 1.960. We have statistically significant evidence at α=0.05 to show that there is a difference in mean systolic blood pressures between men and women. The p-value is p < 0.010.