10 Real-World Data Science Case Study Projects with Examples

Top 10 data science case study projects with examples and solutions in Python to inspire your data science learning in 2023.

Data science has been a trending buzzword in recent times. With wide applications in sectors like healthcare, education, retail, transportation, media, and banking, data science is at the core of pretty much every industry out there. The possibilities are endless, from fraud analysis in the finance sector to personalized recommendations for eCommerce businesses. We have developed ten exciting data science case studies to explain how data science is leveraged across various industries to make smarter decisions and develop innovative personalized products tailored to specific customers.


Table of Contents

Data science case studies in retail
Data science case study examples in the entertainment industry
Data analytics case study examples in the travel industry
Case studies for data analytics in social media
Real-world data science projects in healthcare
Data analytics case studies in oil and gas
What is a case study in data science?
How do you prepare a data science case study?
10 most interesting data science case studies with examples

So, without much ado, let's get started with data science business case studies!

1) Walmart

With humble beginnings as a simple discount retailer, Walmart today operates approximately 10,500 stores and clubs in 24 countries, along with eCommerce websites, and employs around 2.2 million people around the globe. For the fiscal year ended January 31, 2021, Walmart's total revenue was $559 billion, a growth of $35 billion driven by the expansion of its eCommerce sector. Walmart is a data-driven company that works on the principle of 'Everyday low cost' for its consumers. To achieve this goal, it depends heavily on its data science and analytics department, also known as Walmart Labs, for research and development. Walmart is home to the world's largest private cloud, which can manage 2.5 petabytes of data every hour. To analyze this enormous amount of data, Walmart has created 'Data Café,' a state-of-the-art analytics hub located within its Bentonville, Arkansas headquarters. The Walmart Labs team invests heavily in building and managing technologies like cloud, data, DevOps, infrastructure, and security.

As the world's largest retailer, Walmart is experiencing massive digital growth. Walmart has been leveraging big data and advances in data science to build solutions that enhance, optimize, and customize the shopping experience and serve its customers better. At Walmart Labs, data scientists are focused on creating data-driven solutions that power the efficiency and effectiveness of complex supply chain management processes. Here are some of the applications of data science at Walmart:

i) Personalized Customer Shopping Experience

Walmart analyzes customer preferences and shopping patterns to optimize the stocking and displaying of merchandise in its stores. Analysis of big data also helps Walmart understand new item sales, decide which products to discontinue, and track the performance of brands.

ii) Order Sourcing and On-Time Delivery Promise

Millions of customers view items on Walmart.com, and Walmart provides each customer a real-time estimated delivery date for the items purchased. Walmart runs a backend algorithm that estimates this based on the distance between the customer and the fulfillment center, inventory levels, and shipping methods available. The supply chain management system determines the optimum fulfillment center based on distance and inventory levels for every order. It also has to decide on the shipping method to minimize transportation costs while meeting the promised delivery date.
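The sourcing decision described above can be sketched as a small search over fulfillment centers and shipping methods. This is only an illustrative sketch, not Walmart's algorithm: the `Center` and `ShippingMethod` classes, the cost-per-kilometer model, and all the numbers are hypothetical assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Center:
    name: str
    distance_km: float  # distance from the center to the customer
    stock: int          # units of the ordered item on hand


@dataclass
class ShippingMethod:
    name: str
    cost_per_km: float  # assumed linear transport cost
    km_per_day: float   # assumed travel speed


def plan_order(centers, methods, quantity, promised_days):
    """Return (center, method, cost) for the cheapest feasible option, or None."""
    best = None
    for center in centers:
        if center.stock < quantity:
            continue  # not enough inventory at this center
        for method in methods:
            days = center.distance_km / method.km_per_day
            if days > promised_days:
                continue  # would miss the promised delivery date
            cost = center.distance_km * method.cost_per_km
            if best is None or cost < best[2]:
                best = (center.name, method.name, cost)
    return best


# Hypothetical centers and shipping methods
centers = [Center("FC-A", 100, 5), Center("FC-B", 400, 50), Center("FC-C", 250, 0)]
methods = [ShippingMethod("ground", 0.05, 200), ShippingMethod("air", 0.40, 2000)]

print(plan_order(centers, methods, quantity=2, promised_days=2))
```

A production system would also account for carrier capacity, order splitting, and live inventory updates; the exhaustive loop here is only practical for a handful of options.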


iii) Packing Optimization

Packing optimization, also known as box recommendation, is a daily occurrence in the shipping of items in retail and eCommerce businesses. Whenever the items of an order, or of multiple orders placed by the same customer, are picked from the shelf and are ready for packing, Walmart's recommender system picks the best-sized box to hold all the ordered items with the least in-box space wasted, within a fixed amount of time. This is the Bin Packing Problem, a classic NP-Hard problem familiar to data scientists.
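Because bin packing is NP-Hard, practical systems usually rely on heuristics rather than exact solutions. The sketch below shows first-fit decreasing, a classic heuristic for the one-dimensional version of the problem. It treats each item as a single volume number and assumes identical boxes, which is a strong simplification of real box recommendation (three-dimensional shapes, several box sizes), and it is not a description of Walmart's system.

```python
def first_fit_decreasing(item_volumes, box_capacity):
    """Pack items into equal-capacity boxes using the first-fit decreasing heuristic."""
    boxes = []  # each box is a list of the item volumes placed in it
    for volume in sorted(item_volumes, reverse=True):
        if volume > box_capacity:
            raise ValueError(f"item of volume {volume} exceeds box capacity")
        for box in boxes:
            if sum(box) + volume <= box_capacity:
                box.append(volume)  # first box with enough room
                break
        else:
            boxes.append([volume])  # no existing box fits, open a new one
    return boxes


packed = first_fit_decreasing([4, 8, 1, 4, 2, 1], box_capacity=10)
print(packed)  # [[8, 2], [4, 4, 1, 1]]
```

Sorting the items from largest to smallest before placing them tends to leave fewer awkward gaps than packing them in arrival order.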

Here is a link to a sales prediction data science case study to help you understand the applications of data science in the real world. The Walmart Sales Forecasting Project uses historical sales data for 45 Walmart stores located in different regions. Each store contains many departments, and you must build a model to project the sales for each department in each store. This data science case study aims to create a predictive model for the sales of each department. You can also try your hand at the Inventory Demand Forecasting Data Science Project to develop a machine learning model that forecasts inventory demand accurately based on historical sales data.


2) Amazon

Amazon is an American multinational technology company based in Seattle, USA. It started as an online bookseller, but today it focuses on eCommerce, cloud computing, digital streaming, and artificial intelligence. It hosts an estimated 1,000,000,000 gigabytes of data across more than 1,400,000 servers. Through its constant innovation in data science and big data, Amazon is always ahead in understanding its customers. Here are a few data analytics case study examples at Amazon:

i) Recommendation Systems

Data science models help Amazon understand customers' needs and recommend products before the customer even searches for them; this model uses collaborative filtering. Amazon uses data from 152 million customer purchases to help users decide which products to buy. The company generates 35% of its annual sales using the recommendation-based systems (RBS) method.

Here is a Recommender System Project to help you build a recommendation system using collaborative filtering.
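To make the idea of collaborative filtering concrete, here is a minimal user-based sketch in plain Python. It scores items a user has not rated by averaging other users' ratings, weighted by cosine similarity. The `ratings` data and function names are hypothetical, and this is a teaching sketch rather than Amazon's production approach.

```python
from math import sqrt

# Hypothetical ratings: user -> {item: rating}
ratings = {
    "ann": {"kettle": 5, "toaster": 4, "mug": 1},
    "bob": {"kettle": 4, "toaster": 5},
    "cat": {"kettle": 1, "mug": 5, "teapot": 4},
    "dan": {"toaster": 4, "mug": 2, "teapot": 1},
}


def cosine(u, v):
    """Cosine similarity between two sparse rating dictionaries."""
    common = set(u) & set(v)
    if not common:
        return 0.0
    dot = sum(u[i] * v[i] for i in common)
    norm_u = sqrt(sum(x * x for x in u.values()))
    norm_v = sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v)


def recommend(user, ratings, top_n=3):
    """Rank unseen items by similarity-weighted average rating of other users."""
    scores, weights = {}, {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], other_ratings)
        if sim <= 0:
            continue
        for item, rating in other_ratings.items():
            if item in ratings[user]:
                continue  # only recommend items the user has not rated
            scores[item] = scores.get(item, 0.0) + sim * rating
            weights[item] = weights.get(item, 0.0) + sim
    predicted = {item: scores[item] / weights[item] for item in scores}
    return sorted(predicted, key=predicted.get, reverse=True)[:top_n]


print(recommend("bob", ratings))  # ['mug', 'teapot']
```

At real scale, libraries with sparse matrix support or matrix factorization replace these nested loops, but the similarity-weighted scoring idea is the same.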

ii) Retail Price Optimization

Amazon product prices are optimized using a predictive model that determines the best price, so that users do not refuse to buy a product because of its price. The model determines the optimal price by considering the customer's likelihood of purchasing the product and how the price will affect the customer's future buying patterns. The price of a product is determined according to your activity on the website, competitors' pricing, product availability, item preferences, order history, expected profit margin, and other factors.

Check out this Retail Price Optimization Project to build a dynamic pricing model.
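One simple way to picture price optimization is to combine a purchase-probability model with the profit margin and pick the price that maximizes expected profit. The sketch below assumes a logistic demand curve with made-up parameters (`reference_price`, `sensitivity`); a real model would estimate the purchase probability from the factors listed above, and nothing here reflects Amazon's actual pricing logic.

```python
from math import exp


def purchase_probability(price, reference_price, sensitivity):
    """Assumed logistic demand curve: probability falls as price rises."""
    return 1.0 / (1.0 + exp(sensitivity * (price - reference_price)))


def best_price(candidates, unit_cost, reference_price, sensitivity):
    """Pick the candidate price with the highest expected profit per visitor."""
    def expected_profit(price):
        margin = price - unit_cost
        return margin * purchase_probability(price, reference_price, sensitivity)

    return max(candidates, key=expected_profit)


candidates = [10, 12, 14, 16, 18, 20]
print(best_price(candidates, unit_cost=8, reference_price=15, sensitivity=0.5))  # 14
```

The lowest price sells most often but earns little per sale, and the highest price earns a large margin on very few sales, so the optimum sits in between.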

iii) Fraud Detection

Being a significant eCommerce business, Amazon remains at high risk of retail fraud. As a preemptive measure, the company collects historical and real-time data for every order. It uses machine learning algorithms to find transactions with a higher probability of being fraudulent. This proactive measure has helped the company restrict clients with an excessive number of product returns.

You can look at this Credit Card Fraud Detection Project to implement a fraud detection model to classify fraudulent credit card transactions.


Let us explore data analytics case study examples in the entertainment industry.


3) Netflix

Netflix started as a DVD rental service in 1997 and has since expanded into the streaming business. Headquartered in Los Gatos, California, Netflix is the largest content streaming company in the world. Currently, Netflix has over 208 million paid subscribers worldwide, and with streaming supported on thousands of smart devices, around 3 billion hours of content are watched every month. The secret to this massive growth and popularity is Netflix's advanced use of data analytics and recommendation systems to provide personalized and relevant content recommendations to its users. Netflix collects data from over 100 billion events every day. Here are a few examples of data analysis case studies applied at Netflix:

i) Personalized Recommendation System

Netflix uses over 1,300 recommendation clusters based on consumer viewing preferences to provide a personalized experience. The data that Netflix collects from its users includes viewing time, platform keyword searches, and metadata related to content abandonment, such as pause time, rewinds, and rewatches. Using this data, Netflix can predict what a viewer is likely to watch and give the user a personalized watchlist. Some of the algorithms used by the Netflix recommendation system are the Personalized Video Ranker, the Trending Now ranker, and the Continue Watching ranker.

ii) Content Development using Data Analytics

Netflix uses data science to analyze the behavior and patterns of its users to recognize the themes and categories that the masses prefer to watch. This data is used to produce shows like The Umbrella Academy, Orange Is the New Black, and The Queen's Gambit. These shows may seem like a huge risk, but they are largely based on data analytics, which assured Netflix that they would succeed with its audience. Data analytics is helping Netflix come up with content that its viewers want to watch even before they know they want to watch it.

iii) Marketing Analytics for Campaigns

Netflix uses data analytics to find the right time to launch shows and ad campaigns to have maximum impact on the target audience. Marketing analytics helps it come up with different trailers and thumbnails for different groups of viewers. For example, the House of Cards Season 5 trailer with a giant American flag was launched during the American presidential elections, as it would resonate well with the audience.

Here is a Customer Segmentation Project using association rule mining to understand the primary grouping of customers based on various parameters.


4) Spotify

In a world where purchasing music is a thing of the past and streaming music is the current trend, Spotify has emerged as one of the most popular streaming platforms. With 320 million monthly users, around 4 billion playlists, and approximately 2 million podcasts, Spotify leads the pack among well-known streaming platforms like Apple Music, Wynk, Songza, and Amazon Music. The success of Spotify has depended mainly on data analytics. By analyzing massive volumes of listener data, Spotify provides real-time and personalized services to its listeners. Most of Spotify's revenue comes from paid premium subscriptions. Here are some examples of data analytics case studies used by Spotify to provide enhanced services to its listeners:

i) Personalization of Content using Recommendation Systems

Spotify uses BaRT (Bandits for Recommendations as Treatments) to generate music recommendations for its listeners in real time. BaRT ignores any song a user listens to for less than 30 seconds. The model is retrained every day to provide updated recommendations. A patent granted to Spotify describes an AI application that identifies a user's musical tastes based on audio signals, gender, age, and accent to make better music recommendations.

Based on listeners' taste profiles, Spotify creates daily playlists called 'Daily Mixes,' which contain songs the user has added to their playlists or songs by artists that the user has included in their playlists. They also include new artists and songs that the user might be unfamiliar with but that might improve the playlist. Similar to these are the weekly 'Release Radar' playlists, which contain newly released songs by artists that the listener follows or has liked before.

ii) Targeted Marketing through Customer Segmentation

Beyond enhancing personalized song recommendations, Spotify uses its massive user dataset for targeted ad campaigns and personalized service recommendations. Spotify uses ML models to analyze listeners' behavior and group them based on music preferences, age, gender, ethnicity, etc. These insights help it create ad campaigns for a specific target audience. One of its well-known ad campaigns was the meme-inspired ads for potential target customers, which was a huge success globally.

iii) CNNs for Classification of Songs and Audio Tracks

Spotify builds audio models to evaluate songs and tracks, which helps develop better playlists and recommendations for its users. These models allow Spotify to filter new tracks based on their lyrics and rhythms and recommend them to users who like similar tracks (collaborative filtering). Spotify also uses NLP (natural language processing) to scan articles and blogs and analyze the words used to describe songs and artists. These analytical insights help group and identify similar artists and songs, which can then be used to build playlists.

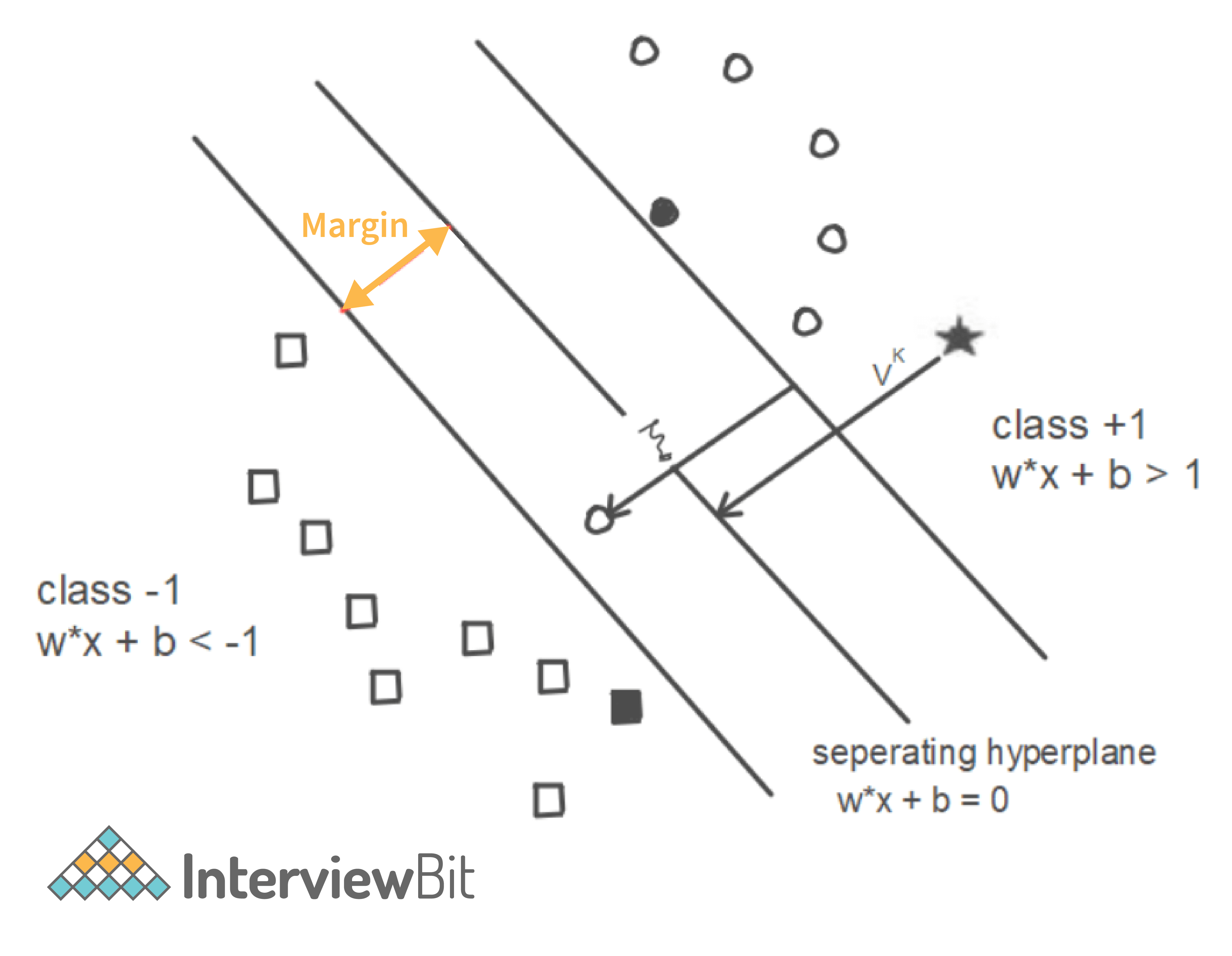

Here is a Music Recommender System Project for you to start learning. We have listed another music recommendations dataset for you to use for your projects: Dataset1. You can use this dataset of Spotify metadata to classify songs based on artists, mood, and liveliness. Plot histograms and heatmaps to get a better understanding of the dataset. Use classification algorithms like logistic regression and SVM, together with principal component analysis for dimensionality reduction, to generate valuable insights from the dataset.


Below you will find case studies for data analytics in the travel and tourism industry.

5) Airbnb

Airbnb was born in 2007 in San Francisco and has since grown to 4 million hosts and 5.6 million listings worldwide, which have welcomed more than 1 billion guest arrivals in almost every country across the globe. Airbnb is active in every country on the planet except for Iran, Sudan, Syria, and North Korea. That is around 97.95% of the world. Treating data as the voice of its customers, Airbnb uses the large volume of customer reviews and host inputs to understand trends across communities, rate user experiences, and make informed decisions to build a better business model. The data scientists at Airbnb are developing exciting new solutions to boost the business and find the best matches between its customers and hosts. Airbnb data servers serve approximately 10 million requests a day and process around one million search queries. This data lets Airbnb offer personalized services by creating a perfect match between guests and hosts for a supreme customer experience.

i) Recommendation Systems and Search Ranking Algorithms

Airbnb helps people find 'local experiences' in a place with the help of search algorithms that make searches and listings precise. Airbnb uses a 'listing quality score' to find homes based on proximity to the searched location and previous guest reviews. Airbnb uses deep neural networks to build models that take the guest's earlier stays and area information into account to find a perfect match. The search algorithms are optimized based on guest and host preferences, rankings, pricing, and availability to understand users' needs and provide the best match possible.

ii) Natural Language Processing for Review Analysis

Airbnb characterizes data as the voice of its customers. Customer and host reviews give direct insight into the experience, and star ratings alone are not enough to understand it. Hence, Airbnb uses natural language processing to understand reviews and the sentiments behind them. The NLP models are developed using convolutional neural networks.

Practice this Sentiment Analysis Project for analyzing product reviews to understand the basic concepts of natural language processing.

iii) Smart Pricing using Predictive Analytics

Many Airbnb hosts use the service as a source of supplementary income. The vacation homes and guest houses rented to customers raise local community earnings, as Airbnb guests stay 2.4 times longer and spend approximately 2.3 times as much money as hotel guests. These earnings have a significant positive impact on the local neighborhood community. Airbnb uses predictive analytics to predict the prices of listings and help hosts set a competitive and optimal price. The overall profitability of an Airbnb host depends on factors like the time invested by the host and responsiveness to changing demand across seasons. The factors that affect real-time smart pricing are the location of the listing, proximity to transport options, the season, and the amenities available in the neighborhood of the listing.

Here is a Price Prediction Project to help you understand the concept of predictive analysis, which is common in case studies for data analytics.

6) Uber

Uber is the biggest global taxi service provider. As of December 2018, Uber had 91 million monthly active consumers and 3.8 million drivers, and it completed 14 million trips each day. Uber uses data analytics and big data-driven technologies to optimize its business processes and provide enhanced customer service. The data science team at Uber constantly explores futuristic technologies to provide better service. Machine learning and data analytics help Uber make data-driven decisions that enable benefits like ride-sharing, dynamic price surges, better customer support, and demand forecasting. Here are some of the real-world data science projects used by Uber:

i) Dynamic Pricing for Price Surges and Demand Forecasting

Uber prices change at peak hours based on demand. Uber uses surge pricing to encourage more cab drivers to sign up with the company and meet the demand from passengers. When prices increase, the driver and the passenger are both informed about the surge in price. Uber uses a patented predictive model for price surging called 'Geosurge.' It is based on the demand for rides and the location.
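The details of Geosurge are not public, so the following is only a toy illustration of demand-based pricing per location. It assumes the multiplier is the ratio of ride requests to available drivers in a zone, never below 1.0 and capped at a hypothetical maximum; the zone names and counts are invented.

```python
def surge_multiplier(ride_requests, available_drivers, cap=3.0):
    """Toy surge rule: demand/supply ratio, floored at 1.0 and capped at `cap`."""
    if available_drivers <= 0:
        return cap  # no supply at all, so apply the maximum multiplier
    ratio = ride_requests / available_drivers
    return round(min(cap, max(1.0, ratio)), 2)


# Hypothetical zones: (ride requests, available drivers)
zones = {"downtown": (120, 40), "airport": (30, 60), "stadium": (90, 60)}

for zone, (requests, drivers) in zones.items():
    print(zone, surge_multiplier(requests, drivers))
# downtown 3.0
# airport 1.0
# stadium 1.5
```

A forecasting model would replace the raw request count with predicted demand for the next few minutes, so that prices can adjust before a shortage actually occurs.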

ii) One-Click Chat

Uber has developed a machine learning and natural language processing solution called One-Click Chat, or OCC, for coordination between drivers and users. This feature anticipates responses to commonly asked questions, making it easy for drivers to respond to customer messages. Drivers can reply with the click of just one button. One-Click Chat is built on Uber's machine learning platform, Michelangelo, to perform NLP on rider chat messages and generate appropriate responses to them.

iii) Customer Retention

Failure to meet customer demand for cabs could lead to users opting for other services. Uber uses machine learning models to bridge this demand-supply gap. By using prediction models to forecast demand in any location, Uber retains its customers. Uber also uses a tier-based reward system, which segments customers into different levels based on usage. The higher the level a user achieves, the better the perks. Uber also provides personalized destination suggestions based on the user's history and frequently traveled destinations.

You can take a look at this Python Chatbot Project and build a simple chatbot application to better understand the techniques used for natural language processing. You can also practice building a demand forecasting model with this project using time series analysis. You can look at this project, which uses time series forecasting and clustering on a dataset containing geospatial data to forecast customer demand for Ola rides.


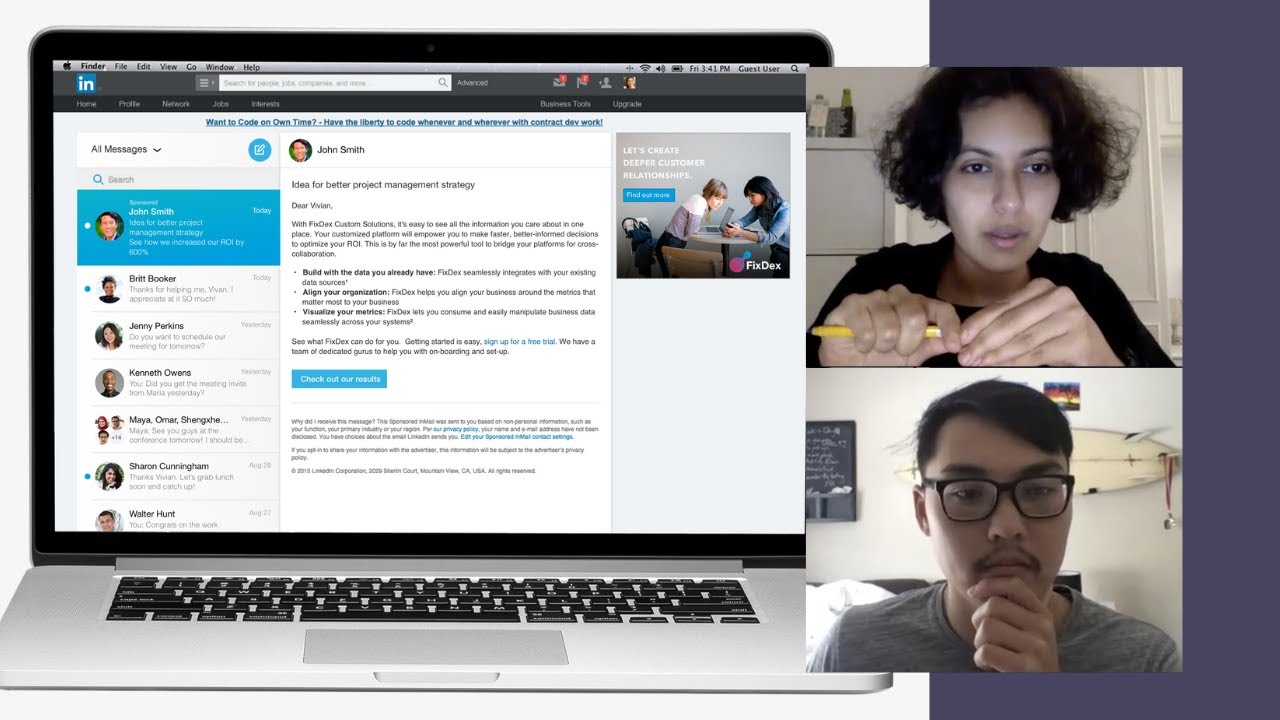

7) LinkedIn

LinkedIn is the largest professional social networking site with nearly 800 million members in more than 200 countries worldwide. Almost 40% of the users access LinkedIn daily, clocking around 1 billion interactions per month. The data science team at LinkedIn works with this massive pool of data to generate insights to build strategies, apply algorithms and statistical inferences to optimize engineering solutions, and help the company achieve its goals. Here are some of the real world data science projects at LinkedIn:

i) LinkedIn Recruiter Implements Search Algorithms and Recommendation Systems

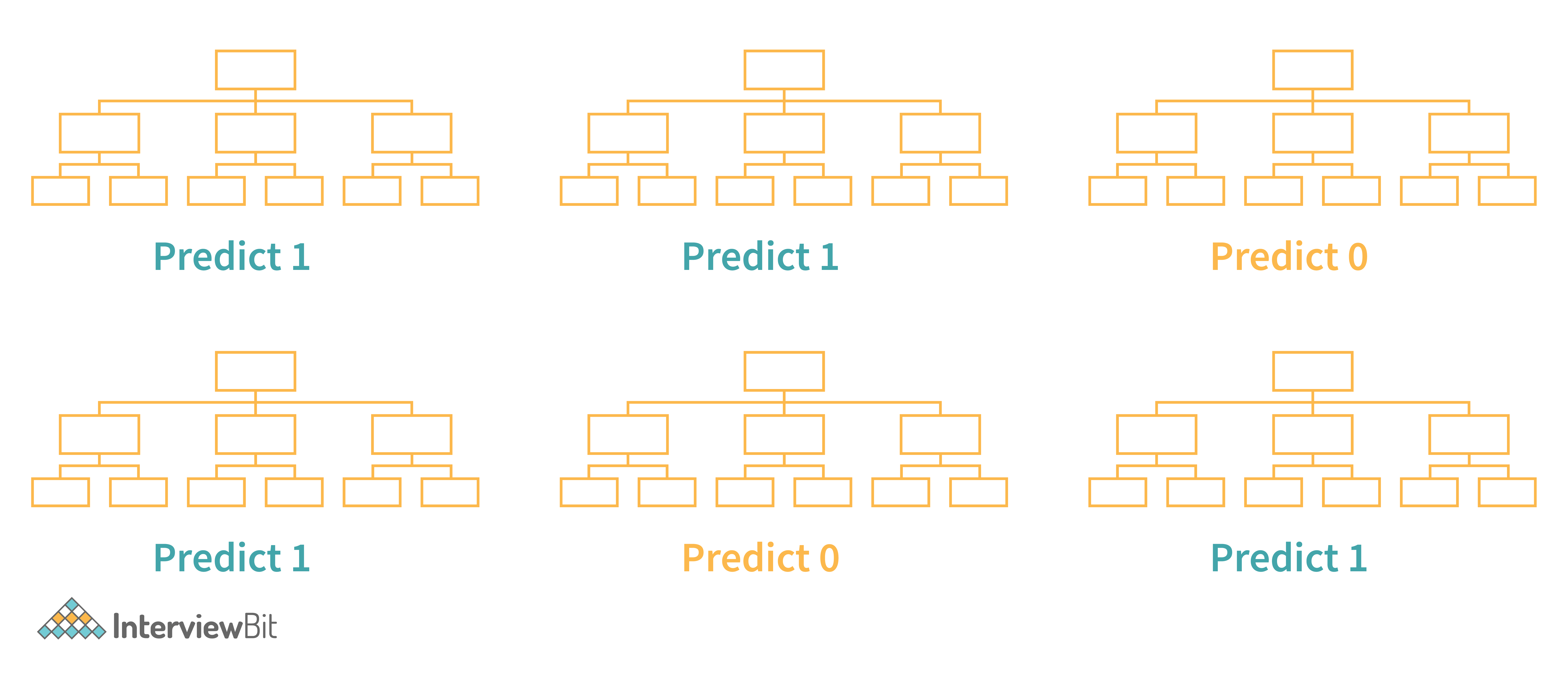

LinkedIn Recruiter helps recruiters build and manage a talent pool to optimize the chances of hiring candidates successfully. This sophisticated product works on search and recommendation engines. LinkedIn Recruiter handles complex queries and filters on a large, constantly growing dataset, and the results delivered have to be relevant and specific. The initial search model was based on linear regression but was eventually upgraded to gradient boosted decision trees to capture non-linear correlations in the dataset. In addition to these models, LinkedIn Recruiter also uses the Generalized Linear Mixed (GLMix) model to improve the results of prediction problems and give personalized results.

ii) Recommendation Systems Personalized for News Feed

The LinkedIn news feed is the heart and soul of the professional community. A member's newsfeed is a place to discover conversations among connections, career news, posts, suggestions, photos, and videos. Every time a member visits LinkedIn, machine learning algorithms identify the best exchanges to be displayed on the feed by sorting through posts and ranking the most relevant results on top. The algorithms help LinkedIn understand member preferences and help provide personalized news feeds. The algorithms used include logistic regression, gradient boosted decision trees and neural networks for recommendation systems.

iii) CNNs to Detect Inappropriate Content

Providing a professional space where people can trust and express themselves professionally in a safe community has been a critical goal at LinkedIn. LinkedIn has invested heavily in building solutions to detect fake accounts and abusive behavior on its platform. Any form of spam, harassment, or inappropriate content is immediately flagged and taken down. This content can range from profanity to advertisements for illegal services. LinkedIn uses a machine learning model based on convolutional neural networks. This classifier trains on a dataset containing accounts labeled as either "inappropriate" or "appropriate." The inappropriate list consists of accounts with content containing "blocklisted" phrases or words and a small portion of manually reviewed accounts reported by the user community.

Here is a Text Classification Project to help you understand NLP basics for text classification. You can find a news recommendation system dataset to help you build a personalized news recommender system. You can also use this dataset to build a classifier using logistic regression, Naive Bayes, or Neural networks to classify toxic comments.
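As a starting point for the toxic comment classifier mentioned above, here is a minimal multinomial Naive Bayes sketch written from scratch with Laplace smoothing. The six training sentences and the `NaiveBayesText` class are hypothetical and far too small for real use; they only show the mechanics, and this is not the CNN-based model LinkedIn is described as using.

```python
from collections import Counter, defaultdict
from math import log


class NaiveBayesText:
    """Multinomial Naive Bayes over whitespace tokens with add-one smoothing."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(text.lower().split())
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        total_docs = sum(self.class_counts.values())
        best_label, best_score = None, float("-inf")
        for label, doc_count in self.class_counts.items():
            score = log(doc_count / total_docs)  # log prior
            total_words = sum(self.word_counts[label].values())
            for word in text.lower().split():
                count = self.word_counts[label][word]
                # add-one smoothing keeps unseen words from zeroing the score
                score += log((count + 1) / (total_words + len(self.vocab)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Tiny hypothetical training set
texts = [
    "you are an idiot",
    "what a stupid idea",
    "shut up idiot",
    "thanks for the helpful post",
    "great insight thank you",
    "congratulations on the new role",
]
labels = ["toxic", "toxic", "toxic", "ok", "ok", "ok"]

model = NaiveBayesText().fit(texts, labels)
print(model.predict("stupid idiot"))               # toxic
print(model.predict("thanks for the great post"))  # ok
```

With a real dataset you would add proper tokenization, hold out a test split, and compare this baseline against logistic regression or a neural network.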


8) Pfizer

Pfizer is a multinational pharmaceutical company headquartered in New York, USA. It is one of the largest pharmaceutical companies globally, known for developing a wide range of medicines and vaccines in disciplines like immunology, oncology, cardiology, and neurology. Pfizer became a household name in 2020 when it was the first to have a COVID-19 vaccine authorized by the FDA. In early November 2021, the CDC approved the Pfizer vaccine for children aged 5 to 11. Pfizer has been using machine learning and artificial intelligence to develop drugs and streamline trials, which played a massive role in developing and deploying the COVID-19 vaccine. Here are a few data analytics case studies by Pfizer:

i) Identifying Patients for Clinical Trials

Artificial intelligence and machine learning are used to streamline and optimize clinical trials and increase their efficiency. Natural language processing and exploratory data analysis of patient records can help identify suitable patients for clinical trials, including patients with distinct symptoms. These techniques can also help examine interactions between potential trial members' specific biomarkers and predict drug interactions and side effects, which helps avoid complications. Pfizer's AI implementation helped rapidly identify signals within the noise of millions of data points across its 44,000-candidate COVID-19 clinical trial.

ii) Supply Chain and Manufacturing

Data science and machine learning techniques help pharmaceutical companies better forecast demand for vaccines and drugs and distribute them efficiently. Machine learning models can help identify efficient supply systems by automating and optimizing the production steps. These models will help supply drugs customized to small pools of patients in specific gene pools. Pfizer uses machine learning to predict the maintenance cost of the equipment it uses. Predictive maintenance using AI is the next big step for pharmaceutical companies to reduce costs.

iii) Drug Development

Computer simulations of proteins, tests of their interactions, and yield analysis help researchers develop and test drugs more efficiently. In 2016, Watson Health and Pfizer announced a collaboration to use IBM Watson for Drug Discovery to help accelerate Pfizer's research in immuno-oncology, an approach to cancer treatment that uses the body's immune system to help fight cancer. Deep learning models have recently been used for bioactivity and synthesis prediction for drugs and vaccines, in addition to molecular design. Deep learning has been a revolutionary technique for drug discovery, as it factors in everything from new applications of medications to possible toxic reactions, which can save millions in drug trials.

You can create a machine learning model to predict molecular activity to help design medicine using this dataset. You may build a CNN or a deep neural network for this data analyst case study project.


9) Shell Data Analyst Case Study Project

Shell is a global group of energy and petrochemical companies with over 80,000 employees in around 70 countries. Shell uses advanced technologies and innovations to help build a sustainable energy future. Shell is going through a significant transition, as the world needs more and cleaner energy solutions, and it aims to be a clean energy company by 2050. This requires substantial changes in the way energy is used. Digital technologies, including AI and machine learning, play an essential role in this transformation. They enable efficient exploration and energy production, more reliable manufacturing, more nimble trading, and a personalized customer experience. Using AI in various phases of the organization will help Shell achieve this goal and stay competitive in the market. Here are a few data analytics case studies in the petrochemical industry:

i) Precision Drilling

Shell is involved across the oil and gas supply chain, from mining hydrocarbons to refining fuel to retailing it to customers. Recently, Shell has adopted reinforcement learning to control the drilling equipment used in mining. Reinforcement learning works on a reward-based system based on the outcome of the AI model. The algorithm is designed to guide the drills as they move through the subsurface, based on historical data from drilling records. This data includes information such as the size of drill bits, temperatures, pressures, and knowledge of seismic activity. The model helps the human operator understand the environment better, leading to better and faster results with minimal damage to the machinery used.

ii) Efficient Charging Terminals

In response to climate change, governments have encouraged people to switch to electric vehicles to reduce carbon dioxide emissions. However, the lack of public charging terminals has deterred people from switching to electric cars. Shell uses AI to monitor and predict the demand for terminals to provide an efficient supply. Multiple vehicles charging from a single terminal may create a considerable grid load, and demand predictions can help make this process more efficient.

iii) Monitoring Service and Charging Stations

Another Shell initiative, trialed in Thailand and Singapore, is the use of computer vision cameras that watch for potentially hazardous activities, like lighting cigarettes in the vicinity of the pumps while refueling. The model is built to process the content of the captured images and then label and classify it. The algorithm can then alert the staff and hence reduce the risk of fires. The model can be trained further to detect rash driving or thefts in the future.

Here is a project to help you understand multiclass image classification. You can use the Hourly Energy Consumption Dataset to build an energy consumption prediction model. You can use time series with XGBoost to develop your model.
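Tree-based models such as XGBoost do not understand time order on their own, so a common first step is to turn the series into a table of lagged values. The sketch below shows that feature-building step only, using a synthetic hourly series in place of the real dataset; the `make_lag_features` helper and the chosen lags are illustrative assumptions.

```python
def make_lag_features(series, lags=(1, 2, 24)):
    """Turn a univariate series into (features, targets) rows of lagged values."""
    max_lag = max(lags)
    features, targets = [], []
    for t in range(max_lag, len(series)):
        features.append([series[t - lag] for lag in lags])  # past observations
        targets.append(series[t])                           # value to predict
    return features, targets


# Synthetic stand-in for hourly energy consumption with a daily cycle
hourly_load = [float(100 + (hour % 24)) for hour in range(72)]

X, y = make_lag_features(hourly_load)
print(len(X), X[0], y[0])  # 48 [123.0, 122.0, 100.0] 100.0
```

The resulting `X` and `y` can then be passed to a gradient-boosted regressor. When you evaluate the model, split the rows chronologically rather than at random so that the model is never trained on data from the future.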

10) Zomato Case Study on Data Analytics

Zomato was founded in 2010 and is currently one of the most well-known food tech companies. Zomato offers services like restaurant discovery, home delivery, online table reservation, and online payments for dining. Zomato partners with restaurants to provide tools to acquire more customers while also providing delivery services and easy procurement of ingredients and kitchen supplies. Currently, Zomato has over 2 lakh (200,000) restaurant partners and around 1 lakh (100,000) delivery partners. Zomato has completed over ten crore (100 million) delivery orders to date. Zomato uses ML and AI to boost its business growth, drawing on the massive amount of data collected over the years from food orders and user consumption patterns. Here are a few examples of data analyst case study projects developed by the data scientists at Zomato:

i) Personalized Recommendation System for Homepage

Zomato uses data analytics to create personalized homepages for its users. Zomato uses data science to provide order personalization, like giving recommendations to the customers for specific cuisines, locations, prices, brands, etc. Restaurant recommendations are made based on a customer's past purchases, browsing history, and what other similar customers in the vicinity are ordering. This personalized recommendation system has led to a 15% improvement in order conversions and click-through rates for Zomato.

You can use the Restaurant Recommendation Dataset to build a restaurant recommendation system to predict what restaurants customers are most likely to order from, given the customer location, restaurant information, and customer order history.

ii) Analyzing Customer Sentiment

Zomato uses natural language processing and machine learning to understand customer sentiment from social media posts and customer reviews. These help the company gauge the inclination of its customer base towards the brand. Deep learning models analyze the sentiment of brand mentions on social networking sites like Twitter, Instagram, LinkedIn, and Facebook. These analytics give the company insights that help it build the brand and understand the target audience.

iii) Predicting Food Preparation Time (FPT)

Food preparation time is an essential variable in the estimated delivery time of an order placed by a customer on Zomato. The food preparation time depends on numerous factors, like the number of dishes ordered, the time of day, footfall in the restaurant, and the day of the week. Accurate prediction of the food preparation time leads to a better estimate of the delivery time, which makes delivery partners less likely to breach it. Zomato uses a bidirectional LSTM-based deep learning model that considers all these features and predicts the food preparation time for each order in real time.

Data scientists are companies' secret weapons when analyzing customer sentiments and behavior and leveraging it to drive conversion, loyalty, and profits. These 10 data science case studies projects with examples and solutions show you how various organizations use data science technologies to succeed and be at the top of their field! To summarize, Data Science has not only accelerated the performance of companies but has also made it possible to manage & sustain their performance with ease.

FAQs on Data Analysis Case Studies

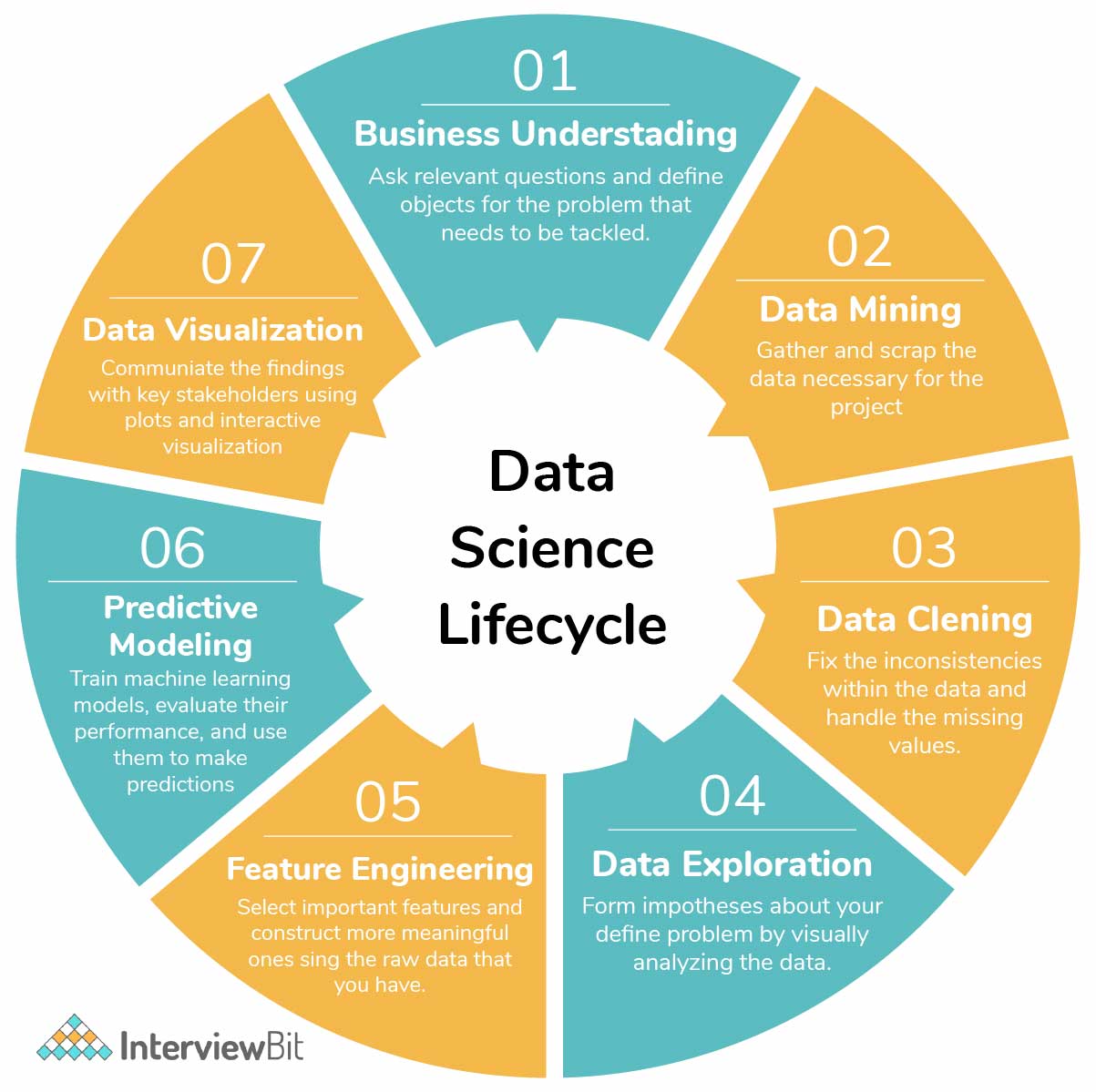

A case study in data science is an in-depth analysis of a real-world problem using data-driven approaches. It involves collecting, cleaning, and analyzing data to extract insights and solve challenges, offering practical insights into how data science techniques can address complex issues across various industries.

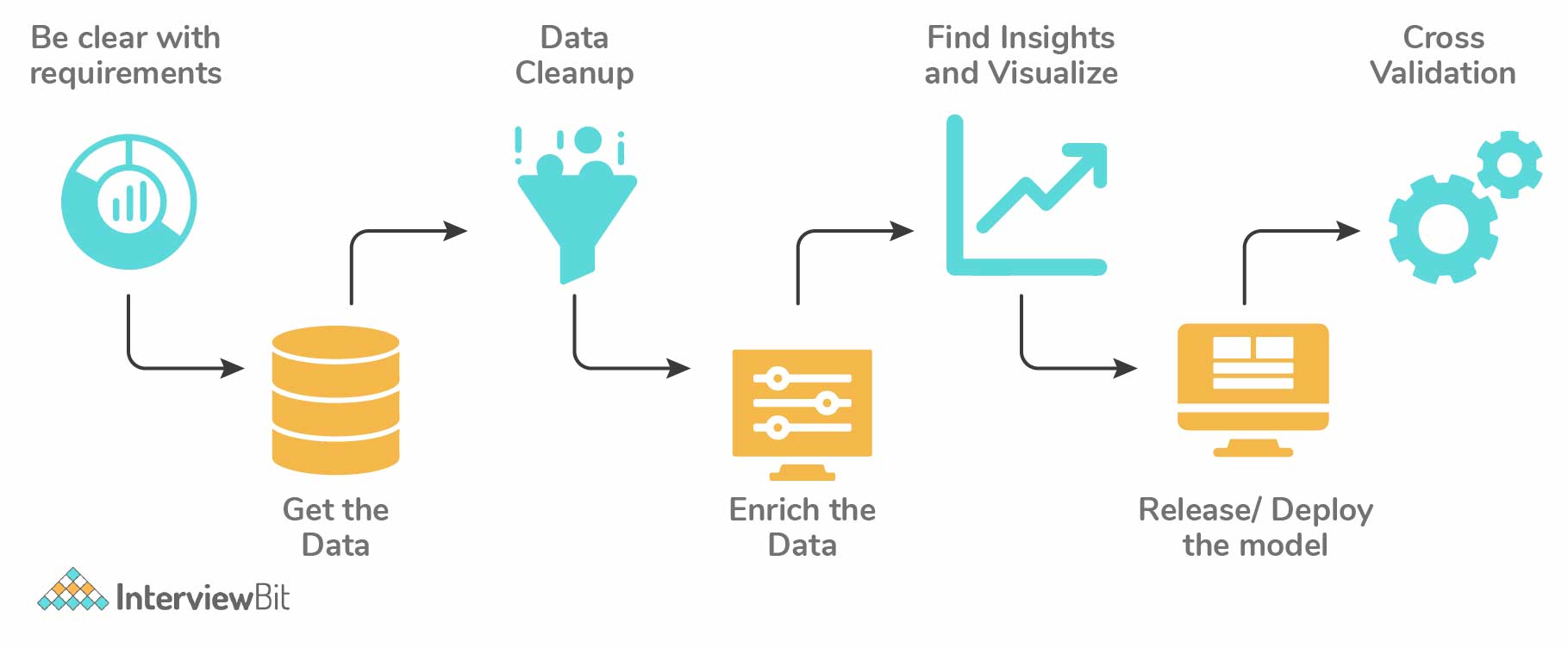

To create a data science case study, identify a relevant problem, define objectives, and gather suitable data. Clean and preprocess data, perform exploratory data analysis, and apply appropriate algorithms for analysis. Summarize findings, visualize results, and provide actionable recommendations, showcasing the problem-solving potential of data science techniques.

About the Author

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,

© 2024 Iconiq Inc.

Top 10 real-world data science case studies.

Aditya Sharma

Aditya is a content writer with 5+ years of experience writing for various industries including Marketing, SaaS, B2B, IT, and Edtech among others. You can find him watching anime or playing games when he’s not writing.

Frequently Asked Questions

Real-world data science case studies differ significantly from academic examples. While academic exercises often feature clean, well-structured data and simplified scenarios, real-world projects tackle messy, diverse data sources with practical constraints and genuine business objectives. These case studies reflect the complexities data scientists face when translating data into actionable insights in the corporate world.

Real-world data science projects come with common challenges. Data quality issues, including missing or inaccurate data, can hinder analysis. Domain expertise gaps may result in misinterpretation of results. Resource constraints might limit project scope or access to necessary tools and talent. Ethical considerations, like privacy and bias, demand careful handling.

Lastly, as data and business needs evolve, data science projects must adapt and stay relevant, posing an ongoing challenge.

Real-world data science case studies play a crucial role in helping companies make informed decisions. By analyzing their own data, businesses gain valuable insights into customer behavior, market trends, and operational efficiencies.

These insights empower data-driven strategies, aiding in more effective resource allocation, product development, and marketing efforts. Ultimately, case studies bridge the gap between data science and business decision-making, enhancing a company's ability to thrive in a competitive landscape.

Key takeaways from these case studies for organizations include the importance of cultivating a data-driven culture that values evidence-based decision-making. Investing in robust data infrastructure is essential to support data initiatives. Collaborating closely between data scientists and domain experts ensures that insights align with business goals.

Finally, continuous monitoring and refinement of data solutions are critical for maintaining relevance and effectiveness in a dynamic business environment. Embracing these principles can lead to tangible benefits and sustainable success in real-world data science endeavors.

Data science is a powerful driver of innovation and problem-solving across diverse industries. By harnessing data, organizations can uncover hidden patterns, automate repetitive tasks, optimize operations, and make informed decisions.

In healthcare, for example, data-driven diagnostics and treatment plans improve patient outcomes. In finance, predictive analytics enhances risk management. In transportation, route optimization reduces costs and emissions. Data science empowers industries to innovate and solve complex challenges in ways that were previously unimaginable.

2024 Guide: 20+ Essential Data Science Case Study Interview Questions

Case studies are often the most challenging aspect of data science interview processes. They are crafted to resemble a company’s existing or previous projects, assessing a candidate’s ability to tackle prompts, convey their insights, and navigate obstacles.

To excel in data science case study interviews, practice is crucial. It will enable you to develop strategies for approaching case studies, asking the right questions to your interviewer, and providing responses that showcase your skills while adhering to time constraints.

The best way of doing this is by using a framework for answering case studies. For example, you could use the product metrics framework and the A/B testing framework to answer most case studies that come up in data science interviews.

There are four main types of data science case studies:

- Product Case Studies - This type of case study tackles a specific product or feature offering, often tied to the interviewing company. Interviewers are generally looking for business sense geared towards product metrics.

- Data Analytics Case Study Questions - Data analytics case studies ask you to propose possible metrics in order to investigate an analytics problem. Additionally, you must write a SQL query to pull your proposed metrics, and then perform analysis using the data you queried, just as you would do in the role.

- Modeling and Machine Learning Case Studies - Modeling case studies are more varied and focus on assessing your intuition for building models around business problems.

- Business Case Questions - Similar to product questions, business cases tackle issues or opportunities specific to the organization that is interviewing you. Often, candidates must assess the best option for a certain business plan being proposed, and formulate a process for solving the specific problem.

How Case Study Interviews Are Conducted

Oftentimes as an interviewee, you want to know the setting and format in which to expect the above questions to be asked. Unfortunately, this is company-specific: Some prefer real-time settings, where candidates actively work through a prompt after receiving it, while others offer some period of days (say, a week) before settling in for a presentation of your findings.

It is therefore important to have a system for answering these questions that will accommodate all possible formats, such that you are prepared for any set of circumstances (we provide such a framework below).

Why Are Case Study Questions Asked?

Case studies assess your thought process in answering data science questions. Specifically, interviewers want to see that you have the ability to think on your feet, and to work through real-world problems that likely do not have a right or wrong answer. Real-world case studies that are affecting businesses are not binary; there is no black-and-white, yes-or-no answer. This is why it is important that you can demonstrate decisiveness in your investigations, as well as show your capacity to consider impacts and topics from a variety of angles. Once you are in the role, you will be dealing directly with the ambiguity at the heart of decision-making.

Perhaps most importantly, case interviews assess your ability to effectively communicate your conclusions. On the job, data scientists exchange information across teams and divisions, so a significant part of the interviewer’s focus will be on how you process and explain your answer.

Quick tip: Because case questions in data science interviews tend to be product- and company-focused, it is extremely beneficial to research current projects and developments across different divisions, as these initiatives might end up as the case study topic.

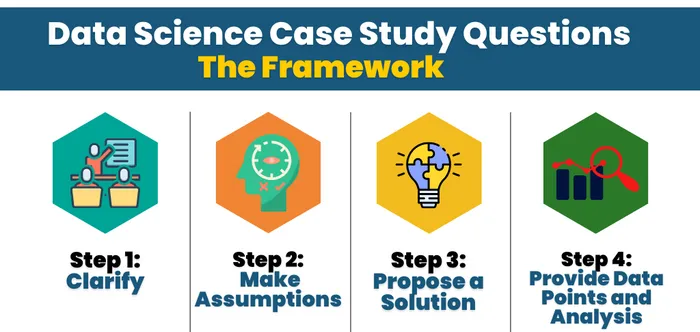

How to Answer Data Science Case Study Questions (The Framework)

There are four main steps to tackling case questions in data science interviews, regardless of the type: clarify, make assumptions, propose a solution, and provide data points and analysis.

Step 1: Clarify

Clarifying is used to gather more information. More often than not, these case studies are designed to be confusing and vague. There will be unorganized data intentionally supplemented with extraneous or omitted information, so it is the candidate’s responsibility to dig deeper, filter out bad information, and fill gaps. Interviewers will be observing how an applicant asks questions and reaches their solution.

For example, with a product question, you might take into consideration:

- What is the product?

- How does the product work?

- How does the product align with the business itself?

Step 2: Make Assumptions

When you have made sure that you have evaluated and understood the dataset, start investigating and discarding possible hypotheses. Developing insights on the product at this stage complements your ability to glean information from the dataset, and the exploration of your ideas is paramount to forming a successful hypothesis. You should be communicating your hypotheses to the interviewer, so that they can provide clarifying remarks on how the business views the product and help you discard unworkable lines of inquiry. If we continue to think about a product question, some important questions to evaluate and draw conclusions from include:

- Who uses the product? Why?

- What are the goals of the product?

- How does the product interact with other services or goods the company offers?

The goal of this is to reduce the scope of the problem at hand, and ask the interviewer questions upfront that allow you to tackle the meat of the problem instead of focusing on less consequential edge cases.

Step 3: Propose a Solution

Now that you have formed a hypothesis that incorporates the dataset and an understanding of the business context, it is time to apply that knowledge in forming a solution. Remember, the hypothesis is simply a refined version of the problem that uses the data on hand as its basis for being solved. The solution you create can target this narrow problem, and you can have full faith that it is addressing the core of the case study question.

Keep in mind that there isn’t a single expected solution, and as such, there is a certain freedom here to determine the exact path for investigation.

Step 4: Provide Data Points and Analysis

Finally, providing data points and analysis in support of your solution involves choosing and prioritizing a main metric. As with all prior steps, this step must be tied back to the hypothesis and the main goal of the problem. From that foundation, it is important to trace through and analyze different examples using the main metric in order to validate the hypothesis.

Quick tip: Every case question tends to have multiple solutions. Therefore, you should absolutely consider and communicate any potential trade-offs of your chosen method. Be sure you are communicating the pros and cons of your approach.

Note: In some special cases, solutions will also be assessed on the ability to convey information in layman’s terms. Regardless of the structure, applicants should always be prepared to solve through the framework outlined above in order to answer the prompt.

The Role of Effective Communication

Interviewers who run the data science case study round have discussed it in multiple articles and talks, and they all boil success in case studies down to one main factor: effective communication.

All the analysis in the world will not help if interviewees cannot verbally work through and highlight their thought process within the case study. Again, interviewers are keyed at this stage of the hiring process to look for well-developed “soft-skills” and problem-solving capabilities. Demonstrating those traits is key to succeeding in this round.

To this end, the best advice possible would be to practice actively going through example case studies, such as those available in the Interview Query questions bank. Exploring different topics with a friend in an interview-like setting with cold recall (no Googling in between!) will be uncomfortable and awkward, but it will also help reveal weaknesses in fleshing out the investigation.

Don’t worry if the first few times are terrible! Developing a rhythm will help with gaining self-confidence as you become better at assessing and learning through these sessions.

Product Case Study Questions

With product data science case questions, the interviewer wants to get an idea of your product sense. Specifically, these questions assess your ability to identify which metrics should be proposed in order to understand a product.

1. How would you measure the success of private stories on Instagram, where only certain close friends can see the story?

Start by answering: What is the goal of the private story feature on Instagram? You can’t evaluate “success” without knowing what the initial objective of the product was, to begin with.

One specific goal of this feature would be to drive engagement. A private story could potentially increase interactions between users, and grow awareness of the feature.

Now, what types of metrics might you propose to assess user engagement? For a high-level overview, we could look at:

- Average stories per user per day

- Average Close Friends stories per user per day

However, we would also want to further bucket our users to see the effect that Close Friends stories have on user engagement. By bucketing users by age, date joined, or another metric, we could see how engagement is affected within certain populations, giving us insight on success that could be lost if looking at the overall population.
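The bucketing idea above can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical per-user daily log; the field names (`age_bucket`, `stories`, `close_friends_stories`) and all values are made up for the example and do not describe Instagram's real data.

```python
from collections import defaultdict

# Hypothetical per-user daily aggregates; names and values are illustrative only.
rows = [
    {"user": "a", "age_bucket": "18-24", "stories": 4, "close_friends_stories": 2},
    {"user": "b", "age_bucket": "18-24", "stories": 2, "close_friends_stories": 0},
    {"user": "c", "age_bucket": "25-34", "stories": 1, "close_friends_stories": 1},
    {"user": "d", "age_bucket": "25-34", "stories": 3, "close_friends_stories": 0},
]

def avg_by_bucket(rows, metric):
    """Average a per-user metric within each age bucket."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for r in rows:
        totals[r["age_bucket"]] += r[metric]
        counts[r["age_bucket"]] += 1
    return {bucket: totals[bucket] / counts[bucket] for bucket in totals}

stories_avg = avg_by_bucket(rows, "stories")
close_friends_avg = avg_by_bucket(rows, "close_friends_stories")
```

Comparing `stories_avg` with `close_friends_avg` per bucket shows whether the feature is used differently across populations, which an overall average would hide.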

2. How would you measure the success of acquiring new users through a 30-day free trial at Netflix?

More context: Netflix is offering a promotion where users can enroll in a 30-day free trial. After 30 days, customers will automatically be charged based on their selected package. How would you measure acquisition success, and what metrics would you propose to measure the success of the free trial?

One way we can frame the concept specifically to this problem is to think about controllable inputs, external drivers, and then the observable output. Start with the major goals of Netflix:

- Acquiring new users to their subscription plan.

- Decreasing churn and increasing retention.

Looking at acquisition output metrics specifically, there are several top-level stats that we can look at, including:

- Conversion rate percentage

- Cost per free trial acquisition

- Daily conversion rate

With these conversion metrics, we would also want to bucket users by cohort. This would help us see the percentage of free users who were acquired, as well as retention by cohort.
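As a rough sketch of the cohort view, the snippet below computes a trial-to-paid conversion rate per signup month and a cost per free trial started. The records, the monthly cohort definition, and the spend figure are all hypothetical.

```python
from collections import defaultdict

# Hypothetical trial records: (signup_month, converted_to_paid). Illustrative only.
trials = [
    ("2024-01", True), ("2024-01", False), ("2024-01", True), ("2024-01", False),
    ("2024-02", True), ("2024-02", False), ("2024-02", False), ("2024-02", False),
]

def conversion_by_cohort(trials):
    """Share of free trials in each signup cohort that converted to paid."""
    started = defaultdict(int)
    converted = defaultdict(int)
    for cohort, did_convert in trials:
        started[cohort] += 1
        converted[cohort] += int(did_convert)
    return {cohort: converted[cohort] / started[cohort] for cohort in started}

def cost_per_trial(total_spend, trials_started):
    """Acquisition spend divided by the number of free trials started."""
    return total_spend / trials_started

rates = conversion_by_cohort(trials)
cost = cost_per_trial(300.0, len(trials))  # assumed spend of 300 across 8 trials
```

Tracking `rates` by cohort over time makes it easier to tell a genuine change in conversion from a shift in the mix of users who sign up.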

3. How would you measure the success of Facebook Groups?

Start by considering the key function of Facebook Groups. You could say that Groups are a way for users to connect with other users through a shared interest or real-life relationship. Therefore, the user’s goal is to experience a sense of community, which will also drive our business goal of increasing user engagement.

What general engagement metrics can we associate with this value? An objective metric like Groups monthly active users would help us see if Facebook Groups user base is increasing or decreasing. Plus, we could monitor metrics like posting, commenting, and sharing rates.

There are other products that Groups impact, however, specifically the Newsfeed. We need to consider Newsfeed quality and examine if updates from Groups clog up the content pipeline and if users prioritize those updates over other Newsfeed items. This evaluation will give us a better sense of if Groups actually contribute to higher engagement levels.

4. How would you analyze the effectiveness of a new LinkedIn chat feature that shows a “green dot” for active users?

Note: Given engineering constraints, the new feature is impossible to A/B test before release. When you approach case study questions, remember always to clarify any vague terms. In this case, “effectiveness” is very vague. To help you define that term, you would want first to consider what the goal is of adding a green dot to LinkedIn chat.

5. How would you diagnose why weekly active users are up 5%, but email notification open rates are down 2%?

What assumptions can you make about the relationship between weekly active users and email open rates? With a case question like this, you would want to first answer that line of inquiry before proceeding.

Hint: Open rate can decrease when its numerator decreases (fewer people open emails) or its denominator increases (more emails are sent overall). Taking these two factors into account, what are some hypotheses we can make about our decrease in the open rate compared to our increase in weekly active users?
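A small worked example of the hint, using made-up counts: the open rate falls either when fewer emails are opened or when more emails are sent.

```python
def open_rate(emails_opened, emails_sent):
    """Open rate = emails opened / emails sent."""
    return emails_opened / emails_sent

# Hypothetical counts, for illustration only.
baseline = open_rate(200, 1000)      # reference week
fewer_opens = open_rate(180, 1000)   # numerator drops, sends unchanged
more_sends = open_rate(200, 1250)    # denominator grows, opens unchanged
```

Both scenarios lower the rate, but only the second is compatible with the same number of people opening emails. New weekly active users who receive more notifications would be one hypothesis that fits it.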

Data Analytics Case Study Questions

Data analytics case studies ask you to dive into analytics problems. Typically these questions ask you to examine metrics trade-offs or investigate changes in metrics. In addition to proposing metrics, you also have to write SQL queries to generate the metrics, which is why they are sometimes referred to as SQL case study questions.

6. Using the provided data, generate some specific recommendations on how DoorDash can improve.

In this DoorDash analytics case study take-home question you are provided with the following dataset:

- Customer order time

- Restaurant order time

- Driver arrives at restaurant time

- Order delivered time

- Customer ID

- Amount of discount

- Amount of tip

With a dataset like this, there are numerous recommendations you can make. A good place to start is by thinking about the DoorDash marketplace, which includes drivers, customers, and merchants. How could you analyze the data to increase revenue, driver/user retention, and engagement in that marketplace?

7. After implementing a notification change, the total number of unsubscribes increases. Write a SQL query to show how unsubscribes are affecting login rates over time.

This is a Twitter data science interview question. Say you implemented this new feature using an A/B test. You are provided with two tables: events (which includes login, nologin, and unsubscribe) and variants (which includes control or variant).

We are tasked with comparing multiple different variables at play here. There is the new notification system, along with its effect of creating more unsubscribes. We can also see how login rates compare for unsubscribes for each bucket of the A/B test.

Given that we want to measure two different changes, we know we have to use GROUP BY for the two variables: date and bucket variant. What comes next?
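One possible shape for such a query is sketched below, run against an in-memory SQLite database so it can be tried locally. The column names (`user_id`, `created_at`, `action`, `variant`) and the sample rows are assumptions; the question only tells us the two table names and the values they contain.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE events (user_id INTEGER, created_at TEXT, action TEXT);
CREATE TABLE variants (user_id INTEGER, variant TEXT);
INSERT INTO variants VALUES (1, 'control'), (2, 'control'), (3, 'variant'), (4, 'variant');
INSERT INTO events VALUES
  (1, '2024-01-01', 'login'), (2, '2024-01-01', 'nologin'),
  (3, '2024-01-01', 'login'), (4, '2024-01-01', 'unsubscribe'),
  (4, '2024-01-01', 'nologin'),
  (1, '2024-01-02', 'login'), (2, '2024-01-02', 'login'),
  (3, '2024-01-02', 'nologin'), (4, '2024-01-02', 'nologin');
""")

# Login rate and unsubscribe count per day and per A/B bucket.
query = """
SELECT e.created_at AS day,
       v.variant,
       SUM(e.action = 'login') * 1.0
         / SUM(e.action IN ('login', 'nologin')) AS login_rate,
       SUM(e.action = 'unsubscribe') AS unsubscribes
FROM events e
JOIN variants v ON v.user_id = e.user_id
GROUP BY e.created_at, v.variant
ORDER BY e.created_at, v.variant
"""
rows = conn.execute(query).fetchall()
```

Each output row is one (day, bucket) pair, so login rates can be plotted over time for control and variant side by side with the unsubscribe counts.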

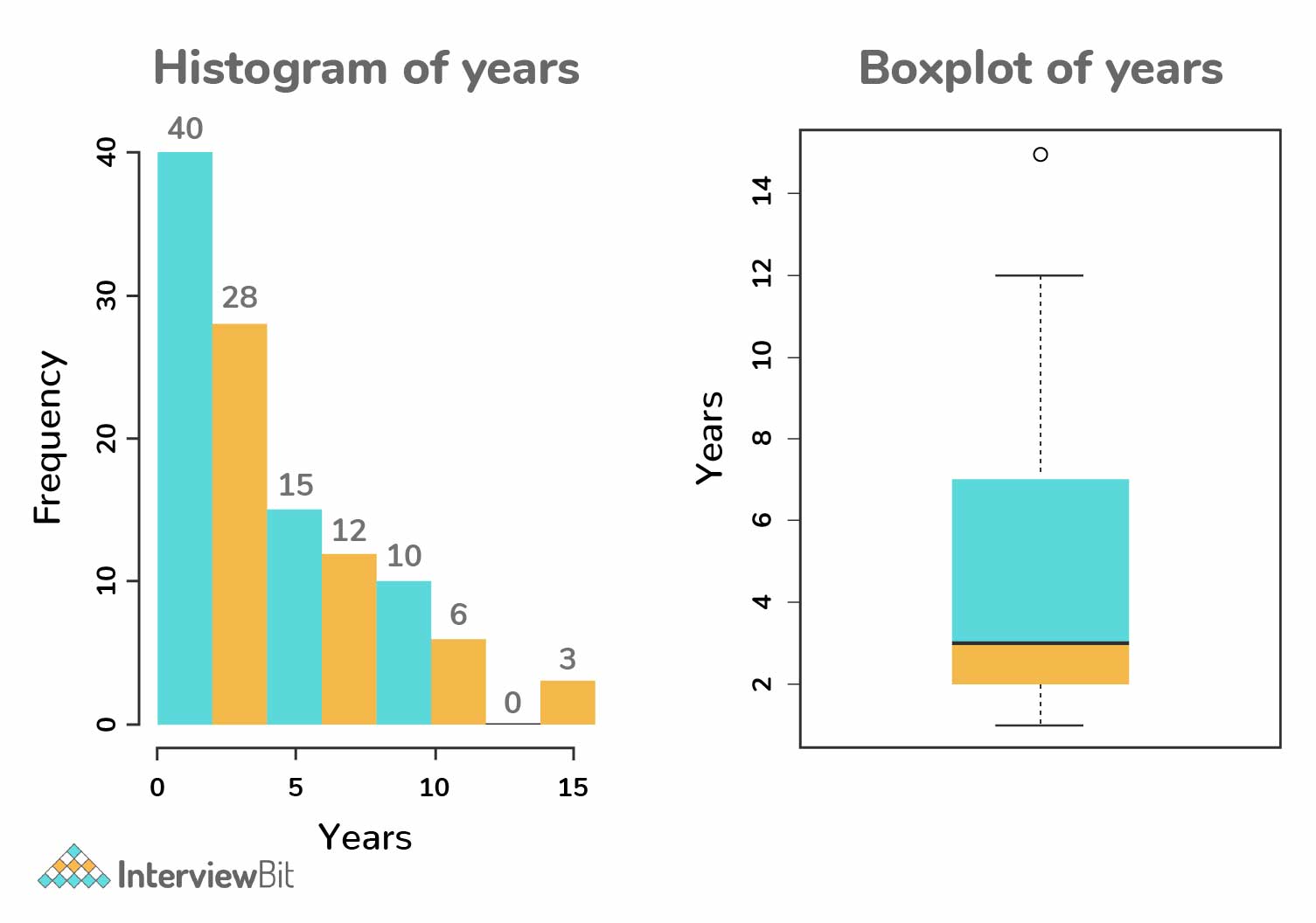

8. Write a query to disprove the hypothesis: Data scientists who switch jobs more often end up getting promoted faster.

More context: You are provided with a table of user experiences representing each person’s past work experiences and timelines.

This question requires a bit of creative problem-solving to understand how we can prove or disprove the hypothesis. The hypothesis is that a data scientist who switches jobs more often gets promoted faster.

Therefore, in analyzing this dataset, we can test this hypothesis by separating the data scientists into segments based on how often they have switched jobs in their careers.

For example, if we looked at the number of job switches for data scientists who have been in their field for five years, the hypothesis would be supported if the share of data science managers increased as the number of career jumps rose, as in this illustrative breakdown:

- Never switched jobs: 10% are managers

- Switched jobs once: 20% are managers

- Switched jobs twice: 30% are managers

- Switched jobs three times: 40% are managers
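The segmentation can be sketched as follows, assuming the experience table has already been reduced to one hypothetical record per data scientist with five years in the field: the number of job switches and whether they currently hold a manager title. The data is invented and deliberately shows a flat trend, which is the pattern that would count against the hypothesis.

```python
from collections import defaultdict

# Hypothetical records: (number_of_job_switches, is_manager). Illustrative only.
people = [
    (0, False), (0, False), (0, True), (0, False),
    (1, True), (1, False), (1, False), (1, False),
    (2, False), (2, True), (2, False), (2, False),
]

def manager_share_by_switches(people):
    """Share of managers within each job-switch segment."""
    total = defaultdict(int)
    managers = defaultdict(int)
    for switches, is_manager in people:
        total[switches] += 1
        managers[switches] += int(is_manager)
    return {s: managers[s] / total[s] for s in sorted(total)}

shares = manager_share_by_switches(people)
```

If the shares rise with the number of switches, the data is consistent with the hypothesis; if they stay flat or fall, as in this toy data, it is not.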

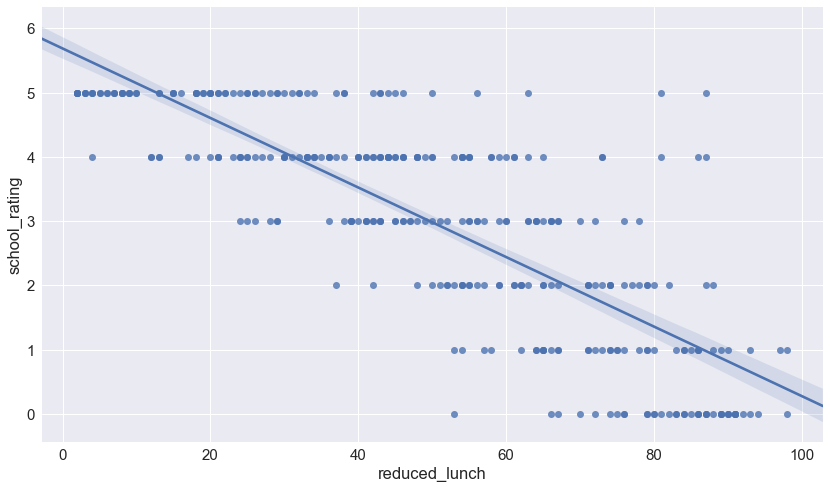

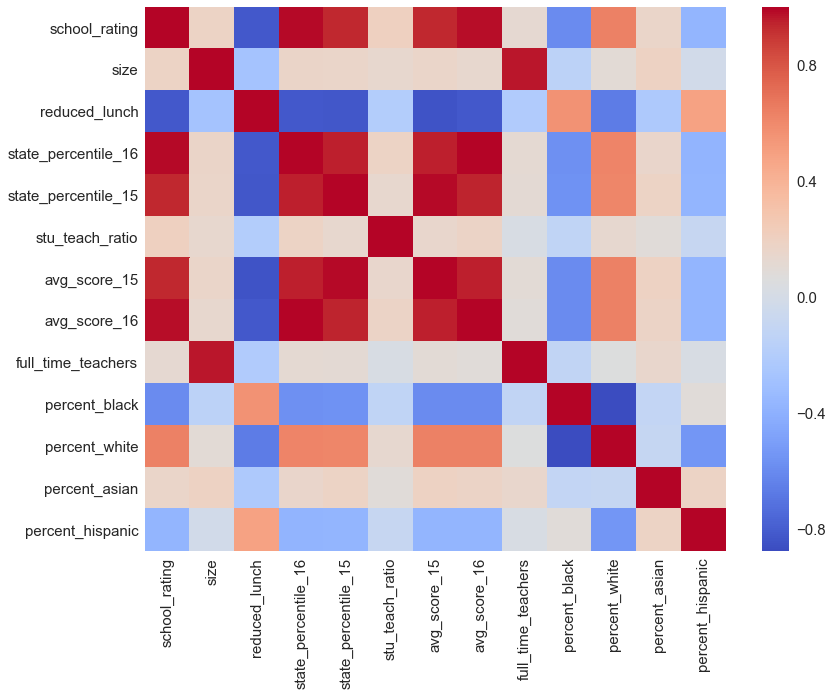

9. Write a SQL query to investigate the hypothesis: Click-through rate is dependent on search result rating.

More context: You are given a table with search results on Facebook, which includes query (search term), position (the search position), and rating (human rating from 1 to 5). Each row represents a single search and includes a column has_clicked that represents whether a user clicked or not.

This question requires us to formulaically do two things: create a metric that can analyze a problem that we face and then actually compute that metric.

Think about the data we want to display to prove or disprove the hypothesis. Our output metric is CTR (clickthrough rate). If CTR is high when search result ratings are high and CTR is low when the search result ratings are low, then our hypothesis is proven. However, if the opposite is true, CTR is low when the search result ratings are high, or there is no proven correlation between the two, then our hypothesis is not proven.

With that structure in mind, we can then look at the results split into different search rating buckets. If we measure the CTR for queries that all have results rated at 1 and then measure CTR for queries that have results rated at lower than 2, etc., we can measure to see if the increase in rating is correlated with an increase in CTR.
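A minimal sketch of the bucketed CTR computation, again using in-memory SQLite. The table name `search_results` is an assumption, the columns follow the description in the question, and the rows are invented.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE search_results (query TEXT, position INTEGER, rating INTEGER, has_clicked INTEGER);
INSERT INTO search_results VALUES
  ('cats', 1, 5, 1), ('cats', 2, 5, 1), ('dogs', 1, 5, 0), ('dogs', 2, 5, 1),
  ('fish', 1, 3, 1), ('fish', 2, 3, 0),
  ('bird', 1, 1, 0), ('bird', 2, 1, 0), ('frog', 1, 1, 1), ('frog', 2, 1, 0);
""")

# CTR is the mean of the 0/1 click flag within each rating bucket.
ctr_by_rating = conn.execute("""
SELECT rating, AVG(has_clicked) AS ctr, COUNT(*) AS n
FROM search_results
GROUP BY rating
ORDER BY rating
""").fetchall()
```

Including the row count `n` next to each CTR helps judge whether a difference between buckets rests on enough searches to be meaningful.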

10. How would you help a supermarket chain determine which product categories should be prioritized in their inventory restructuring efforts?

You’re working as a Data Scientist in a local grocery chain’s data science team. The business team has decided to allocate store floor space by product category (e.g., electronics, sports and travel, food and beverages). Help the team understand which product categories to prioritize as well as answering questions such as how customer demographics affect sales, and how each city’s sales per product category differs.

Check out our Data Analytics Learning Path .

Modeling and Machine Learning Case Questions

Machine learning case questions assess your ability to build models to solve business problems. These questions can range from applying machine learning to solve a specific case scenario to assessing the validity of a hypothetical existing model. The modeling case study requires a candidate to evaluate and explain a particular part of the model building process.

11. Describe how you would build a model to predict Uber ETAs after a rider requests a ride.

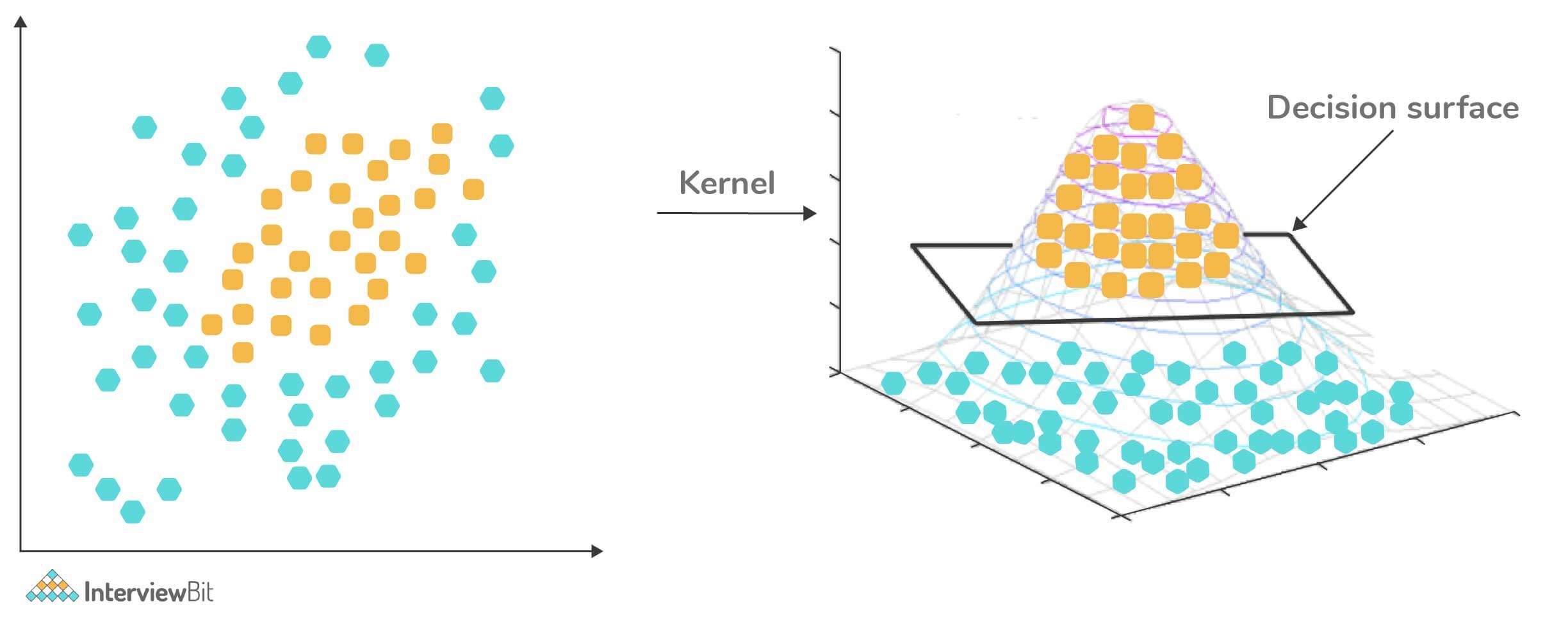

Common machine learning case study problems like this ask you to explain how you would build a model. Many times this can be scoped down to specific parts of the model building process. Examining the example above, we could break it up into:

How would you evaluate the predictions of an Uber ETA model?

What features would you use to predict the Uber ETA for ride requests?

Our recommended framework breaks down a modeling and machine learning case study to individual steps in order to tackle each one thoroughly. In each full modeling case study, you will want to go over:

- Data processing

- Feature Selection

- Model Selection

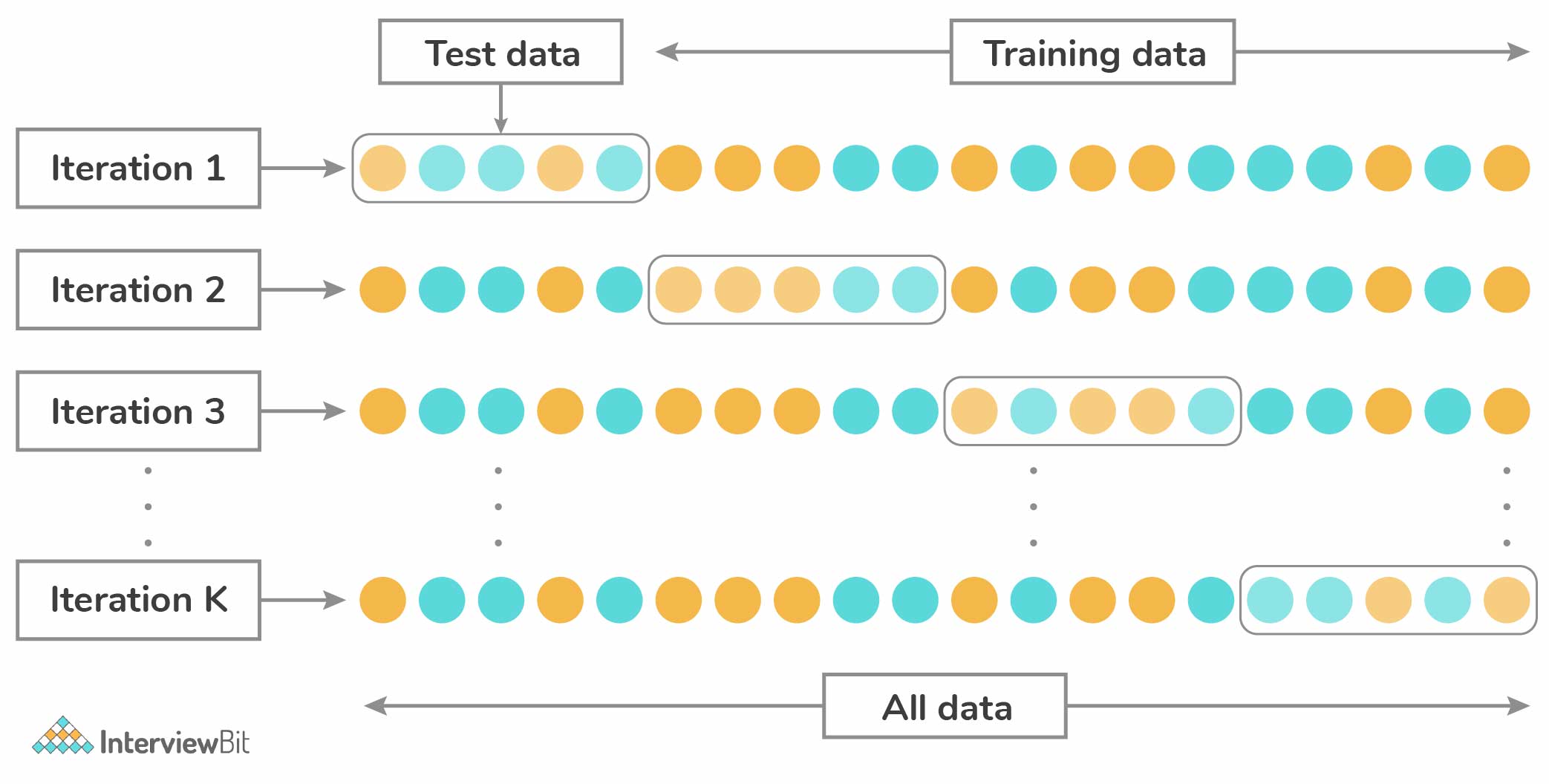

- Cross Validation

- Evaluation Metrics

- Testing and Roll Out

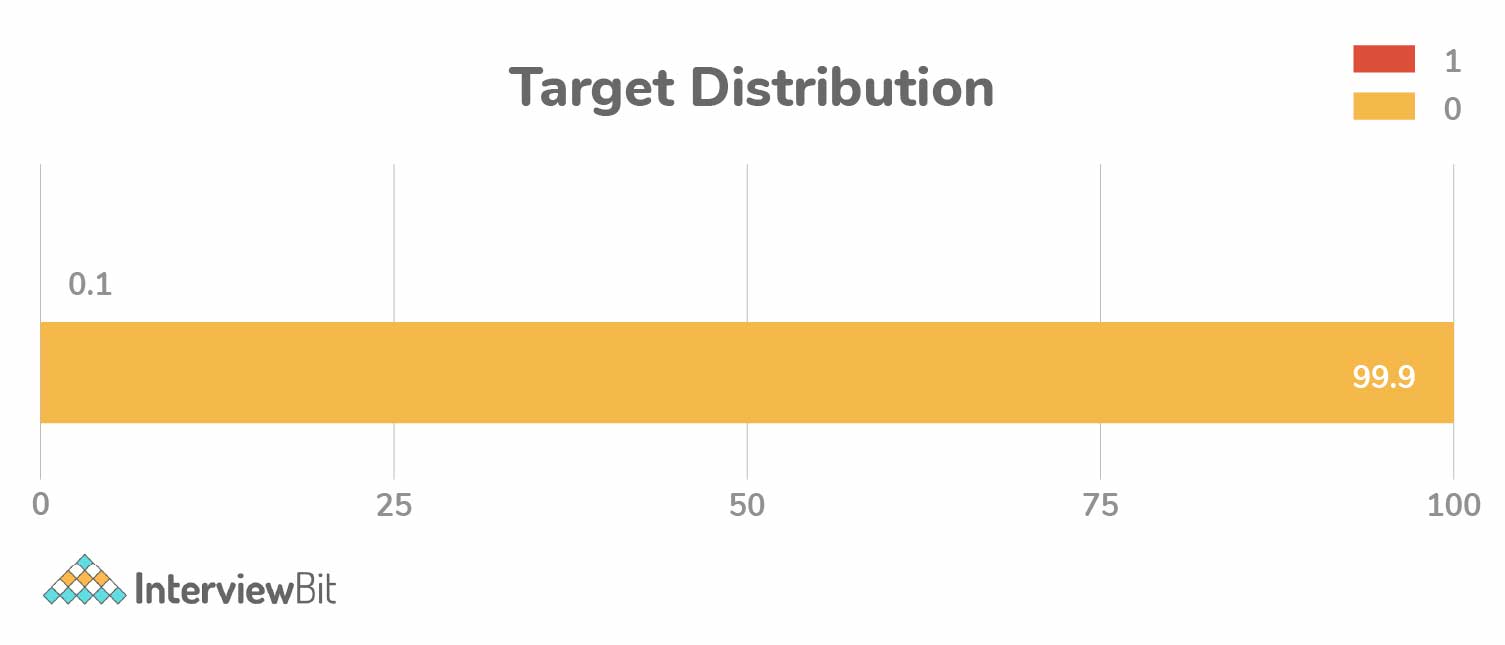

12. How would you build a model that sends bank customers a text message when fraudulent transactions are detected?

Additionally, the customer can approve or deny the transaction via text response.

Let’s start out by understanding what kind of model would need to be built. We know that since we are working with fraud, there has to be a case where either a fraudulent transaction is or is not present.

Hint: This problem is a binary classification problem. Given the problem scenario, what considerations do we have to think about when first building this model? What would the bank fraud data look like?

13. How would you design the inputs and outputs for a model that detects potential bombs at a border crossing?

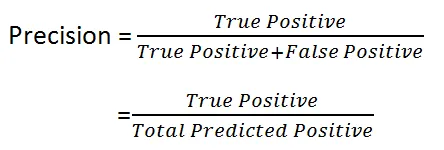

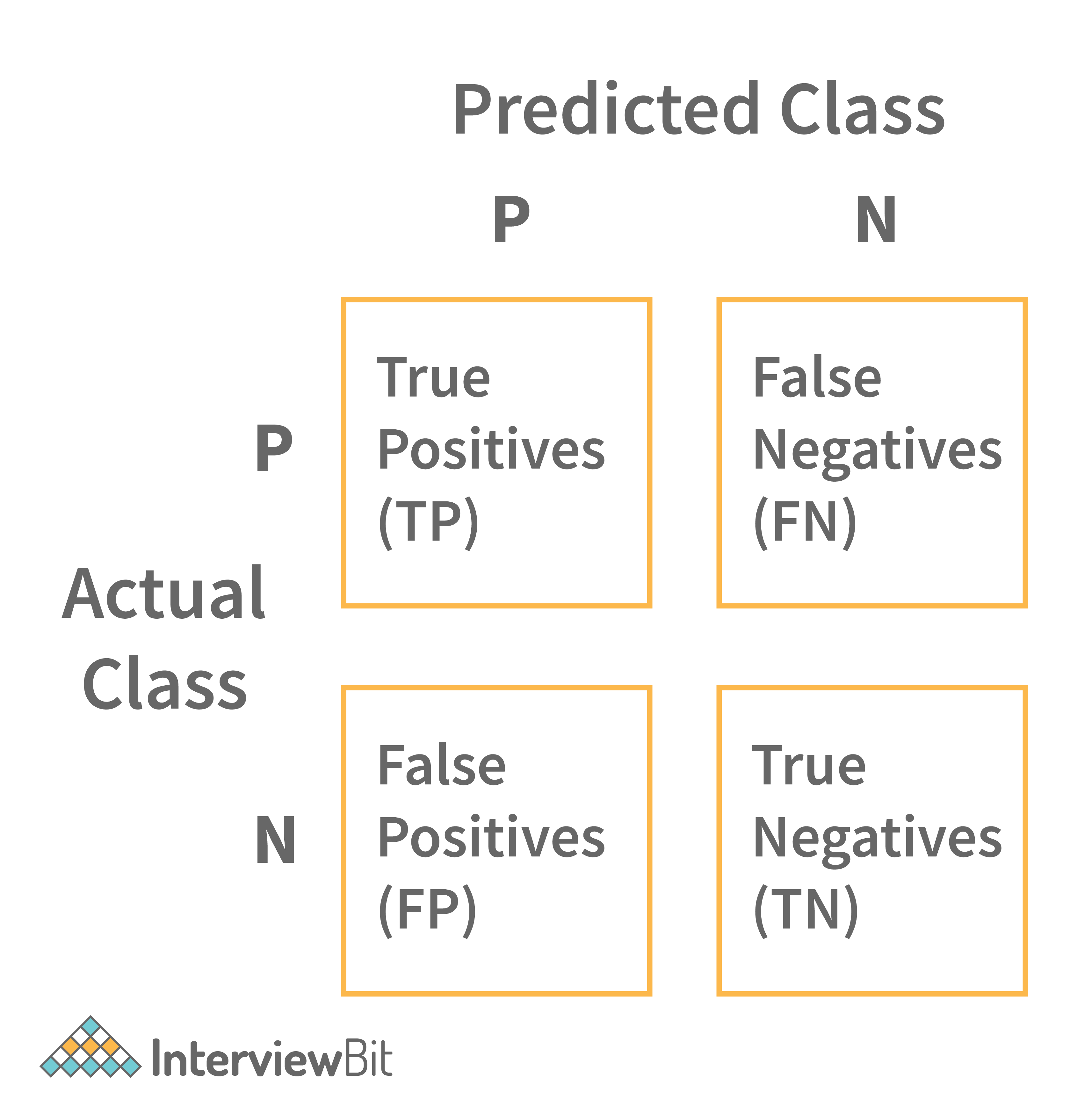

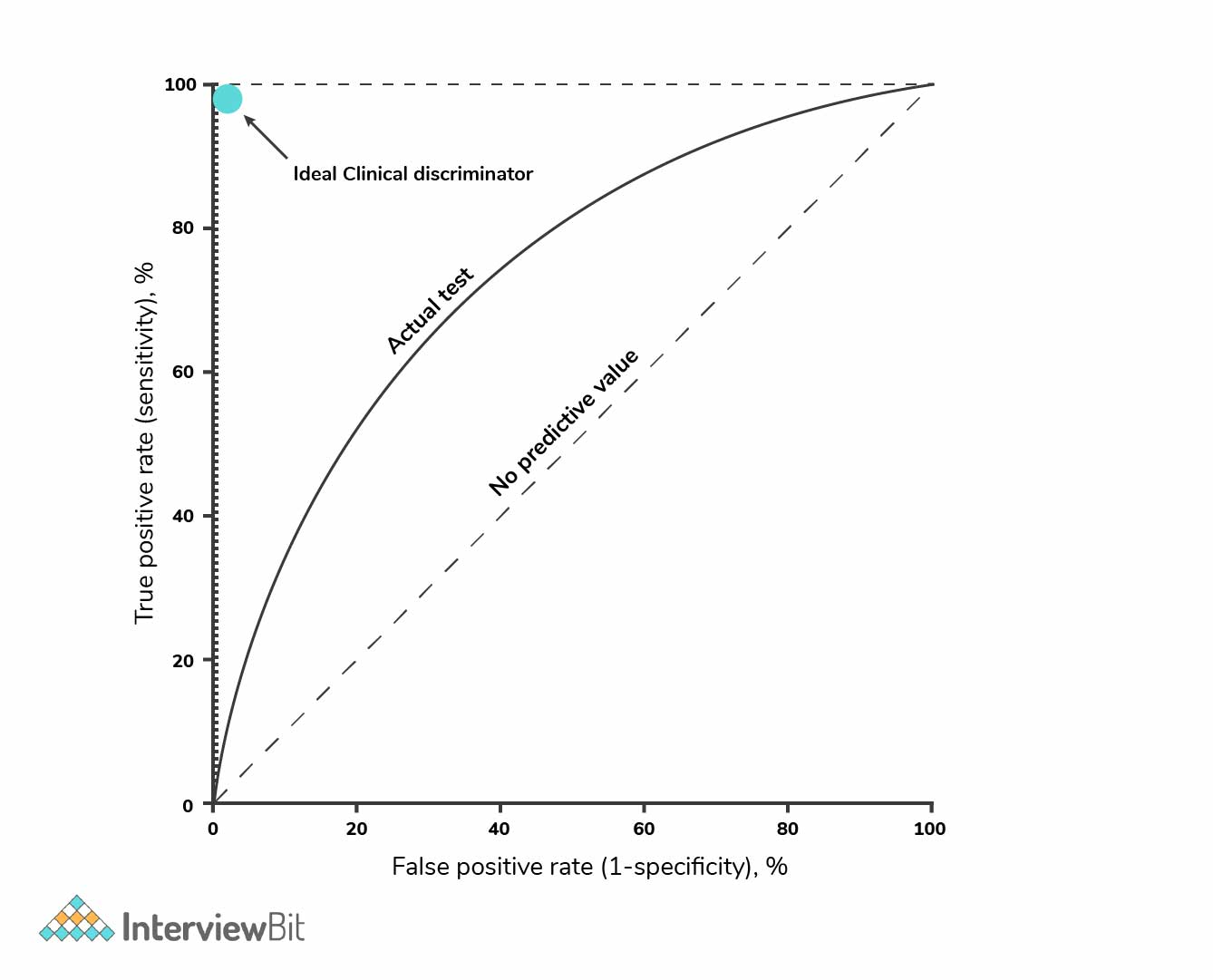

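Additional questions: How would you test the model and measure its accuracy? Remember the equations for precision and recall:

$\text{Precision} = \frac{TP}{TP + FP}$, $\text{Recall} = \frac{TP}{TP + FN}$

Because we cannot afford a high number of false negatives (a missed bomb), recall should be high when assessing the model.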
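To make the trade-off concrete, here is a small sketch that computes precision and recall from confusion-matrix counts. The counts are hypothetical and chosen to show a detector that flags many harmless items (low precision) but misses few real threats (high recall).

```python
def precision(tp, fp):
    """Of everything flagged as a bomb, the share that really was one."""
    return tp / (tp + fp)

def recall(tp, fn):
    """Of all real bombs, the share the model flagged."""
    return tp / (tp + fn)

# Hypothetical counts: true positives, false positives, false negatives.
tp, fp, fn = 8, 40, 2
p = precision(tp, fp)
r = recall(tp, fn)
```

In this setting a false positive costs an extra manual inspection, while a false negative is a missed bomb, so a model with modest precision and high recall is usually the safer choice.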

14. Which model would you choose to predict Airbnb booking prices: Linear regression or random forest regression?

Start by answering this question: What are the main differences between linear regression and random forest?

Random forest regression is based on the ensemble machine learning technique of bagging. The two key concepts of random forests are:

- Random sampling of training observations when building trees.

- Random subsets of features for splitting nodes.

Random forest regressions also discretize continuous variables, since they are based on decision trees and can split categorical and continuous variables.

Linear regression, on the other hand, is the standard regression technique in which relationships are modeled using a linear predictor function, the most common example represented as y = Ax + B.

Let’s see how each model is applicable to predicting Airbnb booking prices. One thing we need to do in the interview is to understand more context around the problem. To do so, we need to understand which features are present in our dataset.

We can assume the dataset will have features like:

- Location features.

- Seasonality.

- Number of bedrooms and bathrooms.

- Private room, shared, entire home, etc.

- External demand (conferences, festivals, sporting events).

Which model would be the best fit for this feature set?

15. Using a binary classification model that pre-approves candidates for a loan, how would you give each rejected application a rejection reason?

More context: You do not have access to the feature weights. Start by thinking about the problem like this: How would the problem change if we had ten, one thousand, or ten thousand applicants that had gone through the loan qualification program?

Pretend that we have three people: Alice, Bob, and Candace that have all applied for a loan. Simplifying the financial lending loan model, let us assume the only features are the total number of credit cards, the dollar amount of current debt, and credit age. Here is a scenario:

Alice: 10 credit cards, 5 years of credit age, $\$20K$ in debt

Bob: 10 credit cards, 5 years of credit age, $\$15K$ in debt

Candace: 10 credit cards, 5 years of credit age, $\$10K$ in debt

If Candace is approved, we can logically point to the fact that Candace’s $\$10K$ in debt swung the model to approve her for a loan. How did we reason this out?

If the sample size analyzed was instead thousands of people who had the same number of credit cards and credit age with varying levels of debt, we could figure out the model’s average loan acceptance rate for each numerical amount of current debt. Then we could plot these on a graph to model the y-value (average loan acceptance) versus the x-value (dollar amount of current debt). These graphs are called partial dependence plots.
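A partial dependence curve can be computed without access to feature weights by treating the model as a black box. The sketch below uses a made-up scoring rule (`toy_model`) purely as a stand-in for the real classifier. Its coefficients are invented so that it reproduces the Alice, Bob, and Candace scenario, and the applicant list is hypothetical.

```python
def toy_model(credit_cards, credit_age_years, debt):
    """Hypothetical black-box classifier: returns 1 (approve) or 0 (reject)."""
    score = 2.0 * credit_age_years - 0.5 * credit_cards - debt / 2000.0
    return 1 if score >= 0 else 0

def partial_dependence(model, applicants, debt_grid):
    """Average approval rate when every applicant's debt is set to each grid value."""
    curve = []
    for debt in debt_grid:
        preds = [model(a["credit_cards"], a["credit_age"], debt) for a in applicants]
        curve.append((debt, sum(preds) / len(preds)))
    return curve

# Hypothetical applicants; only debt is varied while the other features stay fixed.
applicants = [
    {"credit_cards": 10, "credit_age": 5},
    {"credit_cards": 4, "credit_age": 5},
    {"credit_cards": 10, "credit_age": 8},
]
curve = partial_dependence(toy_model, applicants, [10_000, 15_000, 20_000])
```

For a rejected applicant, the feature whose curve moves most toward approval when changed to a typical value is a reasonable candidate for the stated rejection reason.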

Business Case Questions

In data science interviews, business case study questions task you with addressing problems as they relate to the business. You might be asked about topics like estimation and calculation, as well as applying problem-solving to a larger case. One tip: Be sure to read up on the company’s products and ventures before your interview to expose yourself to possible topics.

16. How would you estimate the average lifetime value of customers at a business that has existed for just over one year?

More context: You know that the product costs $\$100$ per month, averages 10% in monthly churn, and the average customer stays for 3.5 months.

Remember that lifetime value is the predicted net revenue attributed to the entire future relationship with a customer, averaged across all customers. Therefore, $\$100$ * 3.5 = $\$350$… But is it that simple?

Because this company is so new, our average customer length (3.5 months) is biased from the short possible length of time that anyone could have been a customer (one year maximum). How would you then model out LTV knowing the churn rate and product cost?
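One common way to model this, sketched below, assumes a constant monthly churn rate and billing at the start of each month, so that the expected customer lifetime is 1 / churn months. These are simplifying assumptions, not part of the question. The second function shows how capping the observation window at 12 months pulls the observed average tenure down.

```python
def expected_lifetime_months(monthly_churn):
    """Expected lifetime under constant churn (geometric model): 1 / churn."""
    return 1.0 / monthly_churn

def ltv(monthly_price, monthly_churn):
    """Lifetime value = monthly price * expected lifetime in months."""
    return monthly_price * expected_lifetime_months(monthly_churn)

def truncated_lifetime_months(monthly_churn, horizon_months):
    """Expected months billed when only the first `horizon_months` can be observed."""
    retention = 1.0 - monthly_churn
    return sum(retention ** m for m in range(horizon_months))

estimate = ltv(100, 0.10)                        # 100 * (1 / 0.10)
observed_cap = truncated_lifetime_months(0.10, 12)
```

Under these assumptions the estimate is about $1,000, well above the naive $350. Even a customer who joined on day one can show at most 12 months of tenure, and later joiners even less, which helps explain why the observed 3.5-month average understates lifetime.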

17. How would you go about removing duplicate product names (e.g. iPhone X vs. Apple iPhone 10) in a massive database?

See the full solution for this Amazon business case question on YouTube:

18. What metrics would you monitor to know if a 50% discount promotion is a good idea for a ride-sharing company?

This question has no correct answer and is rather designed to test your reasoning and communication skills related to product/business cases. First, start by stating your assumptions. What are the goals of this promotion? It is likely that the goal of the discount is to grow revenue and increase retention. A few other assumptions you might make include:

- The promotion will be applied uniformly across all users.

- The 50% discount can only be used for a single ride.

How would we be able to evaluate this pricing strategy? An A/B test between the control group (no discount) and test group (discount) would allow us to evaluate Long-term revenue vs average cost of the promotion. Using these two metrics how could we measure if the promotion is a good idea?

19. A bank wants to create a new partner card (e.g., a Whole Foods Chase credit card). How would you determine what the next partner card should be?

More context: Say you have access to all customer spending data. With this question, there are several approaches you can take. As your first step, think about the business reason for credit card partnerships: they help increase acquisition and customer retention.

One of the simplest solutions would be to sum all transactions grouped by merchants. This would identify the merchants who see the highest spending amounts. However, the one issue might be that some merchants have a high-spend value but low volume. How could we counteract this potential pitfall? Is the volume of transactions even an important factor in our credit card business? The more questions you ask, the more may spring to mind.
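The grouping step, and the pitfall it raises, can be sketched with a handful of invented transactions. Merchant names other than Whole Foods and all amounts are hypothetical.

```python
from collections import defaultdict

# Hypothetical (merchant, amount) transactions; illustrative only.
transactions = [
    ("Whole Foods", 120.0), ("Whole Foods", 80.0), ("Whole Foods", 60.0),
    ("Luxury Watches", 5000.0),
    ("Coffee Shop", 5.0), ("Coffee Shop", 6.0), ("Coffee Shop", 4.0), ("Coffee Shop", 5.0),
]

def summarize_by_merchant(transactions):
    """Total spend and transaction count per merchant."""
    summary = defaultdict(lambda: {"total_spend": 0.0, "n_transactions": 0})
    for merchant, amount in transactions:
        summary[merchant]["total_spend"] += amount
        summary[merchant]["n_transactions"] += 1
    return dict(summary)

summary = summarize_by_merchant(transactions)
top_by_spend = max(summary, key=lambda m: summary[m]["total_spend"])
top_by_volume = max(summary, key=lambda m: summary[m]["n_transactions"])
```

Here the two rankings disagree: one large purchase puts the watch retailer first by spend, while the coffee shop leads by volume. Looking at both, or at the number of distinct customers per merchant, gives a fuller picture than total spend alone.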

20. How would you assess the value of keeping a TV show on a streaming platform like Netflix?

Say that Netflix is working on a deal to renew the streaming rights for a show like The Office, which has been on Netflix for one year. Your job is to value the benefit of keeping the show on Netflix.

Start by trying to understand the reasons why Netflix would want to renew the show. Netflix mainly has three goals for what their content should help achieve:

- Acquisition: To increase the number of subscribers.

- Retention: To increase the retention of active subscribers and keep them on as paying members.

- Revenue: To increase overall revenue.

One solution to value the benefit would be to estimate a lower and upper bound to understand the percentage of users that would be affected by The Office being removed. You could then run these percentages against your known acquisition and retention rates.

21. How would you determine which products are to be put on sale?

Let’s say you work at Amazon. It’s nearing Black Friday, and you are tasked with determining which products should be put on sale. You have access to historical pricing and purchasing data from items that have been on sale before. How would you determine what products should go on sale to best maximize profit during Black Friday?

To start with this question, aggregate data from previous years for products that have been on sale during Black Friday or similar events. You can then compare elements such as historical sales volume, inventory levels, and profit margins.
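The comparison described above can be sketched as follows: for each product, compare average profit in periods when it was on sale against periods at regular price. The records, prices, and costs are invented for illustration, and a fuller analysis would also weigh inventory levels and seasonality.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical history: (product, on_sale, units_sold, unit_price, unit_cost)
history = [
    ("headphones", False, 100, 50.0, 30.0),
    ("headphones", True, 300, 40.0, 30.0),
    ("blender", False, 80, 60.0, 40.0),
    ("blender", True, 100, 45.0, 40.0),
]

def profit_uplift(rows):
    """Mean profit while on sale minus mean profit at regular price, per product."""
    profits = defaultdict(lambda: {True: [], False: []})
    for product, on_sale, units, price, cost in rows:
        profits[product][on_sale].append(units * (price - cost))
    return {
        product: mean(p[True]) - mean(p[False])
        for product, p in profits.items()
        if p[True] and p[False]  # need both kinds of period to compare
    }

uplift = profit_uplift(history)

# Keep only products whose past discounts increased profit, best first.
candidates = [
    product
    for product, gain in sorted(uplift.items(), key=lambda kv: kv[1], reverse=True)
    if gain > 0
]
print(uplift)
print(candidates)
```

In this toy data the discount tripled headphone sales and raised profit, while the blender discount cut the margin faster than it added volume.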


More Data Science Interview Resources

Case studies are one of the most common types of data science interview questions. Practice with the data science course from Interview Query, which includes product and machine learning modules.


Top 12 Data Science Case Studies: Across Various Industries


Data science has become popular in the last few years due to its successful application in business decision-making. Data scientists use its techniques to solve challenging real-world problems in healthcare, agriculture, manufacturing, automotive, and many other fields. To do this well, a data enthusiast needs to stay updated with the latest technological advancements in AI, and an excellent way to do so is to read industry data science case studies. I recommend checking out the Data Science With Python course syllabus to start your data science journey. In this discussion, I will present case studies that contain detailed and systematic data analysis of people, objects, or entities, focusing on multiple factors present in the dataset. Aspiring and practising data scientists can use them as motivation to learn more about a sector, to discover an alternative way of thinking, or to find methods to improve their organization based on comparable experiences.

Almost every industry uses data science in some way. You can learn more about data science fundamentals in this data science course content. For example, insurance data scientists may use it to spot fraudulent claims, automotive data scientists may use it to improve self-driving cars, and e-commerce data scientists can use it to add more personalization for their consumers; the possibilities are unlimited and unexplored. Let’s look at the top eight data science case studies in this article so you can understand how businesses from many sectors have benefited from data science to boost productivity, revenues, and more. Read on to explore more or use the following links to go straight to the case study of your choice.

Examples of Data Science Case Studies

- Hospitality: Airbnb focuses on growth by analyzing customer voice using data science. Qantas uses predictive analytics to mitigate losses

- Healthcare: Novo Nordisk is driving innovation with NLP. AstraZeneca harnesses data for innovation in medicine

- Covid 19: Johnson and Johnson uses data science to fight the Pandemic

- E-commerce: Amazon uses data science to personalize shopping experiences and improve customer satisfaction

- Supply chain management: UPS optimizes supply chain with big data analytics

- Meteorology: IMD leveraged data science to achieve a record evacuation of 1.2 million people before cyclone "Fani"

- Entertainment Industry: Netflix uses data science to personalize the content and improve recommendations. Spotify uses big data to deliver a rich user experience for online music streaming

- Banking and Finance: HDFC utilizes Big Data Analytics to increase income and enhance the banking experience

Top 8 Data Science Case Studies [For Various Industries]

1. Data Science in Hospitality Industry

In the hospitality sector, data analytics helps hotels with pricing strategies, customer analysis, brand marketing, tracking market trends, and more.

Airbnb focuses on growth by analyzing customer voice using data science. A famous example in this sector is the unicorn "Airbnb", a startup that focused on data science early to grow and adapt to the market faster. The company witnessed 43,000 percent hypergrowth in as little as five years using data science. It used data science techniques to process its data, translate that data into a better understanding of the voice of the customer, and apply the resulting insights to decision making. It also scaled the approach to cover all aspects of the organization. Airbnb uses statistics to analyze and aggregate individual experiences and establish trends throughout the community. These trends inform its business choices and help it grow further.

Travel industry and data science

Predictive analytics benefits many areas of the travel industry. Travel companies can use recommendation engines to achieve higher personalization and improved user interactions, and they can cross-sell by recommending relevant products to drive sales and increase revenue. Data science is also employed for sentiment analysis of social media posts, which brings invaluable travel-related insights. Knowing whether these views are positive, negative, or neutral helps agencies understand user demographics, the experiences their target audiences expect, and so on. These insights are essential for developing competitive pricing strategies that draw customers and for better customizing travel packages and allied services. Travel agencies like Expedia and Booking.com use predictive analytics for personalized recommendations, product development, and effective marketing of their products. Airlines benefit from the same approach. They frequently face losses due to flight cancellations, disruptions, and delays. Data science helps them identify patterns and predict possible bottlenecks, thereby mitigating losses and improving the overall customer travel experience.

How Qantas uses predictive analytics to mitigate losses

Qantas, one of Australia's largest airlines, leverages data science to reduce losses caused by flight delays, disruptions, and cancellations. It also uses data science to provide a better travel experience for customers by reducing the number and length of delays caused by heavy air traffic, weather conditions, or operational difficulties. In 2016, when heavy storms badly struck Australia's east coast, Qantas cancelled only 15 of 436 flights thanks to its predictive analytics-based system, whereas its competitor Virgin Australia cancelled 70 of 320.

2. Data Science in Healthcare

The healthcare sector is benefiting immensely from advancements in AI. Data science, especially in medical imaging, has been helping healthcare professionals arrive at better diagnoses and more effective treatments for patients. Similarly, several advanced healthcare analytics tools have been developed to generate clinical insights for improving patient care. These tools also assist in defining personalized medications for patients and in reducing operating costs for clinics and hospitals. Apart from medical imaging or computer vision, Natural Language Processing (NLP) is frequently used in the healthcare domain to study published textual research data.

A. Pharmaceutical

Driving innovation with NLP: Novo Nordisk. Novo Nordisk uses the Linguamatics NLP platform to text-mine internal and external data sources, including scientific abstracts, patents, grants, news, tech transfer offices from universities worldwide, and more. These NLP queries run across sources for the key therapeutic areas of interest to the Novo Nordisk R&D community. Several NLP algorithms have been developed for the topics of safety, efficacy, randomized controlled trials, patient populations, dosing, and devices. Novo Nordisk employs a data pipeline to capitalize on the tools' success with real-world data, and uses interactive dashboards and cloud services to visualize the standardized, structured information from the queries in order to explore commercial effectiveness, market situations, potential, and gaps in the product documentation. Through data science, the company can automate the process of generating insights, save time, and provide better insights for evidence-based decision making.

How AstraZeneca harnesses data for innovation in medicine. AstraZeneca is a globally known biotech company that leverages data and AI technology to discover and deliver new, effective medicines faster. Its R&D teams use AI to decode big data and better understand diseases such as cancer, respiratory disease, and heart, kidney, and metabolic diseases so that they can be treated effectively. Using data science, they can identify new targets for innovative medications. In 2021, in collaboration with BenevolentAI, they selected their first two AI-generated drug targets, in Chronic Kidney Disease and Idiopathic Pulmonary Fibrosis.

Data science is also helping AstraZeneca design better clinical trials, achieve personalized medication strategies, and innovate the process of developing new medicines. Its Center for Genomics Research aims to use data science and AI to analyze around two million genomes by 2026. For imaging purposes, the company is also training its AI systems to check images for disease and for biomarkers of effective medicines. This approach helps analyze samples accurately and with less effort. Moreover, it can cut the analysis time by around 30%.

AstraZeneca also uses AI and machine learning to analyze clinical trial data, optimizing the process at different stages and minimizing the overall time trials take. In summary, the company uses data science to design smarter clinical trials, develop innovative medicines, and improve drug development and patient care strategies.

C. Wearable Technology

Wearable technology is a multi-billion-dollar industry. With an increasing awareness about fitness and nutrition, more individuals now prefer using fitness wearables to track their routines and lifestyle choices.

Fitness wearables are convenient to use, assist users in tracking their health, and encourage them to lead a healthier lifestyle. The medical devices in this domain are beneficial since they help monitor the patient's condition and communicate in an emergency. The widely used fitness trackers and smartwatches from renowned companies like Garmin, Apple, and Fitbit continuously collect physiological data from the individuals wearing them. These wearable providers offer user-friendly dashboards to their customers for analyzing and tracking progress in their fitness journey.

3. Covid 19 and Data Science

In the past two years of the Pandemic, the power of data science has been more evident than ever. Pharmaceutical companies across the globe could develop Covid 19 vaccines in part by analyzing data to understand the trends and patterns of the outbreak. Data science made it possible to track the virus in real time, predict patterns, and devise effective strategies to fight the Pandemic.

How Johnson and Johnson uses data science to fight the Pandemic

The data science team at Johnson and Johnson leverages real-time data to track the spread of the virus. They built a global surveillance dashboard (granular to county level) that helps them track the Pandemic's progress, predict potential hotspots of the virus, and narrow down the likely places where the company should test its investigational COVID-19 vaccine candidate. To populate this dashboard, the team works with in-country experts to determine whether official numbers are accurate and to find the most valid information about case numbers, hospitalizations, mortality and testing rates, social compliance, and local policies. The team also studies the data to build models that help the company identify groups of individuals at risk of being affected by the virus and explore effective treatments to improve patient outcomes.

4. Data Science in E-commerce

In the e-commerce sector, big data analytics can assist in customer analysis, reduce operational costs, forecast trends for better sales, provide personalized shopping experiences to customers, and more.

Amazon uses data science to personalize shopping experiences and improve customer satisfaction. Amazon is a globally leading eCommerce platform that offers a wide range of online shopping services. As a result, Amazon generates a massive amount of data that can be leveraged to understand consumer behavior and generate insights on competitors' strategies. Amazon uses its data to recommend products and services to its users. With this approach, Amazon persuades consumers to buy and generates additional sales. The approach works well for Amazon, as this technique accounts for 35% of its yearly revenue. Additionally, Amazon collects consumer data for faster order tracking and better deliveries.

Similarly, Amazon's virtual assistant, Alexa, can converse in different languages and uses speakers and a camera to interact with users. Amazon utilizes the audio commands from users to improve Alexa and deliver a better user experience.

5. Data Science in Supply Chain Management

Predictive analytics and big data are driving innovation in the supply chain domain. They offer greater visibility into company operations, reduce costs and overheads, and support demand forecasting, predictive maintenance, product pricing, route optimization, and fleet management, while minimizing supply chain interruptions and driving better performance.

Optimizing supply chain with big data analytics: UPS