Problem-Solving Agents In Artificial Intelligence

In artificial intelligence, a problem-solving agent refers to a type of intelligent agent designed to address and solve complex problems or tasks in its environment. These agents are a fundamental concept in AI and are used in various applications, from game-playing algorithms to robotics and decision-making systems. Here are some key characteristics and components of a problem-solving agent:

- Perception : Problem-solving agents typically have the ability to perceive or sense their environment. They can gather information about the current state of the world, often through sensors, cameras, or other data sources.

- Knowledge Base : These agents often possess some form of knowledge or representation of the problem domain. This knowledge can be encoded in various ways, such as rules, facts, or models, depending on the specific problem.

- Reasoning : Problem-solving agents employ reasoning mechanisms to make decisions and select actions based on their perception and knowledge. This involves processing information, making inferences, and selecting the best course of action.

- Planning : For many complex problems, problem-solving agents engage in planning. They consider different sequences of actions to achieve their goals and decide on the most suitable action plan.

- Actuation : After determining the best course of action, problem-solving agents take actions to interact with their environment. This can involve physical actions in the case of robotics or making decisions in more abstract problem-solving domains.

- Feedback : Problem-solving agents often receive feedback from their environment, which they use to adjust their actions and refine their problem-solving strategies. This feedback loop helps them adapt to changing conditions and improve their performance.

- Learning : Some problem-solving agents incorporate machine learning techniques to improve their performance over time. They can learn from experience, adapt their strategies, and become more efficient at solving similar problems in the future.

Problem-solving agents can vary greatly in complexity, from simple algorithms that solve straightforward puzzles to highly sophisticated AI systems that tackle complex, real-world problems. The design and implementation of problem-solving agents depend on the specific problem domain and the goals of the AI application.

Hello, I’m Hridhya Manoj. I’m passionate about technology and its ever-evolving landscape. With a deep love for writing and a curious mind, I enjoy translating complex concepts into understandable, engaging content. Let’s explore the world of tech together


© 2024 Skill Vertex

Problem Solving Agents in Artificial Intelligence

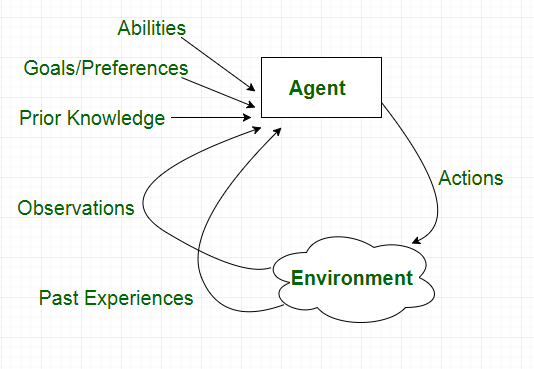

In this post, we discuss problem solving agents in Artificial Intelligence, which are a kind of goal-based agent. A simple reflex agent maps states directly to actions, but in a complex environment that mapping is too large to store, so we use goal-based agents that can consider future actions and the desirability of their outcomes.

Problem Solving Agents

Problem Solving Agents decide what to do by finding a sequence of actions that leads to a desirable state or solution.

An agent may need to plan ahead when the best course of action is not immediately apparent. It may need to think through a sequence of moves that leads to its goal state. Such an agent is known as a problem solving agent, and the computation it performs is known as a search.

The problem solving agent follows this four phase problem solving process:

- Goal Formulation: This is the first and simplest phase of problem solving. The agent adopts a specific goal to work toward, which narrows the objectives, and therefore the actions, it has to consider.

- Problem Formulation: One of the fundamental steps in problem solving, it decides which actions and states the agent should consider in order to reach the goal.

- Search: After goal and problem formulation, the agent simulates sequences of actions, looking for one that reaches the goal. This process is called search, and such a sequence is called a solution. The agent might have to simulate many sequences that do not reach the goal, but eventually it will either find a solution or find that no solution is possible. A search algorithm takes a problem as input and outputs a sequence of actions.

- Execution: After the search phase, the agent can now execute the actions that are recommended by the search algorithm, one at a time. This final stage is known as the execution phase.

Problems and Solution

Before we move into the problem formulation phase, we must first define a problem in terms of problem solving agents.

A formal definition of a problem consists of five components:

Initial State: The state the agent starts in. For example, if a taxi agent needs to travel to location B and the taxi is currently at location A, the problem’s initial state is location A.

Actions: A description of the possible actions available to the agent. Given a state s, Actions(s) returns the actions that can be executed in s. Each of these actions is said to be applicable in s.

Transition Model: A description of what each action does. It is specified by a function Result(s, a) that returns the state that results from doing action a in state s.

The initial state, actions, and transition model together define the state space of a problem: the set of all states reachable from the initial state by any sequence of actions. The state space forms a graph in which the nodes are states and the links between nodes are actions.

Goal Test: Determines whether a given state is a goal state. Sometimes there is an explicit list of possible goal states, and the test merely checks whether the given state is one of them. Sometimes the goal is expressed as an abstract property rather than an explicitly enumerated set of states.

Path Cost: A function that assigns a numerical cost to each path. The problem solving agent chooses a cost function that reflects its own performance measure. The optimal solution is the one with the lowest path cost among all solutions.
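The five components can be bundled into a single data structure. The following is a minimal sketch, not a definitive implementation: the `Problem` class, the `path_cost` helper, and the tiny `ROADS` map for the taxi example are all hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Hashable, Iterable

State = Hashable
Action = Hashable


@dataclass(frozen=True)
class Problem:
    """Illustrative container for the five components of a problem."""
    initial_state: State
    actions: Callable[[State], Iterable[Action]]           # Actions(s)
    result: Callable[[State, Action], State]               # Result(s, a)
    is_goal: Callable[[State], bool]                       # goal test
    action_cost: Callable[[State, Action, State], float]   # cost of one step


def path_cost(problem, plan):
    """Follow a sequence of actions from the initial state.

    Returns (total cost, final state). Raises ValueError if an action
    is not applicable in the state where it is attempted.
    """
    state = problem.initial_state
    total = 0.0
    for action in plan:
        if action not in problem.actions(state):
            raise ValueError(f"{action!r} is not applicable in {state!r}")
        next_state = problem.result(state, action)
        total += problem.action_cost(state, action, next_state)
        state = next_state
    return total, state


# Hypothetical road map for the taxi example: start at A, goal is B.
ROADS = {"A": {"go_B": "B", "go_C": "C"}, "C": {"go_B": "B"}, "B": {}}

taxi = Problem(
    initial_state="A",
    actions=lambda s: list(ROADS[s]),
    result=lambda s, a: ROADS[s][a],
    is_goal=lambda s: s == "B",
    action_cost=lambda s, a, s2: 1.0,  # assumption: every step costs 1
)
```

With this sketch, `path_cost(taxi, ["go_B"])` evaluates the direct route, and comparing it with `path_cost(taxi, ["go_C", "go_B"])` shows why the direct route is the optimal solution under a unit step cost.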

Example Problems

The problem solving approach has been applied in a wide range of task environments. There are two kinds of problems:

- Standardized/Toy Problem: Its purpose is to demonstrate or practice various problem solving techniques. It can be described concisely and precisely, making it suitable as a benchmark for researchers to compare the performance of algorithms.

- Real-world Problems: These are problems whose solutions people actually use. Unlike a toy problem, a real-world problem has no single agreed-upon description, but we can still give a general description of the issue.

Some Standardized/Toy Problems

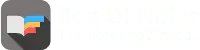

Vacuum world problem.

Consider a vacuum cleaner agent that can move left or right and whose job is to suck up the dirt from the floor.

The vacuum world problem can be stated as follows:

States: A world state specifies which objects are in which cells. The objects in the vacuum world are the agent and any dirt. In the simple two-cell version, the agent can be in either of the two cells and each cell can either contain dirt or not, so there are 2×2×2 = 8 states. In general, a vacuum environment with n cells has n×2^n states.

Initial State: Any state can be designated as the initial state.

Actions: In the two-cell world we define three actions: Suck, move Left, and move Right. A two-dimensional multi-cell world requires more movement actions, for example Forward, Backward, TurnRight, and TurnLeft, defined relative to the direction the agent is facing.

Transition Model: Suck removes any dirt from the agent’s cell. In the two-cell world, Left and Right move the agent to the corresponding cell, with no effect if it is already there. In the multi-cell world, Forward moves the agent one cell forward in the direction it is facing unless it hits a wall, in which case the action has no effect. Backward moves the agent in the opposite direction, while TurnRight and TurnLeft change the direction it is facing by 90°.

Goal States: The states in which every cell is clean.

Action Cost: Each action costs 1.
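The formulation above can be checked with a short sketch. It covers a one-dimensional row of cells with the actions Suck, Left, and Right. The state encoding (an agent index plus a tuple of dirt flags) and the function names are assumptions made for this example.

```python
from itertools import product


def vacuum_states(n):
    """Enumerate every state of an n-cell vacuum world.

    A state is (agent_cell, dirt), where dirt[i] is True if cell i is dirty.
    """
    return [
        (cell, dirt)
        for cell in range(n)
        for dirt in product((False, True), repeat=n)
    ]


def result(state, action):
    """Transition model for the actions Suck, Left, and Right."""
    cell, dirt = state
    last = len(dirt) - 1
    if action == "Suck":
        return (cell, dirt[:cell] + (False,) + dirt[cell + 1:])
    if action == "Left":
        return (max(cell - 1, 0), dirt)      # no effect at the left wall
    if action == "Right":
        return (min(cell + 1, last), dirt)   # no effect at the right wall
    raise ValueError(f"unknown action: {action!r}")


def is_goal(state):
    """Goal states are those in which every cell is clean."""
    return not any(state[1])


print(len(vacuum_states(2)))  # 2 * 2**2 = 8
print(len(vacuum_states(3)))  # 3 * 2**3 = 24
```

Enumerating the states confirms the n×2^n count: each of the n agent positions is paired with each of the 2^n dirt configurations.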

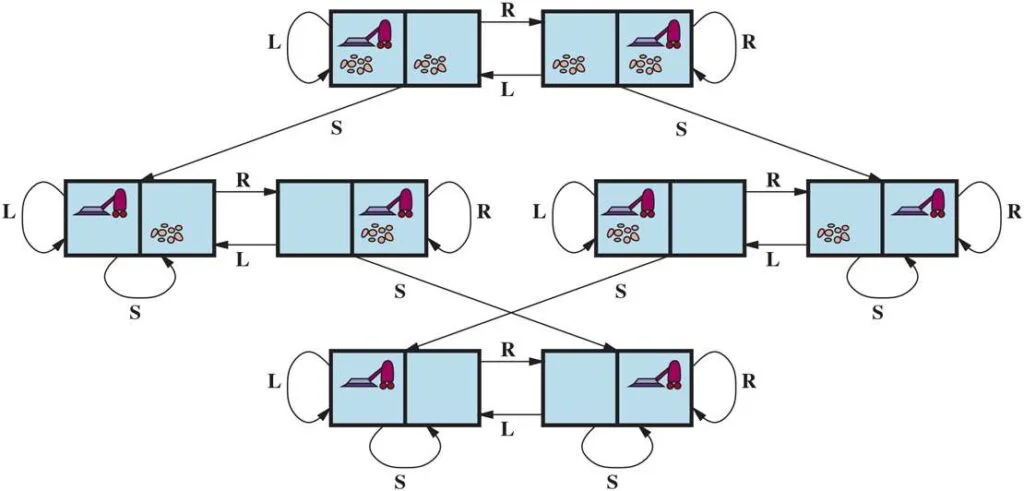

8 Puzzle Problem

In a sliding-tile puzzle, a number of tiles (sometimes called blocks or pieces) are arranged in a grid with one or more blank spaces so that some of the tiles can slide into the blank space. One variant is the Rush Hour puzzle, in which cars and trucks slide around a 6 x 6 grid in an attempt to free a car from the traffic jam. Perhaps the best-known variants are the 8-puzzle (see Figure below), which consists of a 3 x 3 grid with eight numbered tiles and one blank space, and the 15-puzzle on a 4 x 4 grid. The object is to reach a specified goal state, such as the one shown on the right of the figure. The standard formulation of the 8-puzzle is as follows:

STATES : A state description specifies the location of each of the tiles.

INITIAL STATE : Any state can be designated as the initial state. (Note that a parity property partitions the state space—any given goal can be reached from exactly half of the possible initial states.)

ACTIONS : While in the physical world it is a tile that slides, the simplest way of describing an action is to think of the blank space moving Left, Right, Up, or Down. If the blank is at an edge or corner then not all actions will be applicable.

TRANSITION MODEL : Maps a state and action to a resulting state; for example, if we apply Left to the start state in the Figure below, the resulting state has the 5 and the blank switched.

GOAL STATE : It identifies whether we have reached the correct goal state. Although any state could be the goal, we typically specify a state with the numbers in order, as in the Figure above.

ACTION COST : Each action costs 1.
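As a rough sketch of this formulation, the code below stores a state as a tuple of nine numbers in row-major order, with 0 standing for the blank. That encoding, the function names, and the example start state are assumptions for illustration. The `same_parity` check uses the inversion-count property of the 3 x 3 puzzle, which is the parity property mentioned under INITIAL STATE.

```python
# Offset of the blank's index for each action (3 x 3 grid, row-major order).
MOVES = {"Up": -3, "Down": 3, "Left": -1, "Right": 1}


def actions(state):
    """Return the actions applicable to the blank in this state."""
    row, col = divmod(state.index(0), 3)
    available = []
    if row > 0:
        available.append("Up")
    if row < 2:
        available.append("Down")
    if col > 0:
        available.append("Left")
    if col < 2:
        available.append("Right")
    return available


def result(state, action):
    """Swap the blank with the neighbouring tile in the given direction."""
    if action not in actions(state):
        raise ValueError(f"{action!r} is not applicable")
    blank = state.index(0)
    target = blank + MOVES[action]
    tiles = list(state)
    tiles[blank], tiles[target] = tiles[target], tiles[blank]
    return tuple(tiles)


def inversions(state):
    """Count pairs of tiles that appear in the wrong relative order."""
    tiles = [t for t in state if t != 0]
    return sum(
        1
        for i in range(len(tiles))
        for j in range(i + 1, len(tiles))
        if tiles[i] > tiles[j]
    )


def same_parity(start, goal):
    """On a 3 x 3 board, a goal is reachable only from states whose
    inversion count has the same parity as the goal's."""
    return inversions(start) % 2 == inversions(goal) % 2


start = (7, 2, 4,
         5, 0, 6,
         8, 3, 1)
goal = (0, 1, 2, 3, 4, 5, 6, 7, 8)

print(result(start, "Left"))     # the 5 and the blank are switched
print(same_parity(start, goal))
```

Checking parity before searching avoids exploring the whole reachable half of the state space only to discover that the goal lies in the other half.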


Examples of Problem Solving Agents in Artificial Intelligence

In the field of artificial intelligence, problem-solving agents play a vital role in finding solutions to complex tasks and challenges. These agents are designed to mimic human intelligence and utilize a range of algorithms and techniques to tackle various problems. By analyzing data, making predictions, and finding optimal solutions, problem-solving agents demonstrate the power and potential of artificial intelligence.

One example of a problem-solving agent in artificial intelligence is a chess-playing program. These agents are capable of evaluating millions of possible moves and predicting the best one to make based on a wide array of factors. By utilizing advanced algorithms and machine learning techniques, these agents can analyze the current state of the game, anticipate future moves, and make strategic decisions to outplay even the most skilled human opponents.

Another example of problem-solving agents in artificial intelligence is autonomous driving systems. These agents are designed to navigate complex road networks, make split-second decisions, and ensure the safety of both passengers and pedestrians. By continuously analyzing sensor data, identifying obstacles, and calculating optimal paths, these agents can effectively solve problems related to navigation, traffic congestion, and collision avoidance.

Definition and Importance of Problem Solving Agents

A problem solving agent is a type of artificial intelligence agent that is designed to identify and solve problems. These agents are programmed to analyze information, develop potential solutions, and select the best course of action to solve a given problem.

Problem solving agents are an essential aspect of artificial intelligence, as they have the ability to tackle complex problems that humans may find difficult or time-consuming to solve. These agents can handle large amounts of data and perform calculations and analysis at a much faster rate than humans.

Problem solving agents can be found in various domains, including healthcare, finance, manufacturing, and transportation. For example, in healthcare, problem solving agents can analyze patient data and medical records to diagnose diseases and recommend treatment plans. In finance, these agents can analyze market trends and make investment decisions.

The importance of problem solving agents in artificial intelligence lies in their ability to automate and streamline processes, improve efficiency, and reduce human error. These agents can also handle repetitive tasks, freeing up human resources for more complex and strategic work.

In addition, problem solving agents can learn and adapt from past experiences, making them even more effective over time. They can continuously analyze and optimize their problem-solving strategies, resulting in better decision-making and outcomes.

In conclusion, problem solving agents are a fundamental component of artificial intelligence. Their ability to analyze information, develop solutions, and make decisions has a significant impact on various industries and fields. Through their automation and optimization capabilities, problem solving agents contribute to improving efficiency, reducing errors, and enhancing decision-making processes.

Problem Solving Agent Architecture

A problem-solving agent is a central component in the field of artificial intelligence that is designed to tackle complex problems and find solutions. The architecture of a problem-solving agent consists of several key components that work together to achieve intelligent problem-solving.

One of the main components of a problem-solving agent is the knowledge base. This is where the agent stores relevant information and data that it can use to solve problems. The knowledge base can include facts, rules, and heuristics that the agent has acquired through learning or from experts in the domain.

Another important component of a problem-solving agent is the inference engine. This is the part of the agent that is responsible for reasoning and making logical deductions. The inference engine uses the knowledge base to generate possible solutions to a problem by applying various reasoning techniques, such as deduction, induction, and abduction.

Furthermore, a problem-solving agent often includes a search algorithm or strategy. This is used to systematically explore possible solutions and search for the best one. The search algorithm can be guided by various heuristics or constraints to efficiently navigate through the solution space.

In addition to these components, a problem-solving agent may also have a learning component. This allows the agent to improve its problem-solving capabilities over time through experience. The learning component can help the agent adapt its knowledge base, refine its inference engine, or adjust its search strategy based on feedback or new information.

Overall, the architecture of a problem-solving agent is designed to enable intelligent problem-solving by combining knowledge representation, reasoning, search, and learning. By utilizing these components, problem-solving agents can tackle a wide range of problems and find effective solutions in various domains.

Uninformed Search Algorithms

In the field of artificial intelligence, problem-solving agents are often designed to navigate a large search space in order to find a solution to a given problem. Uninformed search algorithms, also known as blind search algorithms, are a class of algorithms that do not use any additional information about the problem to guide their search.

Breadth-First Search (BFS)

Breadth-First Search (BFS) is one of the most basic uninformed search algorithms. It explores all the neighbor nodes at the present depth before moving on to the nodes at the next depth level. BFS is implemented using a queue data structure, where the nodes to be explored are added to the back of the queue and the nodes to be explored next are removed from the front of the queue.

For example, BFS can be used to find the route between two cities on a road map that uses the fewest road segments, because it explores every route of a given length before any longer one. It does not account for the distance of each segment.

Depth-First Search (DFS)

Depth-First Search (DFS) is another uninformed search algorithm that explores the deepest path first before backtracking. It is implemented using a stack data structure, where nodes are added to the top of the stack and the nodes to be explored next are removed from the top of the stack.

DFS can be used in situations where the goal state is likely to be far from the starting state, as it explores the deepest paths first. However, if the search space contains a cycle, it may get stuck in an infinite loop unless it keeps track of the states it has already visited.

For example, DFS can be used to solve a maze, exploring different paths until the goal state (exit of the maze) is reached.

Overall, uninformed search algorithms provide a foundational approach to problem-solving in artificial intelligence. They do not rely on any additional problem-specific knowledge, making them applicable to a wide range of problems. While they may not always find the optimal solution or have high efficiency, they provide a starting point for more sophisticated search algorithms.

Breadth-First Search

Breadth-First Search is a problem-solving algorithm commonly used in artificial intelligence. It is an uninformed search algorithm that explores all the immediate variations of a problem before moving on to the next level of variations.

Examples of problems that can be solved using Breadth-First Search include finding the path with the fewest edges between two points in a graph and solving a sliding puzzle in the fewest moves.

How Breadth-First Search Works

The Breadth-First Search algorithm starts at the initial state of the problem and expands all the immediate successor states. It then explores the successor states of the expanded states, continuing this process until a goal state is reached.

At each step of the algorithm, the breadth-first search maintains a queue of states to explore. The algorithm removes a state from the front of the queue, explores its successor states, and adds them to the back of the queue. This ensures that states are explored in the order they were added to the queue, resulting in a breadth-first exploration of the problem space.

The algorithm also keeps track of the visited states to avoid revisiting them in the future, preventing infinite loops in cases where the problem space contains cycles.
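The queue and visited-set procedure described above can be sketched as follows. This is one possible implementation under stated assumptions: the function signature, the `parent` dictionary used to rebuild the path, and the small example graph are not from the original text.

```python
from collections import deque


def breadth_first_search(start, is_goal, successors):
    """Return a path with the fewest steps from start to a goal, or None."""
    if is_goal(start):
        return [start]
    frontier = deque([start])   # FIFO queue of states waiting to be expanded
    parent = {start: None}      # doubles as the set of visited states
    while frontier:
        state = frontier.popleft()          # remove from the front
        for nxt in successors(state):
            if nxt in parent:               # already visited, skip it
                continue
            parent[nxt] = state
            if is_goal(nxt):
                return _reconstruct(parent, nxt)
            frontier.append(nxt)            # add to the back
    return None


def _reconstruct(parent, state):
    """Walk the parent links back to the start and reverse them."""
    path = []
    while state is not None:
        path.append(state)
        state = parent[state]
    return path[::-1]


# Hypothetical graph containing a cycle (D links back to A).
GRAPH = {
    "A": ["B", "C"],
    "B": ["D"],
    "C": ["D", "E"],
    "D": ["A", "F"],
    "E": ["F"],
    "F": [],
}

path = breadth_first_search("A", lambda s: s == "F", lambda s: GRAPH[s])
print(path)  # ['A', 'B', 'D', 'F']
```

Recording each state's parent when it is first reached keeps memory proportional to the number of visited states while still allowing the path to be recovered at the end.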

Benefits and Limitations

Breadth-First Search guarantees that a shortest path to a goal state, measured in the number of actions, is found if such a path exists. It explores paths in order of increasing length until a goal state is reached, so shorter paths are always explored first.

However, the main limitation of Breadth-First Search is its memory requirements. As it explores all immediate successor states, it needs to keep track of a large number of states in memory. This can become impractical for problems with a large state space. Additionally, Breadth-First Search does not take into account the cost or quality of the paths it explores, making it less suitable for problems with complex cost or objective functions.

Depth-First Search

Depth-First Search (DFS) is a common algorithm used in the field of artificial intelligence to solve various types of problems. It is a search strategy that explores as far as possible along each branch of a tree-like structure before backtracking.

In the context of problem-solving agents, DFS is often used to traverse graph-based problem spaces in search of a solution. This algorithm starts at an initial state and explores all possible actions from that state until a goal state is found or all possible paths have been exhausted.

One example of using DFS in artificial intelligence is solving mazes. The agent starts at the entrance of the maze and explores one path at a time, prioritizing depth rather than breadth. It keeps track of the visited nodes and backtracks whenever it encounters a dead end, until it reaches the goal state (the exit of the maze).
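A minimal sketch of the maze example follows, assuming the maze is given as a list of strings in which `#` marks a wall. The grid encoding, the function name, and the sample maze are assumptions for illustration. An explicit stack replaces recursion, and popping from the stack plays the role of backtracking.

```python
def depth_first_search(maze, start, goal):
    """Return some path of (row, col) cells from start to goal, or None.

    The path found is not necessarily the shortest one.
    """
    rows, cols = len(maze), len(maze[0])
    stack = [(start, [start])]   # LIFO stack of (cell, path so far)
    visited = set()
    while stack:
        cell, path = stack.pop()             # take the most recent entry
        if cell in visited:
            continue
        visited.add(cell)
        if cell == goal:
            return path
        r, c = cell
        for dr, dc in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and maze[nr][nc] != "#" and (nr, nc) not in visited:
                stack.append(((nr, nc), path + [(nr, nc)]))
    return None                              # every reachable cell was tried


# Hypothetical maze: entrance at the top-left, exit at the bottom-right.
MAZE = [
    "S.#.",
    ".##.",
    "...E",
]

print(depth_first_search(MAZE, (0, 0), (2, 3)))
```

Storing the whole path with each stack entry keeps the sketch short. A larger maze would more likely store parent links instead to save memory.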

Another example is solving puzzles, such as the famous Eight Queens Problem. In this problem, the agent needs to place eight queens on a chessboard in such a way that no two queens threaten each other. DFS can be used to explore all possible combinations of queen placements, backtracking whenever a placement is found to be invalid, until a valid solution is found or all possibilities have been exhausted.
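The backtracking idea for the Eight Queens Problem can be sketched as below. The row-by-row placement and the names `solve_queens`, `safe`, and `place` are choices made for this example rather than a prescribed method.

```python
def solve_queens(n=8):
    """Place n queens on an n x n board, one per row, by depth-first
    search with backtracking. Returns a list where entry r is the column
    of the queen in row r, or None if no placement exists.
    """
    columns = []

    def safe(col):
        """Check the new queen against every queen placed so far."""
        row = len(columns)
        return all(
            c != col and abs(c - col) != row - r   # same column or diagonal
            for r, c in enumerate(columns)
        )

    def place():
        if len(columns) == n:
            return True                  # all rows filled: solution found
        for col in range(n):
            if safe(col):
                columns.append(col)      # go one level deeper
                if place():
                    return True
                columns.pop()            # invalid further down: backtrack
        return False

    return columns if place() else None


solution = solve_queens(8)
print(solution)
```

Because a placement is rejected as soon as it conflicts with an earlier queen, the search never explores the many full boards that share an invalid prefix.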

DFS has advantages and disadvantages. Its main advantage is its simplicity and low memory usage, as it only needs to store the path from the initial state to the current state. However, it can get stuck in infinite loops if not implemented properly, and it may not always find the optimal solution.

In conclusion, DFS is a useful algorithm for problem-solving agents in artificial intelligence. It can be applied to a wide range of problems and provides a straightforward approach to exploring problem spaces. By understanding its strengths and limitations, developers can effectively utilize DFS to find solutions efficiently.

Iterative Deepening Depth-First Search

Iterative Deepening Depth-First Search (IDDFS) is a popular search algorithm used in problem solving within the field of artificial intelligence. It is a combination of depth-first search and breadth-first search algorithms and is designed to overcome some of the limitations of traditional depth-first search.

IDDFS operates in a similar way to depth-first search by exploring a problem space depth-wise. However, instead of following each branch as far as it goes, it uses a depth limit, which is increased with each iteration, to restrict how deep it explores the search tree. Each iteration restarts the search from the initial state, so IDDFS explores the search space gradually, starting at a shallow depth and progressively moving deeper.
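The depth-limit loop can be sketched as follows. The function names, the `max_depth` cap, and the example graph are assumptions for illustration. The check against the current path is a simple guard against cycles and is not a full visited set.

```python
def depth_limited_search(state, is_goal, successors, limit, path):
    """Depth-first search that refuses to go deeper than `limit` steps."""
    if is_goal(state):
        return path
    if limit == 0:
        return None
    for nxt in successors(state):
        if nxt in path:          # do not loop back along the current path
            continue
        found = depth_limited_search(
            nxt, is_goal, successors, limit - 1, path + [nxt]
        )
        if found is not None:
            return found
    return None


def iterative_deepening_search(start, is_goal, successors, max_depth=20):
    """Run depth-limited search with limits 0, 1, 2, ... up to max_depth."""
    for limit in range(max_depth + 1):
        found = depth_limited_search(start, is_goal, successors, limit, [start])
        if found is not None:
            return found
    return None


# Hypothetical graph: the goal G is 3 steps away via B but only 2 via C.
GRAPH = {"A": ["B", "C"], "B": ["D"], "C": ["G"], "D": ["G"], "G": []}

route = iterative_deepening_search("A", lambda s: s == "G", lambda s: GRAPH[s])
print(route)  # ['A', 'C', 'G']
```

Plain depth-first search would follow the B branch first and return the longer route. Raising the limit one level at a time means the shallower route through C is found first, at the cost of re-expanding the upper levels on every iteration.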


Agents in Artificial Intelligence

In artificial intelligence, an agent is a computer program or system that is designed to perceive its environment, make decisions and take actions to achieve a specific goal or set of goals. The agent operates autonomously, meaning it is not directly controlled by a human operator.

Agents can be classified into different types based on their characteristics, such as whether they are reactive or proactive, whether they have a fixed or dynamic environment, and whether they are single or multi-agent systems.

- Reactive agents are those that respond to immediate stimuli from their environment and take actions based on those stimuli. Proactive agents, on the other hand, take initiative and plan ahead to achieve their goals. The environment in which an agent operates can also be fixed or dynamic. Fixed environments have a static set of rules that do not change, while dynamic environments are constantly changing and require agents to adapt to new situations.

- Multi-agent systems involve multiple agents working together to achieve a common goal. These agents may have to coordinate their actions and communicate with each other to achieve their objectives. Agents are used in a variety of applications, including robotics, gaming, and intelligent systems. They can be implemented using different programming languages and techniques, including machine learning and natural language processing.

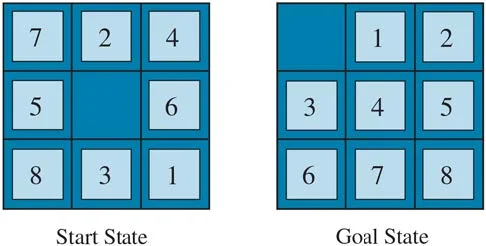

Artificial intelligence is defined as the study of rational agents. A rational agent could be anything that makes decisions, such as a person, firm, machine, or software. It carries out the action with the best outcome after considering past and current percepts (the agent’s perceptual inputs at a given instant). An AI system is composed of an agent and its environment. The agents act in their environment. The environment may contain other agents.

An agent is anything that can be viewed as:

- Perceiving its environment through sensors and

- Acting upon that environment through actuators

Note : Every agent can perceive its own actions (but not always the effects).

Interaction of Agents with the Environment

Structure of an AI Agent

To understand the structure of Intelligent Agents, we should be familiar with Architecture and Agent programs. Architecture is the machinery that the agent executes on. It is a device with sensors and actuators, for example, a robotic car, a camera, or a PC. An agent program is an implementation of an agent function. An agent function is a map from the percept sequence (the history of all that an agent has perceived to date) to an action.

Agent = Architecture + Agent Program

There are many examples of agents in artificial intelligence. Here are a few:

- Intelligent personal assistants: These are agents that are designed to help users with various tasks, such as scheduling appointments, sending messages, and setting reminders. Examples of intelligent personal assistants include Siri, Alexa, and Google Assistant.

- Autonomous robots: These are agents that are designed to operate autonomously in the physical world. They can perform tasks such as cleaning, sorting, and delivering goods. Examples of autonomous robots include the Roomba vacuum cleaner and the Amazon delivery robot.

- Gaming agents: These are agents that are designed to play games, either against human opponents or other agents. Examples of gaming agents include chess-playing agents and poker-playing agents.

- Fraud detection agents: These are agents that are designed to detect fraudulent behavior in financial transactions. They can analyze patterns of behavior to identify suspicious activity and alert authorities. Examples of fraud detection agents include those used by banks and credit card companies.

- Traffic management agents: These are agents that are designed to manage traffic flow in cities. They can monitor traffic patterns, adjust traffic lights, and reroute vehicles to minimize congestion. Examples of traffic management agents include those used in smart cities around the world.

- A software agent has keystrokes, file contents, and received network packets acting as sensors, and screen displays, files, and sent network packets acting as actuators.

- A human agent has eyes, ears, and other organs that act as sensors, and hands, legs, mouth, and other body parts that act as actuators.

- A robotic agent has cameras and infrared range finders that act as sensors, and various motors that act as actuators.

Characteristics of an Agent

Types of Agents

Agents can be grouped into five classes based on their degree of perceived intelligence and capability:

- Simple Reflex Agents

- Model-Based Reflex Agents

- Goal-Based Agents

- Utility-Based Agents

- Learning Agents

Other categories include:

- Multi-agent systems

- Hierarchical agents

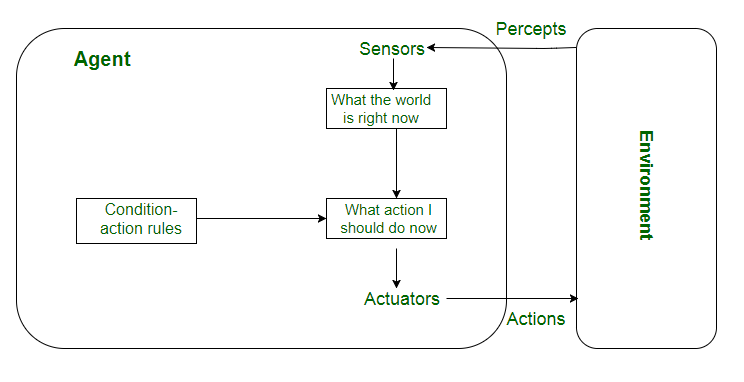

Simple reflex agents ignore the rest of the percept history and act only on the basis of the current percept . Percept history is the history of all that an agent has perceived to date. The agent function is based on the condition-action rule . A condition-action rule is a rule that maps a state i.e., a condition to an action. If the condition is true, then the action is taken, else not. This agent function only succeeds when the environment is fully observable. For simple reflex agents operating in partially observable environments, infinite loops are often unavoidable. It may be possible to escape from infinite loops if the agent can randomize its actions.

Problems with Simple reflex agents are :

- Very limited intelligence.

- No knowledge of non-perceptual parts of the state.

- The set of condition-action rules is usually too big to generate and store.

- If there occurs any change in the environment, then the collection of rules needs to be updated.
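A condition-action rule table for a simple reflex agent might look like the sketch below, using a two-cell vacuum world as the setting. The percept format (a dictionary with `location` and `dirty`), the cell names A and B, and the rule order are assumptions for this example.

```python
# Each rule pairs a condition on the current percept with an action.
# Rules are tried in order and the first matching one fires.
RULES = [
    (lambda p: p["dirty"], "Suck"),
    (lambda p: p["location"] == "A", "Right"),
    (lambda p: p["location"] == "B", "Left"),
]


def simple_reflex_agent(percept):
    """Choose an action from the current percept only, with no memory."""
    for condition, action in RULES:
        if condition(percept):
            return action
    return "NoOp"   # no rule matched


print(simple_reflex_agent({"location": "A", "dirty": True}))   # Suck
print(simple_reflex_agent({"location": "A", "dirty": False}))  # Right
```

Since the agent keeps no percept history, it moves back and forth between clean cells indefinitely, which illustrates the limited intelligence listed above.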

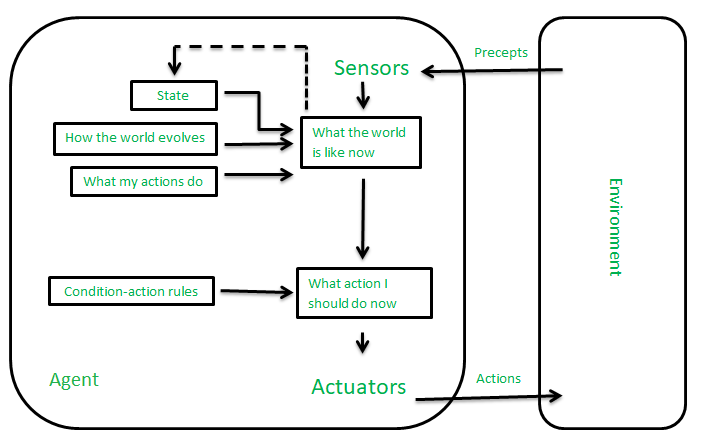

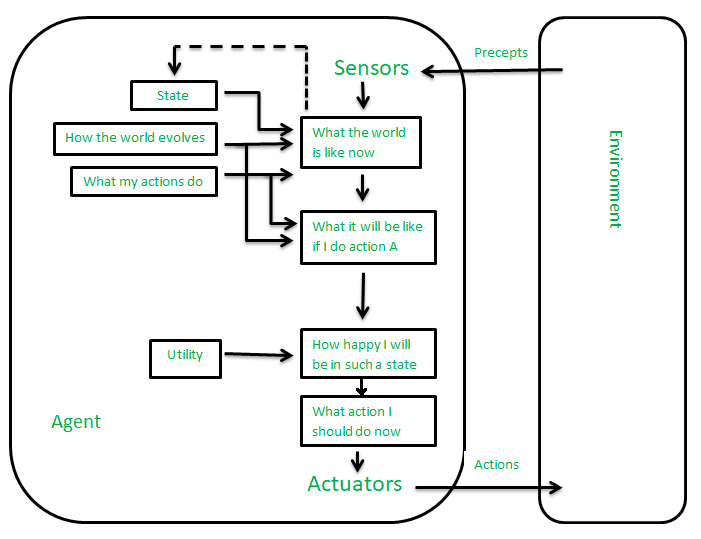

A model-based reflex agent works by finding a rule whose condition matches the current situation. It can handle partially observable environments by using a model of the world. The agent keeps track of an internal state, which is updated with each percept and depends on the percept history. This internal state is stored inside the agent and describes the part of the world that cannot currently be seen.

Updating the state requires information about:

- How the world evolves independently of the agent.

- How the agent’s actions affect the world.
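The two kinds of information can be made concrete with a small sketch of a vacuum agent that remembers which cells it believes are clean. The class name, the percept format `(location, dirty)`, and the cell names A and B are assumptions for illustration, as is the simplifying model in which dirt never reappears.

```python
class ModelBasedVacuumAgent:
    """Two-cell vacuum agent that tracks the cells it believes are clean."""

    def __init__(self):
        # Internal state: what the agent believes about cells it cannot see.
        self.believed_clean = {"A": False, "B": False}

    def act(self, percept):
        location, dirty = percept
        if dirty:
            # Model of the agent's own action: Suck leaves the cell clean.
            self.believed_clean[location] = True
            return "Suck"
        # Model of the world: a clean cell stays clean (assumption).
        self.believed_clean[location] = True
        if all(self.believed_clean.values()):
            return "NoOp"    # nothing left to do
        return "Right" if location == "A" else "Left"


agent = ModelBasedVacuumAgent()
print(agent.act(("A", True)))    # Suck
print(agent.act(("A", False)))   # Right
print(agent.act(("B", False)))   # NoOp
```

Unlike a simple reflex agent, this agent can stop once its internal state says both cells are clean, even though it only ever perceives one cell at a time.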

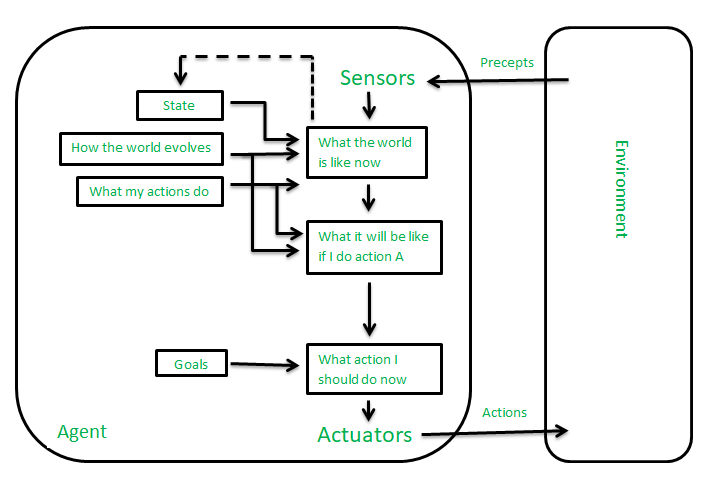

Goal-based agents make decisions based on how far they currently are from their goal (a description of desirable situations). Every action is intended to reduce the distance to the goal. This gives the agent a way to choose among multiple possibilities, selecting the one that reaches a goal state. The knowledge that supports its decisions is represented explicitly and can be modified, which makes these agents more flexible and their behavior easy to change. They usually require search and planning.

Utility-based agents choose among multiple possible alternatives according to a preference (utility) for each state. Sometimes achieving the desired goal is not enough: we may want a quicker, safer, or cheaper trip to a destination. Utility describes how “happy” the agent is with a state. A utility function maps a state onto a real number that describes the associated degree of happiness. Because of the uncertainty in the world, a utility-based agent chooses the action that maximizes the expected utility.
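Choosing the action with the highest expected utility can be sketched as below. The outcome probabilities, the utility values, and the route names in the trip example are invented purely for illustration.

```python
def expected_utility(outcomes, utility):
    """Sum of probability * utility over the possible resulting states."""
    return sum(prob * utility(state) for state, prob in outcomes)


def choose_action(action_outcomes, utility):
    """Pick the action whose outcome distribution has the highest
    expected utility."""
    return max(
        action_outcomes,
        key=lambda action: expected_utility(action_outcomes[action], utility),
    )


# Hypothetical trip: each action leads to states with given probabilities.
ACTION_OUTCOMES = {
    "highway": [("fast_arrival", 0.7), ("traffic_jam", 0.3)],
    "back_roads": [("steady_arrival", 1.0)],
}

# Hypothetical utility function: a real number for each state.
UTILITY = {"fast_arrival": 10.0, "traffic_jam": 2.0, "steady_arrival": 7.0}

best = choose_action(ACTION_OUTCOMES, UTILITY.get)
print(best)
```

With these made-up numbers the highway has the higher expected utility (0.7 × 10 + 0.3 × 2 = 7.6 against 7.0), so it is chosen even though it risks the worst single outcome.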

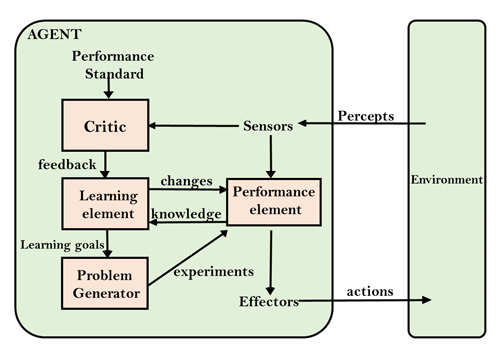

A learning agent in AI is an agent that can learn from its past experiences. It starts acting with basic knowledge and then adapts automatically through learning. A learning agent has four main conceptual components:

- Learning element: It is responsible for making improvements by learning from the environment.

- Critic: The learning element takes feedback from the critic, which describes how well the agent is doing with respect to a fixed performance standard.

- Performance element: It is responsible for selecting external action.

- Problem Generator: This component is responsible for suggesting actions that will lead to new and informative experiences.

Multi-Agent Systems

A multi-agent system (MAS) is a system composed of multiple interacting agents, often designed to work together to achieve a common goal. These agents may be autonomous or semi-autonomous. Each can perceive its environment, make decisions, and take action, and the agents may have to coordinate their actions and communicate with each other to achieve their objective.

MAS can be used in a variety of applications, including transportation systems, robotics, and social networks. They can help improve efficiency, reduce costs, and increase flexibility in complex systems. MAS can be classified into different types based on their characteristics, such as whether the agents have the same or different goals, whether the agents are cooperative or competitive, and whether the agents are homogeneous or heterogeneous.

- In a homogeneous MAS, all the agents have the same capabilities, goals, and behaviors.

- In a heterogeneous MAS, the agents have different capabilities, goals, and behaviors. This can make coordination more challenging but can also lead to more flexible and robust systems.

Cooperative MAS involves agents working together to achieve a common goal, while competitive MAS involves agents working against each other to achieve their own goals. In some cases, MAS can also involve both cooperative and competitive behavior, where agents must balance their own interests with the interests of the group.

MAS can be implemented using different techniques, such as game theory, machine learning, and agent-based modeling. Game theory is used to analyze strategic interactions between agents and predict their behavior. Machine learning is used to train agents to improve their decision-making capabilities over time. Agent-based modeling is used to simulate complex systems and study the interactions between agents.
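As one hedged illustration of the game-theory approach, the sketch below finds the pure-strategy Nash equilibria of a two-agent game by brute force. The payoff numbers are a textbook-style prisoner's dilemma chosen for illustration, and the names `PAYOFFS`, `MOVES`, and `pure_nash_equilibria` are assumptions.

```python
from itertools import product

# Hypothetical payoffs (row agent, column agent) for a prisoner's dilemma.
PAYOFFS = {
    ("cooperate", "cooperate"): (-1, -1),
    ("cooperate", "defect"): (-3, 0),
    ("defect", "cooperate"): (0, -3),
    ("defect", "defect"): (-2, -2),
}
MOVES = ["cooperate", "defect"]

def pure_nash_equilibria(payoffs=PAYOFFS, moves=MOVES):
    """Return joint moves where neither agent gains by deviating alone."""
    equilibria = []
    for a, b in product(moves, repeat=2):
        # Row agent cannot do better by switching its own move.
        row_ok = all(payoffs[(a, b)][0] >= payoffs[(alt, b)][0] for alt in moves)
        # Column agent cannot do better by switching its own move.
        col_ok = all(payoffs[(a, b)][1] >= payoffs[(a, alt)][1] for alt in moves)
        if row_ok and col_ok:
            equilibria.append((a, b))
    return equilibria

print(pure_nash_equilibria())
```

In this game the only equilibrium is mutual defection, even though mutual cooperation would leave both agents better off. That gap is the tension between individual and group interests in mixed cooperative and competitive systems.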

Overall, multi-agent systems are a powerful tool in artificial intelligence that can help solve complex problems and improve efficiency in a variety of applications.

Hierarchical Agents

These agents are organized into a hierarchy, with high-level agents overseeing the behavior of lower-level agents. The high-level agents provide goals and constraints, while the low-level agents carry out specific tasks. This structure allows for more efficient and organized decision-making in complex environments with many tasks and sub-tasks.

- Hierarchical agents can be implemented in a variety of applications, including robotics, manufacturing, and transportation systems. They are particularly useful in environments where there are many tasks and sub-tasks that need to be coordinated and prioritized.

- In a hierarchical agent system, the high-level agents are responsible for setting goals and constraints for the lower-level agents. These goals and constraints are typically based on the overall objective of the system. For example, in a manufacturing system, the high-level agents might set production targets for the lower-level agents based on customer demand.

- The low-level agents are responsible for carrying out specific tasks to achieve the goals set by the high-level agents. These tasks may be relatively simple or more complex, depending on the specific application. For example, in a transportation system, low-level agents might be responsible for managing traffic flow at specific intersections.

- Hierarchical agents can be organized into different levels, depending on the complexity of the system. In a simple system, there may be only two levels: high-level agents and low-level agents. In a more complex system, there may be multiple levels, with intermediate-level agents responsible for coordinating the activities of lower-level agents.

- One advantage of hierarchical agents is that they allow for more efficient use of resources. By organizing agents into a hierarchy, it is possible to allocate tasks to the agents that are best suited to carry them out, while avoiding duplication of effort. This can lead to faster, more efficient decision-making and better overall performance of the system.

Overall, hierarchical agents are a powerful tool in artificial intelligence that can help solve complex problems and improve efficiency in a variety of applications.
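A two-level hierarchy like the manufacturing example above can be sketched as follows. The `Manager` and `Worker` classes, the capacities, and the greedy allocation rule are all illustrative assumptions rather than a standard design.

```python
class Worker:
    """Low-level agent: carries out a specific task within its capacity."""
    def __init__(self, name, capacity):
        self.name = name
        self.capacity = capacity

    def produce(self, quota):
        return min(quota, self.capacity)

class Manager:
    """High-level agent: turns an overall target into per-worker goals."""
    def __init__(self, workers):
        self.workers = workers

    def assign(self, target):
        """Split the target across workers, respecting each capacity."""
        quotas = {}
        remaining = target
        for w in self.workers:
            quotas[w.name] = min(w.capacity, remaining)
            remaining -= quotas[w.name]
        return quotas

    def run(self, target):
        """Set goals, let the workers act, and report total output."""
        quotas = self.assign(target)
        return sum(w.produce(quotas[w.name]) for w in self.workers)

# Hypothetical production lines and a demand-driven target.
manager = Manager([Worker("line_a", 40), Worker("line_b", 30)])
print(manager.assign(60))
print(manager.run(60))
```

A deeper hierarchy would insert intermediate agents that receive a quota from above and split it again among their own subordinates.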

Uses of Agents

Agents are used in a wide range of applications in artificial intelligence, including:

- Robotics: Agents can be used to control robots and automate tasks in manufacturing, transportation, and other industries.

- Smart homes and buildings: Agents can be used to control heating, lighting, and other systems in smart homes and buildings, optimizing energy use and improving comfort.

- Transportation systems: Agents can be used to manage traffic flow, optimize routes for autonomous vehicles, and improve logistics and supply chain management.

- Healthcare: Agents can be used to monitor patients, provide personalized treatment plans, and optimize healthcare resource allocation.

- Finance: Agents can be used for automated trading, fraud detection, and risk management in the financial industry.

- Games: Agents can be used to create intelligent opponents in games and simulations, providing a more challenging and realistic experience for players.

- Natural language processing: Agents can be used for language translation, question answering, and chatbots that can communicate with users in natural language.

- Cybersecurity: Agents can be used for intrusion detection, malware analysis, and network security.

- Environmental monitoring: Agents can be used to monitor and manage natural resources, track climate change, and improve environmental sustainability.

- Social media: Agents can be used to analyze social media data, identify trends and patterns, and provide personalized recommendations to users.

Overall, agents are a versatile and powerful tool in artificial intelligence that can help solve a wide range of problems in different fields.

The promise and the reality of gen AI agents in the enterprise

The evolution of generative AI (gen AI) has opened the door to great opportunities across organizations, particularly regarding gen AI agents—AI-powered software entities that plan and perform tasks or aid humans by delivering specific services on their behalf. So far, adoption at scale across businesses has faced difficulties because of data quality, employee distrust, and cost of implementation. In addition, capabilities have raced ahead of leaders’ capacity to imagine how these agents could be used to transform work.

However, as gen AI technologies progress and the next-generation agents emerge, we expect more use cases to be unlocked, deployment costs to decrease, long-tail use cases to become economically viable, and more at-scale automation to take place across a wider range of enterprise processes, employee experiences, and customer interfaces. This evolution will demand investing in strong AI trust and risk management practices and policies as well as platforms for managing and monitoring agent-based systems.

In this interview, McKinsey Digital’s Barr Seitz speaks with senior partners Jorge Amar and Lari Hämäläinen and partner Nicolai von Bismarck to explore the evolution of gen AI agents and how companies can and should implement the technology, where the pools of value lie for the enterprise as a whole. They particularly explore what these developments mean for customer service. An edited transcript of the conversation follows.

Barr Seitz: What exactly is a gen AI agent?

Lari Hämäläinen: When we talk about gen AI agents, we mean software entities that can orchestrate complex workflows, coordinate activities among multiple agents, apply logic, and evaluate answers. These agents can help automate processes in organizations or augment workers and customers as they perform processes. This is valuable because it will not only help humans do their jobs better but also fully digitalize underlying processes and services.

For example, in customer services, recent developments in short- and long-term memory structures enable these agents to personalize interactions with external customers and internal users, and help human agents learn. All of this means that gen AI agents are getting much closer to becoming true virtual workers that can both augment and automate enterprise services in all areas of the business, from HR to finance to customer service. That means we’re well on our way to automating a wide range of tasks in many service functions while also improving service quality.

Barr Seitz: Where do you see the greatest value from gen AI agents?

Jorge Amar: We have estimated that gen AI enterprise use cases could yield $2.6 trillion to $4.4 trillion annually in value across more than 60 use cases (“The economic potential of generative AI: The next productivity frontier,” McKinsey, June 14, 2023). But how much of this value is realized as business growth and productivity will depend on how quickly enterprises can reimagine and truly transform work in priority domains, that is, user journeys, processes across an entire chain of activities, or a function.

Gen-AI-enabled agents hold the promise of accelerating the automation of a very long tail of workflows that would otherwise require inordinate amounts of resources to implement. And the potential extends even beyond these use cases: 60 to 70 percent of the work hours in today’s global economy could theoretically be automated by applying a wide variety of existing technology capabilities, including generative AI, but doing so will require a lot in terms of solutions development and enterprise adoption.

Consider customer service. Currently, the value of gen AI agents in the customer service environment is going to come either from a volume reduction or a reduction in average handling times. For example, in work we published earlier this year, we looked at 5,000 customer service agents using gen AI and found that issue resolution increased by 14 percent an hour, while time spent handling issues went down 9 percent (“The economic potential of generative AI: The next productivity frontier,” McKinsey, June 14, 2023).

The other area for value is agent training. Typically, we see that it takes six to nine months for a new agent to perform on par with more tenured peers. With this technology, we see that time come down to three months, in some cases, because new agents have at their disposal a vast library of interventions and scripts that have worked in other situations.

Over time, as gen AI agents become more proficient, I expect to see them improve customer satisfaction and generate revenue. By supporting human agents and working autonomously, for example, gen AI agents will be critical not just in helping customers with their immediate questions but also beyond, be that selling new services or addressing broader needs. As companies add more gen AI agents, costs are likely to come down, and this will open up a wider array of customer experience options for companies, such as offering more high-touch interactions with human agents as a premium service.

Barr Seitz: What are the opportunities you are already seeing with gen AI agents?

Jorge Amar: Customer care will be one of the first but definitely not the only function with at-scale AI agents. Over the past year, we have seen a lot of successful pilots with gen AI agents helping to improve customer service functions. For example, you could have a customer service agent who is on the phone with a customer and receives help in real time from a dedicated gen AI agent that is, for instance, recommending the best knowledge article to refer to or what the best next steps are for the conversation. The gen AI agent can also give coaching on behavioral elements, such as tone, empathy, and courtesy.

It used to be the case that dedicating an agent to an individual customer at each point of their sales journey was cost-prohibitive. But, as Lari noted, with the latest developments in gen AI agents, now you can do it.

Nicolai von Bismarck: It’s worth emphasizing that gen AI agents not only automate processes but also support human agents. One thing that gen AI agents are so good at, for example, is helping customer service representatives get personalized coaching not only from a hard-skill perspective but also in soft skills like understanding the context of what is being said. We estimate that applying generative AI to customer care functions could increase productivity by 30 to 45 percent (“The economic potential of generative AI: The next productivity frontier,” McKinsey, June 14, 2023).

Jorge Amar: Yes, and in other cases, gen AI agents assist the customer directly. A digital sales assistant can assist the customer at every point in their decision journey by, for example, retrieving information or providing product specs or cost comparisons—and then remembering the context if the customer visits, leaves, and returns. As those capabilities grow, we can expect these gen AI agents to generate revenue through upselling.

[For more on how companies are using gen AI agents, see the sidebar, “A closer look at gen AI agents: The Lenovo experience.”]

Barr Seitz: Can you clarify why people should believe that gen AI agents are a real opportunity and not just another false technology promise?

A closer look at gen AI agents: The Lenovo experience

Three leaders at Lenovo —Solutions and Services Group chief technology officer Arthur Hu, COO and head of strategy Linda Yao, and Digital Workplace Solutions general manager Raghav Raghunathan—discuss with McKinsey senior partner Lari Hämäläinen and McKinsey Digital’s Barr Seitz how the company uses generative AI (gen AI) agents.

Barr Seitz: What existing gen AI agent applications has Lenovo been running and what sort of impact have you seen from them?

Arthur Hu: We’ve focused on two main areas. One is software engineering. It’s the low-hanging fruit to help our people enhance speed and quality of code production. Our people are already getting 10 percent improvements, and we’re seeing that increase to 15 percent as teams get better at using gen AI agents.

The second one is about support. We have hundreds of millions of interactions with our customers across online, chat, voice, and email. We’re applying LLM [large language model]-enhanced bots to address customer issues across the entire customer journey and are seeing some great improvements already. We believe it’s possible to address as much as 70 to 80 percent of all customer interactions without needing to pull in a human.

Linda Yao: With our gen AI agents helping support customer service, we’re seeing double-digit productivity gains on call handling time. And we’re seeing incredible gains in other places too. We’re finding that marketing teams, for example, are cutting the time it takes to create a great pitch book by 90 percent and also saving on agency fees.

Barr Seitz: How are you getting ready for a world of gen AI agents?

Linda Yao: I was working with our marketing and sales training teams just this morning as part of a program to develop a learning curriculum for our organization, our partners, and our key customers. We’re figuring out what learning should be at all levels of the business and for different roles.

Arthur Hu: On the tech side, employees need to understand what gen AI agents are and how they can help. It’s critical to be able to build trust or they’ll resist adopting it. In many ways, this is a demystification exercise.

Raghav Raghunathan: We see gen AI as a way to level the playing field in new areas. You don’t need a huge talent base now to compete. We’re investing in tools and workflows to allow us to deliver services with much lower labor intensity and better outcomes.

Barr Seitz: What sort of learning programs are you developing to upskill your people?

Linda Yao: The learning paths for managers, for example, focus on building up their technical acumen, understanding how to change their KPIs because team outputs are changing quickly. At the executive level, it’s about helping leaders develop a strong understanding of the tech so they can determine what’s a good use case to invest in, and which one isn’t.

Arthur Hu: We’ve found that as our software engineers learn how to work with gen AI agents, they go from basically just chatting with them for code snippets to developing much broader thinking and focus. They start to think about changing the software workflow, such as working with gen AI agents on ideation and other parts of the value chain.

Raghav Raghunathan: Gen AI provides an experiential learning capability that’s much more effective. They can prepare sales people for customer interactions or guide them during sales calls. This approach is having a much greater impact than previous learning approaches. It gives them a safe space to learn. They can practice their pitches ahead of time and learn through feedback in live situations.

Barr Seitz: How do you see the future of gen AI agents evolving?

Linda Yao: In our use cases to date, we’ve refined gen AI agents so they act as a good assistant. As we start improving the technology, gen AI agents will become more like deputies that human agents can deploy to do tasks. We’re hoping to see productivity improvements, but we expect this to be a big improvement for the employee experience. These are tasks people don’t want to do.

Arthur Hu: There are lots of opportunities, but one area we’re exploring is how to use gen AI to capture discussions and interactions, and feed the insights and outputs into our development pipeline. There are dozens of points in the customer interaction journey, which means we have tons of data to mine to understand complex intent and even autogenerate new knowledge to address issues.

Jorge Amar: These are still early days, of course, but the kinds of capabilities we’re seeing from gen AI agents are simply unprecedented. Unlike past technologies, for example, gen AI not only can theoretically handle the hundreds of millions of interactions between employees and customers across various channels but also can generate much higher-quality interactions, such as delivering personalized content. And we know that personalized service is a key driver of better customer service. There is a big opportunity here because we found in a survey of customer care executives we ran that less than 10 percent of respondents in North America reported greater-than-expected satisfaction with their customer service performance (“Where is customer care in 2024?,” McKinsey, March 12, 2024).

Lari Hämäläinen: Let me take the technology view. This is the first time where we have a technology that is fitted to the way humans interact and can be deployed at enterprise scale. Take, for example, the IVR [interactive voice response] experiences we’ve all suffered through on calls. That’s not how humans interact. Humans interact in an unstructured way, often with unspoken intent. And if you think about LLMs [large language models], they were basically created from their inception to handle unstructured data and interactions. In a sense, all the technologies we applied so far to places like customer service worked on the premise that the customer is calling with a very structured set of thoughts that fit predefined conceptions.

Barr Seitz: How has the gen AI agent landscape changed in the past 12 months?

Lari Hämäläinen: The development of gen AI has been extremely fast. In the early days of LLMs, some of their shortcomings, like hallucinations and relatively high processing costs, meant that models were used to generate pretty basic outputs, like providing expertise to humans or generating images. More complex options weren’t viable. For example, consider that in the case of an LLM with just 80 percent accuracy applied to a task with ten related steps, the cumulative accuracy rate would be just 11 percent.

Today, LLMs can be applied to a wider variety of use cases and more complex workflows because of multiple recent innovations. These include advances in the LLMs themselves in terms of their accuracy and capabilities, innovations in short- and long-term memory structures, developments in logic structures and answer evaluation, and frameworks to apply agents and models to complex workflows. LLMs can evaluate and correct “wrong” answers so that you can have much higher accuracy. With an experienced human in the loop to handle cases that are identified as tricky, then the joint human-plus-machine outcome can generate great quality and great productivity.

Finally, it’s worth mentioning that a lot of gen AI applications beyond chat have been custom-built in the past year by bringing different components together. What we are now seeing is the standardization and industrialization of frameworks to become closer to “packaged software.” This will speed up implementation and improve cost efficiency, making real-world applications even more viable, including addressing the long-tail use cases in enterprises.
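The 11 percent figure quoted in the answer above follows if every one of the ten steps must succeed and the errors are independent, which is an assumption the speaker does not state. Under that assumption the overall accuracy is the per-step accuracy raised to the number of steps, as this short Python check shows (the function name `chained_accuracy` is illustrative):

```python
def chained_accuracy(step_accuracy, steps):
    """Probability that every step succeeds, assuming independent steps."""
    return step_accuracy ** steps

# 80 percent per step over ten steps.
print(round(chained_accuracy(0.80, 10), 3))   # 0.107
```

The same formula shows why per-step gains matter so much in multi-step workflows: small improvements in `step_accuracy` compound across every step.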

Barr Seitz: What sorts of hurdles are you seeing in adopting the gen AI agent technology for customer service?

Nicolai von Bismarck: One big hurdle we’re seeing is building trust across the organization in gen AI agents. At one bank, for example, they knew they needed to cut down on wrong answers to build trust. So they created an architecture that checks for hallucinations. Only when the check confirms that the answer is correct is it released. And if the answer isn’t right, the chatbot would say that it cannot answer this question and try to rephrase it. The customer is then able to either get an answer to their question quickly or decide that they want to talk to a live agent. That’s really valuable, as we find that customers across all age groups, even Gen Z, still prefer live phone conversations for customer help and support.

Jorge Amar: We are seeing very promising results, but these are in controlled environments with a small group of customers or agents. To scale these results, change management will be critical. That’s a big hurdle for organizations. It’s much broader than simply rolling out a new set of tools. Companies are going to need to rewire how functions work so they can get the full value from gen AI agents.

Take data, which needs to be in the right format and place for gen AI technologies to use it effectively. Almost 20 percent of organizations, in fact, see data as the biggest challenge to capturing value with gen AI (“The state of AI in 2023: Generative AI’s breakout year,” McKinsey, August 1, 2023). One example of this kind of issue could be a chatbot sourcing outdated information, like a policy that was used during COVID-19, in delivering an answer. The content might be right, but it’s hopelessly out of date. Companies are going to need to invest in cleaning and organizing their data.

In addition, companies need a real commitment to building AI trust and governance capabilities. These are the principles, policies, processes, and platforms that assure companies are not just compliant with fast-evolving regulations—as seen in the recent EU AI law and similar actions in many countries—but also able to keep the kinds of commitments that they make to customers and employees in terms of fairness and lack of bias. This will also require new learning, new levels of collaboration with legal and risk teams, and new technology to manage and monitor systems at scale.

Change needs to happen in other areas as well. Businesses will need to build extensive and tailored learning curricula for all levels of the customer service function—from managers who will need to create new KPIs and performance management protocols to frontline agents who will need to understand different ways to engage with both customers and gen AI agents.

The technology will need to evolve to be more flexible and develop a stronger life cycle capability to support gen AI tools, what we’d call MLOps [machine learning operations] or, increasingly, gen AI Ops [gen AI operations]. The operating model will need to support small teams working iteratively on new service capabilities. And adoption will require sustained effort and new incentives so that people learn to trust the tools and realize the benefits. This is particularly true with more tenured agents, who believe their own skills cannot be augmented or improved on with gen AI agents. For customer operations alone, we’re talking about a broad effort here, but with more than $400 billion of potential value from gen AI at stake, it’s worth it (“The economic potential of generative AI: The next productivity frontier,” McKinsey, June 14, 2023).

Barr Seitz: Staying with customer service, how will gen AI agents help enterprises?

Jorge Amar: This is a great question, because we believe the immediate impact comes from augmenting the work that humans do even as broader automation happens. My belief is that gen AI agents can and will transform various corporate services and workflows. It will help us automate a lot of tasks that were not adding value while creating a better experience for both employees and customers. For example, corporate service centers will become more productive and have better outcomes and deliver better experiences.

In fact, we’re seeing this new technology help reduce employee attrition. As gen AI becomes more pervasive, we may see an emergence of more specialization in service work. Some companies and functions will lead adoption and become fully automated, and some may differentiate by building more high-touch interactions.

Nicolai von Bismarck: As an example, we’re seeing this idea in practice at one German company, which is implementing an AI-based learning and coaching engine. And it’s already seeing a significant improvement in the employee experience as measured while it’s rolling this out, both from a supervisor and employee perspective, because the employees feel that they’re finally getting feedback that is relevant to them. They’re feeling valued, they’re progressing in their careers, and they’re also learning new skills. For instance, instead of taking just retention calls, they can now take sales calls. This experience is providing more variety in the work that people do and less dull repetition.

Lari Hämäläinen: Let me take a broader view. We had earlier modeled a midpoint scenario when 50 percent of today’s work activities could be automated to occur around 2055. But the technology is evolving so much more quickly than anyone had expected—just look at the capabilities of some LLMs that are approaching, and even surpassing, in certain cases, average human levels of proficiency. The innovations in gen AI have helped accelerate that midpoint scenario by about a decade. And it’s going to keep getting faster, so we can expect the adoption timeline to shrink even further. That’s a crucial development that every executive needs to understand.

Jorge Amar is a senior partner in McKinsey’s Miami office, Lari Hämäläinen is a senior partner in the Seattle office, and Nicolai von Bismarck is a partner in the Boston office. Barr Seitz is director of global publishing for McKinsey Digital and is based in the New York office.


[MCQs] Artificial Intelligence

Introduction to intelligent systems and intelligent agents, search techniques, knowledge and reasoning, uncertain knowledge and reasoning, natural language processing.

1. What is the main task of a problem-solving agent? A. Solve the given problem and reach the goal B. Find out which sequence of actions will get it to the goal state C. Both A and B D. None of the Above Ans : C Explanation: Problem-solving agents are a kind of goal-based agent.

2. What is Initial state + Goal state in Search Terminology? A. Problem Space B. Problem Instance C. Problem Space Graph D. Admissibility Ans : B Explanation: Problem Instance : It is Initial state + Goal state.

3. What is the time complexity of the breadth-first search algorithm? A. b B. b^d C. b^2 D. b^b Ans : B Explanation: The time complexity of breadth-first search is O(b^d), where b is the branching factor and d is the depth of the shallowest goal.

4. Depth-First Search is implemented in recursion with a _______ data structure. A. LIFO B. LILO C. FIFO D. FILO Ans : A Explanation: Depth-first search relies on a LIFO stack; in a recursive implementation, the call stack plays that role.

5. How many types are available in uninformed search method? A. 2 B. 3 C. 4 D. 5 Ans : D Explanation: The five types of uninformed search method are Breadth-first, Uniform-cost, Depth-first, Depth-limited and Bidirectional search.

6. Which data structure is conveniently used to implement BFS? A. Stacks B. Queues C. Priority Queues D. None of the Above Ans : B Explanation: A queue is the most convenient data structure, but the memory used to store nodes can be reduced by using circular queues.

7. How many types of informed search method are in artificial intelligence? A. 2 B. 3 C. 4 D. 5 Ans : C Explanation: The four types of informed search method are best-first search, Greedy best-first search, A* search and memory bounded heuristic search.

8. The greedy search strategy chooses which node for expansion? A. Shallowest B. Deepest C. The one closest to the goal node D. Minimum heuristic cost Ans : C Explanation: Greedy best-first search expands the node that appears to be closest to the goal, as estimated by the heuristic function. Whether the heuristic is minimized or maximized depends on the application to which the algorithm is applied.

9. What is disadvantage of Greedy Best First Search? A. This algorithm is neither complete, nor optimal. B. It can get stuck in loops. It is not optimal. C. There can be multiple long paths with the cost ≤ C* D. may not terminate and go on infinitely on one path Ans : B Explanation: The disadvantage of Greedy Best First Search is that it can get stuck in loops. It is not optimal.

10. Searching the Internet using a query is the use of a ___________ type of agent. A. Offline agent B. Online Agent C. Goal Based D. Both B and C Ans : D Explanation: Refer to the definitions of both types of agent.

11. An AI system is composed of? A. agent B. environment C. Both A and B D. None of the Above Ans : C Explanation: An AI system is composed of an agent and its environment.

12. Which instruments are used for perceiving and acting upon the environment? A. Sensors and Actuators B. Sensors C. Perceiver D. Perceiver and Sensor Ans : A Explanation: An agent is anything that can be viewed as perceiving and acting upon the environment through the sensors and actuators.

13. Which of the following is not a type of agents in artificial intelligence? A. Model based B. Utility based C. Simple reflex D. target based Ans : D Explanation: The four types of agents are Simple reflex, Model based, Goal based and Utility based agents.

14. Which is used to improve the agents performance? A. Perceiving B. Observing C. Learning D. Sequence Ans : C Explanation: An agent can improve its performance by storing its previous actions.

15. Rationality of an agent does not depend on? A. performance measures B. Percept Sequence C. reaction D. actions Ans : C Explanation: Rationality of an agent does not depend on reaction.

16. Agent’s structure can be viewed as ? A. Architecture B. Agent Program C. Architecture + Agent Program D. None of the Above Ans : C Explanation: Agent’s structure can be viewed as – Agent = Architecture + Agent Program
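
The split between architecture and agent program can be sketched in a few lines. This assumes the familiar two-square vacuum world: the function `reflex_vacuum_agent` plays the role of the agent program, and the hypothetical `run` loop stands in for the architecture that delivers percepts and carries out actions. All names here are illustrative.

```python
def reflex_vacuum_agent(percept):
    """Agent program: maps the current percept directly to an action."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"

def run(agent_program, world, location, steps):
    """Stand-in 'architecture': senses the world, calls the program, acts."""
    history = []
    for _ in range(steps):
        action = agent_program((location, world[location]))
        history.append(action)
        if action == "Suck":
            world[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
    return history, world

history, final_world = run(reflex_vacuum_agent, {"A": "Dirty", "B": "Dirty"}, "A", 4)
print(history, final_world)
```

The same agent program could be run on a different architecture (a simulator, a robot) without changing its logic.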

17. What is the action of task environment in artificial intelligence? A. Problem B. Solution C. Agent D. Observation Ans : A Explanation: Task environments will pose a problem and rational agent will find the solution for the posed problem.

18. What kind of environment is crossword puzzle? A. Dynamic B. Static C. Semi Dynamic D. Continuous Ans : B Explanation: Because the problem in a crossword puzzle is posed entirely at the beginning, the environment is static.

19. What could possibly be the environment of a Satellite Image Analysis System? A. Computers in space and earth B. Image categorization techniques C. Statistical data on image pixel intensity value and histograms D. All of the above Ans : D Explanation: An environment is something which agent stays in.

20. Which kind of agent architecture should an agent use? A. Relaxed B. Relational C. Both A and B D. None of the Above Ans : C Explanation: Because an agent may experience any kind of situation, it should use all kinds of architecture.

21. Which depends on the percepts and actions available to the agent? a) Agent b) Sensor c) Design problem d) None of the mentioned Answer: c Explanation: The design problem depends on the percepts and actions available to the agent, the goals that the agent’s behavior should satisfy.

22. Which were built in such a way that humans had to supply the inputs and interpret the outputs? a) Agents b) AI system c) Sensor d) Actuators Answer: b Explanation: AI systems were built in such a way that humans had to supply the inputs and interpret the outputs.

23. Which technology uses miniaturized accelerometers and gyroscopes? a) Sensors b) Actuators c) MEMS d) None of the mentioned Answer: c Explanation: Micro ElectroMechanical System uses miniaturized accelerometers and gyroscopes and is used to produce actuators.

24. What is used for tracking uncertain events? a) Filtering algorithm b) Sensors c) Actuators d) None of the mentioned Answer: a Explanation: Filtering algorithm is used for tracking uncertain events because in this the real perception is involved.

25. What is not represented by using propositional logic? a) Objects b) Relations c) Both Objects & Relations d) None of the mentioned Answer: c Explanation: Objects and relations are not represented by using propositional logic explicitly.

26. Which functions are used as preferences over state history? a) Award b) Reward c) Explicit d) Implicit Answer: b Explanation: Reward functions express preferences over state histories, and preferences over individual states may really be compiled from them.

27. Which kind of agent architecture should an agent use? a) Relaxed b) Logic c) Relational d) All of the mentioned Answer: d Explanation: Because an agent may experience any kind of situation, it should use all kinds of architecture.

28. Specify the agent architecture name that is used to capture all kinds of actions. a) Complex b) Relational c) Hybrid d) None of the mentioned Answer: c Explanation: A complete agent must be able to do anything by using hybrid architecture.

29. Which agent enables the deliberation about the computational entities and actions? a) Hybrid b) Reflective c) Relational d) None of the mentioned Answer: b Explanation: Because it enables the agent to capture within itself.

30. What can operate over the joint state space? a) Decision-making algorithm b) Learning algorithm c) Complex algorithm d) Both Decision-making & Learning algorithm

31. What is the action of task environment in artificial intelligence? a) Problem b) Solution c) Agent d) Observation Answer: a Explanation: Task environments will pose a problem and rational agent will find the solution for the posed problem.

32. What is the expansion of PEAS in task environment? a) Peer, Environment, Actuators, Sense b) Perceiving, Environment, Actuators, Sensors c) Performance, Environment, Actuators, Sensors d) None of the mentioned Answer: c Explanation: Task environment will contain PEAS which is used to perform the action independently.

33. What kind of observing environments are present in artificial intelligence? a) Partial b) Fully c) Learning d) Both Partial & Fully Answer: d Explanation: Partial and fully observable environments are present in artificial intelligence.

34. What kind of environment is strategic in artificial intelligence? a) Deterministic b) Rational c) Partial d) Stochastic Answer: a Explanation: An environment that is deterministic except for the actions of other agents is called strategic.

35. What kind of environment is crossword puzzle? a) Static b) Dynamic c) Semi Dynamic d) None of the mentioned Answer: a Explanation: Because the problem in a crossword puzzle is posed entirely at the beginning, the environment is static.

36. What kind of behavior does the stochastic environment possess? a) Local b) Deterministic c) Rational d) Primary Answer: c Explanation: Stochastic behavior is rational because it avoids the pitfall of predictability.

37. Which is used to select the particular environment to run the agent? a) Environment creator b) Environment Generator c) Both Environment creator & Generator d) None of the mentioned Answer: b Explanation: None.

38. Which environment is called semi dynamic? a) Environment does not change with the passage of time b) Agent performance changes c) Environment will be changed d) Environment does not change with the passage of time, but Agent performance changes Answer: d Explanation: An environment is semi dynamic if it does not change with the passage of time but the agent's performance score does.

39. Where is the performance measure included? a) Rational agent b) Task environment c) Actuators d) Sensor Answer: b Explanation: In PEAS, P stands for performance measure, which is always included in the task environment.

1. Which search strategy is also called blind search? a) Uninformed search b) Informed search c) Simple reflex search d) All of the mentioned Answer: a Explanation: In blind search, we search the states without any additional information, so the uninformed search method is blind search.

2. How many types are available in uninformed search method? a) 3 b) 4 c) 5 d) 6 Answer: c Explanation: The five types of uninformed search method are Breadth-first, Uniform-cost, Depth-first, Depth-limited and Bidirectional search.

3. Which search is implemented with an empty first-in-first-out queue? a) Depth-first search b) Breadth-first search c) Bidirectional search d) None of the mentioned Answer: b Explanation: Because of FIFO queue, it will assure that the nodes that are visited first will be expanded first.

4. When is breadth-first search optimal? a) When there is less number of nodes b) When all step costs are equal c) When all step costs are unequal d) None of the mentioned Answer: b Explanation: Because it always expands the shallowest unexpanded node, the first solution found is the cheapest when every step costs the same.

5. How many successors are generated in backtracking search? a) 1 b) 2 c) 3 d) 4 Answer: a Explanation: Each partially expanded node remembers which successor to generate next because of these conditions, it uses less memory.

6. What is the space complexity of Depth-first search? a) O(b) b) O(bl) c) O(m) d) O(bm) Answer: d Explanation: O(bm) is the space complexity where b is the branching factor and m is the maximum depth of the search tree.

7. How many parts does a problem consist of? a) 1 b) 2 c) 3 d) 4 Answer: d Explanation: The four parts of the problem are initial state, set of actions, goal test and path cost.
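
The four parts listed above can be written down as a small data structure. The sketch below is one possible encoding, not a standard API: the class `Problem`, the helper `run_plan`, and the toy number puzzle are all assumptions made for illustration. Path cost is modeled as the sum of per-step costs.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, List, Tuple

@dataclass(frozen=True)
class Problem:
    initial_state: Any
    actions: Callable[[Any], Iterable[Tuple[str, Any]]]   # state -> (action name, next state)
    goal_test: Callable[[Any], bool]
    step_cost: Callable[[Any, str, Any], float]           # path cost = sum of step costs

def run_plan(problem: Problem, plan: List[str]) -> Tuple[Any, float]:
    """Apply a sequence of action names; return the final state and the path cost."""
    state, total = problem.initial_state, 0.0
    for name in plan:
        successors = dict(problem.actions(state))
        nxt = successors[name]
        total += problem.step_cost(state, name, nxt)
        state = nxt
    return state, total

# Toy puzzle (hypothetical): reach 6 from 1 using "inc" (+1) and "double" (*2).
toy = Problem(
    initial_state=1,
    actions=lambda s: [("inc", s + 1), ("double", s * 2)],
    goal_test=lambda s: s == 6,
    step_cost=lambda s, a, t: 1.0,
)
final_state, cost = run_plan(toy, ["inc", "inc", "double"])
print(final_state, cost, toy.goal_test(final_state))
```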

8. Which algorithm is used to solve any kind of problem? a) Breadth-first algorithm b) Tree algorithm c) Bidirectional search algorithm d) None of the mentioned Answer: b Explanation: Tree algorithm is used because specific variants of the algorithm embed different strategies.

9. Which search algorithm imposes a fixed depth limit on nodes? a) Depth-limited search b) Depth-first search c) Iterative deepening search d) Bidirectional search Answer: a Explanation: None.
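
A minimal sketch of depth-limited search follows, assuming an adjacency-list graph. The function name and the example graph `CYCLIC` are illustrative. For brevity this version returns `None` both when the limit cuts the search off and when no path exists, so it does not distinguish cutoff from failure.

```python
def depth_limited_search(graph, node, goal, limit):
    """Recursive depth-first search that goes at most `limit` edges below `node`."""
    if node == goal:
        return [node]
    if limit == 0:
        return None                      # depth limit reached: stop descending
    for nxt in graph.get(node, []):
        sub = depth_limited_search(graph, nxt, goal, limit - 1)
        if sub is not None:
            return [node] + sub
    return None

# Hypothetical graph with a cycle A <-> B; the limit guarantees termination.
CYCLIC = {"A": ["B"], "B": ["A", "C"], "C": ["D"], "D": []}
print(depth_limited_search(CYCLIC, "A", "D", 2))
print(depth_limited_search(CYCLIC, "A", "D", 3))
```

With a limit of 2 the goal, which is three edges away, is out of reach. Raising the limit to 3 finds it, which is the idea iterative deepening builds on.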

10. Which search implements stack operation for searching the states? a) Depth-limited search b) Depth-first search c) Breadth-first search d) None of the mentioned Answer: b
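
To make the stack explicit, here is a small iterative depth-first search sketch. A plain Python list serves as the LIFO stack; the graph `TREE` and the function name `dfs` are invented for the example.

```python
def dfs(graph, start, goal):
    """Return some path from start to goal found depth-first, or None."""
    stack = [(start, [start])]           # LIFO stack of (node, path so far)
    visited = set()
    while stack:
        node, path = stack.pop()         # the most recently pushed node leaves first
        if node == goal:
            return path
        if node in visited:
            continue
        visited.add(node)
        # Push in reverse so the first-listed successor is explored first.
        for nxt in reversed(graph.get(node, [])):
            if nxt not in visited:
                stack.append((nxt, path + [nxt]))
    return None

# Hypothetical example tree.
TREE = {"A": ["B", "C"], "B": ["D"], "C": ["G"], "D": [], "G": []}
print(dfs(TREE, "A", "G"))
```

The search dives through B and D before backtracking to C, which is the behavior the stack produces.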

11. What is the other name of informed search strategy? a) Simple search b) Heuristic search c) Online search d) None of the mentioned Answer: b Explanation: A key point of informed search strategy is the heuristic function, so it is also called heuristic search.

12. How many types of informed search method are in artificial intelligence? a) 1 b) 2 c) 3 d) 4 Answer: d Explanation: The four types of informed search method are best-first search, Greedy best-first search, A* search and memory bounded heuristic search.

13. Which search uses the problem specific knowledge beyond the definition of the problem? a) Informed search b) Depth-first search c) Breadth-first search d) Uninformed search Answer: a Explanation: Informed search uses knowledge beyond the problem definition itself, so it can find solutions more efficiently.

14. Which function will select the lowest expansion node at first for evaluation? a) Greedy best-first search b) Best-first search c) Depth-first search d) None of the mentioned Answer: b Explanation: The lowest expansion node is selected because the evaluation measures distance to the goal.

15. What is the heuristic function of greedy best-first search? a) f(n) != h(n) b) f(n) < h(n) c) f(n) = h(n) d) f(n) > h(n) Answer: c Explanation: None.
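
The rule f(n) = h(n) can be sketched with a priority queue ordered by the heuristic alone. The graph `ROADS`, the heuristic table `H`, and the function name are made-up values for illustration; edge costs are stored but deliberately ignored by the search.

```python
import heapq

def greedy_best_first(graph, h, start, goal):
    """Expand the node with the smallest h(n); path cost so far is ignored."""
    frontier = [(h[start], start, [start])]   # priority is f(n) = h(n)
    visited = set()
    while frontier:
        _, node, path = heapq.heappop(frontier)
        if node == goal:
            return path
        if node in visited:
            continue
        visited.add(node)
        for nxt, _cost in graph.get(node, []):
            if nxt not in visited:
                heapq.heappush(frontier, (h[nxt], nxt, path + [nxt]))
    return None

# Hypothetical weighted graph: node -> list of (neighbor, step cost).
ROADS = {"S": [("A", 1), ("B", 3)], "A": [("G", 10)], "B": [("G", 2)], "G": []}
H = {"S": 2, "A": 1, "B": 2, "G": 0}
print(greedy_best_first(ROADS, H, "S", "G"))
```

Here the search goes through A because h(A) is smallest, giving a path of cost 11 even though the route through B costs only 5. This is a small instance of greedy best-first search not being optimal.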

16. Which search uses only the linear space for searching? a) Best-first search b) Recursive best-first search c) Depth-first search d) None of the mentioned Answer: b Explanation: Recursive best-first search will mimic the operation of standard best-first search, but using only the linear space.

17. Which method is used to search better by learning? a) Best-first search b) Depth-first search c) Metalevel state space d) None of the mentioned Answer: c Explanation: This search strategy improves problem-solving efficiency by using learning.

18. Which search is complete and optimal when h(n) is consistent? a) Best-first search b) Depth-first search c) Both Best-first & Depth-first search d) A* search Answer: d Explanation: None.
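
For comparison with greedy search, here is a compact A* sketch that orders the frontier by f(n) = g(n) + h(n). The graph `ROADS` and heuristic `H` are hypothetical, chosen so that `H` is consistent (for every edge, h(n) is at most the step cost plus h of the successor).

```python
import heapq

def a_star(graph, h, start, goal):
    """Return (path, cost) of a cheapest path, or None if the goal is unreachable."""
    frontier = [(h[start], 0, start, [start])]    # entries are (f, g, node, path)
    best_g = {start: 0}
    while frontier:
        _f, g, node, path = heapq.heappop(frontier)
        if node == goal:
            return path, g
        if g > best_g.get(node, float("inf")):
            continue                              # stale entry: a cheaper route was found
        for nxt, cost in graph.get(node, []):
            new_g = g + cost
            if new_g < best_g.get(nxt, float("inf")):
                best_g[nxt] = new_g
                heapq.heappush(frontier, (new_g + h[nxt], new_g, nxt, path + [nxt]))
    return None

# Hypothetical weighted graph: node -> list of (neighbor, step cost).
ROADS = {"S": [("A", 1), ("B", 3)], "A": [("G", 10)], "B": [("G", 2)], "G": []}
H = {"S": 2, "A": 1, "B": 2, "G": 0}
print(a_star(ROADS, H, "S", "G"))
```

On this graph A* returns the route through B with cost 5, because the accumulated cost g(n) outweighs the attractive heuristic value of A.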

19. Which is used to improve the performance of heuristic search? a) Quality of nodes b) Quality of heuristic function c) Simple form of nodes d) None of the mentioned Answer: b Explanation: Good heuristic can be constructed by relaxing the problem, So the performance of heuristic search can be improved.

20. Which search method will expand the node that is closest to the goal? a) Best-first search b) Greedy best-first search c) A* search d) None of the mentioned Answer: b Explanation: Because of using greedy best-first search, It will quickly lead to the solution of the problem.

21. In many problems the path to goal is irrelevant, this class of problems can be solved using ____________ a) Informed Search Techniques b) Uninformed Search Techniques c) Local Search Techniques d) Informed & Uninformed Search Techniques Answer: c Explanation: If the path to the goal does not matter, we might consider a different class of algorithms, ones that do not worry about paths at all. Local search algorithms operate using a single current state (rather than multiple paths) and generally move only to neighbors of that state.

22. Though local search algorithms are not systematic, key advantages would include __________ a) Less memory b) More time c) Finds a solution in large infinite space d) Less memory & Finds a solution in large infinite space Answer: d Explanation: Two advantages: (1) they use very little memory-usually a constant amount; and (2) they can often find reasonable solutions in large or infinite (continuous) state spaces for which systematic algorithms are unsuitable.

23. A complete, local search algorithm always finds goal if one exists, an optimal algorithm always finds a global minimum/maximum. a) True b) False Answer: a Explanation: An algorithm is complete if it finds a solution if exists and optimal if finds optimal goal (minimum or maximum).

24. _______________ Is an algorithm, a loop that continually moves in the direction of increasing value – that is uphill. a) Up-Hill Search b) Hill-Climbing c) Hill algorithm d) Reverse-Down-Hill search Answer: b Explanation: Refer the definition of Hill-Climbing approach.

25. When will Hill-Climbing algorithm terminate? a) Stopping criterion met b) Global Min/Max is achieved c) No neighbor has higher value d) All of the mentioned Answer: c Explanation: When no neighbor is having higher value, algorithm terminates fetching local min/max.

26. What are the main cons of hill-climbing search? a) Terminates at local optimum & Does not find optimum solution b) Terminates at global optimum & Does not find optimum solution c) Does not find optimum solution & Fail to find a solution d) Fail to find a solution Answer: a Explanation: Algorithm terminates at local optimum values, hence fails to find optimum solution.
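
The hill-climbing questions above can be illustrated with a short sketch of steepest-ascent hill climbing on a one-dimensional landscape. The list `LANDSCAPE` and the helper names are invented for the example; states are list indices and neighbors are the adjacent indices.

```python
def hill_climb(value, neighbors, start):
    """Move to the best neighbor until no neighbor has a higher value."""
    current = start
    while True:
        best = max(neighbors(current), key=value, default=current)
        if value(best) <= value(current):
            return current               # no uphill move left: a local maximum
        current = best

# Hypothetical landscape with a local peak (index 2) and a global peak (index 6).
LANDSCAPE = [1, 3, 5, 4, 2, 6, 9, 7]

def value(i):
    return LANDSCAPE[i]

def neighbors(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(LANDSCAPE)]

print(hill_climb(value, neighbors, 0))
print(hill_climb(value, neighbors, 4))
```

Starting from index 0 the climb stops at the local peak of height 5, while starting from index 4 it reaches the global peak of height 9. The outcome depends on the starting state, which is why random restarts are a common remedy.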