Statistical Papers

Statistical Papers is a forum for presentation and critical assessment of statistical methods encouraging the discussion of methodological foundations and potential applications.

- The Journal stresses statistical methods that have broad applications, giving special attention to those relevant to the economic and social sciences.

- Covers all topics of modern data science, such as frequentist and Bayesian design and inference as well as statistical learning.

- Contains original research papers (regular articles), survey articles, short communications, reports on statistical software, and book reviews.

- High author satisfaction with 90% likely to publish in the journal again.

- Werner G. Müller,

- Carsten Jentsch,

- Shuangzhe Liu,

- Ulrike Schneider

Latest issue

Volume 65, Issue 2

Latest articles

Analyzing quantitative performance: Bayesian estimation of 3-component mixture geometric distributions based on Kumaraswamy prior

- Nadeem Akhtar

- Sajjad Ahmad Khan

- Haifa Alqahtani

Variation comparison between infinitely divisible distributions and the normal distribution

Multivariate stochastic comparisons of sequential order statistics with non-identical components

- Tanmay Sahoo

- Nil Kamal Hazra

- Narayanaswamy Balakrishnan

New copula families and mixing properties

- Martial Longla

Multiple random change points in survival analysis with applications to clinical trials

Journal updates

Write & submit: Overleaf LaTeX template

Journal information

- Australian Business Deans Council (ABDC) Journal Quality List

- Current Index to Statistics

- Google Scholar

- Japanese Science and Technology Agency (JST)

- Mathematical Reviews

- Norwegian Register for Scientific Journals and Series

- OCLC WorldCat Discovery Service

- Research Papers in Economics (RePEc)

- Science Citation Index Expanded (SCIE)

- TD Net Discovery Service

- UGC-CARE List (India)

Rights and permissions

Editorial policies

© Springer-Verlag GmbH Germany, part of Springer Nature

Research Papers / Publications

A generalization to the log-inverse Weibull distribution and its applications in cancer research

In this paper we consider a generalization of a log-transformed version of the inverse Weibull distribution. Several theoretical properties of the distribution are studied in detail including expressions for i...

Approximations of conditional probability density functions in Lebesgue spaces via mixture of experts models

Mixture of experts (MoE) models are widely applied for conditional probability density estimation problems. We demonstrate the richness of the class of MoE models by proving denseness results in Lebesgue space...

Structural properties of generalised Planck distributions

A family of generalised Planck (GP) laws is defined and its structural properties explored. Sometimes subject to parameter restrictions, a GP law is a randomly scaled gamma law; it arises as the equilibrium la...

New class of Lindley distributions: properties and applications

A new generalized class of Lindley distribution is introduced in this paper. This new class is called the T-Lindley{Y} class of distributions, and it is generated by using the quantile functions of uniform, expon...

Tolerance intervals in statistical software and robustness under model misspecification

A tolerance interval is a statistical interval that covers at least 100ρ% of the population of interest with a 100(1−α)% confidence, where ρ and α are pre-specified values in (0, 1). In many scientific fields, su...

Combining assumptions and graphical network into gene expression data analysis

Analyzing gene expression data rigorously requires taking assumptions into consideration but also relies on using information about network relations that exist among genes. Combining these different elements ...

A comparison of zero-inflated and hurdle models for modeling zero-inflated count data

Counts data with excessive zeros are frequently encountered in practice. For example, the number of health services visits often includes many zeros representing the patients with no utilization during a follo...

A general stochastic model for bivariate episodes driven by a gamma sequence

We propose a new stochastic model describing the joint distribution of (X, N), where N is a counting variable while X is the sum of N independent gamma random variables. We present the main properties of this gene...

A flexible multivariate model for high-dimensional correlated count data

We propose a flexible multivariate stochastic model for over-dispersed count data. Our methodology is built upon mixed Poisson random vectors (Y_1, …, Y_d), where the {Y_i} are conditionally independent Poisson random...

Generalized fiducial inference on the mean of zero-inflated Poisson and Poisson hurdle models

Zero-inflated and hurdle models are widely applied to count data possessing excess zeros, where they can simultaneously model the process from how the zeros were generated and potentially help mitigate the eff...

Multivariate distributions of correlated binary variables generated by pair-copulas

Correlated binary data are prevalent in a wide range of scientific disciplines, including healthcare and medicine. The generalized estimating equations (GEEs) and the multivariate probit (MP) model are two of ...

On two extensions of the canonical Feller–Spitzer distribution

We introduce two extensions of the canonical Feller–Spitzer distribution from the class of Bessel densities, which comprise two distinct stochastically decreasing one-parameter families of positive absolutely ...

A new trivariate model for stochastic episodes

We study the joint distribution of stochastic events described by (X, Y, N), where N has a 1-inflated (or deflated) geometric distribution and X, Y are the sum and the maximum of N exponential random variables. Mod...

A flexible univariate moving average time-series model for dispersed count data

Al-Osh and Alzaid (1988) consider a Poisson moving average (PMA) model to describe the relation among integer-valued time series data; this model, however, is constrained by the underlying equi-dispersion assumpt...

Spatio-temporal analysis of flood data from South Carolina

To investigate the relationship between flood gage height and precipitation in South Carolina from 2012 to 2016, we built a conditional autoregressive (CAR) model using a Bayesian hierarchical framework. This ...

Affine-transformation invariant clustering models

We develop a cluster process which is invariant with respect to unknown affine transformations of the feature space without knowing the number of clusters in advance. Specifically, our proposed method can iden...

Distributions associated with simultaneous multiple hypothesis testing

We develop the distribution for the number of hypotheses found to be statistically significant using the rule from Simes (Biometrika 73: 751–754, 1986) for controlling the family-wise error rate (FWER). We fin...

New families of bivariate copulas via unit Weibull distortion

This paper introduces a new family of bivariate copulas constructed using a unit Weibull distortion. Existing copulas play the role of the base or initial copulas that are transformed or distorted into a new f...

Generalized logistic distribution and its regression model

A new generalized asymmetric logistic distribution is defined. In some cases, existing three parameter distributions provide poor fit to heavy tailed data sets. The proposed new distribution consists of only t...

The spherical-Dirichlet distribution

Today, data mining and gene expressions are at the forefront of modern data analysis. Here we introduce a novel probability distribution that is applicable in these fields. This paper develops the proposed sph...

Item fit statistics for Rasch analysis: can we trust them?

To compare fit statistics for the Rasch model based on estimates of unconditional or conditional response probabilities.

Exact distributions of statistics for making inferences on mixed models under the default covariance structure

At this juncture when mixed models are heavily employed in applications ranging from clinical research to business analytics, the purpose of this article is to extend the exact distributional result of Wald (A...

A new discrete Pareto type (IV) model: theory, properties and applications

Discrete analogue of a continuous distribution (especially in the univariate domain) is not new in the literature. The work of discretizing continuous distributions began with the paper by Nakagawa and Osaki (197...

Density deconvolution for generalized skew-symmetric distributions

The density deconvolution problem is considered for random variables assumed to belong to the generalized skew-symmetric (GSS) family of distributions. The approach is semiparametric in that the symmetric comp...

The unifed distribution

We introduce a new distribution with support on (0,1) called unifed. It can be used as the response distribution for a GLM and it is suitable for data aggregation. We make a comparison to the beta regression. ...

On Burr III Marshal Olkin family: development, properties, characterizations and applications

In this paper, a flexible family of distributions with unimodal, bimodal, increasing, increasing and decreasing, inverted bathtub and modified bathtub hazard rate called Burr III-Marshal Olkin-G (BIIIMO-G) fam...

The linearly decreasing stress Weibull (LDSWeibull): a new Weibull-like distribution

Motivated by an engineering pullout test applied to a steel strip embedded in earth, we show how the resulting linearly decreasing force leads naturally to a new distribution, if the force under constant stress i...

Meta analysis of binary data with excessive zeros in two-arm trials

We present a novel Bayesian approach to random effects meta analysis of binary data with excessive zeros in two-arm trials. We discuss the development of likelihood accounting for excessive zeros, the prior, a...

On (p_1, …, p_k)-spherical distributions

The class of (p_1, …, p_k)-spherical probability laws and a method of simulating random vectors following such distributions are introduced using a new stochastic vector representation. A dynamic geometric disintegra...

A new class of survival distribution for degradation processes subject to shocks

Many systems experience gradual degradation while simultaneously being exposed to a stream of random shocks of varying magnitudes that eventually cause failure when a shock exceeds the residual strength of the...

A new extended normal regression model: simulations and applications

Various applications in natural science require models more accurate than well-known distributions. In this context, several generators of distributions have been recently proposed. We introduce a new four-par...

Multiclass analysis and prediction with network structured covariates

Technological advances associated with data acquisition are leading to the production of complex structured data sets. The recent development on classification with multiclass responses makes it possible to in...

High-dimensional star-shaped distributions

Stochastic representations of star-shaped distributed random vectors having heavy or light tail density generating function g are studied for increasing dimensions along with corresponding geometric measure repre...

A unified complex noncentral Wishart type distribution inspired by massive MIMO systems

The eigenvalue distributions from a complex noncentral Wishart matrix S = X^H X have been the subject of interest in various real world applications, where X is assumed to be complex matrix variate normally distribute...

Particle swarm based algorithms for finding locally and Bayesian D-optimal designs

When a model-based approach is appropriate, an optimal design can guide how to collect data judiciously for making reliable inference at minimal cost. However, finding optimal designs for a statistical model w...

Admissible Bernoulli correlations

A multivariate symmetric Bernoulli distribution has marginals that are uniform over the pair {0,1}. Consider the problem of sampling from this distribution given a prescribed correlation between each pair of v...

On p-generalized elliptical random processes

We introduce rank-k-continuous axis-aligned p-generalized elliptically contoured distributions and study their properties such as stochastic representations, moments, and density-like representations. Applying th...

Parameters of stochastic models for electroencephalogram data as biomarkers for child’s neurodevelopment after cerebral malaria

The objective of this study was to test statistical features from the electroencephalogram (EEG) recordings as predictors of neurodevelopment and cognition of Ugandan children after coma due to cerebral malari...

A new generalization of generalized half-normal distribution: properties and regression models

In this paper, a new extension of the generalized half-normal distribution is introduced and studied. We assess the performance of the maximum likelihood estimators of the parameters of the new distribution vi...

Analytical properties of generalized Gaussian distributions

The family of Generalized Gaussian (GG) distributions has received considerable attention from the engineering community, due to the flexible parametric form of its probability density function, in modeling ma...

A new Weibull-X family of distributions: properties, characterizations and applications

We propose a new family of univariate distributions generated from the Weibull random variable, called a new Weibull-X family of distributions. Two special sub-models of the proposed family are presented and t...

The transmuted geometric-quadratic hazard rate distribution: development, properties, characterizations and applications

We propose a five parameter transmuted geometric quadratic hazard rate (TG-QHR) distribution derived from mixture of quadratic hazard rate (QHR), geometric and transmuted distributions via the application of t...

A nonparametric approach for quantile regression

Quantile regression estimates conditional quantiles and has wide applications in the real world. Estimating high conditional quantiles is an important problem. The regular quantile regression (QR) method often...

Mean and variance of ratios of proportions from categories of a multinomial distribution

Ratio distribution is a probability distribution representing the ratio of two random variables, each usually having a known distribution. Currently, there are results when the random variables in the ratio fo...

The power-Cauchy negative-binomial: properties and regression

We propose and study a new compounded model to extend the half-Cauchy and power-Cauchy distributions, which offers more flexibility in modeling lifetime data. The proposed model is analytically tractable and c...

Families of distributions arising from the quantile of generalized lambda distribution

In this paper, the class of T-R{generalized lambda} families of distributions based on the quantile of generalized lambda distribution has been proposed using the T-R{Y} framework. In the development of the T-R{

Risk ratios and Scanlan’s HRX

Risk ratios are distribution function tail ratios and are widely used in health disparities research. Let A and D denote advantaged and disadvantaged populations with cdfs F ...

Joint distribution of k-tuple statistics in zero-one sequences of Markov-dependent trials

We consider a sequence of n, n ≥ 3, zero (0)-one (1) Markov-dependent trials. We focus on k-tuples of 1s; i.e. runs of 1s of length at least equal to a fixed integer number k, 1 ≤ k ≤ n. The statistics denoting the n...

Quantile regression for overdispersed count data: a hierarchical method

Generalized Poisson regression is commonly applied to overdispersed count data, and focused on modelling the conditional mean of the response. However, conditional mean regression models may be sensitive to re...

Describing the Flexibility of the Generalized Gamma and Related Distributions

The generalized gamma (GG) distribution is a widely used, flexible tool for parametric survival analysis. Many alternatives and extensions to this family have been proposed. This paper characterizes the flexib...

- ISSN: 2195-5832 (electronic)

- Data Descriptor

- Open access

- Published: 03 May 2024

A dataset for measuring the impact of research data and their curation

- Libby Hemphill (ORCID: orcid.org/0000-0002-3793-7281) 1, 2

- Andrea Thomer 3

- Sara Lafia 1

- Lizhou Fan 2

- David Bleckley (ORCID: orcid.org/0000-0001-7715-4348) 1

- Elizabeth Moss 1

Scientific Data volume 11, Article number: 442 (2024)

- Research data

- Social sciences

Science funders, publishers, and data archives make decisions about how to responsibly allocate resources to maximize the reuse potential of research data. This paper introduces a dataset developed to measure the impact of archival and data curation decisions on data reuse. The dataset describes 10,605 social science research datasets, their curation histories, and reuse contexts in 94,755 publications that cover 59 years from 1963 to 2022. The dataset was constructed from study-level metadata, citing publications, and curation records available through the Inter-university Consortium for Political and Social Research (ICPSR) at the University of Michigan. The dataset includes information about study-level attributes (e.g., PIs, funders, subject terms); usage statistics (e.g., downloads, citations); archiving decisions (e.g., curation activities, data transformations); and bibliometric attributes (e.g., journals, authors) for citing publications. This dataset provides information on factors that contribute to long-term data reuse, which can inform the design of effective evidence-based recommendations to support high-impact research data curation decisions.

Background & Summary

Recent policy changes in funding agencies and academic journals have increased data sharing among researchers and between researchers and the public. Data sharing advances science and provides the transparency necessary for evaluating, replicating, and verifying results. However, many data-sharing policies do not explain what constitutes an appropriate dataset for archiving or how to determine the value of datasets to secondary users 1, 2, 3. Questions about how to allocate data-sharing resources efficiently and responsibly have gone unanswered 4, 5, 6. For instance, data-sharing policies recognize that not all data should be curated and preserved, but they do not articulate metrics or guidelines for determining what data are most worthy of investment.

Despite the potential for innovation and advancement that data sharing holds, the best strategies to prioritize datasets for preparation and archiving are often unclear. Some datasets are likely to have more downstream potential than others, and data curation policies and workflows should prioritize high-value data instead of being one-size-fits-all. Though prior research in library and information science has shown that the “analytic potential” of a dataset is key to its reuse value 7, work is needed to implement conceptual data reuse frameworks 8, 9, 10, 11, 12, 13, 14. In addition, publishers and data archives need guidance to develop metrics and evaluation strategies to assess the impact of datasets.

Several existing resources have been compiled to study the reuse of scholarly products, such as datasets (Table 1); however, none of these resources include explicit information on how curation processes are applied to data to increase their value, maximize their accessibility, and ensure their long-term preservation. The CCex (Curation Costs Exchange) provides models of curation services along with cost-related datasets shared by contributors but does not make explicit connections between them or include reuse information 15. Analyses on platforms such as DataCite 16 have focused on metadata completeness and record usage, but have not included related curation-level information. Analyses of GenBank 17 and FigShare 18, 19 citation networks do not include curation information. Related studies of GitHub repository reuse 20 and Softcite software citation 21 reveal significant factors that impact the reuse of secondary research products but do not focus on research data. RD-Switchboard 22 and DSKG 23 are scholarly knowledge graphs linking research data to articles, patents, and grants, but largely omit social science research data and do not include curation-level factors. To our knowledge, other studies of curation work in organizations similar to ICPSR – such as GESIS 24, Dataverse 25, and DANS 26 – have not made their underlying data available for analysis.

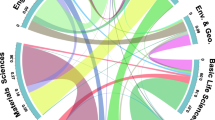

This paper describes a dataset 27 compiled for the MICA project (Measuring the Impact of Curation Actions) led by investigators at ICPSR, a large social science data archive at the University of Michigan. The dataset was originally developed to study the impacts of data curation and archiving on data reuse. The MICA dataset has supported several previous publications investigating the intensity of data curation actions 28, the relationship between data curation actions and data reuse 29, and the structures of research communities in a data citation network 30. Collectively, these studies help explain the return on various types of curatorial investments. The dataset that we introduce in this paper, which we refer to as the MICA dataset, has the potential to address research questions in the areas of science (e.g., knowledge production), library and information science (e.g., scholarly communication), and data archiving (e.g., reproducible workflows).

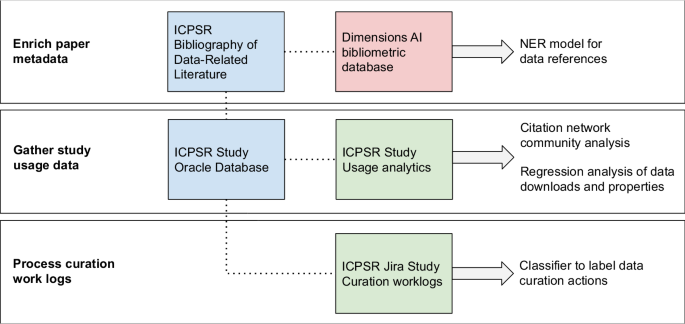

We constructed the MICA dataset 27 using records available at ICPSR, a large social science data archive at the University of Michigan. Dataset creation involved: collecting and enriching metadata for articles indexed in the ICPSR Bibliography of Data-related Literature against the Dimensions AI bibliometric database; gathering usage statistics for studies from ICPSR’s administrative database; processing data curation work logs from ICPSR’s project tracking platform, Jira; and linking data in social science studies and series to citing analysis papers (Fig. 1).

Fig. 1: Steps to prepare the MICA dataset for analysis. External sources are red, primary internal sources are blue, and internal linked sources are green.

Enrich paper metadata

The ICPSR Bibliography of Data-related Literature is a growing database of literature in which data from ICPSR studies have been used. Its creation was funded by the National Science Foundation (Award 9977984), and for the past 20 years it has been supported by ICPSR membership and multiple US federally-funded and foundation-funded topical archives at ICPSR. The Bibliography was originally launched in the year 2000 to aid in data discovery by providing a searchable database linking publications to the study data used in them. The Bibliography collects the universe of output based on the data shared in each study, which is made available through each ICPSR study’s webpage. The Bibliography contains both peer-reviewed and grey literature, which provides evidence for measuring the impact of research data. For an item to be included in the ICPSR Bibliography, it must contain an analysis of data archived by ICPSR or contain a discussion or critique of the data collection process, study design, or methodology 31. The Bibliography is manually curated by a team of librarians and information specialists at ICPSR who enter and validate entries. Some publications are supplied to the Bibliography by data depositors, and some citations are submitted to the Bibliography by authors who abide by ICPSR’s terms of use requiring them to submit citations to works in which they analyzed data retrieved from ICPSR. Most of the Bibliography is populated by Bibliography team members, who create custom queries for ICPSR studies performed across numerous sources, including Google Scholar, ProQuest, SSRN, and others. Each record in the Bibliography is one publication that has used one or more ICPSR studies. The version we used was captured on 2021-11-16 and included 94,755 publications.

To expand the coverage of the ICPSR Bibliography, we searched exhaustively for all ICPSR study names, unique numbers assigned to ICPSR studies, and DOIs 32 using a full-text index available through the Dimensions AI database 33. We accessed Dimensions through a license agreement with the University of Michigan. ICPSR Bibliography librarians and information specialists manually reviewed and validated new entries that matched one or more search criteria. We then used Dimensions to gather enriched metadata and full-text links for items in the Bibliography with DOIs. We matched 43% of the items in the Bibliography to enriched Dimensions metadata including abstracts, field of research codes, concepts, and authors’ institutional information; we also obtained links to full text for 16% of Bibliography items. Based on licensing agreements, we included Dimensions identifiers and links to full text so that users with valid publisher and database access can construct an enriched publication dataset.
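As a rough illustration of the identifier search described above, the sketch below scans free text for ICPSR study DOIs and extracts the study numbers. It assumes ICPSR DOIs follow the pattern `10.3886/ICPSR<number>.v<version>`; the sample sentence, the study numbers in it, and the function name `find_study_numbers` are hypothetical, and the actual search was run against the Dimensions full-text index rather than with a script like this.

```python
import re

# Assumed DOI shape: 10.3886/ICPSR<digits>, optionally followed by .v<digits>.
ICPSR_DOI = re.compile(r"10\.3886/ICPSR(\d+)(?:\.v\d+)?", re.IGNORECASE)


def find_study_numbers(text):
    """Return the sorted, de-duplicated study numbers cited by DOI in text."""
    return sorted({int(match.group(1)) for match in ICPSR_DOI.finditer(text)})


# Hypothetical sentence from a citing paper (study numbers are made up).
sample = (
    "Data come from doi:10.3886/ICPSR01234.v2 and "
    "https://doi.org/10.3886/icpsr56789.v1."
)
found = find_study_numbers(sample)
print(found)  # [1234, 56789]
```

Matches found this way would still need the manual review step the authors describe, since a DOI mention alone does not show that the data were analyzed.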

Gather study usage data

ICPSR maintains a relational administrative database, DBInfo, that organizes study-level metadata and information on data reuse across separate tables. Studies at ICPSR consist of one or more files collected at a single time or for a single purpose; studies in which the same variables are observed over time are grouped into series. Each study at ICPSR is assigned a DOI, and its metadata are stored in DBInfo. Study metadata follow the Data Documentation Initiative (DDI) Codebook 2.5 standard. DDI elements included in our dataset are title, ICPSR study identification number, DOI, authoring entities, description (abstract), funding agencies, subject terms assigned to the study during curation, and geographic coverage. We also created variables based on DDI elements: total variable count, the presence of survey question text in the metadata, the number of author entities, and whether an author entity was an institution. We gathered metadata for ICPSR’s 10,605 unrestricted public-use studies available as of 2021-11-16 (https://www.icpsr.umich.edu/web/pages/membership/or/metadata/oai.html).

To link study usage data with study-level metadata records, we joined study metadata from DBInfo with study usage information, which included total study downloads (data and documentation), individual data file downloads, and cumulative citations from the ICPSR Bibliography. We also gathered descriptive metadata for each study and its variables, which allowed us to summarize and append recoded fields onto the study-level metadata such as curation level, number and type of principal investigators, total variable count, and binary variables indicating whether the study data were made available for online analysis, whether survey question text was made searchable online, and whether the study variables were indexed for search. These characteristics describe aspects of the discoverability of the data to compare with other characteristics of the study. We used the study and series numbers included in the ICPSR Bibliography as unique identifiers to link papers to metadata and analyze the community structure of dataset co-citations in the ICPSR Bibliography 32.
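The join described above can be sketched as a left join keyed on the study number, so that every study keeps its metadata even when no usage record exists. This is a minimal sketch with hypothetical rows: only the `STUDY` key comes from the dataset description, while the other field names and the helper `left_join` are assumptions made for illustration.

```python
# Hypothetical study metadata and usage rows; values are made up.
study_metadata = [
    {"STUDY": 1001, "title": "Example Survey A", "curation_level": 2},
    {"STUDY": 1002, "title": "Example Survey B", "curation_level": 1},
    {"STUDY": 1003, "title": "Example Survey C", "curation_level": 3},
]
study_usage = [
    {"STUDY": 1001, "downloads": 250, "citations": 12},
    {"STUDY": 1003, "downloads": 40, "citations": 0},
]


def left_join(metadata, usage, key="STUDY"):
    """Attach usage fields to every metadata row; missing usage becomes None."""
    usage_by_key = {row[key]: row for row in usage}
    usage_fields = sorted({f for row in usage for f in row if f != key})
    joined = []
    for meta in metadata:
        match = usage_by_key.get(meta[key], {})
        merged = dict(meta)
        for field in usage_fields:
            merged[field] = match.get(field)
        joined.append(merged)
    return joined


studies = left_join(study_metadata, study_usage)
for row in studies:
    print(row)
```

Keeping unmatched studies with `None` rather than dropping them preserves the distinction between a study with zero recorded citations and a study with no usage record at all.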

Process curation work logs

Researchers deposit data at ICPSR for curation and long-term preservation. Between 2016 and 2020, more than 3,000 research studies were deposited with ICPSR. Since 2017, ICPSR has organized curation work into a central unit that provides levels of curation varying in the intensity and complexity of the data enhancement they provide. While the levels of curation are standardized as to effort (level one = least effort, level three = most effort), the specific curatorial actions undertaken for each dataset vary. The specific curation actions are captured in Jira, a work tracking program, which data curators at ICPSR use to collaborate and communicate their progress through tickets. We obtained access to a corpus of 669 completed Jira tickets corresponding to the curation of 566 unique studies between February 2017 and December 2019 28.

To process the tickets, we focused only on their work log portions, which contained free text descriptions of work that data curators had performed on a deposited study, along with the curators’ identifiers and timestamps. To protect the confidentiality of the data curators and the processing steps they performed, we collaborated with ICPSR’s curation unit to propose a classification scheme, which we used to train a Naive Bayes classifier and label curation actions in each work log sentence. The eight curation action labels we proposed 28 were: (1) initial review and planning, (2) data transformation, (3) metadata, (4) documentation, (5) quality checks, (6) communication, (7) other, and (8) non-curation work. We note that these categories of curation work are very specific to the curatorial processes and types of data stored at ICPSR, and may not match the curation activities at other repositories. After applying the classifier to the work log sentences, we obtained summary-level curation actions for a subset of all ICPSR studies (5%), along with the total number of hours spent on data curation for each study, and the proportion of time associated with each action during curation.
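To make the classification step more concrete, here is a minimal sketch of a multinomial Naive Bayes text classifier with add-one (Laplace) smoothing, written with the Python standard library only. It is not the authors' implementation: the training sentences are invented, only three of the eight labels are used, and the whitespace tokenizer is an assumption chosen for brevity.

```python
import math
from collections import Counter, defaultdict

# Hypothetical labeled work-log sentences (the real logs are confidential).
TRAINING = [
    ("recoded missing values in the data file", "data transformation"),
    ("converted the file from spss to stata", "data transformation"),
    ("updated study description and subject terms", "metadata"),
    ("added funding agency to the metadata record", "metadata"),
    ("emailed the depositor about missing codebook", "communication"),
    ("met with the pi to discuss the release", "communication"),
]


def tokenize(sentence):
    """Lowercase and split on whitespace (a deliberately simple tokenizer)."""
    return sentence.lower().split()


def train(examples):
    """Count label frequencies and per-label word frequencies."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocabulary = set()
    for sentence, label in examples:
        label_counts[label] += 1
        for token in tokenize(sentence):
            word_counts[label][token] += 1
            vocabulary.add(token)
    return label_counts, word_counts, vocabulary


def predict(sentence, model):
    """Return the label with the highest smoothed log-posterior score."""
    label_counts, word_counts, vocabulary = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in sorted(label_counts):
        score = math.log(label_counts[label] / total)
        denominator = sum(word_counts[label].values()) + len(vocabulary)
        for token in tokenize(sentence):
            if token in vocabulary:  # words never seen in training are skipped
                score += math.log((word_counts[label][token] + 1) / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train(TRAINING)
print(predict("recoded values and converted the file", model))
print(predict("emailed the pi about the release", model))
```

With these toy sentences the two calls print `data transformation` and `communication`. A production classifier would need far more training data and a held-out evaluation set.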

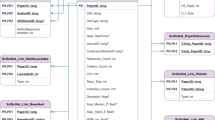

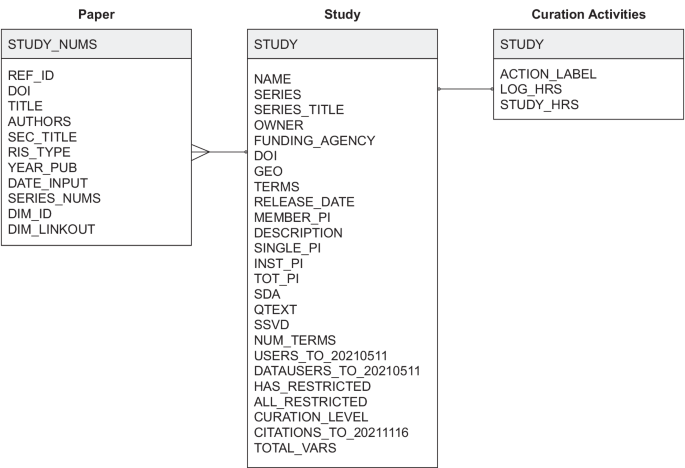

Data Records

The MICA dataset 27 connects records for each of ICPSR’s archived research studies to the research publications that use them and related curation activities available for a subset of studies (Fig. 2). Each of the three tables published in the dataset is available as a study archived at ICPSR. The data tables are distributed as statistical files available for use in SAS, SPSS, Stata, and R as well as delimited and ASCII text files. The dataset is organized around studies and papers as primary entities. The studies table lists ICPSR studies, their metadata attributes, and usage information; the papers table was constructed using the ICPSR Bibliography and Dimensions database; and the curation logs table summarizes the data curation steps performed on a subset of ICPSR studies.

Studies (“ICPSR_STUDIES”): 10,605 social science research datasets available through ICPSR up to 2021-11-16 with variables for ICPSR study number, digital object identifier, study name, series number, series title, authoring entities, full-text description, release date, funding agency, geographic coverage, subject terms, topical archive, curation level, single principal investigator (PI), institutional PI, the total number of PIs, total variables in data files, question text availability, study variable indexing, level of restriction, total unique users downloading study data files and codebooks, total unique users downloading data only, and total unique papers citing data through November 2021. Studies map to the papers and curation logs table through ICPSR study numbers as “STUDY”. However, not every study in this table will have records in the papers and curation logs tables.

Papers (“ICPSR_PAPERS”): 94,755 publications collected from 2000-08-11 to 2021-11-16 in the ICPSR Bibliography and enriched with metadata from the Dimensions database with variables for paper number, identifier, title, authors, publication venue, item type, publication date, input date, ICPSR series numbers used in the paper, ICPSR study numbers used in the paper, the Dimension identifier, and the Dimensions link to the publication’s full text. Papers map to the studies table through ICPSR study numbers in the “STUDY_NUMS” field. Each record represents a single publication, and because a researcher can use multiple datasets when creating a publication, each record may list multiple studies or series.

Curation logs (“ICPSR_CURATION_LOGS”): 649 curation logs for 563 ICPSR studies (although most studies in the subset had one curation log, some studies were associated with multiple logs, with a maximum of 10) curated between February 2017 and December 2019 with variables for study number, action labels assigned to work description sentences using a classifier trained on ICPSR curation logs, hours of work associated with a single log entry, and total hours of work logged for the curation ticket. Curation logs map to the study and paper tables through ICPSR study numbers as “STUDY”. Each record represents a single logged action, and future users may wish to aggregate actions to the study level before joining tables.

Fig. 2. Entity-relation diagram.

Technical Validation

We report on the reliability of the dataset’s metadata in the following subsections. To support future reuse of the dataset, curation services provided through ICPSR improved data quality by checking for missing values, adding variable labels, and creating a codebook.

All 10,605 studies available through ICPSR have a DOI and a full-text description summarizing what the study is about, the purpose of the study, the main topics covered, and the questions the PIs attempted to answer when they conducted the study. Personal names (i.e., principal investigators) and organizational names (i.e., funding agencies) are standardized against an authority list maintained by ICPSR; geographic names and subject terms are also standardized and hierarchically indexed in the ICPSR Thesaurus 34 . Many of ICPSR’s studies (63%) are in a series and are distributed through the ICPSR General Archive (56%), a non-topical archive that accepts any social or behavioral science data. While study data have been available through ICPSR since 1962, the earliest digital release date recorded for a study was 1984-03-18, when ICPSR’s database was first employed, and the most recent date is 2021-10-28 when the dataset was collected.

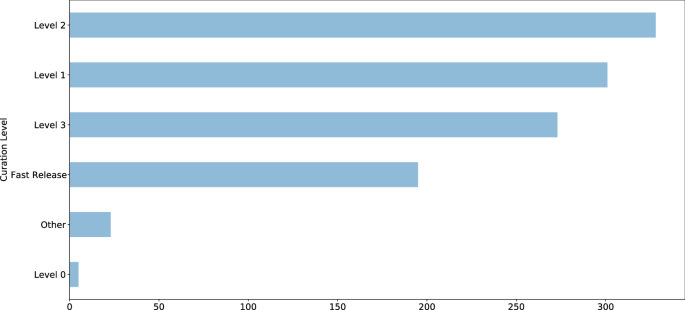

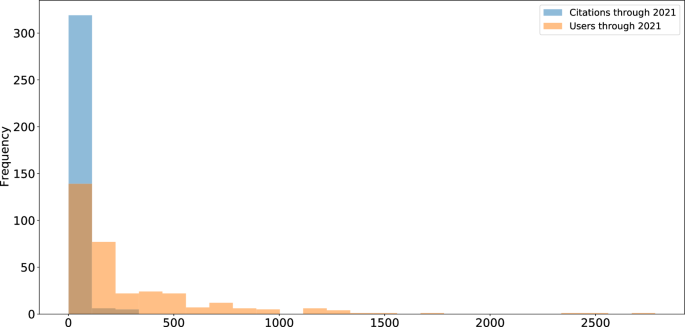

Curation level information was recorded starting in 2017 and is available for 1,125 studies (11%); approximately 80% of studies with assigned curation levels received curation services, equally distributed between Levels 1 (least intensive), 2 (moderately intensive), and 3 (most intensive) (Fig. 3). Detailed descriptions of ICPSR’s curation levels are available online 35 . Additional metadata are available for a subset of 421 studies (4%), including the total number of PIs involved and the total number of variables recorded, as well as whether the study has a single PI, has an institutional PI, is available for online analysis, has searchable question text, has variables that are indexed for search, contains one or more restricted files, and is completely restricted. We provided additional metadata for this subset of ICPSR studies because they were released within the past five years and detailed curation and usage information were available for them. Usage statistics, including total downloads and data file downloads, are available for this subset of studies as well; citation statistics are available for 8,030 studies (76%). Most ICPSR studies have fewer than 500 users, as indicated by total downloads, or citations (Fig. 4).

Fig. 3. ICPSR study curation levels.

Fig. 4. ICPSR study usage.

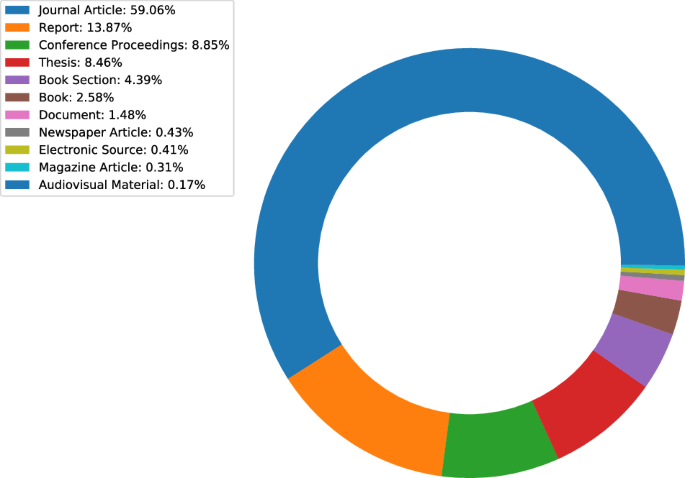

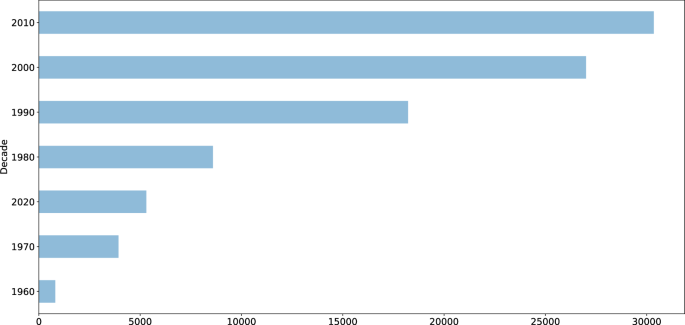

A subset of 43,102 publications (45%) available in the ICPSR Bibliography had a DOI. Author metadata were entered as free text, meaning that variations may exist and require additional normalization and pre-processing prior to analysis. While author information is standardized for each publication, individual names may appear in different sort orders (e.g., “Earls, Felton J.” and “Stephen W. Raudenbush”). Most of the items in the ICPSR Bibliography as of 2021-11-16 were journal articles (59%), reports (14%), conference presentations (9%), or theses (8%) (Fig. 5 ). The number of publications collected in the Bibliography has increased each decade since the inception of ICPSR in 1962 (Fig. 6 ). Most ICPSR studies (76%) have one or more citations in a publication.

Fig. 5. ICPSR Bibliography citation types.

Fig. 6. ICPSR citations by decade.

Usage Notes

The dataset consists of three tables that can be joined using the “STUDY” key as shown in Fig. 2 . The “ICPSR_PAPERS” table contains one row per paper with one or more cited studies in the “STUDY_NUMS” column. We manipulated and analyzed the tables as CSV files with the Pandas library 36 in Python and the Tidyverse packages 37 in R.
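The join described above can be sketched in pandas as follows. This is a minimal illustration on invented toy rows, not the authors' code: the `STUDY` and `STUDY_NUMS` names come from the text, while `PAPER_ID`, `NAME`, `HOURS`, `N_PAPERS`, and the semicolon delimiter inside `STUDY_NUMS` are assumptions that should be checked against the published codebooks.

```python
import pandas as pd

# Toy stand-ins for the three MICA tables; every value here is invented.
studies = pd.DataFrame({"STUDY": [1001, 1002, 1003],
                        "NAME": ["Study A", "Study B", "Study C"]})
papers = pd.DataFrame({"PAPER_ID": [1, 2],
                       "STUDY_NUMS": ["1001; 1002", "1002"]})  # delimiter is assumed
logs = pd.DataFrame({"STUDY": [1001, 1001, 1003],
                     "HOURS": [2.0, 3.5, 1.0]})  # hypothetical column name

# One row per (paper, study) pair: split the multi-valued field, then explode it.
paper_study = (
    papers.assign(STUDY=papers["STUDY_NUMS"].str.split(";"))
          .explode("STUDY")
)
paper_study["STUDY"] = paper_study["STUDY"].str.strip().astype(int)

# Count the unique papers that cite each study.
citations = (paper_study.groupby("STUDY")["PAPER_ID"].nunique()
             .rename("N_PAPERS").reset_index())

# Aggregate logged curation actions to the study level before joining.
hours = logs.groupby("STUDY", as_index=False)["HOURS"].sum()

# Left joins keep studies that have no papers or no curation logs.
merged = (studies.merge(citations, on="STUDY", how="left")
                 .merge(hours, on="STUDY", how="left"))
merged["N_PAPERS"] = merged["N_PAPERS"].fillna(0).astype(int)

print(merged)
```

Left joins are used because not every study has records in the papers and curation logs tables; an inner join would silently drop those studies.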

The present MICA dataset can be used independently to study the relationship between curation decisions and data reuse. Evidence of reuse for specific studies is available in several forms: usage information, including downloads and citation counts; and citation contexts within papers that cite data. Analysis may also be performed on the citation network formed between datasets and papers that use them. Finally, curation actions can be associated with properties of studies and usage histories.

This dataset has several limitations of which users should be aware. First, Jira tickets can only be used to represent the intensiveness of curation for activities undertaken since 2017, when ICPSR started using both Curation Levels and Jira. Studies published before 2017 were all curated, but documentation of the extent of that curation was not standardized and therefore could not be included in these analyses. Second, the measure of publications relies upon the authors’ clarity of data citation and the ICPSR Bibliography staff’s ability to discover citations with varying formality and clarity. Thus, there is always a chance that some secondary-data-citing publications have been left out of the bibliography. Finally, there may be some cases in which a paper in the ICPSR Bibliography did not actually obtain data from ICPSR. For example, PIs have often written about or even distributed their data prior to their archival in ICPSR. Therefore, those publications would not have cited ICPSR but they are still collected in the Bibliography as being directly related to the data that were eventually deposited at ICPSR.

In summary, the MICA dataset contains relationships between two main types of entities – papers and studies – which can be mined. The tables in the MICA dataset have supported network analysis (community structure and clique detection) 30 ; natural language processing (NER for dataset reference detection) 32 ; visualizing citation networks (to search for datasets) 38 ; and regression analysis (on curation decisions and data downloads) 29 . The data are currently being used to develop research metrics and recommendation systems for research data. Given that DOIs are provided for ICPSR studies and articles in the ICPSR Bibliography, the MICA dataset can also be used with other bibliometric databases, including DataCite, Crossref, OpenAlex, and related indexes. Subscription-based services, such as Dimensions AI, are also compatible with the MICA dataset. In some cases, these services provide abstracts or full text for papers from which data citation contexts can be extracted for semantic content analysis.

Code availability

The code 27 used to produce the MICA project dataset is available on GitHub at https://github.com/ICPSR/mica-data-descriptor and through Zenodo with the identifier https://doi.org/10.5281/zenodo.8432666 . Data manipulation and pre-processing were performed in Python. Data curation for distribution was performed in SPSS.

References

He, L. & Han, Z. Do usage counts of scientific data make sense? An investigation of the Dryad repository. Library Hi Tech 35 , 332–342 (2017).

Brickley, D., Burgess, M. & Noy, N. Google dataset search: Building a search engine for datasets in an open web ecosystem. In The World Wide Web Conference - WWW ‘19 , 1365–1375 (ACM Press, San Francisco, CA, USA, 2019).

Buneman, P., Dosso, D., Lissandrini, M. & Silvello, G. Data citation and the citation graph. Quantitative Science Studies 2 , 1399–1422 (2022).

Chao, T. C. Disciplinary reach: Investigating the impact of dataset reuse in the earth sciences. Proceedings of the American Society for Information Science and Technology 48 , 1–8 (2011).

Parr, C. et al . A discussion of value metrics for data repositories in earth and environmental sciences. Data Science Journal 18 , 58 (2019).

Eschenfelder, K. R., Shankar, K. & Downey, G. The financial maintenance of social science data archives: Four case studies of long–term infrastructure work. J. Assoc. Inf. Sci. Technol. 73 , 1723–1740 (2022).

Palmer, C. L., Weber, N. M. & Cragin, M. H. The analytic potential of scientific data: Understanding re-use value. Proceedings of the American Society for Information Science and Technology 48 , 1–10 (2011).

Zimmerman, A. S. New knowledge from old data: The role of standards in the sharing and reuse of ecological data. Sci. Technol. Human Values 33 , 631–652 (2008).

Cragin, M. H., Palmer, C. L., Carlson, J. R. & Witt, M. Data sharing, small science and institutional repositories. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences 368 , 4023–4038 (2010).

Fear, K. M. Measuring and Anticipating the Impact of Data Reuse . Ph.D. thesis, University of Michigan (2013).

Borgman, C. L., Van de Sompel, H., Scharnhorst, A., van den Berg, H. & Treloar, A. Who uses the digital data archive? An exploratory study of DANS. Proceedings of the Association for Information Science and Technology 52 , 1–4 (2015).

Pasquetto, I. V., Borgman, C. L. & Wofford, M. F. Uses and reuses of scientific data: The data creators’ advantage. Harvard Data Science Review 1 (2019).

Gregory, K., Groth, P., Scharnhorst, A. & Wyatt, S. Lost or found? Discovering data needed for research. Harvard Data Science Review (2020).

York, J. Seeking equilibrium in data reuse: A study of knowledge satisficing . Ph.D. thesis, University of Michigan (2022).

Kilbride, W. & Norris, S. Collaborating to clarify the cost of curation. New Review of Information Networking 19 , 44–48 (2014).

Robinson-Garcia, N., Mongeon, P., Jeng, W. & Costas, R. DataCite as a novel bibliometric source: Coverage, strengths and limitations. Journal of Informetrics 11 , 841–854 (2017).

Qin, J., Hemsley, J. & Bratt, S. E. The structural shift and collaboration capacity in GenBank networks: A longitudinal study. Quantitative Science Studies 3 , 174–193 (2022).

Acuna, D. E., Yi, Z., Liang, L. & Zhuang, H. Predicting the usage of scientific datasets based on article, author, institution, and journal bibliometrics. In Smits, M. (ed.) Information for a Better World: Shaping the Global Future. iConference 2022 ., 42–52 (Springer International Publishing, Cham, 2022).

Zeng, T., Wu, L., Bratt, S. & Acuna, D. E. Assigning credit to scientific datasets using article citation networks. Journal of Informetrics 14 , 101013 (2020).

Koesten, L., Vougiouklis, P., Simperl, E. & Groth, P. Dataset reuse: Toward translating principles to practice. Patterns 1 , 100136 (2020).

Du, C., Cohoon, J., Lopez, P. & Howison, J. Softcite dataset: A dataset of software mentions in biomedical and economic research publications. J. Assoc. Inf. Sci. Technol. 72 , 870–884 (2021).

Aryani, A. et al . A research graph dataset for connecting research data repositories using RD-Switchboard. Sci Data 5 , 180099 (2018).

Färber, M. & Lamprecht, D. The data set knowledge graph: Creating a linked open data source for data sets. Quantitative Science Studies 2 , 1324–1355 (2021).

Perry, A. & Netscher, S. Measuring the time spent on data curation. Journal of Documentation 78 , 282–304 (2022).

Trisovic, A. et al . Advancing computational reproducibility in the Dataverse data repository platform. In Proceedings of the 3rd International Workshop on Practical Reproducible Evaluation of Computer Systems , P-RECS ‘20, 15–20, https://doi.org/10.1145/3391800.3398173 (Association for Computing Machinery, New York, NY, USA, 2020).

Borgman, C. L., Scharnhorst, A. & Golshan, M. S. Digital data archives as knowledge infrastructures: Mediating data sharing and reuse. Journal of the Association for Information Science and Technology 70 , 888–904, https://doi.org/10.1002/asi.24172 (2019).

Lafia, S. et al . MICA Data Descriptor. Zenodo https://doi.org/10.5281/zenodo.8432666 (2023).

Lafia, S., Thomer, A., Bleckley, D., Akmon, D. & Hemphill, L. Leveraging machine learning to detect data curation activities. In 2021 IEEE 17th International Conference on eScience (eScience) , 149–158, https://doi.org/10.1109/eScience51609.2021.00025 (2021).

Hemphill, L., Pienta, A., Lafia, S., Akmon, D. & Bleckley, D. How do properties of data, their curation, and their funding relate to reuse? J. Assoc. Inf. Sci. Technol. 73 , 1432–44, https://doi.org/10.1002/asi.24646 (2021).

Lafia, S., Fan, L., Thomer, A. & Hemphill, L. Subdivisions and crossroads: Identifying hidden community structures in a data archive’s citation network. Quantitative Science Studies 3 , 694–714, https://doi.org/10.1162/qss_a_00209 (2022).

ICPSR. ICPSR Bibliography of Data-related Literature: Collection Criteria. https://www.icpsr.umich.edu/web/pages/ICPSR/citations/collection-criteria.html (2023).

Lafia, S., Fan, L. & Hemphill, L. A natural language processing pipeline for detecting informal data references in academic literature. Proc. Assoc. Inf. Sci. Technol. 59 , 169–178, https://doi.org/10.1002/pra2.614 (2022).

Hook, D. W., Porter, S. J. & Herzog, C. Dimensions: Building context for search and evaluation. Frontiers in Research Metrics and Analytics 3 , 23, https://doi.org/10.3389/frma.2018.00023 (2018).

ICPSR. ICPSR Thesaurus. https://www.icpsr.umich.edu/web/ICPSR/thesaurus (2002).

ICPSR. ICPSR Curation Levels. https://www.icpsr.umich.edu/files/datamanagement/icpsr-curation-levels.pdf (2020).

McKinney, W. Data Structures for Statistical Computing in Python. In van der Walt, S. & Millman, J. (eds.) Proceedings of the 9th Python in Science Conference , 56–61 (2010).

Wickham, H. et al . Welcome to the Tidyverse. Journal of Open Source Software 4 , 1686 (2019).

Fan, L., Lafia, S., Li, L., Yang, F. & Hemphill, L. DataChat: Prototyping a conversational agent for dataset search and visualization. Proc. Assoc. Inf. Sci. Technol. 60 , 586–591 (2023).

Acknowledgements

We thank the ICPSR Bibliography staff, the ICPSR Data Curation Unit, and the ICPSR Data Stewardship Committee for their support of this research. This material is based upon work supported by the National Science Foundation under grant 1930645. This project was made possible in part by the Institute of Museum and Library Services LG-37-19-0134-19.

Author information

Authors and affiliations.

Inter-university Consortium for Political and Social Research, University of Michigan, Ann Arbor, MI, 48104, USA

Libby Hemphill, Sara Lafia, David Bleckley & Elizabeth Moss

School of Information, University of Michigan, Ann Arbor, MI, 48104, USA

Libby Hemphill & Lizhou Fan

School of Information, University of Arizona, Tucson, AZ, 85721, USA

Andrea Thomer

Contributions

L.H. and A.T. conceptualized the study design, D.B., E.M., and S.L. prepared the data, S.L., L.F., and L.H. analyzed the data, and D.B. validated the data. All authors reviewed and edited the manuscript.

Corresponding author

Correspondence to Libby Hemphill .

Ethics declarations

Competing interests.

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/ .

About this article

Cite this article.

Hemphill, L., Thomer, A., Lafia, S. et al. A dataset for measuring the impact of research data and their curation. Sci Data 11 , 442 (2024). https://doi.org/10.1038/s41597-024-03303-2

Received : 16 November 2023

Accepted : 24 April 2024

Published : 03 May 2024

DOI : https://doi.org/10.1038/s41597-024-03303-2

2022 Impact Factor: 8; 2023 Google Scholar h5-index: 80; ISSN: 0034-6535; E-ISSN: 1530-9142

The Review of Economics and Statistics is a 100-year-old general journal of applied economics. Edited at the Harvard Kennedy School, the Review aims to publish both empirical and theoretical contributions that will be of interest to a wide economics readership, building on its long and distinguished history that includes work from such figures as Kenneth Arrow, Milton Friedman, Robert Merton, Paul Samuelson, Robert Solow, and James Tobin. Looking to the future, we aim to publish similarly impactful work from authors who represent the current field of economics in all its diversity. The editorial board aspires to provide timely and fairly adjudicated treatment to all submitters.

Pierre Azoulay

Will Dobbie (Co-Chair)

Raymond Fisman (Co-Chair)

Benjamin R. Handel

Brian A. Jacob

Scott Kominers

Tavneet Suri

Stephen Terry

A product of The MIT Press

Simplifying Debiased Inference via Automatic Differentiation and Probabilistic Programming

Published 5/14/2024

We introduce an algorithm that simplifies the construction of efficient estimators, making them accessible to a broader audience. 'Dimple' takes as input computer code representing a parameter of interest and outputs an efficient estimator. Unlike standard approaches, it does not require users to derive a functional derivative known as the efficient influence function. Dimple avoids this task by applying automatic differentiation to the statistical functional of interest. Doing so requires expressing this functional as a composition of primitives satisfying a novel differentiability condition. Dimple also uses this composition to determine the nuisances it must estimate. In software, primitives can be implemented independently of one another and reused across different estimation problems. We provide a proof-of-concept Python implementation and showcase through examples how it allows users to go from parameter specification to efficient estimation with just a few lines of code.

Fam Med Community Health, v.7(2); 2019

Basics of statistics for primary care research

Timothy C Guetterman

Family Medicine, University of Michigan, Michigan Medicine, Ann Arbor, Michigan, USA

The purpose of this article is to provide an accessible introduction to foundational statistical procedures and present the steps of data analysis to address research questions and meet standards for scientific rigour. It is aimed at individuals new to research with less familiarity with statistics, or anyone interested in reviewing basic statistics. After examining a brief overview of foundational statistical techniques, for example, differences between descriptive and inferential statistics, the article illustrates 10 steps in conducting statistical analysis with examples of each. The following are the general steps for statistical analysis: (1) formulate a hypothesis, (2) select an appropriate statistical test, (3) conduct a power analysis, (4) prepare data for analysis, (5) start with descriptive statistics, (6) check assumptions of tests, (7) run the analysis, (8) examine the statistical model, (9) report the results and (10) evaluate threats to validity of the statistical analysis. Researchers in family medicine and community health can follow specific steps to ensure a systematic and rigorous analysis.

Investigators in family medicine and community health often employ quantitative research to address aims that examine trends, relationships among variables or comparisons of groups (Fetters, 2019, this issue). Quantitative research involves collecting structured or closed-ended data, typically in the form of numbers, and analysing that numeric data to address research questions and test hypotheses. Research hypotheses provide a proposition about the expected outcome of research that may be assessed using a variety of methodologies, while statistical hypotheses are specific statements about propositions that can only be tested statistically. Statistical analysis requires a series of steps beginning with formulating hypotheses and selecting appropriate statistical tests. After preparing data for analysis, researchers then proceed with the actual statistical analysis and finally report and interpret the results.

Family medicine and community health researchers often limit their analyses to descriptive statistics—reporting frequencies, means and standard deviation (SD). While sometimes an appropriate stopping point, researchers may be missing opportunities for more advanced analyses. For example, knowing that patients have favourable attitudes about a treatment may be important and can be addressed with descriptive statistics. On the other hand, finding that attitudes are different (or not) between men and women and that difference is statistically significant may give even more actionable information to healthcare professionals. The latter question, about differences, can be addressed through inferential statistical tests. The purpose of this article is to provide an accessible introduction to foundational statistical procedures and present the steps of data analysis to address research questions and meet standards for scientific rigour. It is aimed at individuals new to research with less familiarity with statistics and may be helpful information when reading research or conducting peer review.

Foundational statistical techniques

Statistical analysis is a method of aggregating numeric data and drawing inferences about variables. Statistical procedures may be broadly classified into (1) statistics that describe data—descriptive statistics; and (2) statistics that make inferences about more general situations beyond the actual data set—inferential statistics.

Descriptive statistics

Descriptive statistics aggregate data that are grouped into variables to examine typical values and the spread of values for each variable in a data set. Statistics summarising typical values are referred to as measures of central tendency and include the mean, median and mode. The spread of values is represented through measures of variability, including the variance, SD and range. Together, descriptive statistics provide indicators of the distribution of data, or the frequency of values through the data set as in a histogram plot. Table 1 summarises commonly used descriptive statistics. For consistency, I use the terms independent variable and dependent variable, but in some fields and types of research such as correlational studies the preferred terms may be predictor and outcome variable. An independent variable influences, affects or predicts a dependent variable .
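As a brief illustration of the measures named above, the following sketch computes them with Python's standard `statistics` module. The `scores` values are invented for the example; they are not data from any study discussed here.

```python
import statistics

scores = [2, 4, 4, 5, 7, 8]  # invented example data

# Measures of central tendency
mean = statistics.mean(scores)
median = statistics.median(scores)
mode = statistics.mode(scores)

# Measures of variability (sample versions, using an n - 1 denominator)
variance = statistics.variance(scores)
sd = statistics.stdev(scores)
value_range = max(scores) - min(scores)

print(mean, median, mode, variance, sd, value_range)
```

Note that `statistics.variance` and `statistics.stdev` return sample estimates; `pvariance` and `pstdev` give the population versions.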

Inferential statistics: comparing groups with t tests and ANOVA

Inferential statistics are another broad category of techniques that go beyond describing a data set. Inferential statistics can help researchers draw conclusions from a sample to a population. 1 We can use inferential statistics to examine differences among groups and the relationships among variables. Table 2 presents a menu of common, fundamental inferential tests. Remember that even more complex statistics rely on these as a foundation.

Table 2. Inferential statistics

The t test is used to compare two group means by determining whether group differences are likely to have occurred randomly by chance or systematically indicating a real difference. Two common forms are the independent samples t test, which compares means of two unrelated groups, such as means for a treatment group relative to a control group, and the paired samples t test, which compares means of related groups, such as the pretest and post-test scores for the same individuals before and after a treatment. A t test is essentially determining whether the difference in means between groups is larger than the variability within the groups themselves.
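To make the idea of "difference in means relative to variability" concrete, here is a minimal sketch of the two t statistics using only the standard library. The function names and the data are hypothetical, and the independent samples version assumes equal variances (the pooled, Student form). It returns the t statistic and degrees of freedom only; obtaining a p value requires the t distribution, which a statistics package provides (for example, `scipy.stats.ttest_ind` and `scipy.stats.ttest_rel`).

```python
import math
import statistics

def independent_t(a, b):
    """Student's t statistic and df for two unrelated groups (pooled variance)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * statistics.variance(a) +
                  (nb - 1) * statistics.variance(b)) / (na + nb - 2)
    se = math.sqrt(pooled_var * (1 / na + 1 / nb))  # standard error of the difference
    return (statistics.mean(a) - statistics.mean(b)) / se, na + nb - 2

def paired_t(before, after):
    """t statistic and df for related measurements on the same individuals."""
    diffs = [y - x for x, y in zip(before, after)]
    n = len(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)  # standard error of the mean difference
    return statistics.mean(diffs) / se, n - 1

# Invented data: a treatment group versus a control group
t_ind, df_ind = independent_t([5, 6, 7, 8, 9], [3, 4, 5, 6, 7])

# Invented data: pretest and post-test scores for the same four people
t_pair, df_pair = paired_t([10, 12, 9, 11], [12, 15, 10, 14])

print(t_ind, df_ind, t_pair, df_pair)
```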

Another fundamental set of inferential statistics falls under the general linear model and includes analysis of variance (ANOVA), correlation and regression. To determine whether group means are different, use the t test or the ANOVA. Note that the t test is limited to two groups, but the ANOVA is applicable to two or more groups. For example, an ANOVA could examine whether a primary outcome measure—dependent variable—is significantly different for groups assigned to one of three different interventions. The ANOVA result comes in an F statistic along with a p value or confidence interval (CI), which tells whether there is some significant difference among groups. We then need to use other statistics (eg, planned comparisons or a Bonferroni comparison, to give two possibilities) to determine which of those groups are significantly different from one another. Planned comparisons are established before conducting the analysis to contrast the groups, while other tests like the Bonferroni comparison are conducted post-hoc (ie, after analysis).
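The F statistic from a one-way ANOVA can be sketched as the ratio of between-group to within-group variability. The function name and the three groups below are invented for illustration, and the sketch stops at the F statistic and its degrees of freedom; the p value would come from the F distribution in a statistics package.

```python
import statistics

def one_way_anova(*groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    all_values = [x for g in groups for x in g]
    grand_mean = statistics.mean(all_values)
    k, n = len(groups), len(all_values)

    # Variability of group means around the grand mean
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups)
    # Variability of observations around their own group mean
    ss_within = sum((x - statistics.mean(g)) ** 2 for g in groups for x in g)

    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Invented outcome scores for three interventions
f_stat, df_b, df_w = one_way_anova([4, 5, 6], [6, 7, 8], [9, 10, 11])
print(f_stat, df_b, df_w)
```

A large F only indicates that some difference exists among the groups; it does not say which groups differ, which is why follow-up comparisons are needed.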

Examining relationships using correlation and regression

The general linear model contains two other major methods of analysis, correlation and regression. Correlation reveals whether values between two variables tend to systematically change together. Correlation analysis has three general outcomes: (1) the two variables rise and fall together; (2) as values in one variable rise, the other falls; and (3) the two variables do not appear to be systematically related. To make those determinations, we use the correlation coefficient (r) and related p value or CI. First, use the p value or CI, as compared with established significance criteria (eg, p<0.05), to determine whether a relationship is even statistically significant. If it is not, stop as there is no point in looking at the coefficients. If so, move to the correlation coefficient.

A correlation coefficient provides two very important pieces of information: the strength and direction of the relationship. An r statistic can range from −1.0 to +1.0. Strength is determined by how close the value is to −1.0 or +1.0. Either extreme indicates a perfect relationship, while a value of 0 indicates no relationship. Cohen provides guidance for interpretations: 0.1 is a weak correlation, 0.3 is a medium correlation and 0.5 is a large correlation. 1 2 These interpretations must be considered in the context of the study and relative to the literature. The valence (+ or −) of the coefficient reveals the direction of the relationship. A negative correlation means that as one value rises the other tends to fall, and a positive coefficient means that the values of the two variables tend to rise and fall together.
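The calculation of r and its interpretation can be sketched as follows. The data are invented, the function names are hypothetical, and treating Cohen's values as lower cut-offs (with "negligible" for anything below 0.1) is an assumption made for the example rather than part of Cohen's guidance as quoted above.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def cohen_label(r):
    """Label the strength of r, ignoring its sign (cut-offs are an assumption)."""
    size = abs(r)
    if size >= 0.5:
        return "large"
    if size >= 0.3:
        return "medium"
    if size >= 0.1:
        return "weak"
    return "negligible"

x = [1, 2, 3, 4, 5]
r_pos = pearson_r(x, [2, 4, 5, 4, 5])   # variables tend to rise together
r_neg = pearson_r(x, [5, 4, 3, 2, 1])   # one rises as the other falls

print(r_pos, cohen_label(r_pos))
print(r_neg, cohen_label(r_neg))
```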

Regression adds an additional layer beyond correlation that allows predicting one value from another. Assume we are trying to predict a dependent variable (Y) from an independent variable (X). Simple linear regression gives an equation ($Y = b_0 + b_1 X$) for a line that we can use to predict one value from another. The three major components of that prediction are the constant (ie, the intercept, represented by $b_0$), the systematic explanation of variation (ie, the slope, $b_1$), and the error, which is a residual value not accounted for in the equation 3 but available as part of our regression output. To assess a regression model (ie, model fit), examine key pieces of the regression output: (1) the F statistic and its significance to determine whether the model systematically accounts for variance in the dependent variable; (2) the R-squared value for a measure of how much variance in the dependent variable is accounted for by the model; (3) the significance of coefficients for each independent variable in the model; and (4) residuals to examine random error in the model. Other factors, such as outliers, are potentially important (see Field 4 ).
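A minimal least-squares sketch shows how the intercept, slope, residuals and proportion of variance explained are obtained for a single predictor. The function name and the data are invented, and the sketch omits the significance tests (F statistic and coefficient p values) that statistical software reports alongside these quantities.

```python
def fit_line(x, y):
    """Ordinary least squares for one predictor: returns b0, b1, R squared, residuals."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))

    b1 = sxy / sxx        # slope: change in Y per unit change in X
    b0 = my - b1 * mx     # intercept: predicted Y when X is 0

    residuals = [b - (b0 + b1 * a) for a, b in zip(x, y)]  # observed minus predicted
    ss_res = sum(e ** 2 for e in residuals)
    ss_tot = sum((b - my) ** 2 for b in y)
    r_squared = 1 - ss_res / ss_tot  # share of variance in Y accounted for by the line
    return b0, b1, r_squared, residuals

# Invented data
b0, b1, r_squared, residuals = fit_line([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
print(b0, b1, r_squared)
```

With a single predictor, the R-squared value equals the square of the Pearson correlation between X and Y.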

The aforementioned inferential tests are foundational to many other advanced statistics that are beyond the scope of this article. Inferential tests rely on foundational assumptions, including that data are normally distributed, observations are independent, and generally that our dependent or outcome variable is continuous. When data do not meet these assumptions, we turn to non-parametric statistics (see Field 4 ).

A brief history of foundational statistics

Prominent statisticians Karl Pearson and Ronald A Fisher developed and popularised many of the basic statistics that remain a foundation for statistics today. Fisher’s ideas formed the basis of null hypothesis significance testing that sets a criterion for confidence or probability of an event. 4 Among his contributions, Fisher also developed the ANOVA. Pearson’s correlation coefficient provides a way to examine whether two variables are related. The correlation coefficient is denoted by r for a relationship between two variables or R for relationships among more than two variables as in multiple correlation or regression. 4 William Gosset developed the t distribution and later the t test as a way to examine whether two values of means were statistically different. 5

Statistical software

While the aforementioned statistics can be calculated manually, researchers typically use statistical software that processes data, calculates statistics and p values, and supplies a summary output from the analysis. However, the programs still require an informed researcher to run the correct analysis and interpret the output. Several available programs include SAS, Stata, SPSS and R. Try using the programs through a demonstration or trial period before deciding which one to use. It also helps to know or have access to others using the program should you have questions.

Example study

The remainder of this article presents steps in statistical analysis that apply to many techniques. A recently published study on communication skills to break bad news to a patient with cancer provides an exemplar to illustrate these steps. 6 In that study, the team examined the validity of a competence assessment of communication skills, hypothesising that after receiving training, post-test scores would be statistically improved from pretest scores on the same measure. Another analysis was to examine pretest sensitisation, tested through a hypothesis that a group randomly assigned to receive a pretest and post-test would not be significantly different from a post-test-only group. To test the hypotheses, Guetterman et al 6 examined whether mean differences were statistically significant by applying t tests and ANOVA.

Steps in statistical analysis

Statistical analysis might be considered in 10 related steps. These steps assume necessary background activities, such as conducting a literature review and writing clear research questions or aims, are already complete.

Step 1. Formulate a hypothesis to test

In statistical analysis, we test hypotheses. Therefore, it is necessary to formulate hypotheses that are testable. A hypothesis is specific, detailed and congruent with statistical procedures. A null hypothesis gives a prediction and typically uses words like ‘no difference’ or ‘no association’. 7 For example, we may hypothesise that group means on a certain measure are not significantly different and test that with an ANOVA or t test. In the exemplar study, one of the hypotheses was ‘MPathic-VR scores will improve (decreased score reflects better performance) from the preseminar test to the postseminar test based on exposure to the [breaking bad news] BBN intervention’ (p508), which was tested with a t test. 6 Hypotheses about relationships among variables could be tested with correlation and regression. Ultimately, hypotheses are driven by the purpose or aims of a study and further subdivide the purpose or aims into aspects that are specific and testable. When forming hypotheses, a concern is that having too many dependent variables leads to multiple tests of the same data set. This concern, called multiple comparisons or multiplicity, can inflate the likelihood of finding a significant relationship when none exists. Conducting fewer tests and adjusting the p values or the significance threshold (eg, with a Bonferroni correction) are ways to mitigate the concern.
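One common way to adjust for multiplicity is the Bonferroni correction, which multiplies each p value by the number of tests (capped at 1). The sketch below is only one option among several adjustment methods; the function name bonferroni and the p values are hypothetical and chosen for illustration.

```python
def bonferroni(p_values, alpha=0.05):
    """Bonferroni-adjust a list of p values (illustrative sketch)."""
    m = len(p_values)  # number of tests run on the same data set
    adjusted = [min(1.0, p * m) for p in p_values]
    significant = [p_adj < alpha for p_adj in adjusted]
    return adjusted, significant


# Hypothetical p values from three tests on the same data set
raw_p = [0.01, 0.04, 0.03]
adjusted_p, is_significant = bonferroni(raw_p)
for raw, adj, sig in zip(raw_p, adjusted_p, is_significant):
    print(f"raw p={raw:.3f}  adjusted p={adj:.3f}  significant={sig}")
```

In this made-up example, all three raw p values fall below 0.05, but only the first remains below 0.05 after adjustment.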

Step 2. Select a test to run based on research questions or hypotheses

The statistical test must match the intended hypothesis and research question. Descriptive statistics allow us to examine trends but are limited to typical values, the spread of values and the distribution of data. ANOVAs and t tests are methods to test whether means are statistically different among groups and what those differences are. In the exemplar study, the authors used paired samples t tests for pre–post scores with the same individuals and independent t tests for differences among groups. 6
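To make the distinction between paired and independent t tests concrete, the following sketch computes both t statistics by hand with the Python standard library. The function names and scores are invented for illustration and are not the exemplar study's data; the independent version assumes equal variances (pooled), and the p value would normally be obtained from statistical software using the t statistic and degrees of freedom.

```python
from math import sqrt
from statistics import mean, stdev, variance


def paired_t(pre, post):
    """t statistic and df for the same individuals measured twice."""
    diffs = [b - a for a, b in zip(pre, post)]  # post minus pre, per person
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1


def independent_t(group_a, group_b):
    """Pooled-variance t statistic and df for two separate groups."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    se = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / se, n_a + n_b - 2


# Made-up scores (lower is better, as in the exemplar's scoring direction)
pre_scores = [10, 12, 9, 11, 13]
post_scores = [8, 11, 7, 10, 10]
other_group = [9, 12, 8, 11, 12]

t_paired, df_paired = paired_t(pre_scores, post_scores)
t_indep, df_indep = independent_t(post_scores, other_group)
print(f"paired: t({df_paired}) = {t_paired:.2f}")
print(f"independent: t({df_indep}) = {t_indep:.2f}")
```

The paired version analyses within-person differences, which is why it suits pre–post designs, whereas the independent version compares two separate sets of people.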

Correlation is a method to examine whether two or more variables are related to one another, and regression extends that idea by allowing us to fit a line to make predictions about one variable based on a linear relationship to another. These statistical tests alone do not determine cause and effect, but merely associations. Causal inferences can only be made with certain research designs (eg, experiments) and perhaps with advanced statistical techniques (eg, propensity score analysis). Table 3 provides guidance for determining which statistical test to use.
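As a small worked example of correlation, the sketch below computes Pearson's r from its definition using the Python standard library. The function name pearson_r and the data are assumptions made for illustration; testing whether r is statistically significant would be left to statistical software.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient between two variables (sketch)."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    return sxy / sqrt(sxx * syy)


# Made-up data for illustration only
x_values = [1, 2, 3, 4, 5]
y_values = [2, 4, 5, 4, 5]
r = pearson_r(x_values, y_values)
print(f"r = {r:.3f}")
```

The sign of r gives the direction of the association and its absolute value the strength; as noted above, neither establishes cause and effect.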

Choosing and interpreting statistics for studies common in primary care

Step 3. Conduct a power analysis to determine a sample size

Before conducting analysis, we need to ensure that we will have an adequate sample size to detect an effect. Sample size relates to the concept of power. For example, to detect a small effect, a larger sample is needed. Larger sample sizes can thus detect a smaller effect. Sample size is determined through a power analysis. The determination of sample size is never a simple percent of the population, but a calculated number based on the planned statistical tests, significance level and effect size. 8 I recommend using G*Power for basic power calculations, although many other options are available. In the exemplar study, the authors did not report their power analysis prior to conducting the study, but they gave a post-hoc power analysis of the actual power based on their sample size and the effect size detected. 6
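The relationship between effect size and sample size can be sketched with a common normal-approximation formula for comparing two group means, n per group = 2((z for 1 − α/2 + z for power) / d)², where d is the standardised effect size. This is a rough approximation for illustration, not the calculation G*Power performs; dedicated power software typically bases the calculation on the t distribution and may return slightly larger numbers. The function name n_per_group is an assumption for this example.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-group mean comparison."""
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # two-sided criterion
    z_power = standard_normal.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


# Conventional small, medium and large standardised effect sizes
for d in (0.2, 0.5, 0.8):
    print(f"effect size {d}: about {n_per_group(d)} participants per group")
```

Running the loop shows the pattern described above: the smaller the effect to be detected, the larger the required sample.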

Step 4. Prepare data for analysis

Data often need cleaning and other preparation before conducting analysis. Problems requiring cleaning include values outside of an acceptable range and missing values. Any particular value could be wrong because of a data entry error or data collection problem. Visually inspecting data can reveal anomalies. For example, an age value of 200 is clearly an error, as is a value of 9 on a 1–5 Likert-type scale. An easy way to start inspecting data is to sort each variable by ascending values and then descending values to look for atypical values. Then, try to correct the problem by determining what the value should be. Missing values are a more complicated problem because a concern is why the value is missing. A few values missing at random are not necessarily a concern, but a pattern of missing values (eg, individuals from a specific ethnic group tend to skip a certain question) indicates a systematic missingness that could indicate a problem with the data collection instrument. Descriptive statistics are an additional way to check for errors and ensure data are ready for analysis. While not discussed in the communication assessment exemplar, the authors did prepare data for analysis and report missing values in their descriptive statistics.
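A simple screening routine can flag the two problems described above. The sketch below assumes missing values are coded as None and that an acceptable range is known for each variable; the function name screen_values, the age limit of 120 and the data are hypothetical choices for illustration.

```python
def screen_values(values, low, high):
    """Return positions of missing values and of values outside [low, high]."""
    missing = [i for i, v in enumerate(values) if v is None]
    out_of_range = [
        i for i, v in enumerate(values)
        if v is not None and not (low <= v <= high)
    ]
    return missing, out_of_range


# Made-up data: an impossible age and an impossible Likert-type response
ages = [34, 200, 51, None, 29]
likert = [4, 9, 1, 5, None]

age_missing, age_bad = screen_values(ages, 0, 120)
likert_missing, likert_bad = screen_values(likert, 1, 5)
print("ages: missing at", age_missing, "out of range at", age_bad)
print("likert: missing at", likert_missing, "out of range at", likert_bad)
```

Flagged positions still need a human decision: the routine only locates suspicious entries and does not say what the correct value should be or why a value is missing.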

Step 5. Always start with descriptive statistics

Before running inferential statistics, it is critical to first describe the data. Obtaining descriptive statistics is a way to check whether data are ready for further analysis. Descriptive statistics give a general sense of trends and can illuminate errors by reviewing frequencies, minimums and maximums that can indicate values outside of the accepted range. Descriptive statistics are also an important step to check whether we meet assumptions for statistical tests. In a quantitative study, descriptive statistics also inform the first table of the results that reports information about the sample, as seen in table 2 of the exemplar study. 6
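The checks described in this step can be sketched with the Python standard library. The function name describe and the ratings are made up for illustration; statistical packages produce similar summaries with a single command.

```python
from collections import Counter
from statistics import mean, median, stdev


def describe(values):
    """Basic descriptive statistics for one variable (illustrative sketch)."""
    return {
        "n": len(values),
        "mean": mean(values),
        "median": median(values),
        "sd": stdev(values),  # sample standard deviation
        "min": min(values),   # minimum and maximum reveal out-of-range values
        "max": max(values),
        "frequencies": dict(sorted(Counter(values).items())),
    }


# Made-up responses on a 1-5 scale
ratings = [3, 4, 4, 5, 2]
summary = describe(ratings)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Comparing the reported minimum and maximum against the permitted range of the scale is a quick way to catch the data errors discussed in step 4.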

Step 6. Check assumptions of statistical tests

All statistical tests rely on foundational assumptions. Although some tests are more robust to violations, checking assumptions indicates whether the test is likely to be valid for a particular data set. Foundational parametric statistics (eg, t tests, ANOVA, correlation, regression) assume independent observations and normally distributed data; correlation and regression additionally assume a linear relationship between variables. In the exemplar study, the authors noted ‘Data from both groups met normality assumptions, based on the Shapiro–Wilk test’ (p508), and gave the statistics in addition to noting specific assumptions for the independent t tests around equality of variances. 6
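Formal tests such as Shapiro–Wilk are best run in statistical software. As a rough preliminary screen only, and not a substitute for those tests, the sketch below computes sample skewness (values near 0 suggest a symmetric distribution) and the ratio of the larger to the smaller group variance (values near 1 suggest similar variances). The function names, the data and the choice of these two indicators are assumptions made for illustration.

```python
from statistics import mean, variance


def skewness(values):
    """Moment-based sample skewness; 0 for a perfectly symmetric sample."""
    n = len(values)
    m = mean(values)
    m2 = sum((v - m) ** 2 for v in values) / n  # second central moment
    m3 = sum((v - m) ** 3 for v in values) / n  # third central moment
    return m3 / m2 ** 1.5


def variance_ratio(group_a, group_b):
    """Larger sample variance divided by the smaller one."""
    var_a, var_b = variance(group_a), variance(group_b)
    return max(var_a, var_b) / min(var_a, var_b)


# Made-up data: one symmetric sample and one with a long right tail
symmetric = [1, 2, 3, 4, 5]
right_skewed = [1, 1, 2, 2, 10]
group_a = [8, 11, 7, 10, 10]
group_b = [9, 12, 8, 11, 12]

print(f"skewness (symmetric): {skewness(symmetric):.2f}")
print(f"skewness (right-skewed): {skewness(right_skewed):.2f}")
print(f"variance ratio: {variance_ratio(group_a, group_b):.2f}")
```

A markedly skewed sample or a large variance ratio is a prompt to run the formal assumption tests and, if needed, to consider a non-parametric alternative.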

Step 7. Run the analysis

Conducting the analysis involves running whatever tests were planned. Statistics may be calculated manually or using software like SPSS, Stata, SAS or R. Statistical software provides an output with key test statistics, p values that indicate whether a result is likely systematic or random, and indicators of fit. In the exemplar study, the authors noted they used SPSS V.22. 6

Step 8. Examine how well the statistical model fits

The first step involves examining whether the statistical model was significant or a good fit. For t tests, ANOVAs, correlation and regression, first examine an overall test of significance. For a t test, if the t statistic is not statistically significant (eg, p>0.05 or a CI crossing 0), we can conclude no significant difference between groups. The communication assessment exemplar reports significance of the t tests along with measures such as equality of variance.

For an ANOVA, if the F statistic is not statistically significant (eg, p>0.05), we can conclude no significant difference between groups and stop because there is no point in further examining which groups may be different. If the F statistic is significant in an ANOVA, we can then use contrasts or post-hoc tests to examine which groups differ. For a correlation test, if the r value is not statistically significant (eg, p>0.05 or a CI crossing 0), we can stop because there is no point in looking at the magnitude or direction of the coefficient. If it is significant, we can proceed to interpret the r. Finally, for a regression, we can examine the F statistic as an omnibus test and its significance. If it is not significant, we can stop. If it is significant, then examine the p value of each independent variable and residuals.
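To show where the omnibus F statistic comes from, the sketch below computes a one-way ANOVA F by hand: the between-group mean square divided by the within-group mean square. The function name one_way_anova_f and the three groups are invented for illustration; the p value for the resulting F and degrees of freedom would be read from statistical software.

```python
from statistics import mean


def one_way_anova_f(groups):
    """F statistic and degrees of freedom for a one-way ANOVA (sketch)."""
    all_values = [v for group in groups for v in group]
    grand_mean = mean(all_values)
    # Variation of group means around the grand mean
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of observations around their own group mean
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Made-up scores for three groups
groups = [[1, 2, 3], [2, 3, 4], [5, 6, 7]]
f_stat, df_between, df_within = one_way_anova_f(groups)
print(f"F({df_between}, {df_within}) = {f_stat:.2f}")
```

Only if this omnibus F is significant would we go on to contrasts or post-hoc tests to locate the differences.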

Step 9. Report the results of statistical analysis

When writing statistical results, always start with descriptive statistics and note whether assumptions for tests were met. When reporting inferential statistical tests, give the statistic itself (eg, an F statistic), the measure of significance (p value or CI), the effect size and a brief written interpretation of the statistical test. The interpretation, for example, could note that an intervention was not significantly different from the control or that it was associated with improvement that was statistically significant. For example, the exemplar study gives the pre–post means along with the standard error, t statistic, p value and an interpretation that postseminar means were lower, along with a reminder to the reader that lower is better. 6
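One widely used effect size for a difference between two group means is Cohen's d, the mean difference divided by the pooled standard deviation. The sketch below is one possible choice of effect size, not necessarily the one reported in the exemplar study; the function name cohens_d and the scores are made up for illustration.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d: standardised difference between two group means (sketch)."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Made-up scores for two groups
group_a = [8, 11, 7, 10, 10]
group_b = [9, 12, 8, 11, 12]
d = cohens_d(group_a, group_b)
print(f"Cohen's d = {d:.2f}")
```

Reporting the effect size alongside the test statistic and p value tells the reader how large a difference is, not only whether it is statistically significant.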

When writing for a journal, follow the journal’s style. Many styles italicise non-Greek statistics (eg, the p value), but follow the particular instructions given. Remember a p value can never be 0 even though some statistical programs round the p to 0. In that case, most styles prefer to report as p<0.001.
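The reporting rule for very small p values can be captured in a short helper. The function name format_p, the three-decimal format and the 0.001 threshold are assumptions for illustration; the journal's own style guide takes precedence.

```python
def format_p(p, threshold=0.001):
    """Format a p value for reporting, never printing it as 0 (sketch)."""
    if not 0 <= p <= 1:
        raise ValueError("a p value must lie between 0 and 1")
    if p < threshold:
        # Software may display 0.000, but a p value is never exactly 0
        return f"p<{threshold}"
    return f"p={p:.3f}"


# Hypothetical p values as they might appear in software output
for value in (0.00004, 0.0312, 0.2):
    print(format_p(value))
```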

Step 10. Evaluate threats to statistical conclusion validity

Shadish et al 9 provide nine threats to statistical conclusion validity in drawing inferences about the relationship between two variables; the threats can broadly apply to many statistical analyses. Although it helps to consider and anticipate these threats when designing a research study, some only arise after data collection and analysis. Threats to statistical conclusion validity appear in table 4. 9 Pertinent threats can be dealt with to the extent possible (eg, if assumptions were not met, select another test) and should be discussed as limitations in the research report. For example, in the exemplar study, the authors noted the sample size as a limitation but reported that a post-hoc power analysis found adequate power. 6

Threats to statistical conclusion validity

Key resources to learn more about statistics include Field 4 and Salkind 10 for foundational information. For advanced statistics, Hair et al 11 and Tabachnick and Fidell 12 provide detailed information on multivariate statistics. Finally, the University of California Los Angeles Institute for Digital Research and Education (stats.idre.ucla.edu/other/annotatedoutput/) provides annotated output from SAS, Stata and MPlus for many statistical tests to help researchers read the output and understand what it means.

Researchers in family medicine and community health often conduct statistical analyses to address research questions. Following specific steps ensures a systematic and rigorous analysis. Knowledge of these essential statistical procedures will equip family medicine and community health researchers to interpret literature, review literature and conduct appropriate statistical analyses of their quantitative data.